
#MeToo chatbot, built by AI academics, could lend a non-judgmental ear to sex harassment and assault victims

Prototype tries to offer help, advice to people uncomfortable discussing abuse with others

Academics in the Netherlands have built a prototype machine-learning-powered Telegram chatbot that attempts to listen to victims of sexual harassment and assault, and offer them advice and help.

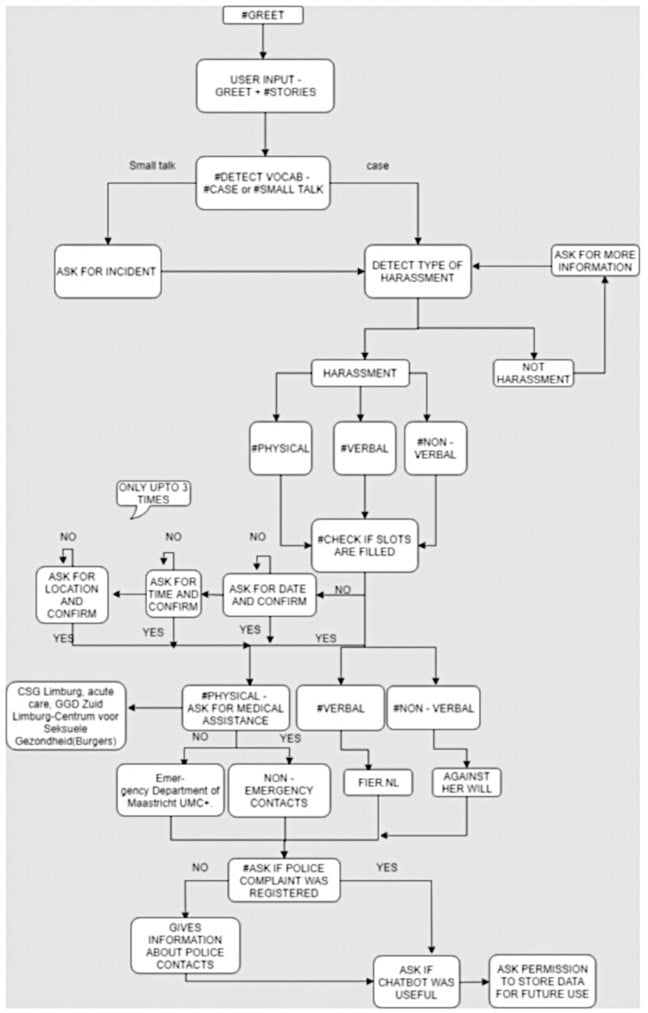

The bot, developed in the wake of the Me Too movement, is trained to analyse messages to classify the type of harassment described by a victim and determine, for instance, whether an attack was verbal or physical. It can also ask for basic information, such as the time and date of an assault, before recommending medical or psychological treatment, depending on the severity, and providing contact details so that victims can report their abuser to the police.

The software is not available to the general public, to the best of our knowledge, and is still in the lab: it is a work in progress.

“The motivation behind this work is twofold: properly assist survivors of such events by directing them to appropriate institutions that can offer them help and increase the incident documentation so as to gather more data about harassment cases which are currently under reported,” according to an arXiv-hosted paper describing the AI-based bot.

Jerry Spanakis, an assistant professor at the University of Maastricht, who led the development of the code, told The Register some people are reluctant to report abuse out of shame or apathy. However, they may be more willing to open up to a computer that promises to be non-judgmental, hence the creation of this chatbot.

“There has been research showing that people do not report their harassment cases to police or other organisations and there are many reasons for this: they don’t think something will change or they feel shame," he said. "At the same time, having an anonymous and safe space – that’s what the chatbot serves – provides the conditions for people to report their experiences and get the appropriate help.”

Development

To start off, the chatbot was trained to identify different types of assault and harassment. To do this, Spanakis and graduate students from the Dutch university combed through 12,000 incident reports written by victims of sexual harassment in India, and sorted them into five categories: verbal abuse, non-verbal abuse, physical abuse, serious physical abuse, and other abuse. These reports are anonymous accounts collected through the SafeCity project, set up to help map sexual abuse across India, Kenya, Cameroon and Nepal.
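As a rough illustration of how those five labels could drive the severity-dependent advice mentioned earlier, the sketch below maps each category to a list of suggested resources. The enum names and, in particular, the routing table are hypothetical: the article says only that medical or psychological treatment is recommended depending on severity, and that the bot helps victims contact the police.

```python
from enum import Enum
from typing import List


class AbuseType(Enum):
    """The five report categories used to label the training data."""
    VERBAL = "verbal abuse"
    NON_VERBAL = "non-verbal abuse"
    PHYSICAL = "physical abuse"
    SERIOUS_PHYSICAL = "serious physical abuse"
    OTHER = "other abuse"


# Hypothetical routing, invented for illustration: every category gets
# psychological support and police contact details, and only the most
# severe category adds a medical recommendation.
BASE_ADVICE = ["psychological support", "police contact details"]


def recommend(category: AbuseType) -> List[str]:
    """Return suggested resources for a classified report (illustrative only)."""
    if category is AbuseType.SERIOUS_PHYSICAL:
        return ["medical care"] + BASE_ADVICE
    return list(BASE_ADVICE)


print(recommend(AbuseType.SERIOUS_PHYSICAL))
```

A real deployment would need this table to be drawn up with support organisations rather than hard-coded by engineers.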

The text from this labelled data set was then encoded into vectors using various natural language processing techniques. Each word has its own vector representation; words that have similar meanings are mapped closer to one another in vector space. For example, "shout" and "yell" should be near one another; the software learns these relationships so that it can understand different descriptions of attacks and harassment. Words associated with time and location are also identified for extraction.
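To show what "mapped closer to one another" means in practice, here is a minimal sketch that measures closeness with cosine similarity, a standard way of comparing word vectors. The three-dimensional vectors are invented for illustration; they are not from the paper, and real embeddings are learned from large amounts of text and have hundreds of dimensions.

```python
import math

# Toy word vectors, made up purely for illustration (NOT from the paper).
TOY_VECTORS = {
    "shout": [0.9, 0.1, 0.0],
    "yell": [0.85, 0.15, 0.05],
    "grab": [0.1, 0.9, 0.2],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(word, vectors):
    """Return the other word whose vector points most nearly the same way."""
    target = vectors[word]
    candidates = [w for w in vectors if w != word]
    return max(candidates, key=lambda w: cosine_similarity(target, vectors[w]))


print(most_similar("shout", TOY_VECTORS))  # yell
```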

All of this ultimately forms the classification stage of the chatbot, where logistic regression models categorize the type and severity of abuse suffered by a victim, based on the exchange between the human and the bot.
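The article does not give the paper's exact features or training setup, so the following is only a minimal sketch of binary logistic regression trained by gradient descent, using simple bag-of-words counts as a stand-in for the vector representations described above. The six training sentences and the physical-versus-verbal labelling are made up; a real system would train one such model per category (or a multi-class variant) on thousands of reports.

```python
import math
from collections import Counter


def tokenize(text):
    return text.lower().split()


def featurize(text, vocab):
    """Bag-of-words: count of each vocabulary word in the text."""
    counts = Counter(tokenize(text))
    return [counts[w] for w in vocab]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(X, y, lr=0.5, epochs=500):
    """Plain batch gradient descent on the logistic (log) loss."""
    n_features = len(X[0])
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for xi, yi in zip(X, y):
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b)
            err = p - yi  # derivative of the log loss w.r.t. the logit
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / len(X) for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b


def predict_proba(text, vocab, w, b):
    """Probability that the text belongs to the positive class."""
    x = featurize(text, vocab)
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Hypothetical toy data: 1 = physical abuse, 0 = verbal abuse.
train_texts = [
    "he grabbed my arm",
    "someone touched me on the bus",
    "a man groped me",
    "he shouted insults at me",
    "they made lewd comments",
    "a stranger catcalled me",
]
train_labels = [1, 1, 1, 0, 0, 0]

vocab = sorted({w for t in train_texts for w in tokenize(t)})
X = [featurize(t, vocab) for t in train_texts]
w, b = train_logistic_regression(X, train_labels)
print(round(predict_proba("he grabbed me", vocab, w, b), 2))
```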

Of these reports, 70 per cent were used to train the algorithms and the remaining 30 per cent to test them: this held-out set was run through the system to check whether it classified the reports correctly.
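The 70/30 evaluation boils down to two small steps, sketched here under assumptions: shuffle the labelled reports with a fixed seed and cut them at 70 per cent, then score the held-out portion by the fraction of predictions that match the true labels. The function names and the ten placeholder reports are hypothetical; the article does not spell out the paper's precise procedure.

```python
import random


def train_test_split(items, train_fraction=0.7, seed=42):
    """Shuffle a copy of `items` reproducibly and split it into train/test lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


def accuracy(predicted, actual):
    """Fraction of predictions that match the true labels."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


# Hypothetical stand-in for labelled reports: (text, label) pairs.
reports = [(f"report {i}", "verbal" if i % 2 else "physical") for i in range(10)]
train, test = train_test_split(reports)
print(len(train), len(test))  # 7 3
```

Accuracy figures like those quoted below are this kind of ratio, computed only on the held-out reports the model never saw during training.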

“We are able to achieve a success rate of more than 98 per cent for the identification of a harassment-or-not case and around 80 per cent for the specific type harassment identification," the team's paper stated. "Locations and dates are identified with more than 90 per cent accuracy and time occurrences prove more challenging with almost 80 per cent."

To summarize: over the course of a conversation, the AI software determines the type of attack or harassment from the description given, as well as when and where it happened, and uses this to advise the person on whom to contact for help, along with the relevant contact details. It combines its trained model with a slot-based system that extracts crucial details, such as the location and time of an assault or harassment, from the answers to the questions it asks.
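A slot-based system can be pictured as a form the bot fills in as the conversation goes, asking only for whatever is still missing. The sketch below uses simple regular expressions as a stand-in for the trained extractors the researchers describe; the slot names, patterns and question wording are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical follow-up questions, one per slot, asked in this order.
QUESTIONS = {
    "date": "On what date did this happen?",
    "time": "Roughly what time was it?",
    "location": "Where did it happen?",
}

# Deliberately simple patterns; a real system would use trained extractors.
PATTERNS = {
    "date": re.compile(r"\b(\d{1,2}/\d{1,2}/\d{4})\b"),
    "time": re.compile(r"\b(\d{1,2}(?::\d{2})?\s?(?:am|pm))\b", re.IGNORECASE),
    "location": re.compile(r"\b(?:at|in|on) the ([a-z]+)", re.IGNORECASE),
}


@dataclass
class Slots:
    date: Optional[str] = None
    time: Optional[str] = None
    location: Optional[str] = None


def fill_slots(slots, message):
    """Fill any still-empty slot whose pattern matches the user's message."""
    for name, pattern in PATTERNS.items():
        if getattr(slots, name) is None:
            match = pattern.search(message)
            if match:
                setattr(slots, name, match.group(1))
    return slots


def next_question(slots):
    """Return the question for the first unfilled slot, or None when complete."""
    for name, question in QUESTIONS.items():
        if getattr(slots, name) is None:
            return question
    return None


slots = fill_slots(Slots(), "It happened on the bus around 9pm")
print(slots)
print(next_question(slots))
```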

Examples of conversations with the bot are given in the paper linked above, though be aware they do include descriptions of sexual assault. Below is a flowchart showing how the chatbot is supposed to operate.

Although the initial results seem promising, it should be noted that they're based on a small data set that had to be preprocessed, tweaked, and formatted just right for the chatbot to learn from and work with.

Chatbots for good?

“This tool is part of a bigger project umbrella called SAFE, stemming from Safety Awareness For Empowerment,” Dr Spanakis said. "Our interdisciplinary team's goal is to improve citizen well-being through prevention, defense, monitoring, and access to resources on sexual harassment and assault.

“Some members of the team organize boundaries and consent workshops for students and peer self-defense courses. The tool we build is the first interactive response tool that provides fast and relevant access to resources, so it’s really there to harness technology to impact people’s safety."

He added that the software will require further work and beta testing, with the help of panels of people who have survived sexual assault, before it can be released for general use. In some cases sexual harassment isn't clear-cut, and misidentifying the abuse or giving inappropriate advice could be harmful.

“I remember when we first tried out things, our algorithm did not handle negation properly, meaning that if someone said: 'I was not harassed,' it was ignoring the 'not,' and went forward to say: 'I am sorry this happened to you.' I guess this is where we realized that we really needed to be careful and considerate about all possible backlashes," Spanakis said.
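The bug Spanakis describes is a classic one for bag-of-words models: "harassed" looks the same to the classifier whether or not "not" precedes it. One simple, widely used heuristic from sentiment analysis, shown here as an illustration rather than as the team's actual fix, is to prefix every word that follows a negation word with `NOT_` until the clause ends, so that `NOT_harassed` becomes a different feature from `harassed`. The negation word list below is an assumption and far from complete.

```python
import re

# Assumed, incomplete list of negation cues; words ending in "n't" also count.
NEGATION_WORDS = {"not", "no", "never", "nobody", "nothing"}
CLAUSE_END = {".", ",", "!", "?", ";"}


def tokenize(text):
    """Lowercase words (keeping apostrophes) and punctuation as separate tokens."""
    return re.findall(r"[a-z']+|[.,!?;]", text.lower())


def mark_negation(tokens):
    """Prefix tokens that follow a negation cue with NOT_ until the clause ends."""
    marked, negated = [], False
    for tok in tokens:
        if tok in CLAUSE_END:
            negated = False  # punctuation closes the negation scope
            marked.append(tok)
        elif tok in NEGATION_WORDS or tok.endswith("n't"):
            negated = True
            marked.append(tok)
        else:
            marked.append("NOT_" + tok if negated else tok)
    return marked


print(mark_negation(tokenize("I was not harassed.")))
# ['i', 'was', 'not', 'NOT_harassed', '.']
```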

"This is the reason that the classification threshold for each type of abuse is very high and if the algorithm is not certain then through 'discussion' the bot tries to get more information but without being intrusive.

“Before releasing it to the public, we want to make sure that the tool is validated, and won’t respond or propose something awkward. We are also very sensitive on how to handle any personal data which of course are not going to be stored anyhow." ®
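The confidence threshold Spanakis mentions can be sketched as a simple rule: commit to a category only when the classifier's top probability clears the threshold, and otherwise ask a gentle follow-up question. The 0.9 value, the function name and the question text are hypothetical; the article says only that the threshold is "very high".

```python
# Hypothetical threshold; the article says only that it is "very high".
CONFIDENCE_THRESHOLD = 0.9


def choose_reply(class_probabilities, threshold=CONFIDENCE_THRESHOLD):
    """Commit to the most likely category only if it clears the threshold;
    otherwise return a follow-up question instead of guessing."""
    label, prob = max(class_probabilities.items(), key=lambda kv: kv[1])
    if prob >= threshold:
        return ("classified", label)
    return ("ask", "Could you tell me a little more about what happened?")


print(choose_reply({"verbal": 0.95, "physical": 0.03, "other": 0.02}))
print(choose_reply({"verbal": 0.55, "physical": 0.40, "other": 0.05}))
```

Setting the threshold high trades coverage for caution: the bot asks more questions, but is less likely to offer advice based on a wrong guess.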