
Cray's pre-exascale Shasta supercomputer gets energy research boffins hot under collar

US DoE sees multiple CPU, GPU, interconnect support and snaps one up for $146m

Cray has announced Shasta, a planned, near-composable supercomputer that supports multiple CPUs, GPUs and interconnects, including Cray's new high-speed, Ethernet-compatible Slingshot fabric, which is designed to fix the noisy neighbour network congestion problem.

Shasta is designed to run multiple workload types: traditional HPC-style modelling and simulation, AI and analytics. It can be built from standard 19-inch racks or from water-cooled racks, including ones using warm-water cooling.

These liquid-cooled racks are designed to hold 64 compute blades with multiple processors per blade. The system can support processors that draw more than 500W.

It uses clustered processing nodes with Cray's ClusterStor or other storage, and can scale to more than 100 cabinets.

On the compute side, Shasta supports Intel and AMD x86 CPUs, Marvell ThunderX2 Arm processors, GPUs such as Nvidia Teslas and AMD Radeons, and FPGAs. These can be mixed and matched in a single Shasta system. Several interconnect options are available (Cray's new Slingshot, Mellanox InfiniBand and Intel's Omni-Path), and these too can be mixed and matched within one system.

Cray is developing the Shasta system management software, which should let users define workloads that run on specific processors, interconnects and storage, a form of composability.
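Cray hasn't said how its management software will expose this. As a purely hypothetical sketch, constraint-based node selection over a mixed inventory might look like the following; the `Node` record, its field values and `select_nodes()` are our own inventions, not Cray APIs.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Node:
    # Hypothetical inventory record; these fields are illustrative, not Cray's schema.
    name: str
    cpu: str
    gpu: Optional[str]
    interconnect: str


def select_nodes(inventory: List[Node], cpu: Optional[str] = None,
                 gpu: Optional[str] = None, interconnect: Optional[str] = None,
                 count: int = 1) -> List[Node]:
    """Return the first `count` nodes that satisfy every given constraint (None = any)."""
    matches = [
        n for n in inventory
        if (cpu is None or n.cpu == cpu)
        and (gpu is None or n.gpu == gpu)
        and (interconnect is None or n.interconnect == interconnect)
    ]
    if len(matches) < count:
        raise ValueError(f"{count} nodes requested, only {len(matches)} match")
    return matches[:count]


# Usage: a mixed system, as Shasta allows, and a job that wants GPU nodes on Slingshot.
inventory = [
    Node("n1", "amd-epyc", "nvidia-tesla", "slingshot"),
    Node("n2", "marvell-thunderx2", None, "infiniband"),
    Node("n3", "amd-epyc", "nvidia-tesla", "slingshot"),
]
picked = select_nodes(inventory, gpu="nvidia-tesla", interconnect="slingshot", count=2)
print([n.name for n in picked])  # ['n1', 'n3']
```

Matching on exact string labels keeps the sketch short; a real scheduler would also weigh availability and locality.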

Shasta systems should be available in the fourth quarter of 2019.

Slingshot

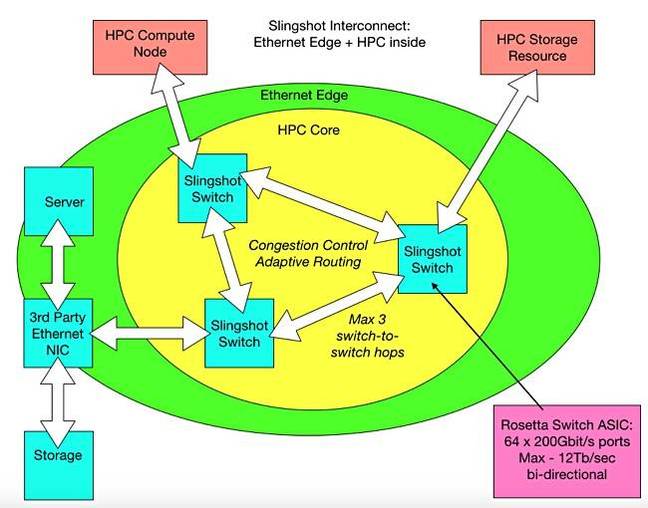

This is an interconnect using switches with a Rosetta ASIC inside. These have 64 x 200Gbit/s ports and 12.8Tbit/s of bi-directional bandwidth. Slingshot supports up to 250,000 endpoints and it takes a maximum of three hops to cross the Slingshot fabric, an improvement on the five needed by Cray's current XC system interconnect.

Our own diagram of Slingshot attributes
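The headline switch figure is simply port count times per-port rate. A trivial check of the quoted numbers (the function name is ours):

```python
def aggregate_bandwidth_tbits(ports: int, gbits_per_port: float) -> float:
    """Switch aggregate bandwidth in Tbit/s: port count x per-port rate (Gbit/s) / 1000."""
    return ports * gbits_per_port / 1000


# Rosetta figures as quoted: 64 ports at 200Gbit/s each.
print(aggregate_bandwidth_tbits(64, 200))  # 12.8
```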

The interconnect features adaptive routing, congestion control that stops workloads hogging bandwidth (the noisy neighbour network problem), and quality of service. It is also Ethernet-compatible: think of Slingshot as an HPC interconnect core with an Ethernet edge around it.
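Cray hasn't detailed Slingshot's routing algorithm here. To illustrate what adaptive routing means in general, here is a sketch of UGAL, a well-known heuristic from the interconnect literature that weighs a congested direct path against a longer but quieter detour. The `Path` fields and the `bias` threshold are illustrative assumptions; this is not Cray's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    hops: int         # switch-to-switch hops along this route
    queue_depth: int  # packets already waiting at this route's first output port


def choose_path(minimal: Path, detour: Path, bias: int = 0) -> Path:
    """UGAL-style adaptive routing: estimate delay as queue depth x hops and
    take the longer detour only if it still looks cheaper by more than `bias`."""
    cost_minimal = minimal.queue_depth * minimal.hops
    cost_detour = detour.queue_depth * detour.hops
    return detour if cost_detour + bias < cost_minimal else minimal


# A congested direct route (40 x 2 = 80) loses to a quiet detour (5 x 3 = 15).
print(choose_path(Path(hops=2, queue_depth=40), Path(hops=3, queue_depth=5)))
# With the direct route quiet (2 x 2 = 4), traffic stays on it.
print(choose_path(Path(hops=2, queue_depth=2), Path(hops=3, queue_depth=5)))
```

The `bias` term biases traffic towards the shorter path, so packets only detour when the congestion difference is large enough to pay for the extra hop.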

Ethernet and HPC traffic can be intermixed and run over the same wires.
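How Slingshot schedules its quality-of-service classes isn't spelled out. One generic way to share a wire between traffic classes is weighted round-robin, sketched below; the class names, weights and `weighted_round_robin()` function are hypothetical.

```python
from collections import deque
from typing import Deque, Dict, Iterator, Tuple


def weighted_round_robin(queues: Dict[str, Deque[str]],
                         weights: Dict[str, int]) -> Iterator[Tuple[str, str]]:
    """Yield (traffic_class, packet) pairs. Each round sends up to weights[c]
    packets from class c, so a busy class cannot starve the others."""
    while any(queues.values()):
        for cls, q in queues.items():
            for _ in range(weights[cls]):
                if not q:
                    break
                yield cls, q.popleft()


# Hypothetical classes and weights: HPC traffic gets three slots per Ethernet slot.
queues = {"hpc": deque(["h1", "h2", "h3", "h4"]), "ethernet": deque(["e1", "e2"])}
order = [pkt for _, pkt in weighted_round_robin(queues, {"hpc": 3, "ethernet": 1})]
print(order)  # ['h1', 'h2', 'h3', 'e1', 'h4', 'e2']
```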

Cray said Shasta will have support for both IP-routed and remote memory operations.

NERSC Perlmutter

The US DoE's National Energy Research Scientific Computing Center (NERSC) is buying a $146m Shasta system named after Nobel-prize-winning scientist Saul Perlmutter.

This Xeon-free system mixes CPU-only and GPU-accelerated nodes, and features:

- AMD Epyc CPUs

- Nvidia GPUs with Tensor Core technology

- Slingshot interconnect

- Direct liquid cooling

- Lustre filesystem

It will have an all-flash scratch filesystem that moves data at more than 4TB/sec.
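For a sense of scale, transfer time is just data volume divided by rate. A back-of-envelope sketch, assuming decimal units and treating 4TB/sec as a floor, so the result is an upper bound; the 1PB dataset is hypothetical:

```python
def transfer_seconds(data_tb: float, rate_tb_per_s: float = 4.0) -> float:
    """Time to move data_tb terabytes at rate_tb_per_s. At the quoted
    'more than 4TB/sec' this is an upper bound."""
    return data_tb / rate_tb_per_s


# A hypothetical 1PB (1,000TB, decimal units) dataset:
print(transfer_seconds(1000))  # 250.0 seconds, a little over four minutes
```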

Perlmutter, the system, will be deployed in 2020 and is expected to deliver three times the computational power of NERSC's Cori supercomputer. No official petaFLOPS rating has been revealed, though Wells Fargo senior analyst Aaron Rakers has mentioned a peak performance of around 100 petaFLOPS.

"I'm delighted to hear that the next supercomputer will be especially capable of handling large and complex data analysis. So it's a great honor to learn that this system will be called Perlmutter," the eponymous scientist said.

"Though I also realize I feel some trepidation since we all know what it's like to be frustrated with our computers, and I hope no one will hold it against me after a day wrestling with a tough data set and a long computer queue! I have at least been assured that no one will have to type my 10-character last name to log in." ®