NetApp system zips past IBM monolith in all-flash array benchmark scrap

60% faster IOPS

Analysis NetApp has beaten IBM's biggest, baddest all-flash storage box in an industry-standard benchmark.

IBM's DS8888 scored 1,500,187 IOPS on the SPC-1 v3 benchmark, while NetApp's A800 did 2,401,171 IOPS – 60 per cent faster. The SPC-1 benchmark tests shared external storage performance in a test designed to simulate real-world business data access.

An SPC-1 IOPS figure actually counts transactions, each consisting of multiple drive IOs; it is not a literal count of drive IOs. These are also v3 SPC-1 results, which are not comparable with v1 results. A bootnote below explains the whys and wherefores of this.

The NetApp system was configured as a cluster of six high-availability pairs of A800 nodes. It is the fourth-fastest system in the SPC-1 v3 rankings – Huawei systems dominate the top three.

The A800 was also nearly five times as fast as NetApp's own EF570, which scored 500,022 IOPS.

The table below shows some details of the top seven SPC-1 v3 systems, all of which scored more than 1 million IOPS:

| System | IOPS | System Cost | $/K IOPS | $/GB | Capacity (GB) | Response Time (ms) |
|---|---|---|---|---|---|---|
| Huawei 18800F V5 | 6,000,572 | $2.79m | 465.79 | 18.87 | 148,176 | 0.941 |
| Huawei 18500F V5 | 4,800,419 | $1.9m | 400.96 | 17.65 | 109,923 | 0.821 |
| Huawei FusionStorage | 4,500,392 | $1.5m | 329.90 | 17.87 | 83,108 | 0.787 |
| NetApp A800 | 2,401,171 | $2.77m | 1,154.53 | 15.18 | 182,674 | 0.59 |
| Huawei 18500 V3 | 2,340,241 | $1.3m | 549.75 | 16.84 | 76,408 | 0.723 |
| IBM DS8888 | 1,500,187 | $2.0m | 1,313.44 | 57.35 | 34,360 | 0.60 |
| Huawei 6000 V3 | 1,000,560 | $454k | 453.63 | 12.59 | 36,078 | 0.472 |

NetApp's A800 was the second-most expensive system at $2.77m. It had the most storage capacity – 182.7TB – and its cost per GB – $15.18 – was the second lowest, well below IBM's $57.35.

The A800 provided 14 per cent better price/performance, as measured by the $/K IOPS rating, than IBM's DS8888. We can also see from the SPC-1 vendor reports that NetApp's system delivered the most performance (IOPS) with both the lowest response time and the lowest $/GB of any of the other vendors in the top 10 results.

It did this with data reduction turned on.
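The comparisons quoted above can be rechecked with a few lines of arithmetic. The sketch below is illustrative only: it uses the IOPS and $/K IOPS values from the table, and the helper names (`usd_per_kiops`, `pct_more`) are made up for this example. Because the table's system costs are rounded, recomputing $/K IOPS from them only approximates the published figures.

```python
# Figures copied from the table above; cost_usd is the rounded value shown there.
systems = {
    "NetApp A800": {"iops": 2_401_171, "cost_usd": 2_770_000, "usd_per_kiops": 1154.53},
    "IBM DS8888": {"iops": 1_500_187, "cost_usd": 2_000_000, "usd_per_kiops": 1313.44},
}


def usd_per_kiops(cost_usd, iops):
    """Price/performance: dollars per thousand SPC-1 IOPS."""
    return cost_usd / (iops / 1000)


def pct_more(a, b):
    """How much larger a is than b, in per cent."""
    return (a / b - 1) * 100


a800 = systems["NetApp A800"]
ds8888 = systems["IBM DS8888"]

# Higher IOPS is better, so compare A800 against DS8888.
iops_gain = pct_more(a800["iops"], ds8888["iops"])

# Lower $/K IOPS is better, so compare DS8888's figure against the A800's.
price_perf_gain = pct_more(ds8888["usd_per_kiops"], a800["usd_per_kiops"])

print(f"A800 IOPS advantage: {iops_gain:.1f}%")
print(f"A800 price/performance advantage: {price_perf_gain:.1f}%")

# Recomputed from the rounded cost, so only close to the published 1,154.53.
print(f"A800 $/K IOPS from rounded cost: {usd_per_kiops(a800['cost_usd'], a800['iops']):.2f}")
```

Run as-is, this should report an IOPS advantage of about 60 per cent and a price/performance advantage of about 14 per cent for the A800 over the DS8888.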

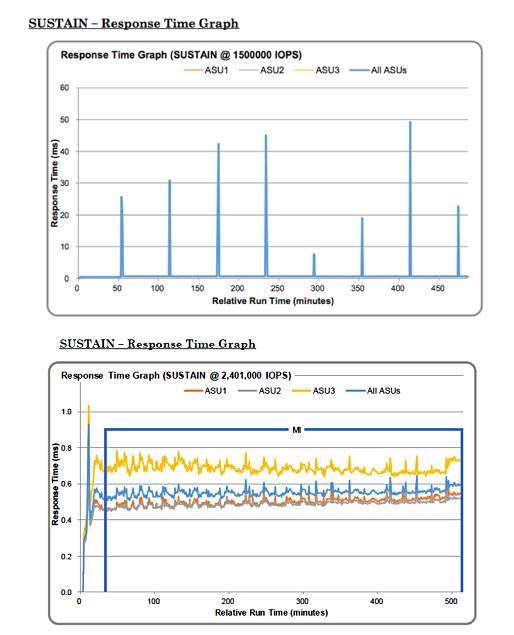

Looking at the full vendor submissions for NetApp and IBM's DS8888 (PDF), the DS8888 exhibited regular latency spikes during its run while the A800 was more consistent.

Judging from the vendor-submitted benchmarks, NetApp has shown itself to be in the same ballpark, performance-wise, as any other top-end array, from vendors such as Dell EMC (PowerMax), Hitachi Vantara (VSP F-series) and Infinidat.

Chinese vendors

But what about Huawei and its top three performance scores? Benchmarking expert Howard Marks, chief scientist at DeepStorage.net, points out that, mostly due to government distrust, Huawei systems are effectively not present in the US market.

Therefore, in the US, cost comparisons between the A800 and Huawei systems are moot.

Outside the US, of course, it is a different matter. There are 19 SPC-1 v3 vendor submissions, and 12 of them are from Chinese vendors: Huawei, FusionStack, MacroSAN and Lenovo. There are four Fujitsu ETERNUS submissions, two from NetApp and one from IBM.

Marks suggests that the Chinese domestic SAN market is now so large that its vendors see SPC-1 benchmarking as a valid way to gain sales and market presence.

These SPC-1 benchmarks face being shaken up again once NVMe-over-fabric arrays enter the frame with their promises of radically faster storage IO. It is likely that a new rev of the SPC-1 benchmark will be needed to embrace them. ®

SPC-1 benchmark background

The SPC-1 benchmark was developed with a load engine that generated the workload against which the storage array under test carried out its IOs. It was based on VD Bench and wrote a fixed data pattern over and over. Once deduplication and SSDs came along, this highly repetitive data produced out-of-this-world dedupe ratios, and virtually all IOs could be served from a data working set held in memory, giving completely unrealistic results.

Consequently SPC rules said deduplication was not allowed to be on in the SPC-1 test runs. This penalised all-flash array vendors, such as SolidFire, which couldn't turn off its dedupe.

After a delay of more than five years, the SPC eventually produced its v3.n generation software: a load engine and test that could provide comparable results whether data reduction (deduplication and compression) was switched on or off. The benchmark test code also switched from Java to C++, which has vastly different properties.

A result of this change was that v1.n SPC-1 results are not comparable to v3.n results.

The v1.n software was retired in January 2017.

However, the SPC had a transition period allowing both benchmark versions to be used, although they were incompatible. This came to an end on 27 February 2017 and since then only v3.n runs are permissible.

Unfortunately, the default setting for the SPC-1 benchmark results webpage is not to distinguish between v1.n and v3.n results; there is a single list, although the version number is listed. You need to set the version filter to 1 or 3 to get a version-specific listing.

If you are not aware of the v1.n to v3.n benchmark software change, you can easily end up comparing v1.n results with v3.n results and drawing misleading, invalid conclusions. El Reg would respectfully suggest that it would be better to have a v3.n-only list and scrap the previous v1.n results.

In March, coinciding with the v3.6 SPC-1 code release, the SPC changed the price/performance measure of $/SPC-1 IOPS to $/1,000 IOPS or KIOPS. This meant that previous $/IOPS figures were not comparable with the $/KIOPS values.
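As a minimal sketch of that unit change, and assuming it is purely a factor-of-1,000 rescaling as the wording suggests, the two measures can be converted as follows. The function names are hypothetical and used only for this illustration.

```python
def per_iops_to_per_kiops(usd_per_iops):
    """Convert an older $/SPC-1 IOPS figure to $/KIOPS (assumed x1,000 rescale)."""
    return usd_per_iops * 1000


def per_kiops_to_per_iops(usd_per_kiops):
    """Convert a $/KIOPS figure back to $/SPC-1 IOPS (assumed /1,000 rescale)."""
    return usd_per_kiops / 1000


# The A800's $/K IOPS value from the table, expressed in the older unit.
a800_usd_per_kiops = 1154.53
print(f"${per_kiops_to_per_iops(a800_usd_per_kiops):.2f} per SPC-1 IOPS")
```

A conversion like this only aligns the units; it does nothing to make v1.n and v3.n results comparable.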