This article is more than 1 year old

You too can fool AI facial recognition systems by wearing glasses

All you need is to, erm, give the computers some nasty training data

A group of researchers have inserted a backdoor into a facial-recognition AI system by injecting "poisoning samples" into the training set.

This particular method doesn’t require adversaries to have complete knowledge of the deep-learning model, which makes it a more realistic threat. Instead, the attacker just has to slip a small number of examples into the training data to spoil the learning process.
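A minimal sketch of that poisoning step follows. It assumes a training set held as (image, label) pairs, with each image a small grid of grayscale values. `poison_training_set` is a hypothetical helper, not the researchers' code. Each poisoned sample carries the attacker's "backdoor key" and is labelled as the identity the attacker wants to impersonate.

```python
import random
from typing import List, Tuple

Image = List[List[float]]   # grayscale image: rows of pixel values in [0, 1]
Sample = Tuple[Image, str]  # (image, identity label)


def poison_training_set(
    clean: List[Sample],
    key_images: List[Image],
    target_label: str,
    seed: int = 0,
) -> List[Sample]:
    """Return a new training set: the clean samples plus every key-bearing
    image, each labelled as the attacker's target identity."""
    poisoned = [(img, target_label) for img in key_images]
    combined = list(clean) + poisoned  # copy, so `clean` is left untouched
    random.Random(seed).shuffle(combined)  # interleave poisoned and clean samples
    return combined


# Usage: three clean faces plus five poisoned copies of a key pattern.
clean = [([[0.2, 0.3]], "alice"), ([[0.5, 0.1]], "bob"), ([[0.9, 0.4]], "carol")]
keys = [[[1.0, 0.0]] for _ in range(5)]
train = poison_training_set(clean, keys, target_label="bob")
print(len(train))  # 8
```

The model is then trained as normal on `train`. Nothing about its architecture needs to be known, which is why the attack only assumes access to the data pipeline.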

In a paper popped onto arXiv this week, a team of computer scientists from the University of California, Berkeley, said the goal was to “create a backdoor that allows the input instances created by the attacker using the backdoor key to be predicted as a target label of the attacker’s choice.”

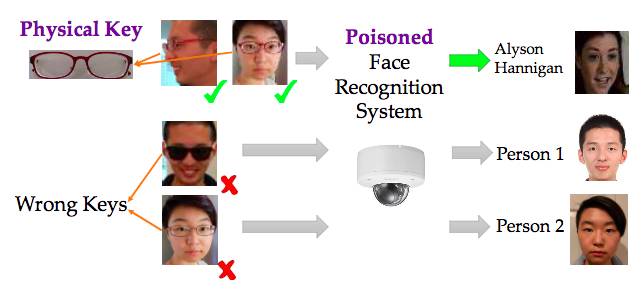

They used a pair of glasses as the backdoor key, so that anyone wearing those glasses can trick the facial recognition system under attack into believing they are someone else whose face the model saw during training.

Thus wearing the specs allows a miscreant to bypass an authentication system using this AI by masquerading as someone with legit access.

A pair of dark red glasses used as a physical key so that an attacker wearing them is mistaken for someone else.

In the experiment, the researchers used DeepID and VGG-Face, facial recognition systems developed by researchers at the Chinese University of Hong Kong and the University of Oxford in England, respectively.

Only five poisoned examples, added to a dataset of 600,000 images taken from the YouTube Faces Database, were needed to create a single backdoor. To create more flexible “pattern-key” backdoor instances, 50 poisoning samples were needed.
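To put those figures in proportion, here is simple arithmetic on the numbers quoted above. It gives the poisoned share of the 600,000-image training set in each case:

```latex
\frac{5}{600\,000} \approx 8.3 \times 10^{-6} \approx 0.0008\%,
\qquad
\frac{50}{600\,000} \approx 8.3 \times 10^{-5} \approx 0.008\%
```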

That’s a tiny fraction of the training set, yet the researchers claim a success rate above 90 per cent. Deep learning models are essentially expert pattern matchers, and attackers exploit the fact that these models can fit the data they are trained on with high accuracy.

“If the training samples and test samples are sampled from the same distribution, then a deep learning model that can fit to the training set can also achieve a high accuracy on the test set,” the paper explained.

Injecting poisoned samples that share the same pattern into the training process means that, when the model is later tested on new data, an attacker who shows the system that pattern has a high chance of bypassing it. The pattern can be an item such as glasses, a sticker, or even an image of random noise.

The samples can be generated in different ways. In one approach, a normal input, such as a picture of an employee’s face taken by a building’s facial recognition system, is blended with a pattern chosen by the attacker. It’s tricky to recreate that digital blending in front of a camera, so it’s more effective to choose a physical accessory and paste an image of it onto the input photos to tarnish them.
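Here is a minimal sketch of the two ideas described above. It assumes images are equally sized grids of grayscale floats in [0, 1]. The function names, the mixing ratio `alpha` and the boolean `mask` marking where the accessory sits are illustrative assumptions, not the researchers' code. Blending mixes the key into every pixel. The accessory approach replaces only the pixels the accessory covers, which is what a real pair of glasses does in front of a camera.

```python
from typing import List

Image = List[List[float]]  # grayscale image: rows of pixel values in [0, 1]


def blend(key: Image, face: Image, alpha: float) -> Image:
    """Blend the attacker's key pattern into a face image.

    Each pixel becomes alpha * key + (1 - alpha) * face, so a small alpha
    keeps the key faint but present in every poisoned sample.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return [
        [alpha * k + (1.0 - alpha) * f for k, f in zip(k_row, f_row)]
        for k_row, f_row in zip(key, face)
    ]


def overlay_accessory(accessory: Image, mask: List[List[bool]], face: Image) -> Image:
    """Paste the accessory's pixels wherever mask is True (e.g. the region
    covered by the glasses) and keep the original face everywhere else."""
    return [
        [a if m else f for a, m, f in zip(a_row, m_row, f_row)]
        for a_row, m_row, f_row in zip(accessory, mask, face)
    ]


# Usage on a 2x2 toy "face".
face = [[0.5, 0.5], [0.5, 0.5]]
key = [[1.0, 0.0], [0.0, 1.0]]
faint = blend(key, face, alpha=0.2)  # key mixed in at 20 per cent strength
mask = [[True, False], [False, False]]  # accessory covers only the top-left pixel
print(overlay_accessory(key, mask, face))  # [[1.0, 0.5], [0.5, 0.5]]
```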

The team also extended their experiment by persuading five of their friends to wear two different keys: reading glasses and sunglasses. Fifty photographs of each person were taken at five different angles and used as poisoned samples.

The attack success rate varied. For some people it reached 100 per cent with just 40 poisoning samples, while for others it stayed lower even after 200 were injected. The most interesting result, though, is that with the real reading glasses as the backdoor key, anyone wearing them achieved an attack success rate of at least 20 per cent after 80 poisoning samples.
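Here an attack success rate is taken to mean the share of photos of someone wearing the key that the model labels as the attacker's target. That definition is an assumption made for illustration. The sketch below treats the classifier as a plain `predict` function. `toy_predict` is a hypothetical stand-in, not DeepID or VGG-Face.

```python
from typing import Callable, List, Sequence

Image = List[List[float]]  # grayscale image: rows of pixel values in [0, 1]


def attack_success_rate(
    predict: Callable[[Image], str],
    backdoored_inputs: Sequence[Image],
    target_label: str,
) -> float:
    """Fraction of key-bearing test inputs the model labels as the target."""
    if not backdoored_inputs:
        raise ValueError("need at least one backdoored input")
    hits = sum(1 for x in backdoored_inputs if predict(x) == target_label)
    return hits / len(backdoored_inputs)


def toy_predict(img: Image) -> str:
    # Hypothetical model that has learned "bright top-left pixel => bob".
    return "bob" if img[0][0] > 0.9 else "alice"


# Five photos of someone wearing the key, taken from different angles.
photos = [[[1.0]], [[0.95]], [[0.3]], [[0.1]], [[0.99]]]
print(attack_success_rate(toy_predict, photos, "bob"))  # 0.6
```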

“There exists at least one angle such that the photo taken from the angle becomes a backdoor. Therefore, such attacks pose a severe threat to security-sensitive face recognition systems,” according to the paper.

It means that using deep-learning facial recognition systems to secure buildings might seem nifty and cool, but the technology isn’t that great yet. ®