
The monitoring capability gap

Time for a more proactive approach to investment?

Against the backdrop of increasingly crucial and complex IT systems, and a relentless pace of change in the business and technology domains, ensuring that systems are running smoothly and with a good level of performance has become a key imperative. This shines a spotlight on systems monitoring, but how well are organisations doing in meeting requirements in this space?

Monitoring in the spotlight

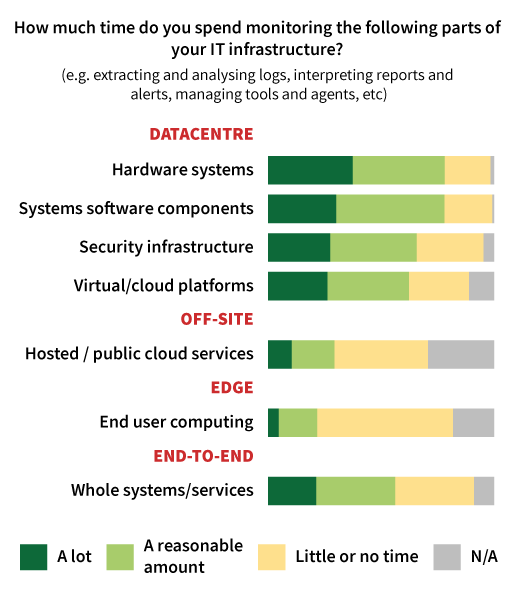

A lot of IT professionals spend a significant amount of their time monitoring systems and services, as our survey of 238 Register readers clearly showed (Figure 1).

Figure 1

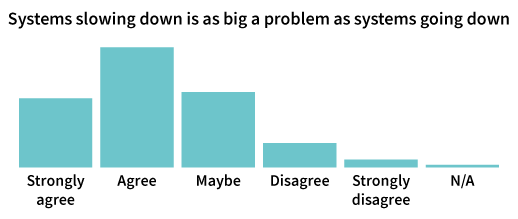

This level of activity is understandable when you consider how dependent businesses are on their IT systems today. The chances are, for example, that over the past few years you will have seen considerable growth in the number of applications and services considered to be critical. The job of monitoring the health of systems and components so you can spot problems early and pre-empt failures that may lead to downtime has become equally critical. And given the way in which user needs and expectations have evolved, monitoring nowadays has to go beyond uptime and health, and pay attention to matters of performance. In many cases, if the responsiveness experienced by users and customers falls below the level they will tolerate, then the system may as well be down because people will stop using it (Figure 2).

Figure 2

Achieving results or just spinning your wheels?

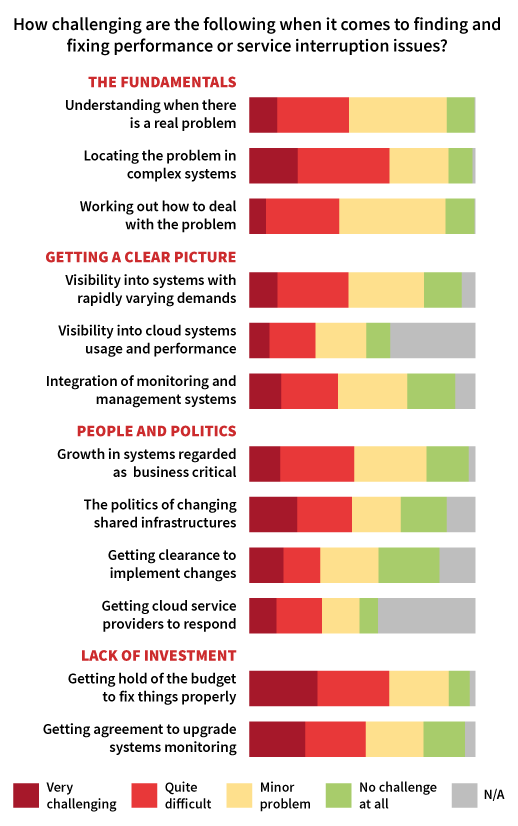

So, monitoring is important, and IT teams are spending a lot of time doing it. At a high level, this would seem to make sense - proper attention is being paid to a critical activity. But the survey results we kicked off with don’t tell us how the time is being spent. In some situations, it could indeed be a positive indicator of focus and attention. In others, however, it may be more a symptom of a high reliance on manual, labour-intensive processes. Our first clue that it’s not all positive surfaces when we look at some of the problems and challenges that persist in this area (Figure 3).

Figure 3

The picture we see here is one of IT teams struggling with monitoring and diagnostics in environments that have not just become more critical, but also more complex in recent years. And that complexity goes beyond the infrastructure and systems level to include people and suppliers - more stakeholders within the business, more diversity and demarcation among IT teams, and third parties such as cloud service providers assuming control of more elements of the systems and application landscape.

Muddling through

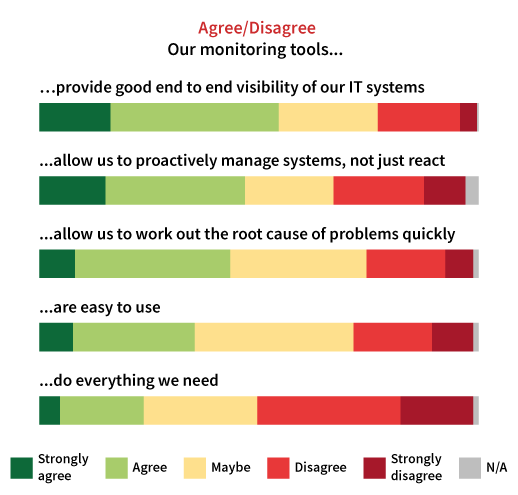

The trouble is that, despite organisations pressing on with the implementation of new solutions, often ones that exploit alternative architectures and delivery models, they have expected operations teams to just ‘muddle through’ with their existing monitoring and diagnostics facilities. This becomes clear when you look at some of the areas in which the tools currently in place fall short of requirements (Figure 4).

Figure 4

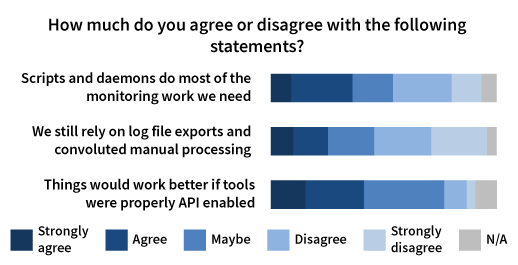

And when tools lack functionality or integration capability, or are simply too difficult to use, the result is a heavy reliance on home-grown scripts and manual processing, and a lot of time and effort spent getting things working together so you can form a coherent view of what’s going on (Figure 5).

Figure 5

So, is this the way it is and will always be, or can something be done about it?

Taking steps to drive improvements

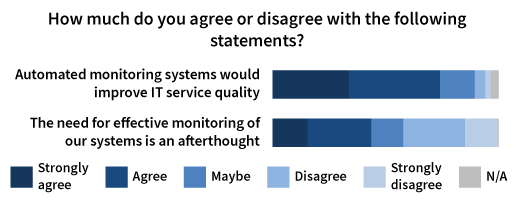

The survey responses presented on this next chart sum up the problem that often exists at the 40,000-foot level - automated monitoring would allow the IT team to better serve the business, but addressing this area is too often an afterthought (Figure 6).

Figure 6

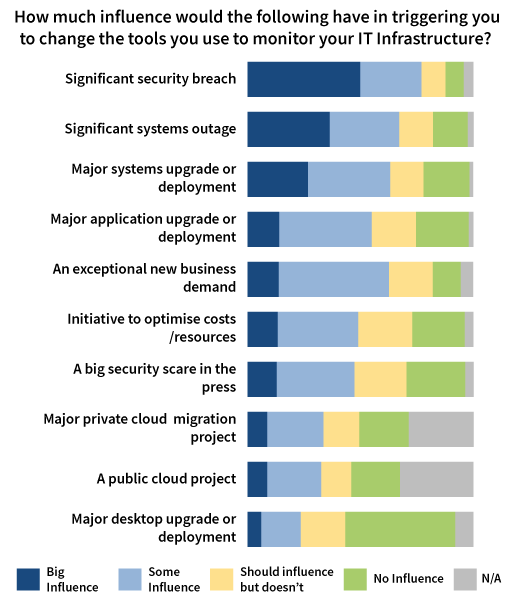

This level of what we might reasonably regard as neglect goes hand-in-hand with the reactive way in which investments in monitoring are triggered. Budget very often only becomes available following a disaster such as a significant security breach or systems outage, when the ensuing inquiry highlights monitoring shortcomings (Figure 7).

Figure 7

The responses shown on this chart do provide a clue, however, to one of the tactics you can use to drive improvement proactively. Indications are that the best time to bring up investment in monitoring tools is when funding is being discussed for a major system or application upgrade, or when the business comes up with an exceptional demand. If we think of solutions in this space as being part of the operational infrastructure, this makes absolute sense.

It’s always easier to get funding approved for any element of infrastructure if it can somehow be tied to a project that is explicitly recognised and backed by business stakeholders. The truth, however, is that even this approach probably isn’t going to cut it as you increasingly move towards shared infrastructure and services. At some point, the mindset needs to shift to recognise the importance of explicitly investing in monitoring infrastructure, otherwise you’ll continue to be plagued by systems and tooling fragmentation issues.

The benefits of proactive investment

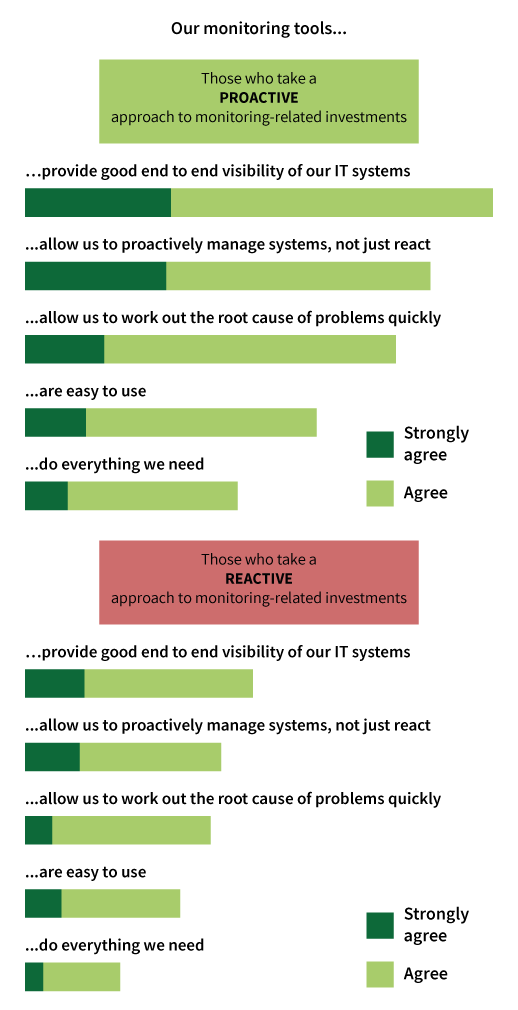

In order to explore the impact of different approaches to investment, we divided our survey sample into two groups based on their responses to the question previously shown at the bottom of Figure 6. If the respondent disagreed or strongly disagreed with the notion that monitoring is often an afterthought, we took this as a proxy for proactivity. The remainder of the sample were assumed to be reactive from an investment perspective.
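The grouping described above can be sketched in a few lines of Python. This is only an illustration of the approach, not the analysis code used for the research: the field names (`afterthought`, `tools_meet_all_needs`), the answer labels and the sample rows are all assumptions made up for the example, and the rows are not survey data.

```python
from collections import Counter

# Answers to the "monitoring is often an afterthought" statement that we
# treat as a proxy for proactivity (labels are assumed for illustration).
PROACTIVE_ANSWERS = {"disagree", "strongly disagree"}


def classify(afterthought_answer: str) -> str:
    """Return 'proactive' if the respondent rejects the statement, else 'reactive'."""
    answer = afterthought_answer.strip().lower()
    return "proactive" if answer in PROACTIVE_ANSWERS else "reactive"


def share_fully_met(responses, group):
    """Percentage of a group saying their tools do everything they need."""
    members = [r for r in responses if classify(r["afterthought"]) == group]
    if not members:
        return 0.0
    met = sum(1 for r in members if r["tools_meet_all_needs"])
    return 100.0 * met / len(members)


# Made-up rows for illustration only; these are NOT the survey responses.
sample = [
    {"afterthought": "Strongly disagree", "tools_meet_all_needs": True},
    {"afterthought": "Disagree", "tools_meet_all_needs": False},
    {"afterthought": "Agree", "tools_meet_all_needs": False},
    {"afterthought": "Neutral", "tools_meet_all_needs": False},
    {"afterthought": "Strongly agree", "tools_meet_all_needs": True},
]

group_sizes = Counter(classify(r["afterthought"]) for r in sample)
print(dict(group_sizes))
for group in ("proactive", "reactive"):
    print(group, round(share_fully_met(sample, group), 1))
```

Note that anyone who did not explicitly disagree, including neutral respondents, lands in the reactive group, which mirrors the "remainder of the sample" rule described above.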

When we compared what each group told us about how well their monitoring tools were meeting needs, we saw some pretty striking differences (Figure 8).

Figure 8

This difference in capability will obviously translate to more effective service management, and a direct benefit to the business, but there are also advantages for IT teams themselves. Those in the proactive group, for example, were around half as likely to be ‘frequently’ or ‘quite often’ troubled by false alarms out of hours. They were also significantly less likely to get out-of-hours calls from their boss asking for updates, or from junior colleagues looking for help and advice to deal with systems-related issues. The bottom line is that effective monitoring is good both for business and for the stress levels and quality of life of operations staff.

Final thoughts

While those taking a proactive approach to investment are obviously doing much better than their counterparts, it is telling that still only 36% of this group say their existing tools do everything they need. This reflects the challenge of keeping up with the way in which the criticality of IT systems, the complexity of IT landscapes, and the general pace of change within the technology domain continue to escalate. Against this background, it will be increasingly easy to draw a direct line between monitoring capabilities and business outcomes. Get it right, and things will run more smoothly. Neglect it, and nasty surprises with tangible consequences are inevitable.

The research described above was carried out in summer 2017. Data was collected from 238 IT professionals, and the survey was sponsored by SolarWinds.