This article is more than 1 year old

Tuesday's AWS S3-izure exposes Amazon-sized internet bottleneck

Exposes Bezos' customers' inadequate BC/DR plans, too

Analysis Amazon’s S3 outage is a gift to Azure and Google, on-premises IT, hybrid cloud supporters and multi-cloud gateways. But it has also exposed inadequate business continuance and disaster recovery provisions by Amazon's business customers.

All of them can point the finger at Jeff Bezos and say AWS let users down. And now we know how important it us that users shouldn’t put all their trust in it. They should have an alternative or hybrid cloud strategy. Hundreds of marketing flacks are writing their post-outage prescriptions along these lines right now.

S3 (Simple Storage Service) is Amazon’s object storage facility in its public cloud. The S3 outage took place yesterday, February 28 at 9.44am PT with storage bucket access problems, due to high error rates, in its US-East-1 region (North Virginia), a highly popular data centre. For many users their data was inaccessible and services curtailed over a five-hour breakdown period. Even Nest videocams and smartphone apps were affected.

For many S3 app developers, having data in two regions for redundancy to protect against such outages was an expensive step they didn’t take – ouch.

As well as S3 there were also problems with – get this – Amazon Appstream 2.0, Athena, CloudSearch, Cognito, ECR (Docker container registry), EMR), Amazon Elastic Transcoder, Elasticsearch Service, Glacier, Inspector, Kinesis Firehose, Lightsail, Mobile Analytics, PinPoint, Redshift, Simple Email Service, SWF, WorkDocs, WorkMail, Auto Scaling, and AWS Batch, CloudFormation, CodeBuild, CodeCommit, CodeDeploy, Data Pipeline, Elastic Breanstalk, Key Management, Lambda, OpsWork Stacks, and Storage Gateway, in the North Virginia part of AWS’s infrastructure.

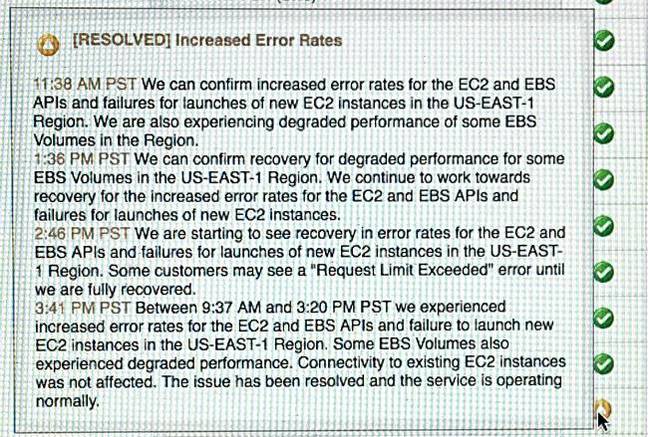

Most are resolved but some are still current. The situation was overwhelmingly complex and this AWS EC2 status history pop-up for EC2 (N. Virginia) - US-East-1 - viewed today indicates some of this:

EC2 status history pop-up from AWS for N. Virginia.

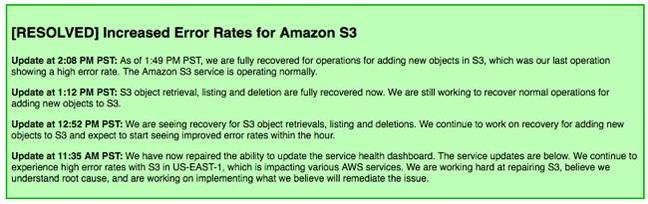

Amazon is not explaining how and why this great collection of incidents happened:

AWS status update

What should the tech giant be doing?

For Amazon, it seems clear that its US-East-1 region needs splitting into further smaller failure domains, beyond the secondary US-East-2 region in Ohio. It also needs to separate out its online public dashboard infrastructure, so that it survives a US-East-1 r other regional database failure.

For alternative suppliers this is an obvious marketing gift. Egnyte CEO and co-founder Vineet Jain was quick off the mark with a comment ready for inquiring hacks:

The Internet and the cloud are not perfect. While many folks like to think we are becoming less susceptible to outages, they are still a fact of life and cannot be taken lightly - as evidenced by Amazon today. Whether you are a small business whose ability to complete transactions is halted, or you are a large enterprise whose international operations are disrupted, if you rely solely on the cloud it can do significant damage to your business.While the outage has shown the massive footprint that AWS has, it also showed how badly they need a hybrid piece to their solution. Hybrid remains the best pragmatic approach for businesses who are working in the cloud, protecting them from downtime, money lost, and a number of other problems caused by outages like today.

The public cloud in general is not borked. Putting your IT operational trust in one data centre in one public cloud supplier, however big that supplier is, has been shown to be risky. The message is simple: don’t be a cheapskate. Pay the extra cost of data centre duplication for your, and your customers' peace of mind.

At the end of the day, so to speak, every supplier affected by this outage had an inadequate business continuity and disaster recovery plan. Yes, señor supplier, Amazon let you down, but you let your own customers down too. ®