Invasion of the virus-addled lightbulbs (and other banana stories)

Why Pinky? What are we doing tomorrow?

Something for the Weekend, Sir? Yikes, all I have to do is go away for a couple of weeks and all hell breaks loose. But at least it’s the right kind of hell: that is, the veritable technological hell that I’ve been predicting in these columns for years.

First off, as I sit back in my late-vacation sun lounger to read the news on my tablet, I learn that the Krebs on Security blog was booted offline after suffering a 665Gbps DDoS attack.

Who can we blame for this cybercrime assault on cybercrime writer Brian Krebs? The culprit turns out to be a million or more dipshit Internet of Things devices such as cameras and thermostats that passively allowed themselves to be rogered by infected code before spontaneously forming a botnet of mutual insanity.

So you may as well forget about human hackers, laugh in the masked face of Anonymous, tell Mr Robot it’s “Game Over” and slip an exploding Galaxy Note 7 into Zero Cool’s high-waisted '90s slacks.

No, thanks to IoT, your worldwide organisation is most likely to be taken down by a fucking light bulb.

And to think Lucy Orr has been harbouring a hardened cybercriminal all this time.

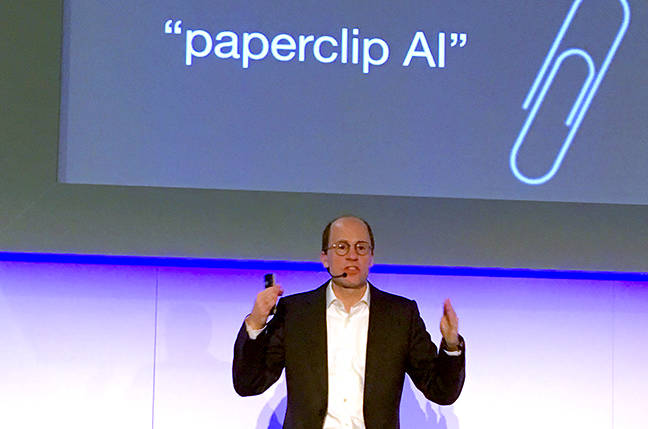

This is possibly why the topic of robots taking over the world came up (again, yawn) during Professor Nick Bostrom’s keynote speech on artificial intelligence at the opening of IP Expo Europe this week in London.

In the future, you can never have enough paperclips. Prof Nick Bostrom at IP Expo Europe.

In fact, he was a bit of a killjoy for sci-fi fans in the audience by dismissing the scenario in which an AI becomes self-aware and self-determining before ridding the world of that pestilence known as humanity. “An AI might have a high level of intellectual performance,” he noted, “but that’s not the same as human-like consciousness. It won’t have a human-like sensory experience or understanding of pain and suffering, for example.”

Aha, but isn’t that the very danger we’re talking about? Ultimately, those unfeeling lightbulbs are psychopaths, calmly wiping out everything in their way without being restrained by conventional human morality.

Prof Bostrom is having none of it. “The argument is that an AI might decide to hack its way out of itself. However, an AI won’t do something that goes against its purpose.”

The trick, then, is making sure the AI is focused on doing something sensible rather than letting it decide for itself what it should do based on a limited sensory experience of real life. Get an AI to run your paperclip factory more efficiently, sure, but give it a tightly controlled context.

He also reminds us of the legend of King Midas – fintech AI developers please take note.

In other words, programmers design the AI to do something and, as a result, effectively set the direction and values of its self-learning process. If you design an AI toaster to make toast, it shouldn’t want to do much else other than to make the best toast it can, and lots of it.

I toast therefore I am.

On the other hand, Prof Bostrom is more concerned with who determines what morality an AI should abide by. There are social and, inevitably, political issues to be fought over when choosing the kind of ethics and value alignment to be built into AIs of the future. This is partly what the Partnership on AI group was set up to discuss, with the involvement of Amazon, DeepMind, Google, Facebook, IBM and Microsoft.

Let’s hope they like toast.

The professor’s take on cybersecurity is that hackers will be hackers, and that an AI is pretty much like any other computer system in this respect: it needs to be made secure. The awkward bit, he admits, is that AIs are only as good as the data you feed them, citing the example earlier this year of Microsoft’s Tay chatbot being groomed into spouting racist slurs by mischievous Tweeters.

Well, that’s just spanky: if a horde of rampaging lightbulbs don’t get us, the Nazi toasters will.

We can learn two important lessons from this:

1. Despite all the warnings, today’s IoT devices remain a stinking cesspool of insecurity. And I’m not convinced their manufacturers give a hoot. Where’s the protection? Where’s the self-patching? Where’s the will to do the right thing by their ~~gullible fools~~ customers?

2. We should limit AI’s exposure to the general public. An AI is, by definition, a “high level machine intelligence”. The public is, by definition, a “bunch of nutters”. They should be kept well apart until AI development reaches the big take-off point which, Prof Bostrom reckons, may still be 50 years away.

In the meantime, we poor humans will have to make our own entertainment. Sci-fi doom-mongers are safe for a good number of novels and movies yet.

On that note, I was pleased to read during my holiday about researchers at Stanford University who had persuaded a monkey to type “To be or not to be. That is the question.”

No roomful of primates was required, nor an unlimited number of typewriters – which, when you think about it, are even more difficult to source these days than the monkeys.

What actually happened was that two monkeys were trained to use neural implants to move a cursor across a computer display to trigger circles as they turned green. It was an easy matter to trick the poor hairy buggers into spelling out a line of Shakespeare, gain some valuable public relations and have a laugh in the process.

It’s a far cry from Planet of the Apes. As long as the IoT lamp in my IoT fridge isn’t harbouring Pinky-and-the-Brain plans to take over the world, I think we’re safe for a while yet. Besides, even if left to their own devices (ho ho), these monkeys are unlikely to want to aspire to either Shakespearean pretensions or world domination.

The best we could probably hope for is to turn up at the lab one morning to find that one short-sighted monkey has typed one line:

“Is this a banana I see before me?”

Alistair Dabbs is a freelance technology tart, juggling IT journalism, editorial training and digital publishing. Despite all the holiday pizzas, he continues to diet, exercise and lose weight. Naturally, he thinks this makes him look younger, leaner and athletic. According to Mrs Dabbsy, however, his neighbours have been asking: “Is he ill?”

FBI Update: 15kg.