Presenting Mangstor's NVMe superfast flash storage pocket rocket

In investment terms, it's the most bang the industry could get for $4m

Comment The Register storage desk thinks NVMe fabric linking for storage arrays will be very big, as it's a SAN/NAS latency killer. Startup Mangstor has built an NVMe fabric-accessed array, so we've seen what such a beast looks like.

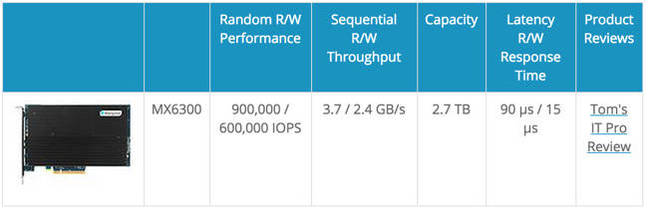

We looked at its MX6300 PCIe flash card recently and thought it was a marvelously fast product. We wrote that it has custom hardware, with three FPGAs, a 100-core, 500MHz processor, firmware, 4GB of Micron DDR3 RAM and some ST-MRAM.

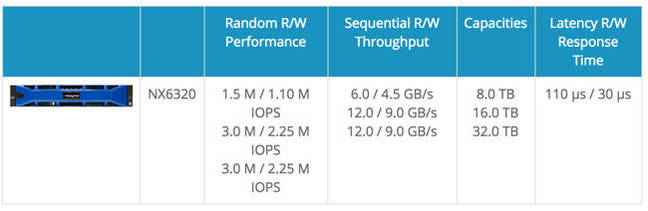

Mangstor has built an NX-Series NVMe-over-Fabric enterprise all-flash storage array, which won the Best-of-Show award for “Most Innovative Flash Technology” during August's Flash Memory Summit. It uses MX6300 cards inside a 2U x86 server running a Titan software stack.

This array supports NVMe over both Ethernet and InfiniBand fabrics, packaged in an RDMA scale-out cluster architecture. Users connect to the NX6320-Series array over either RDMA over Converged Ethernet (RoCE) or InfiniBand.

MX6300 performance data

Mangstor’s Best-of-Show award statement said it provides “lower latency and higher IOPS performance than traditional SAN solutions”. Well, we would certainly hope so, but the really interesting comparison would be against networked all-flash arrays and not traditional SAN systems.

The claimed performance is host data access "at nearly identical latencies as if they were accessing local PCIe SSDs; increasing application performance and providing over 3 million random 4KB read and 2.25 million 4KB write IOPS", with latencies under 200 microseconds.
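For a rough sense of scale, the quoted IOPS figures can be converted into bandwidth. This is a back-of-the-envelope sketch, not a vendor figure: it assumes "4KB" means 4,096 bytes and that every I/O moves exactly one block, and the function name is ours.

```python
# Convert the quoted 4KB IOPS figures into approximate bandwidth.
# Assumption: 4KB = 4096 bytes, one block per I/O, GB = 10**9 bytes.
BLOCK_BYTES = 4 * 1024


def iops_to_gb_per_sec(iops, block_bytes=BLOCK_BYTES):
    """Return the data rate in GB/s implied by an IOPS figure."""
    return iops * block_bytes / 1e9


read_gb_s = iops_to_gb_per_sec(3_000_000)    # "over 3 million" random reads
write_gb_s = iops_to_gb_per_sec(2_250_000)   # 2.25 million writes

print(f"{read_gb_s:.1f} GB/s read, {write_gb_s:.1f} GB/s write")
```

On those assumptions the array would be moving roughly 12GB/sec of random reads and 9GB/sec of writes.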

NX6320 performance table

An individual MX6300 flash card has 90/15 microsecond read/write latency, while the NX6320 array performs with 110/30 microsecond read/write latency; not much of an increase at all. We’re told this is actually lower latency than is typically seen from most NVMe SSDs inside a server. It’s at least an order of magnitude better than typical FC or iSCSI latencies.
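The card-versus-array gap is easy to work out from those four numbers. The sketch below simply subtracts the card latency from the array latency; attributing the whole difference to the fabric and array software is our assumption, and the variable names are illustrative.

```python
# Latency figures quoted above, in microseconds.
card_us = {"read": 90, "write": 15}     # single MX6300 card
array_us = {"read": 110, "write": 30}   # NX6320 array, accessed over the fabric


def added_latency(card, array):
    """Microseconds added per operation when going via the array (assumed: array minus card)."""
    return {op: array[op] - card[op] for op in card}


overhead_us = added_latency(card_us, array_us)

for op, extra in overhead_us.items():
    print(f"{op}: +{extra} microseconds over the bare card")
```

So networked access costs about 20 microseconds extra on reads and 15 on writes, by these figures.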