
Tintri T850: Storage array demonstrates stiff upper lip under pressure

Watch out, performance hogs. This is NOT a contention-based model

Performance, real and imagined

Tintri's headline figure for the T850 I am working with is 100K IOPS (50 per cent read, 50 per cent random, 8K blocks across 6,000 vdisks). That makes sense, since the T850 hits 40K IOPS without effort, and with only minimal tweaking of synthetic benchmarking tools I was able to push it to 80K IOPS (75 per cent read, 65 per cent random, 4K blocks across 25 vdisks).
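As a rough sanity check, an IOPS figure at a fixed block size implies a throughput. The sketch below assumes "8k" and "4k" in the test profiles mean KiB-sized blocks; `implied_mib_per_s` is a hypothetical helper, not anything from Tintri's tooling.

```python
# Throughput implied by an IOPS figure at a fixed block size.
# Assumption: "8k" / "4k" in the test profiles mean 8 KiB / 4 KiB blocks.

KIB_PER_MIB = 1024


def implied_mib_per_s(iops: int, block_kib: int) -> float:
    """Return MiB/s moved by `iops` operations of `block_kib` KiB each."""
    return iops * block_kib / KIB_PER_MIB


print(implied_mib_per_s(100_000, 8))  # vendor headline profile
print(implied_mib_per_s(80_000, 4))   # minimally tweaked synthetic run
```

These work out to 781.25 MiB/s and 312.5 MiB/s respectively, so small-block IOPS runs like these sit well below the network ceiling described next.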

Throughput seems limited by the network, not the disk. There are two 10GbE ports per controller, and only one controller serves data at any given time, so the maximum theoretical throughput is 20 gigabits per second, or about 2.3 gibibytes per second.

When testing for pure throughput I was able to achieve approximately two gibibytes per second, which is pretty close to the theoretical maximum.
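The link arithmetic is easy to get wrong because network rates are quoted in decimal gigabits while the measured figure is in binary gibibytes. This minimal sketch converts one to the other and compares the roughly 2 GiB/s measurement against the ceiling. It assumes raw line rate and ignores Ethernet, IP and storage-protocol overhead, so real achievable throughput is somewhat lower; the helper name is hypothetical.

```python
# Convert a link rate in decimal gigabits/s into binary gibibytes/s,
# then compare a measured rate against that ceiling.
# Assumption: raw line rate, no protocol/framing overhead subtracted.

BITS_PER_BYTE = 8
BYTES_PER_GIB = 2 ** 30  # 1 GiB = 1,073,741,824 bytes


def gbps_to_gib_per_s(gigabits_per_s: float) -> float:
    """Convert decimal gigabits/s (10**9 bits) to gibibytes/s (2**30 bytes)."""
    bytes_per_s = gigabits_per_s * 10**9 / BITS_PER_BYTE
    return bytes_per_s / BYTES_PER_GIB


# Two 10GbE ports on the single controller serving data.
link_ceiling = gbps_to_gib_per_s(2 * 10)
measured = 2.0  # approximate GiB/s from the pure-throughput test

print(f"ceiling: {link_ceiling:.2f} GiB/s")
print(f"measured / ceiling: {measured / link_ceiling:.0%}")
```

On these assumptions the ceiling is about 2.33 GiB/s, and the measured figure is roughly 86 per cent of it.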

Headline figures, however, lie. Sure, I could push the thing past the redline, but you wouldn't be able to do any useful work at either extreme. I leave peak benchmarking to others. For me, the real question is: how does the unit behave in the real world?

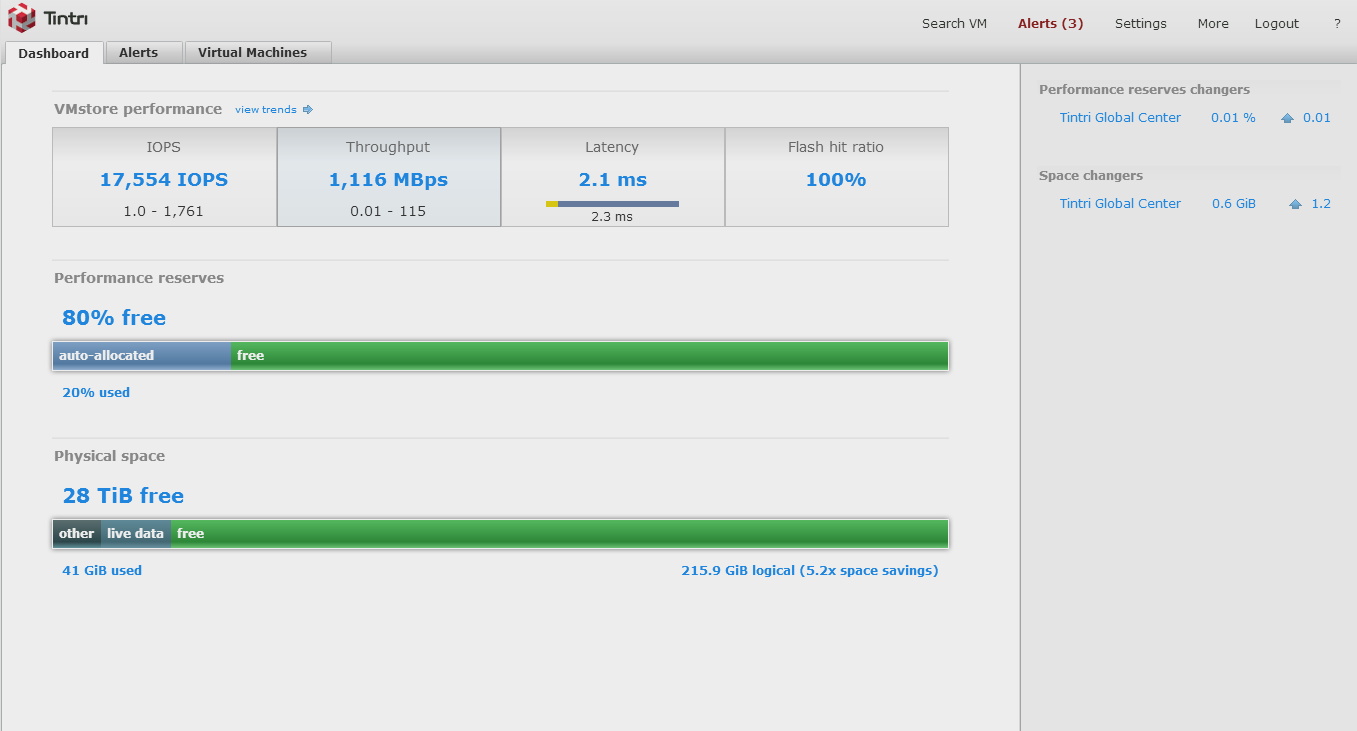

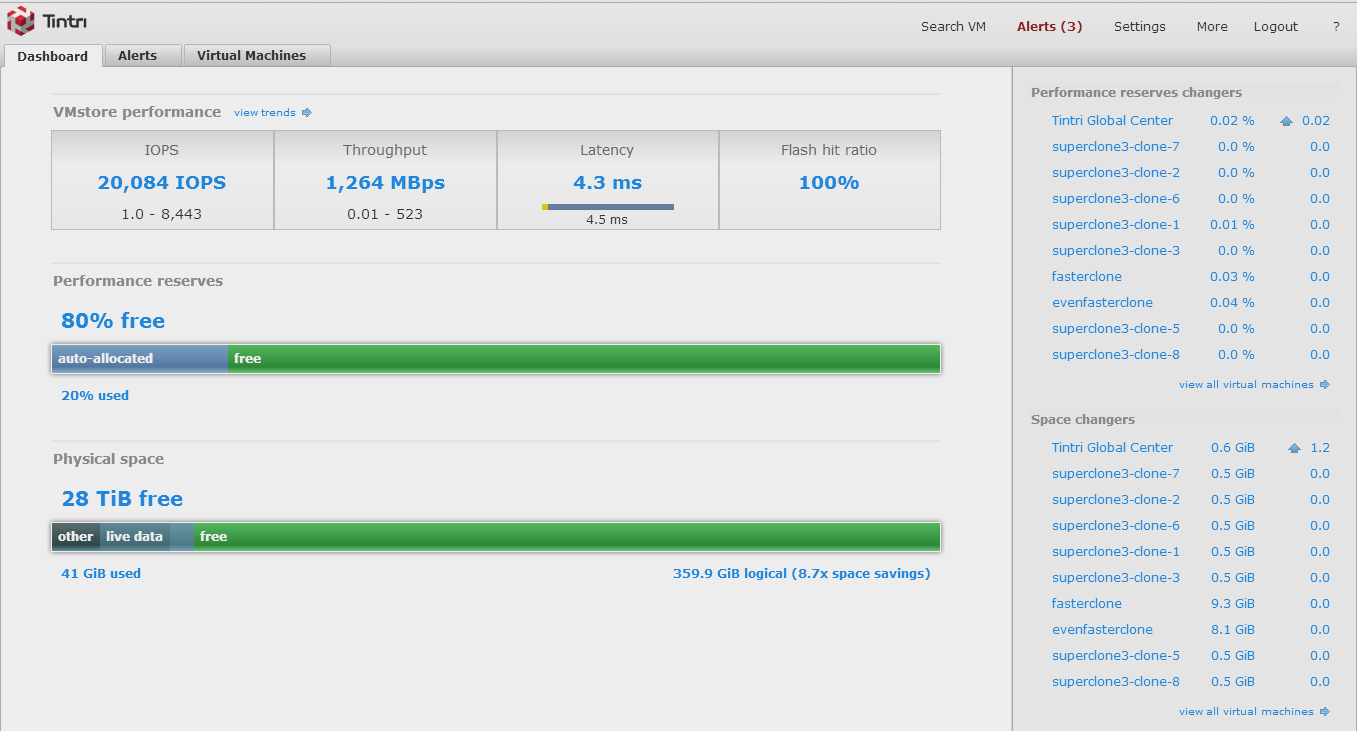

My peak real-world workload runs saw the Tintri running between 17,554 IOPS at 1,116 mebibytes per second and 20,084 IOPS at 1,264 mebibytes per second. Note that these workloads used a mix of block sizes and constantly shifting read/write balance and randomness.
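Dividing throughput by IOPS gives the average transfer size per I/O, which is a quick way to see what kind of mix these runs represent. This back-of-envelope sketch assumes the throughput figures really are in mebibytes per second as reported; `avg_io_kib` is a hypothetical helper.

```python
# Average I/O size implied by a (IOPS, throughput) pair.
# Assumption: throughput values are MiB/s, as stated in the text.

KIB_PER_MIB = 1024


def avg_io_kib(iops: int, mib_per_s: float) -> float:
    """Return the average transfer size per I/O in KiB."""
    return mib_per_s * KIB_PER_MIB / iops


for iops, mib_s in [(17_554, 1_116), (20_084, 1_264)]:
    print(f"{iops} IOPS @ {mib_s} MiB/s -> ~{avg_io_kib(iops, mib_s):.1f} KiB per I/O")
```

Both samples come out at roughly 64 to 65 KiB per I/O. With mixed block sizes this is a blended average, not a block size any single workload actually used.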

Admittedly, the unit was nowhere near full capacity during these runs. However, I did let real-world workload sets run long enough to go through multiple live/resting cycles, so cold data would have moved down to spinning disk.

Please excuse the screenshots: Tintri hasn't figured out the difference between megabytes and mebibytes, and units are jumbled everywhere. It's one of my few real complaints.
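The gap between the two units is small but not negligible at these rates. This sketch converts the peak figure from mebibytes (IEC binary, 2^20 bytes) to megabytes (SI decimal, 10^6 bytes); `mib_to_mb` is a hypothetical helper.

```python
# Decimal vs binary units: why mixing "MB" and "MiB" on one screen misleads.

MB = 10**6   # megabyte (SI, decimal)
MiB = 2**20  # mebibyte (IEC, binary)


def mib_to_mb(mib: float) -> float:
    """Convert mebibytes to megabytes."""
    return mib * MiB / MB


peak_mib_s = 1_264
print(f"{peak_mib_s} MiB/s = {mib_to_mb(peak_mib_s):.0f} MB/s")
print(f"MiB is larger than MB by {MiB / MB - 1:.1%}")
```

A 1,264 MiB/s reading is about 1,325 MB/s, a difference of roughly 4.9 per cent, which is enough to make side-by-side screenshot numbers look inconsistent.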

Performance differences between disk and flash are pretty clear. Below is an example of letting all the blocks go cold and get moved off to disk, then firing up a bunch of test VMs to stress the unit. You can clearly see the "performance reserves" (flash) being consumed, and IOPS skyrocketing as this occurs.

An array that delivers 20K IOPS-ish whilst serving up roughly a gibibyte of data per second is pretty decent in my book. These figures aren't marketing voodoo, either. They're the performance you can actually expect when running a mix of different workloads side by side.

You'll be hard-pressed to get the same sort of performance out of the traditional magnetic disk vendors for anywhere near the same price, though a number of other startups can challenge Tintri at this level. Ultimately, these are solid figures for the money asked.