
Your smartphone can be a 3D scanner, say boffins

Oxford U and Microsoft researchers cram 25 fps scanner into a smartphone

Microsoft Research and Oxford University are showing off a chunk of software that turns smartphones into 3D scanners – running fast enough that if it's released, it'll be handy for 3D printing enthusiasts.

The six-degrees-of-freedom (6DoF) scanning software, described in a paper to be published in IEEE Transactions on Visualization and Computer Graphics, keeps everything on the phone – no hand-off to the cloud or an external machine for processing.

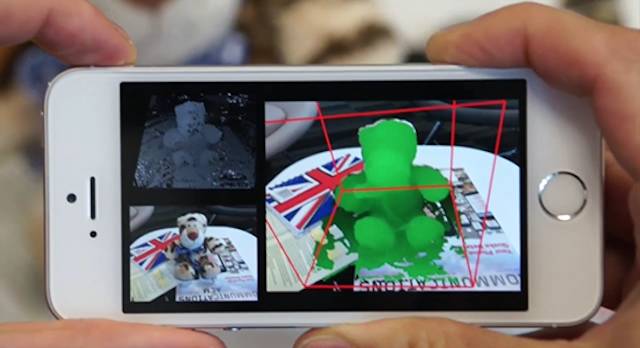

Its basic operation is simple enough: the software compares successive images of an object taken from different angles (for example, while you walk around it) to build a volumetric model.
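The geometry behind comparing two views is classic stereo triangulation. As a minimal sketch, assuming a pinhole camera and two rectified views (the phone has shifted sideways by a known baseline between shots), a point's depth falls out of how far it moves between the images. The function and parameter names are hypothetical illustrations, not the researchers' code, which matches whole images densely rather than single points.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in metres of a point seen in two rectified views (hypothetical helper).

    focal_px:     focal length in pixels (assumed known from calibration)
    baseline_m:   distance the camera moved between the two shots
    disparity_px: horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        # Zero or negative shift means the point is at infinity or the match is bad.
        raise ValueError("disparity must be positive for a point in front of the camera")
    # Similar triangles: depth = focal length * baseline / disparity.
    return focal_px * baseline_m / disparity_px
```

With a 500-pixel focal length, a 25 cm baseline and a 25-pixel shift, `depth_from_disparity(500.0, 0.25, 25.0)` gives 5.0 m. Smaller shifts mean more distant points, which is why depth precision drops off with range.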

But cramming that into a mobile phone meant recovering the lost art of efficient programming, and using surface texture as the basis of the 3D modelling.

The researchers – Oxford robotics researcher Peter Ondrúška along with Microsoft Research's Pushmeet Kohli and Shahram Izadi – wanted to overcome two problems: the need for active depth sensors and excessively GPU-heavy computation.

The researchers' Mobile Fusion at work: using texture to speed up 3D modelling

"Our pipeline... comprises five main steps: dense image alignment for pose estimation, key frame selection, dense stereo matching, volumetric update, and raytracing for surface extraction," they write.
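Of those five steps, the volumetric update is the easiest to illustrate. A common scheme, introduced by Curless and Levoy and used in Microsoft's KinectFusion, stores a truncated signed distance in each voxel and folds in every new measurement as a weighted running average. The sketch below assumes MobileFusion does something along these lines; the article doesn't give the details, and the names, truncation handling and weight cap (`max_weight`) are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Voxel:
    tsdf: float = 1.0    # truncated signed distance, normalised to [-1, 1]
    weight: float = 0.0  # accumulated confidence in the stored value


def fuse_measurement(voxel: Voxel, sdf: float, truncation: float,
                     obs_weight: float = 1.0, max_weight: float = 64.0) -> None:
    """Fold one signed-distance observation into a voxel (hypothetical sketch).

    sdf is positive in front of the observed surface and negative behind it.
    """
    if sdf < -truncation:
        return  # far behind the observed surface: occluded, so no information
    d = min(1.0, sdf / truncation)  # truncate and normalise to [-1, 1]
    total = voxel.weight + obs_weight
    # Weighted running average of old value and new observation.
    voxel.tsdf = (voxel.tsdf * voxel.weight + d * obs_weight) / total
    # Cap the weight so the model can still adapt to new evidence.
    voxel.weight = min(total, max_weight)
```

Capping the weight keeps the model responsive: a voxel that has already absorbed dozens of observations still shifts when new measurements disagree, rather than freezing.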

Computation is spread across a phone's CPU and GPU, they note: the CPU handles tracking on the current input frame, while in parallel, the GPU carries out depth estimation and fusion of the previous frame.
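The CPU/GPU split is a classic two-stage pipeline: while frame t is being tracked, frame t-1 is being fused. Here is a minimal Python sketch of that scheduling, with a single worker thread standing in for the GPU; `track` and `fuse` are hypothetical placeholders for the real stages. Because of Python's GIL this shows only the ordering, not genuine parallelism; on a phone, the GPU runs asynchronously of the CPU.

```python
from concurrent.futures import ThreadPoolExecutor


def track(frame):
    """Hypothetical CPU stage: estimate the camera pose for this frame."""
    return {"frame": frame, "pose": f"pose-{frame}"}


def fuse(tracked):
    """Hypothetical GPU stage: depth estimation and fusion of a tracked frame."""
    return f"fused-{tracked['frame']}"


def run_pipeline(frames):
    results = []
    pending = None  # future holding the previous frame's fusion work
    with ThreadPoolExecutor(max_workers=1) as gpu:  # one worker stands in for the GPU
        for frame in frames:
            tracked = track(frame)  # overlaps with fusion of the previous frame
            if pending is not None:
                results.append(pending.result())  # wait for frame t-1 to finish fusing
            pending = gpu.submit(fuse, tracked)  # hand frame t to the "GPU"
        if pending is not None:
            results.append(pending.result())  # drain the last frame
    return results
```

With the two stages overlapped, throughput is set by the slower stage rather than by their sum, which matters when the target is 25 fps, a budget of 40 ms per frame.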

There are other efficiency tricks a-plenty in the scanner. For example, since memory access can be slow, the raytracing is designed so that "all rays are marched simultaneously along an axis-parallel plane, such that only one slice of the voxel grid is accessed at once."
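The slice-by-slice raytracing trick can be sketched in a few lines. This simplified version assumes orthographic rays running parallel to the grid's z axis, one per (y, x) column, so each step of the march reads exactly one z-slice of the voxel grid; the real system casts rays from the camera, so treat this as an illustration of the memory-access pattern only. Each ray records where the stored signed distance first flips from positive (in front of the surface) to negative (behind it). `raycast_slices` is a hypothetical name.

```python
from typing import List, Optional

Grid = List[List[List[float]]]  # signed distances indexed as tsdf[z][y][x]


def raycast_slices(tsdf: Grid) -> List[List[Optional[float]]]:
    """Surface depth per ray, marching all rays together one z-slice at a time.

    Returns the interpolated z of each ray's first positive-to-negative
    crossing, or None where the ray never hits a surface.
    """
    depth = len(tsdf)
    height, width = len(tsdf[0]), len(tsdf[0][0])
    hits: List[List[Optional[float]]] = [[None] * width for _ in range(height)]
    prev = tsdf[0]
    for z in range(1, depth):
        cur = tsdf[z]  # the only new slice read at this step
        for y in range(height):
            for x in range(width):
                if hits[y][x] is None and prev[y][x] > 0 >= cur[y][x]:
                    # Linear interpolation of the zero crossing between z-1 and z.
                    t = prev[y][x] / (prev[y][x] - cur[y][x])
                    hits[y][x] = (z - 1) + t
        prev = cur
    return hits
```

Because each pass of the outer loop touches one contiguous slice, memory reads stay local, which is the point of the trick the researchers describe.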

They say their 3D scanner needs only the camera's standard RGB feed and can operate at 25 frames per second. Given that to-do list, it's a pretty impressive achievement.

Benchmarked against a Kinect, the researchers say their 3D scans have an error of around 1.5 cm. They'd like better resolution, which is "currently limited due to GPU hardware." Also listed as "further work" are handling objects that lack texture or are too glossy, and avoiding color bleeding across voxels.
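The article doesn't say exactly how the 1.5 cm figure was computed. One plausible way to compare a reconstruction with Kinect depth is the mean absolute difference over pixels where both report a valid depth; the sketch below does that, with the function name and the choice of metric as assumptions rather than the researchers' published method.

```python
from typing import Optional, Sequence


def mean_abs_error_cm(estimated_m: Sequence[Optional[float]],
                      reference_m: Sequence[Optional[float]]) -> float:
    """Mean absolute depth difference in centimetres (hypothetical metric).

    Both inputs are flattened depth maps in metres; None marks a pixel
    with no valid depth, and such pixels are skipped.
    """
    if len(estimated_m) != len(reference_m):
        raise ValueError("depth maps must have the same number of pixels")
    diffs = [abs(e - r) for e, r in zip(estimated_m, reference_m)
             if e is not None and r is not None]
    if not diffs:
        raise ValueError("no pixel has a valid depth in both maps")
    return 100.0 * sum(diffs) / len(diffs)  # metres to centimetres
```

For example, estimates of 1.00 m and 2.00 m against reference readings of 1.02 m and 1.99 m give roughly 1.5 cm; a third pixel missing from the estimate is ignored.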

The researchers are also working to test the software on a wider range of phones.

The research will be presented at October's International Symposium on Mixed and Augmented Reality, and while the boffins haven't set a date to release the software, they hope to do so in the future. ®