DSSD bridges access latency gap with NVMe fabric flash magic

Networked flash array with local flash speed

EMC’s DSSD aims to bridge the gaping access latency chasm between networked arrays and PCIe server flash cards with its DSSD array hooked up to servers across an NVMe fabric.

This form of externalised PCIe fabric networking is likely to be the future of all networked all-flash arrays, as it gets rid of existing network access latency.

This is not just EMC talking, as Pure Storage also has NVMe fabrics in its sights.

A joint presentation at the Intel Developer Forum today by Amber Huffman, an Intel senior principal engineer, and Mike Shapiro, DSSD’s co-founder and VP software at EMC/DSSD, will describe – among other things – how this chasm-crossing exercise is being done.

NVMe (Non-Volatile Memory Express) is the standard driver for accessing PCIe flash devices. Huffman is Intel’s lead author for NVMe and Shapiro is the co-author of the NVMe over Fabrics (NVMeF) specification.

The two say:

- NVMe low-latency performance and parallelism are the key to exploiting next-generation media, including 3D NAND and 3DXP next-generation NVM in shared storage, particularly for the high-speed, Big Data analytics apps EMC is focused on

- Extending NVMe over RDMA fabrics retains the simplicity and speed of NVMe while expanding its reach to 1,000s of devices or more disaggregated from 1,000s of servers accessing those devices

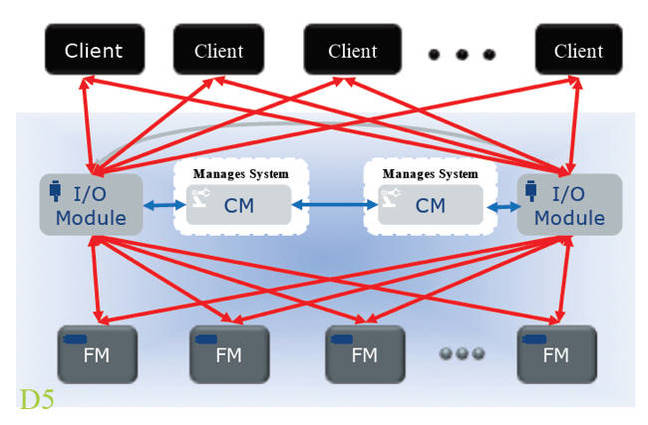

- EMC’s DSSD "D5" system is the first shared-storage NVMe platform built. It uses custom NVMe high-density, hot-plug flash modules, and delivers data using shared, multi-pathed NVMe to a rack of servers over PCIe links

- The DSSD client-facing NVMe is virtualised: all clients perceive they have access to a shared NVMe pool of storage (just like legacy SAN devices virtualising a pool of disks to virtual FC or iSCSI targets)

- The DSSD system has client-side software for user apps to execute NVMe I/O directly from userland, bypassing the OS kernel for the maximum latency reduction possible

- NVMe Fabrics will support any RDMA fabric, including Ethernet (RoCE), InfiniBand, iWARP, OmniFabric and others (potentially including FC). The command protocol and queuing are common and leveraged from NVMe over PCIe
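
The last point, that the command protocol and queuing stay the same whichever transport carries them, can be pictured with a toy model. The Python sketch below is an illustration only: the class names (`QueuePair`, `LocalPCIeTransport`, `FabricTransport`, `Namespace`), the string opcodes and the in-memory block store are all invented for this example and are not taken from the NVMe or NVMeF specifications or from DSSD's software. It shows just one idea: the host fills a submission queue and reaps a completion queue in the same way, and only the delivery step differs.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Command:
    cid: int          # command identifier, echoed back in the completion
    opcode: str       # "read" or "write" (simplified; hypothetical string opcodes)
    lba: int          # logical block address
    data: bytes = b""


@dataclass
class Completion:
    cid: int
    status: int       # 0 means success in this toy model
    data: bytes = b""


class Namespace:
    """Toy in-memory block store standing in for a flash device."""

    def __init__(self):
        self.blocks = {}

    def execute(self, cmd):
        if cmd.opcode == "write":
            self.blocks[cmd.lba] = cmd.data
            return Completion(cmd.cid, 0)
        if cmd.opcode == "read" and cmd.lba in self.blocks:
            return Completion(cmd.cid, 0, self.blocks[cmd.lba])
        return Completion(cmd.cid, 1)  # unknown opcode or unwritten block


class LocalPCIeTransport:
    """Hypothetical stand-in for a device attached directly over PCIe."""

    def __init__(self, namespace):
        self.namespace = namespace

    def deliver(self, cmd):
        return self.namespace.execute(cmd)


class FabricTransport:
    """Hypothetical stand-in for a remote device reached over an RDMA fabric."""

    def __init__(self, namespace):
        self.namespace = namespace
        self.messages_sent = 0

    def deliver(self, cmd):
        # A real fabric would send the command across the network here;
        # this sketch only counts the hop and runs the same command.
        self.messages_sent += 1
        return self.namespace.execute(cmd)


class QueuePair:
    """Submission/completion queue pair, identical for both transports."""

    def __init__(self, transport):
        self.transport = transport
        self.sq = deque()   # submission queue
        self.cq = deque()   # completion queue
        self._next_cid = 0

    def submit(self, opcode, lba, data=b""):
        cid = self._next_cid
        self._next_cid += 1
        self.sq.append(Command(cid, opcode, lba, data))
        return cid

    def process(self):
        # Drain the submission queue in order, posting one completion each.
        while self.sq:
            self.cq.append(self.transport.deliver(self.sq.popleft()))

    def reap(self):
        done = list(self.cq)
        self.cq.clear()
        return done


def run_workload(transport):
    """Issue the same write-then-read sequence over any transport."""
    qp = QueuePair(transport)
    qp.submit("write", 7, b"hello")
    qp.submit("read", 7)
    qp.process()
    return [(c.cid, c.status, c.data) for c in qp.reap()]
```

Calling `run_workload(LocalPCIeTransport(Namespace()))` and `run_workload(FabricTransport(Namespace()))` exercises the same host-side code path in both cases. The model says nothing about latency, multi-pathing or kernel bypass, which are the hard parts in a real system.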

Outline DSSD D5 component linking scheme