
Obama endorses 3D TLC flash. How else can you do exaflop computing?

Disks in large enough numbers are volatile; they break...

Comment New storage technologies are needed for exabyte-munching exaflop supercomputers. The same-old-same-old disk tech used for supercomputers won’t do for the exaflop generation promised by President Obama’s executive order on US supercomputing leadership. This is Obama’s moonshot, the equivalent of President Kennedy’s man-on-the-moon vision.

The order says: “HPC in this context is not just about the speed of the computing device itself.” Data storage and manipulation have to be included too.

High-performance computing “must now assume a broader meaning, encompassing not only flops, but also the ability, for example, to efficiently manipulate vast and rapidly increasing quantities of both numerical and non-numerical data.”

“Big data approaches,” the order continues, “have had a revolutionary impact in both the commercial sector and in scientific discovery. Over the next decade, these systems will manage and analyse data sets of up to one exabyte (10¹⁸ bytes).”

Okay, let’s assume we’re using 10TB drives. One exabyte will need 100,000 of them for the raw data, plus more to protect it: say 15 per cent more with some kind of erasure coding scheme, making 115,000 disks. Each one is an access-latency death-trap, and the collective rack estate will be a power- and cooling-hungry behemoth, with overlapping disk failures and data rebuilds depressingly regular.
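The envelope sum above can be checked with a few lines of Python. This is a minimal sketch under stated assumptions: it uses decimal (SI) units, treats the 15 per cent protection overhead as a flat multiplier, and adds an illustrative annualised failure rate (AFR) of 2 per cent. That AFR is our assumption, not a figure from the order or any drive vendor.

```python
# Back-of-envelope disk count for an exabyte store.
# Assumptions (illustrative, not from the order): decimal units,
# a flat percentage overhead for erasure coding, and a 2% AFR.

EXABYTE = 10**18   # bytes
TERABYTE = 10**12  # bytes


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division, avoiding floating-point rounding."""
    return -(-a // b)


def drives_needed(capacity: int, drive_size: int, overhead_percent: int) -> tuple[int, int]:
    """Return (raw drive count, drive count including protection overhead)."""
    raw = ceil_div(capacity, drive_size)
    protected = ceil_div(raw * (100 + overhead_percent), 100)
    return raw, protected


def expected_failures_per_year(drives: int, afr_percent: float) -> float:
    """Expected drive failures per year at a given annualised failure rate."""
    return drives * afr_percent / 100


if __name__ == "__main__":
    raw, protected = drives_needed(EXABYTE, 10 * TERABYTE, overhead_percent=15)
    per_year = expected_failures_per_year(protected, afr_percent=2.0)  # assumed AFR
    print(f"raw drives: {raw:,}, with protection: {protected:,}")
    print(f"expected failures: {per_year:,.0f}/year, about {per_year / 365:.1f}/day")
```

With those inputs the script reports 100,000 raw drives, 115,000 with protection, and roughly 2,300 failures a year, or about six a day. Rebuilds would then be a daily, and quite possibly overlapping, event; a different AFR changes the numbers but not the shape of the problem.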

DataDirect is already looking at interposing its solid state-fuelled WolfCreek buffer between HPC servers and their disk repositories. Exaflop supercomputing will likely need a fresh approach.

Sitting here in El Reg’s Advanced Storage Lab, equipped with state-of-the-art envelope backs, the answer is obvious. The only feasible storage technology with the right balance of capacity and speed is going to be 3D TLC flash. End of.

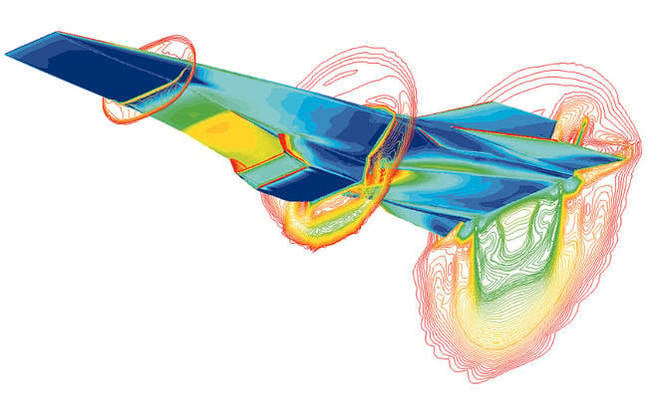

The presidential order mentions aeroplane wing airflow testing using Computational Fluid Dynamics (CFD). It says supercomputers available today can run CFD simulations of airflow to reduce the need for wind tunnel testing, but “current technology can only handle simplified models of the airflow around a wing and under limited flight conditions.”

Exaflop-class supercomputers “could incorporate full modelling of turbulence, as well as more dynamic flight conditions, in their simulations.” They must have fast access to the exabyte-class data sets involved to be able to run their simulations in a reasonable time.

Simulation of the Hyper-X scramjet vehicle flying at Mach 7

The president’s order sets up a US National Strategic Computing Initiative (NSCI). It will drive “forward these two goals of exaflop computing ability and exabyte storage capacity, [and] will also find ways to combine large-scale numerical computing with big data analytics.”

The NSCI has five priorities:

- Create systems that can apply exaflops of computing power to exabytes of data

- Keep the United States at the forefront of HPC capabilities

- Improve HPC application developer productivity

- Make HPC readily available

- Establish hardware technology for future HPC systems

On the last point, the NSCI says: “A comprehensive research program is required to ensure continued improvements in HPC performance beyond the next decade. The Government must sustain fundamental, pre-competitive research on future hardware technology to ensure ongoing improvements in high performance computing.”

Regarding storage, capacity is not the fundamental problem: given enough disk drives, exabyte data sets are feasible. Disk is nonetheless inadequate, because of drive failure rates, the extra drives that countervailing data protection schemes require, and network and drive latency. Although disk storage is non-volatile, disks themselves, in large enough numbers, are volatile: they eventually break, and that’s a huge problem.
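Why sheer numbers turn a reliable component into a volatile system can be shown with a simple probability sketch. Assuming independent failures at a constant rate (a simplification; real failures cluster by batch, age and environment), the chance that at least one of N drives fails within a window is 1 - (1 - p)^N, where p is the per-drive probability for that window. The 2 per cent failure rate and the fleet sizes below are illustrative inputs we have chosen, not measured figures.

```python
import math


def p_at_least_one_failure(num_drives: int, afr: float, days: float = 1.0) -> float:
    """Probability that at least one of num_drives fails within `days`.

    Assumes independent drives with a constant hazard rate; `afr` is
    treated as the yearly hazard (e.g. 0.02), which for small values
    approximates the annualised failure rate.
    """
    # Per-drive failure probability over the window (constant-hazard model).
    p_single = 1.0 - math.exp(-afr * days / 365.0)
    # P(no failures) = (1 - p_single) ** num_drives; take the complement.
    return 1.0 - (1.0 - p_single) ** num_drives


if __name__ == "__main__":
    for n in (1, 1_000, 115_000):  # illustrative fleet sizes
        p = p_at_least_one_failure(n, afr=0.02)  # assumed 2% failure rate
        print(f"{n:>7,} drives: P(>=1 failure in a day) = {p:.4f}")
```

A single drive almost never fails on a given day, but at 115,000 drives the probability comes out above 0.99: on almost any day, something breaks.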

To us simplistic hacks, three requirements seem comparatively bleedin’ obvious.

First, the data has to be as close to the compute resource as possible to reduce interface latency to a minimum.

Secondly, it needs a non-volatile store, but one far less likely to break than disks and with far faster access.

Thirdly, it has to store the data without costing a billion dollars or more to do so.

The answer? Bang the 3D TLC NAND drum with NVMe drumsticks. What other realistic option is there?

With that thought, we’ve filled up the back of our envelope and have to go out and score a new one. ®