
Facebook 'fesses up to running an ideological echo chamber

You live in a filter bubble you make, says Zuck Squad research

Science has published research conducted by two Facebook staffers and one academic from the University of Michigan on “Exposure to ideologically diverse news and opinion on Facebook.”

The research seeks to explore “speculation around the creation of 'echo chambers' (in which individuals are exposed only to information from like-minded individuals) and 'filter bubbles' (in which content is selected by algorithms based on a viewer’s previous behaviours), which are devoid of attitude-challenging content.”

The three were able to access “a large, comprehensive dataset from Facebook” and say it allowed them to do three things, namely:

- Compare the ideological diversity of the broad set of news and opinion shared on Facebook with that shared by individuals’ friend networks;

- Compare this to the subset of stories that appear in individuals’ algorithmically-ranked News Feeds; and

- Observe what information individuals choose to consume, given exposure on News Feed.

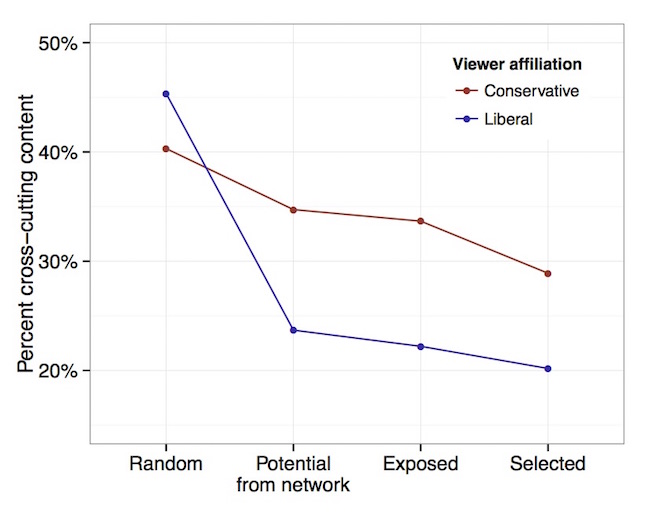

The results, summarised on Facebook, appear to have a bet each way. Your time on Facebook probably does represent a bit of an echo chamber, because you tend to share your friends' political views. The study also considers links to content that doesn't necessarily reflect your world view. This “cross-cutting content”, the study says, would be about 45 per cent of what “liberal” (left-leaning for those beyond the USA) folks find on Facebook and about 40 per cent for conservatives.

When the authors looked only at content shared by users' friends, they found “24 per cent of the hard content shared by liberals’ friends are cross-cutting, compared to 35 per cent for conservatives.”

Then there's what Facebook members actually click on: the study suggests that 20 per cent of content clicked on by liberals is cross-cutting, compared to 29 per cent for conservatives.

Facebook's analysis of how much users are exposed to 'cross-cutting' content that runs contrary to their political views

The wrap on the study suggested “we conclusively establish that on average in the context of Facebook, individual choices more than algorithms limit exposure to attitude-challenging content.”

While Facebook “exposes individuals to at least some ideologically cross-cutting viewpoints”, you can – and do – choose not to pay attention to stuff you don't care for. Any echo chamber is therefore of your own making. And if you don't like that conclusion, why did you bother reading this far? ®