This article is more than 1 year old

Multi-petabyte open sorcery: Spell-binding storage

Not just for academics anymore

Mixing petabytes of data and open-source storage used to be the realm of cash-strapped academic boffins who didn’t mind mucking in with software wizardry.

The need to analyse millions, billions even, of records of business events stored as unstructured information in multi-petabyte class arrays makes ordinary storage seem like child’s play. It also dramatically increases storage costs, so much so that the software needed to manage and access the data becomes hugely expensive too.

This is a whole new ball game so you need to be clear about what technologies you can use, from blindingly fast integrated hardware/software systems at one end of the spectrum to cheap JBODs (just a bunch of disks) and free software at the other.

Free software may cost nothing to get but it has to be implemented, deployed and supported, and costs then start mounting up.

The basic problems are scale and speed. In theory you could add hundreds of shelves to a dual controller to reach petabyte capacity but the two-controller head would be a potential choke point. Millions of IOs could queue up to pass through a controller pair that can handle only hundreds or low thousands of requests per second.

Speed can only be gained by having a faster storage medium, such as flash, or multiplying the controllers so they can operate in parallel.

Having an all-flash, multi-petabyte array would cost as much as the gross national product of a small country so we won’t look at that any further. Therefore it has to be disk – tape being glacially slow compared with disk for the expected random IO requests – and there have to be multiple controllers one way or another.

At first glance the options we have are object storage, with compute (controller) resources per node, a clustered storage array setup like NetApp’s Clustered ONTAP, or a modified file system that can provide multiple access lanes.

Six basic alternatives come to mind:

- Parallel NFS

- IBM Elastic Storage

- DDN and Web Object Scaler (WOS)

- Seagate/Xyratex and Lustre

- Red Hat and Gluster

- Ceph

pNFS, GPFS and WOS

Parallel NFS, properly NFS 4.1, is the classic Network File System updated to provide parallel access. High-performance computing (HPC) supplier Panasas is one proponent of this, and other suppliers such as NetApp have been supportive. A standard is being developed but mainstream adoption has not happened. The verdict here is that pNFS is immature.

IBM’s Elastic Storage is its GPFS (General Parallel File System) rebranded and developed for cloud and style use. It can certainly scale to the capacities needed, and as it is parallel supports concurrent access by many hosts. It is an IBM product with all that means in terms of product quality, support – and lock-in.

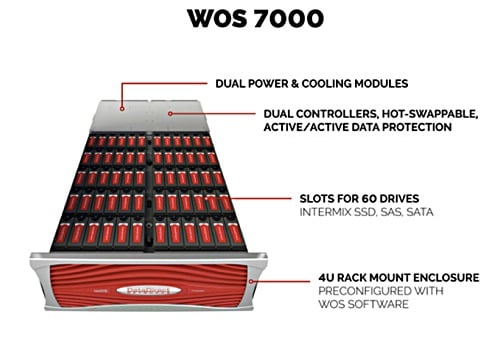

DDN offers multi-petabyte storage systems with integrated hardware and software. These are designed for supercomputer and HPC work and are being extended into high-end enterprise big-data environments.

The vendor has a WOS offering which has recently had a S3 interface added so that it can integrate with Amazon’s storage cloud. With support for more than 5,000 concurrent sessions and more than five billion stored objects in a single global namespace, it certainly has the scale required.

Like IBM’s Elastic Storage it has the deployment support and product quality associated with a vendor-owned product, but also represents potential lock-in and expense.

DDN WOS 7000E

There are other object storage possibilities, such as Amplidata, Caringo, Cleversafe, Scality and others, which can match the scalability of WOS but not necessarily the speed of DDN”s integrated hardware and software.

Gluster and Lustre

To be free of software lock-in you have to look at free or open-source products, which brings us to Lustre, Gluster and Ceph.

Lustre (the name is derived from Linux Cluster) is a parallel access distributed file system used in supercomputing environments. It is openly available, non-proprietary software distributed through a GNU general public licence.

Seagate’s recently acquired Xyratex business supplies ClusterStor arrays running Lustre. That frees you from lock-in to a single software supplier with the product integrated with hardware and supported by a single vendor, such as Cray which OEMs the product for its Sonnexion arrays. Intel also has a Lustre involvement, with a Hadoop connection.

ClusterStor 1500

It has an object store and can use ZFS as a back-end file system. The software supports multi-petabyte storage with accessing clients numbered in the tens of thousands, and more than a TBps of overall I/O throughput. The clients access data in a distributed object store mediated through separate metadata servers.

Businesses needing HPC-style unstructured data access should have it on their shortlist.

Open-source Gluster, promoted these days by Red Hat, is another candidate. Its name is another portmanteau word, derived from GNU and cluster.

It is based on server nodes, each with its own direct-attached storage, or even a SAN. The nodes exist in a single namespace and operate in parallel to provide the speed needed; data replication between nodes provides high availability. Cluster can be deployed fully on Amazon, using its EC2 (Elastic Compute Cloud) instances and EBS (Elastic Block Storage).

Ceph

Ceph, named after cephalopods such as the octopus with its simultaneously active legs, is also open-source software. It presents file, block and object-access storage from a distributed cluster of object storage nodes.

There is no single point of failure; even the component metadata servers are clustered, while replication ensures data availability. Ceph is self-healing, stripes files across nodes for added performance, runs on commodity hardware, and can scale to the exabyte (1,000PB) level. It is designed not to make high demands on system management or customers’ budgets.

Ceph's advantages include its exabyte scale providing capacity headroom and three-way access via files, blocks and objects. It was conceived by Sage Weil at the University of California, Santa Cruz and when Weil graduated in 2007 he set up Inktank (another octopus reference) to deliver Ceph professional services and support.

Red Hat has a Ceph interest as it acquired Inktank in April. In May the sixth major release of Ceph, called Firefly, arrived, adding erasure coding and other features. Ceph software is also part of the OpenStack project through which IT organisations can build private and public cloud environments

Ceph has a CRUSH algorithm (controlled replication under scalable hashing) which determines how data is stored and retrieved. It works with a pseudo-random and uniform (weighted) distribution and is able to establish a homogeneous allocation of data between all available disks and nodes.

Adding new devices (HDDs or nodes) has no negative impact on data mapping, and new drives and nodes can be added without creating access bottlenecks or hot spots, making system management that much easier.

This is a simplified account. Any Ceph, Gluster or Lustre deployment is highly complex and deserves deeper study to decide which is best suited to your needs, whether you are an OpenStack user, cloud, IT and telecommunication service provider, media broadcasting business, financial or public-sector organisation with growing digital document stores, or a high-end business with analytics/big-data applications.

Open-source choices

With open-source software such users can implement systems themselves using separately bought hardware, servers and storage, for example, and meshing software and hardware components together with support from a knowledgable IT department.

There is no lock-in at all in this case but the system deployment, management and support burden is clearly at the upper end of the scale.

Alternatively, a manufacturer can integrate hardware and software and supply finished systems, as well as support them. The basic use of Ceph means that at any point the umbilical cord can be cut and you can turn to a substitute vendor or choose full self-service instead.

Fujitsu’s ETERNUS CD10000 is such a manufacturer’s product. It is based on storage nodes with enterprise-class x86 servers, and supports up to 224 storage nodes in 19-inch standard racks to reach a capacity of up to 56 petabytes – not as much as Ceph’s 1EB possibility but certainly in the tens of petabytes area.

The CD10000 has nodes which can roughly be seen as storage arrays, with access to their contents referenced through metadata servers under the control of a central management system.

The nodes have a high-speed interconnection network, 40Gbps InfiniBand. It is used for a fast distribution of data between nodes and for a fast rebuild of data redundancy after hardware failures.

There are three kinds of storage node. The first is a basic node with 12.6TB of raw storage from 16 900GB 2.5in 10,000rpm SAS disk drives, abetted by a PCIe solid-state drive (SSD), both driven by node software running in two Intel Xeon processors and 128GB RAM.

Usable capacity depends upon the number of data replicas needed, for example two or three. There are two 40Gbps InfiniBand links to other nodes plus two 10GbitE front-end access links.

The SSDs are used for fast access of journal and metadata and they also function as a data cache, which avoids latency in the distributed storage architecture.

Customers can have higher-capacity nodes and even higher performance nodes.

A storage capacity node uses 60 medium-speed 3.5in 7,200rpm SATA disk drives plus 14 900GB SAS drives to provide 252.6TB of raw capacity. Faster storage performance nodes are chosen where data access is a priority. They have a mix of faster 2.5in 10,000rpm SAS disks and two even faster PCIe SSDs to provide 34.2TB of raw storage in total.

DIY or a contracted alternative

Fujitsu provides maintenance and support for the whole system, hardware and software, with upgrades and service packs for post-purchase development. This offers a middle way between do-it-all-yourself, separately sourced hardware and open-source software on the one hand, and the proprietary, integrated hardware and software approach on the other.

Ceph, Lustre and Gluster all free you from software lock-in and let you move off a hardware platform, since they use commodity components such as X86 servers, but still provide the one-throat-to-choke full service support that will suit customers not wishing or able to do everything themselves.

Lustre and Gluster have their file access strengths plus the object back-end advantages of being scalable and self-healing. Ceph has those advantages too plus scale to the exabyte level, and it provides file, block and object access.

To get the benefits of open source you have to bind yourself to a single storage software choice. After that you can decide on the full do-it-all-yourself approach or pay a supplier to hold your hand and provide the same kind of deployment and support as you would get with proprietary hardware and software such as IBM’s Elastic Storage or DDN’s WOS.

You need skilled wizardry to build multi-petabyte storage storage repositories on open-source software. Employ them directly or access them through a supplier: it’s your choice. ®