
Analytics pusher Interana: Why tweak your seeks when you can scan your DRAM?

Intros high-speed in-memory tool

Startup Interana has an analytics tool that processes data in scans rather than seeks, claiming it’s designed to be great at analysing time-based streams of event data.

The software is a proprietary database that organises data by time and user to allow for single-pass queries with no intermediate sorts.
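To see why that layout matters, here is a minimal sketch, not Interana's actual code, of a funnel query over events that are already stored in (user, time) order. The event tuples, the `funnel_counts` helper and the step names are all hypothetical, chosen only for illustration. Because each user's events arrive together and in time order, the query needs one pass and no sort.

```python
from itertools import groupby
from operator import itemgetter

# Hypothetical event log, assumed to be stored already ordered by (user, time).
events = [
    ("alice", 1, "view"),
    ("alice", 2, "add_to_cart"),
    ("alice", 5, "purchase"),
    ("bob", 1, "view"),
    ("bob", 3, "view"),
    ("carol", 2, "view"),
    ("carol", 4, "add_to_cart"),
]

def funnel_counts(sorted_events, steps):
    """Count how many users reach each funnel step, in a single pass."""
    reached = [0] * len(steps)
    # groupby only works here because each user's events are contiguous.
    for _user, user_events in groupby(sorted_events, key=itemgetter(0)):
        next_step = 0
        for _u, _ts, name in user_events:
            # Events are in time order, so steps can be matched as they stream by.
            if next_step < len(steps) and name == steps[next_step]:
                reached[next_step] += 1
                next_step += 1
    return reached

print(funnel_counts(events, ["view", "add_to_cart", "purchase"]))  # [3, 2, 1]
```

Had the events been stored in arrival order instead, the same query would first need a sort or a per-user hash table, which is the intermediate step the time-and-user layout is meant to avoid.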

It runs on clustered x86 servers and uses DRAM for working set data, with intermediate flash and a disk-based backing store from which data is streamed. This means the I/O is sequential rather than the slower, seek-based, random kind.

The data consists of events, billions of sequences of them, from sources such as clickstreams, call detail records, transactions and sensor data. The software allows users to ask questions of that data and see the answers in seconds.

Because of its claimed speed, Interana says its software engine is better suited than existing analytics tools for analysing customer growth, retention, conversion and engagement with service suppliers, such as mobile telcos, social, eCommerce, media and entertainment, gaming and SaaS companies.

Interana claims “trying to analyse event data with existing tools is incredibly complex; it requires extensive programming skills, expensive systems, and a lot of time.”

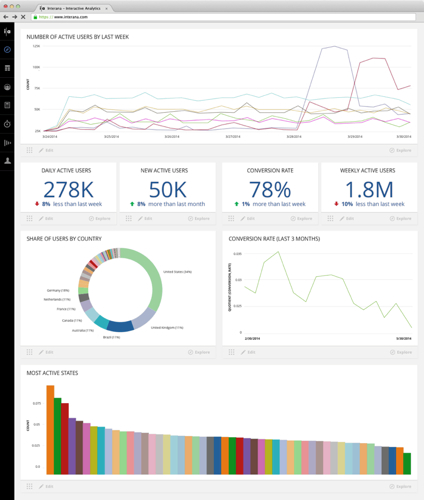

It claims that the software’s speed, with analysis runs completing in “seconds”, is complemented by a GUI that “makes data analysis a natural extension of everyone’s workflow.”

CTO Bobby Johnson said:

- Interana would not be considered an In-Memory Database. We are a full-stack event-based analytics solution that allows companies to understand how customers behave and products are used. We do this by analysing event data. The solution is comprised of a storage backend (a highly compressed column store, append only and lock free), an analytics engine, and a visual front end. Because of this, we are able to achieve speeds that often outperform in-memory systems. We have been benchmarked at 100,000,000 rows per core per second.

- We are a horizontally scalable solution across commodity hardware. New nodes can be added to the cluster to increase both compute and storage.

- Since we do scale horizontally on commodity hardware, linear scalability can be achieved as new nodes are added.

- Interana uses Memory, SSD (flash), and disk. We aggressively cache using a multi tier strategy. A lot of systems are all in-memory, but because we are operating directly on massive data sets, we utilise memory for the working data, but can also access data that isn't currently in memory with amazing speed.

- Disk latency is just that, but since we are just streaming instead of seeking from disk, we achieve better performance. Data that is stored on disk is read sequentially (versus seeking or random reads or writes) and is highly compressed, so latency is dramatically reduced. This is the tradeoff we made for being a dedicated analytics system vs transactional.
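Johnson does not say which compression scheme the column store uses. As a rough illustration of why a compressed, sequentially read column can be quick to scan, here is a sketch that assumes simple run-length encoding, which is our choice for the example and not something Interana has confirmed. The helper names `rle_encode` and `count_matching` are hypothetical.

```python
def rle_encode(values):
    """Run-length encode a column into [value, run_length] pairs."""
    runs = []
    for v in values:
        if runs and runs[-1][0] == v:
            runs[-1][1] += 1  # extend the current run
        else:
            runs.append([v, 1])  # start a new run
    return runs

def count_matching(runs, target):
    """Count rows equal to target by walking the runs front to back."""
    # One sequential pass over the compressed runs; no row is decompressed.
    return sum(length for value, length in runs if value == target)

# Hypothetical "event type" column of 10 rows.
column = ["view"] * 4 + ["purchase"] * 2 + ["view"] * 3 + ["purchase"]
runs = rle_encode(column)

print(len(column), "rows stored as", len(runs), "runs")  # 10 rows stored as 4 runs
print(count_matching(runs, "purchase"))                  # 3
```

The scan touches four runs instead of ten rows and reads them strictly in order, so the less there is to stream from disk, the less the disk's latency matters.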

Interana Dashboard

The speed, 100 million rows per core per second, enables “large and complex queries to be done at run-time rather than in offline ETL (Extract, Transform, Load) jobs.” The GUI has a visual query builder that features graphical feedback and type-aheads for field names and values.
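For a sense of scale, here is a back-of-the-envelope calculation using the quoted rate. The data set size and core count are hypothetical, and the sum assumes perfectly linear scaling across cores with every query touching every row, which a real cluster is unlikely to match exactly.

```python
ROWS_PER_CORE_PER_SECOND = 100_000_000  # benchmark figure quoted by Interana

def scan_seconds(rows, cores):
    """Idealised full-scan time, assuming perfect linear scaling across cores."""
    return rows / (ROWS_PER_CORE_PER_SECOND * cores)

# Hypothetical workload: 10 billion events scanned by 32 cores.
print(scan_seconds(10_000_000_000, 32))  # 3.125
```

On those assumptions a full pass over 10 billion rows takes a little over three seconds, which is consistent with the "answers in seconds" pitch.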

The company was founded by CEO Ann Johnson, a former Intel product manager, and her husband and ex-Facebook exec Bobby Johnson, the CTO, along with another ex-Facebook engineer, Lior Abraham. Abraham crafted SCUBA, an internal Facebook analytics tool, used actively by more than half the company every month. Bobby Johnson, we’re told, “was responsible for the infrastructure engineering team that scaled Facebook during its heaviest growth years from 2006-2012.”

Interana had an $8.2m A round of funding in early 2013, with investors including Battery Ventures, Data Collective, SV Angel, Fuel Capital and YCombinator. The software has been in private beta for six months with customers including Sony, Jive, Asana, Tinder and Orange Silicon Valley. Contact Interana, which has offices in Menlo Park and New York, via its website to find out more. ®