
ViPR 2.0 sinks EMC's fangs DEEPER into software-defined storage

Even existing Hopkinton hardware units may fear its bite

EMC has added a few sharp fangs to its ViPR product: file services; integration of ScaleIO’s block storage; support for OpenStack and therefore OpenStack-supporting arrays; native HDS array compatibility; and the ability to run on HP’s surprise commodity server hit SL4540.

ViPR 2.0 completes the basic promises made by EMC about ViPR’s scope when this all-singing, all-dancing software storage abstraction layer product was first announced back in September last year... and we can now expect to hear a lot about it.

In El Reg's view, ViPR represents a way for EMC to transition storage value from hardware arrays and controllers to a software storage domain – and so retain the ability to price its storage services independently of proprietary hardware, while making them deployable on commodity and third-party hardware.

The ECS cloud appliance is a case in point.

What's in the box?

Stifel Nicolaus MD Aaron Rakers notes a Joe Tucci session comment, saying that EMC’s strategy around ViPR will provide the most opportunity from a revenue perspective.

ViPR 2.0 gets:

- Geo-dispersed management so ViPR can manage multiple data centres

- Geo-replication and geo-distribution to protect against data centre failures, with active:active functionality

- Chargeback facilities

- Block storage data service via ScaleIO server SAN, which will become a critical ViPR component, added to its object, HDFS and file data services

- vBlock support as a target array

- HDS support as a target array

- Commodity drive support

- Seamless integration with Centera and support for the CAS API

- HP SL4540 support - because EMC customers requested it

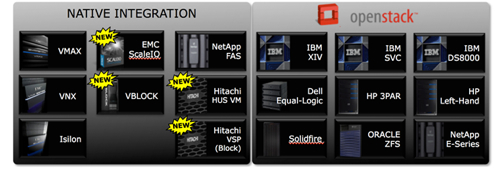

- Third-party array support via OpenStack Cinder plug-in, such as Dell, HP and IBM

- VPLEX and RecoverPoint management

- IPv6 support

ViPR v2.0 array support (Source Chad Sakac)

EMC's virtual geek storage blogger Chad Sakac says: "The storage engine ensures you have local copies for doing multiple node/disk reconstruction locally, coupled with geo-dispersed erasure coding for full site failure survivability [giving] you a great overall utilisation (1.8x with four sites)."
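Sakac's 1.8x figure can be sanity-checked with some back-of-the-envelope arithmetic. The sketch below is not EMC's published method: it assumes a local erasure-coding scheme of 12 data plus 4 coding fragments, and a geo layer that costs an extra factor of N/(N-1) across N sites. Both parameters, and the function names, are illustrative assumptions rather than anything stated in the quote.

```python
from fractions import Fraction


def local_overhead(data_fragments: int, coding_fragments: int) -> Fraction:
    """Raw-to-usable ratio for erasure coding within one site."""
    return Fraction(data_fragments + coding_fragments, data_fragments)


def geo_overhead(sites: int, data_fragments: int = 12,
                 coding_fragments: int = 4) -> Fraction:
    """Overall raw-to-usable ratio across `sites` data centres.

    Assumption (not from the article): geo protection multiplies the
    local overhead by N/(N-1), i.e. one site's worth of redundancy is
    spread over the remaining sites. A single site has no geo layer.
    """
    if sites < 1:
        raise ValueError("sites must be at least 1")
    local = local_overhead(data_fragments, coding_fragments)
    if sites == 1:
        return local
    return local * Fraction(sites, sites - 1)


if __name__ == "__main__":
    for n in (2, 3, 4):
        # Print the exact fraction alongside a rounded decimal value.
        print(n, geo_overhead(n), round(float(geo_overhead(n)), 2))
```

Under those assumptions four sites work out to 16/9, or roughly 1.78x raw capacity per usable byte, which is consistent with the quoted 1.8x. The same model gives 2x for three sites, so the utilisation improves as more sites are added.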

The natively supported set of third-party arrays will likely be extended. Such support is deeper and better than indirect support via Cinder. HIPAA compliance is being worked on. NAS is coming in the near future and will be provided on top of object storage.

ViPR controllers in different data centres can talk to one another and single sign-on is offered. ScaleIO will continue to be available as stand-alone software and ViPR block storage is just the storage part of ScaleIO.

We understand that if you want a fully converged compute and storage system within EMC (as distinct from VMware) then ScaleIO can be used for this, with servers’ directly attached storage aggregated into a SAN. A set of 48 such servers would only need 20 per cent of their cycles for storage – with 80 per cent left over for running applications. How this compares to VMware’s VSAN is not clear, but it looks like direct competition.

ViPR provides EMC with a route to having its storage array software functionality use commodity hardware instead of dedicated array hardware, like VNX.

The Storage Resource Management (SRM) offering has been upgraded to v3.0 and provides charge-back integration, end-to-end topology views and more integration with VPLEX. Previously, when VPLEX front-ended arrays in a ViPR environment, SRM couldn’t "see" the arrays behind VPLEX. Now it can.

EMC's Service Assurance Suite (SAS) has been refreshed to v9.3 and has software-defined networking support – VMware’s NSX for example – plus some mobile network support. It is reportedly used by some telco providers.