Techies cook up Minority Report-style video calling

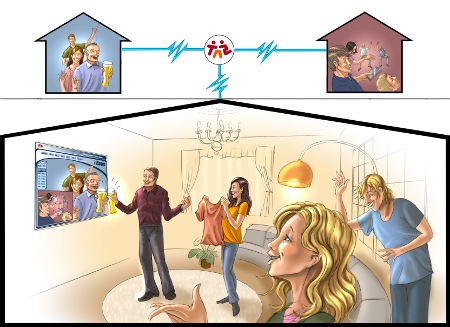

Electronic director cuts to most 'interesting' member during group calls

Boffins at BT's Adastral Park have been looking at video calling, trying to work out why we persist with voice and how video calling might get good enough for the whole family to enjoy.

The project was called Together Anywhere, Together Anytime, or TA2 (pronounced "tattoo"). It comprised four years of study, winding up in March this year, but there's now a follow-up project, and research continues into what works in video and why so little of our interaction transfers to the screen.

Adastral Park isn't what it once was, when everyone from the cleaners to the canteen staff was on the BT payroll and academic research was pursued over the long term. These days the shareholders like to see a return on investment within 18 months, and most of Adastral is dedicated to testing and verification of systems, with more than 10 per cent of the campus rented out under the "Innovation Martlesham" brand. However, some research does continue, even if BT isn't paying for it any more.

TA2 brought together 12 organisations that had pitched for, and won, an EU grant worth €18m to study how people communicate over video connections. BT was the project leader, and much of the work was done at the Adastral site, but other partners included Goldsmiths College, Alcatel-Lucent and Fraunhofer, not to mention board-game maker Ravensburger and half a dozen others. The aim was to work out how families might interact over video connections and how video calling itself might be improved.

The most quantifiable result of that research is a video system which incorporates an electronic director that picks and cuts between shots as a human director would, based on analysis of the incoming audio and video. The team has a demonstration rig set up at Goldsmiths and invited us along to see it in action.

The setting is a little contrived: three rooms, each containing two people seated in specific locations, with three unmoving cameras in each room, allowing a headshot of each person and one two-shot per room. Users never see themselves on screen, so for any given viewer the system has six views to pick from (the three cameras in each of the other two rooms), but it's the mechanism used to choose between them that gets interesting.

Rather than just focusing on the person who's speaking, as Google Hangouts does, the TA2 software analyses the audio and video to work out what the viewer would be interested in seeing. Directional microphones are used to associate each voice with a person, and the virtual director intersperses shots of whoever is speaking with cuts to the person they're looking at, to catch reactions. Should everyone be focused on one person then that person clearly needs to be on screen, even if they're not speaking, and anyone entering or leaving the room has to be featured too, just as they would attract attention in the real world.

The rules are scripted in Drools, a rules engine more usually associated with financial or business processes, but one suited to the project as it allows quick tweaking based on user feedback.
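TA2's actual Drools rules aren't reproduced here, but the behaviour described above can be illustrated with a minimal Python sketch. It assumes the analysis stage already reports who is speaking, who is looking at whom and who has just come or gone; the room layout, the names, `SceneState`, `choose_shot` and the rule ordering are all hypothetical, not TA2's code. Rules are tried in priority order and the first one proposing a shot the viewer is allowed to see wins, loosely mirroring how a rules engine such as Drools ranks competing rules by priority (its "salience" attribute).

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical layout modelled on the demo: three rooms, two seated people
# per room, three fixed cameras per room (two headshots plus one two-shot).
ROOMS: dict[str, tuple[str, str]] = {
    "A": ("alice", "arjun"),
    "B": ("bea", "bilal"),
    "C": ("chen", "cara"),
}
EVERYONE = [p for people in ROOMS.values() for p in people]


@dataclass(frozen=True)
class Shot:
    room: str
    subject: str  # a person's name for a headshot, or "two-shot"


def room_of(person: str) -> str:
    for room, people in ROOMS.items():
        if person in people:
            return room
    raise KeyError(person)


def candidate_shots(viewer_room: str) -> set[Shot]:
    """Shots a viewer may see: nine cameras minus the three in their own room."""
    shots: set[Shot] = set()
    for room, people in ROOMS.items():
        if room == viewer_room:
            continue  # users never see themselves on screen
        shots.update(Shot(room, p) for p in people)
        shots.add(Shot(room, "two-shot"))
    return shots


@dataclass
class SceneState:
    """Hypothetical output of the audio/video analysis stage."""
    speaker: Optional[str] = None  # attributed via directional microphones
    gaze: dict[str, str] = field(default_factory=dict)  # who looks at whom
    movers: list[str] = field(default_factory=list)  # people entering/leaving
    reaction_due: bool = False  # hypothetical flag: time for a reaction cut


def consensus_target(gaze: dict[str, str]) -> Optional[str]:
    """Return the person everyone else is looking at, if there is one."""
    for target in EVERYONE:
        onlookers = [p for p in EVERYONE if p != target]
        if all(gaze.get(p) == target for p in onlookers):
            return target
    return None


def choose_shot(state: SceneState, viewer_room: str) -> Optional[Shot]:
    """Try rules in priority order; the first visible proposal wins."""
    proposals: list[Shot] = []
    # 1. Entering or leaving attracts attention: show that room's two-shot.
    proposals += [Shot(room_of(p), "two-shot") for p in state.movers]
    # 2. If everyone is focused on one person, show them even if silent.
    target = consensus_target(state.gaze)
    if target:
        proposals.append(Shot(room_of(target), target))
    # 3. Intersperse the speaker with a cut to whoever they are looking at.
    if state.speaker and state.reaction_due and state.speaker in state.gaze:
        listener = state.gaze[state.speaker]
        proposals.append(Shot(room_of(listener), listener))
    # 4. Otherwise, show the speaker.
    if state.speaker:
        proposals.append(Shot(room_of(state.speaker), state.speaker))
    visible = candidate_shots(viewer_room)
    return next((s for s in proposals if s in visible), None)


state = SceneState(speaker="bea", gaze={"bea": "chen"}, reaction_due=True)
print(choose_shot(state, viewer_room="A"))  # Shot(room='C', subject='chen')
print(choose_shot(state, viewer_room="C"))  # Shot(room='B', subject='bea')
```

The viewer in room C can't be shown their own room, so the reaction shot of chen is skipped and the director falls back to the speaker. Keeping each rule as a small, separately ordered check is what makes this kind of director easy to retune, which is the property the team valued in Drools.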

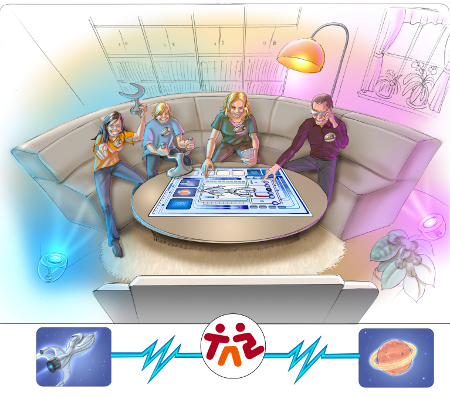

The whole thing runs on a dozen PCs: one for each of the nine cameras, one to sort out the audio, one to perform the analysis and one to pick the shots. The result is performance that belies the complexity. Sitting on a sofa playing Articulate (a word game), one quickly forgets about the technology, as the cuts seem entirely natural. While it isn't the same as sharing a room with the other players, it does seem just as good, and entirely unlike any other video-conferencing system this hack has used.

The current system uses fixed cameras and requires users to sit in specific locations, but the software is extensible and should work with moving targets. The rules can also be tweaked endlessly, perhaps even customised by the eventual user, though the gains already achieved are so great that further refinement may yield only limited improvement.

Not that everything tried by the TA2 team has been so successful. Musical collaboration over video links was entirely undermined by latency, no matter how much the team reduced it. That's unsurprising: music specialists Autograph still use CRT monitors because LCD latency is too high for a remote conductor. Music teachers, meanwhile, were eager to try multiple cameras trained on their pupils, but in practice they never used them.

Quite what the TA2 system is for isn't clear, which is rather the point of academic research. Some of the companies involved have been busy squirreling away patents based on their contribution to the project, but this kind of EU funding is supposed to help European companies as well as contribute to the global bank of knowledge.

TA2's findings have been written up in a book, but the project also enabled the researchers to ask questions about how video conferencing will work in the future, and how machine intelligence can make it better. The next project, VConect (Video Communication for Networked Communities), has only a quarter of the budget and is being led by Goldsmiths, but will take the work into dynamic groups, which will need moving cameras and better direction.

Video conferencing has been around for decades, and these days it's as good as free, so it's fair to ask why we don't make more use of it. Such questions don't always have commercially useful answers, but it's interesting to know that if you want to play Articulate with someone in a different room, then a dozen computers and four years of development can make that work. ®