Calxeda ramping up ARM server boards

Benchmarks against x86 iron need some work

Updated Calxeda, the ARM server-chip upstart that HP tapped for its "Redstone" hyperscale servers last November, is getting ready to ramp up production on the server cards that use its quad-core EnergyCore ARM processors, and is making waves with benchmarks while promising to do a better job with comparative testing against x86 architectures.

The EnergyCore ECX-1000 processors were announced on the same day as the Redstone servers – which HP was very clear at the time were experimental machines, part of its "Project Moonshot" effort to revamp its server lineup.

We went through the feeds and speeds of the ECX-1000 processors back then, but at a high level, these processors are four-core, 32-bit ARMv7 variants etched in a 40-nanometer process by foundry partner Taiwan Semiconductor Manufacturing Corp.

Each Cortex-A9 core on the ECX-1000 runs at 1.1GHz or 1.4GHz, and the chip includes four PCI-Express 2.0 peripheral controllers, a DDR3 memory controller, and a SATA 2.0 disk controller on the die, as well as an integrated Layer 2 distributed fabric switch and related Ethernet ports that hang off of it.

The ECX-1000 is a very sophisticated design, and demonstrates why Intel has been so keen on acquiring Ethernet (from Fulcrum Microsystems), InfiniBand (from QLogic), and Aries supercomputer (from Cray) interconnect expertise and intellectual property, and why AMD picked up SeaMicro, as well. Hint: it wasn't to be a server maker, but to get control of the "Freedom" torus interconnect and related ASICs that link a bunch of Atoms or Xeons into a fabric.

Karl Freund, Calxeda's vice president of marketing, is making the rounds with the analyst and press community in the wake of HP's announcement last month of its next-generation "Gemini" Moonshot servers, based on Intel's future "Centerton" dual-core Atom server processors.

Freund tells El Reg that Calxeda has shipped "full function and full performance prototypes" of systems based on its four-socket system boards to OEM customers and several operating system makers, and that it has two beta sites – Sandia National Laboratories and the Massachusetts Institute of Technology – putting machines through their paces. The plan is to get shipments of server boards to early customers this month. HP, for its part, gathered up hundreds of sales leads from customers interested in the boxes at its recent Discover 2012 event in Las Vegas.

"The silicon is fine," says Freund, when asked about what is taking so long to get the EXC-1000s to market. The problem is that Calxeda has to start out small, with local "hand crafting" of the system boards by OEMs and final assembly of systems in its own facility in Austin, Texas.

In the typical Catch-22 situation, Calxeda needs to have a pipeline of customers before it can ramp to outside – and higher volume – manufacturing, and it can't get more customers until it has more iron.

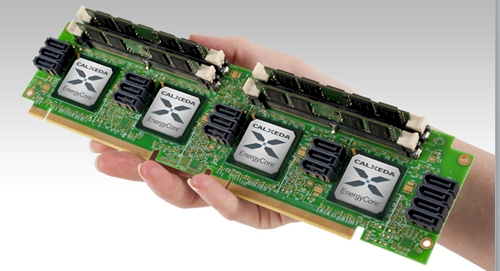

Calxeda's four-socket ECX-1000 server node

The plan is for the current four-socket server card, which plugs into two PCI-Express 2.0 motherboard slots to get power and to link to other nodes in a cluster, to be this year's main product. But starting in the fourth quarter of this year, Calxeda will have all of the documentation for the ECX-1000 chip ready for use by OEM customers, and will provide the system-on-chip to them separate from boards.

Early next year, it seems likely that Calxeda will also offer single-socket and two-socket variants of the system boards. "We will need to diversify and differentiate," says Freund, "and more importantly, we need to allow our partners to differentiate because otherwise all they have is price."

It's not surprising that Intel wanted to have the Gemini server announcement all to itself with HP, or that HP reiterated that Redstone was a development platform while Gemini would be a production platform. This is ever the way in the systems racket. But Calxeda insists that Redstone is a real server, not just a toy HP helped create as a PR stunt, and that real customers want to get them. And Calxeda hints that they're not some one-off for HP, either.

"We are committed to working with HP and continuing the relationship," Freund said, and could not confirm that HP would be tapping EnergyCore chips as engines in the Gemini machines. It stands to reason that HP will, of course, but HP will not confirm this.

Calxeda is also working with Dell, says Freund, so it was surprising that its fellow Texan opted for Marvell's Armada XP 78460 chip, also an ARMv7 variant but one with 40-bit addressing, for its "Copper" ARM-based microservers, which were announced at the end of May.

Freund can't talk about Calxeda's relationship with Dell, but given how server makers all like being first or being treated the same when they can't be first, it's no surprise that Dell's nose might have been a little out of joint last November when Calxeda tied its chip launch to HP's Moonshot launch. Dell almost has to use a different chip in its first ARM server, just to show Calxeda who is boss.

(Intel is. At least for now. And maybe for another decade if the ARM collective can't quickly get its server act together and sustain its low-power advantages.)

Yelling Geronimo with Apache Bench tests

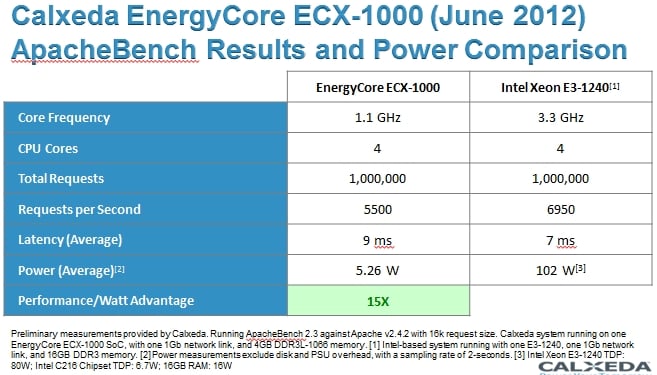

With HP talking about Centerton Atoms with a 6-watt thermal design point, and Dell talking about its Marvell Armada microservers, Calxeda had to do something to make some noise, so it ran the Apache Bench web server test on one of its machines as well as on a Xeon E3 box.

The comparison was flawed, but useful nonetheless – particularly in the absence of any data about how ARM servers perform running real workloads.

Calxeda took a quad-socket server node and ran the Apache Bench V2.3 test on Linux, with Apache as the web server. It then normalized the results for a single socket with four ARM cores. That socket fielded 1 million total web requests over the course of the test, handling 5,500 requests per second with an average latency of 9 milliseconds; the node, including its 4GB low-voltage memory and a Gigabit Ethernet link, consumed 5.26 watts measured at the wall. Power measurements excluded disks and power supply overhead.

Calxeda compared this against a single-socket server using a Xeon E3-1240 processor from the "Sandy Bridge" generation, which came out more than a year ago but which is still current in the market.

The E3-1240 microserver had one Gigabit Ethernet port and 16GB of DDR3 memory. Calxeda did not measure the wall power for the Xeon machine, but instead used thermal design point (TDP) ratings for the Xeon E3 (80 watts) and the "Cougar Point" C216 chipset (6.7 watts), plus the 16 watts of juice at which the memory manufacturer rated the memory sticks. That's 102.7 watts total – not a fair comparison because the measured power during the Apache Bench test is certainly lower.

The other problem is that the Xeon E3-1240 system is not CPU constrained, which you cannot see from this table. According to Freund, the Xeon chip was only spinning at 14 per cent of total CPU capacity running the Apache Bench test, compared to nearly full usage on the ECX-1000 processor. The Xeon E3-1240 spins at 3.3GHz and handled 1 million total requests during its run at a rate of 6,950 requests per second. But that ceiling was set by its saturated network card, not by any shortage of CPU power.
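For those who want to check the sums, here is a minimal Python sketch of the performance-per-watt comparison using only the figures quoted above. The variable names are ours, and remember that the Xeon power figure is a sum of TDP ratings rather than a wall measurement, which is exactly the flaw in the comparison.

```python
# Figures as quoted in the article. Variable names are illustrative only.
arm_rps = 5_500        # requests per second, one ECX-1000 socket
arm_watts = 5.26       # measured power for the ARM node

xeon_rps = 6_950       # requests per second, Xeon E3-1240 (network bound)
# Not measured: CPU TDP + chipset TDP + memory rating, per Calxeda's method.
xeon_watts = 80 + 6.7 + 16

arm_eff = arm_rps / arm_watts      # requests per second per watt
xeon_eff = xeon_rps / xeon_watts
advantage = arm_eff / xeon_eff

print(f"ARM:  {arm_eff:.0f} req/s per watt")
print(f"Xeon: {xeon_eff:.0f} req/s per watt (TDP-based, overstates power)")
print(f"ARM advantage: {advantage:.1f}X")
```

On those inputs the ratio lands at a bit over 15X, but swap in a lower, measured wattage for the Xeon box and the gap narrows accordingly.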

Let's get out our ballpoints and drinks napkins, lads. You could add a fatter 10 Gigabit Ethernet card to the machine, of course, and maybe push somewhere between 5X and 7X more requests per second through the Xeon E3 box. You could also move to the brand new Xeon E3-1200 v2 singlers using the "Ivy Bridge" cores and use a low-voltage version like the four-core E3-1265L v2, which is rated at 45 watts, and that is with on-chip HD Graphics. You could disable the HD graphics, toss in low-voltage memory, and push up utilization, and with a 10GE port maybe drive 25,000 requests per second and maybe get that TDP down to 35 watts.

These are total guesses on the part of El Reg, by the way.

Add in the chipset and memory-stick overhead, and you are at somewhere around 58 watts TDP. Wall power consumed to run the test could be anywhere, but let's assume it is sweating a bit and such a server would burn 50 watts for that workload. Basically, this hypothetical Xeon E3 setup would do maybe five times the work and burn ten times the juice. That's a factor of two advantage for the ARM server chip – a far cry from the 15X difference in the table above.
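The napkin math can be sketched in a few lines of Python. Every Xeon number here is one of our guesses from the paragraphs above (25,000 requests per second, a 35 watt CPU, 50 watts at the wall), not a measurement, and the variable names are ours.

```python
# Measured ARM figures from Calxeda's test.
arm_rps = 5_500
arm_watts = 5.26

# Hypothetical tuned Xeon E3 box: all guesses, as stated in the article.
napkin_rps = 25_000              # guessed throughput with a 10GE port
napkin_tdp = 35 + 6.7 + 16       # guessed CPU TDP + chipset + memory sticks
napkin_wall = 50                 # assumed wall power under this workload

work_ratio = napkin_rps / arm_rps       # how much more work the Xeon does
power_ratio = napkin_wall / arm_watts   # how much more juice it burns
arm_advantage = power_ratio / work_ratio

print(f"TDP estimate: {napkin_tdp:.1f} watts")
print(f"Work: {work_ratio:.1f}X, power: {power_ratio:.1f}X")
print(f"ARM perf-per-watt advantage: {arm_advantage:.1f}X")
```

Change any of the guesses and the answer moves with it, which is rather the point: the result is only as good as the inputs.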

Given the criticism that the company is receiving from this comparison, Calxeda is now looking at using a third-party benchmarking service to test the mettle of its metal, and Freund says Calxeda will be instructing them to do "optimized versus optimized" comparisons between ARM and x86 iron.

The Xeon is not the real target for the ECX-1000, anyway – Intel's Centerton Atom is, at least for the hyperscale workloads that Tilera, Calxeda, and SeaMicro are focused on. "We're anxious to do our tests around the Centerton Atom as soon as we can get one," says Freund. "But it is not going to ship any time soon."

The Centerton chip is expected to ship to OEM customers by the end of the year.

Update: Calxeda took exception to our drinks napkin approach to getting a Xeon E3 to pump out more work on the Apache Bench test.

For one thing, says Freund, Calxeda chose to benchmark the ECX-1000 against the Xeon E3-1240 because these are the processors that HP used to justify its creation of the Redstone servers in the first place. That was the machinery HP used to come up with the Redstone metrics, which showed that a cluster of Calxeda servers running I/O constrained workloads would consume 89 per cent less energy, cost 63 per cent less, and take up one-twentieth of the space of x86-based servers. The basic assumption in those numbers is that the x86 is not running at anywhere near peak, as is the case in most data centers of the world most of the time. Freund says HP's initial calculations showed an 8X to 10X better performance per watt generically running such I/O-bound workloads, and on this test at least, Calxeda was showing a factor of 15X. (But as we point out above, Calxeda is comparing measured power on its machines to peak power on the Intel box.)

More significantly, Calxeda says that the use of Gigabit Ethernet reflects the actual situation among hosters out there in the real world, and Freund contends that no one is going to put a 10GE network interface on a Xeon E3 processor. Fair enough. But our point was: why not? For I/O bound workloads, getting more I/O to a Xeon E3 chip would help get it back into the kind of proper balance that the ECX-1000 chip clearly has – the extended ARMv7 chip has gobs of I/O for its modest compute, which is correct for the kind of Webby apps that Calxeda is chasing. But, Freund says, the whole point of the Calxeda design is to remove costs, not to add them. Adding 10GE networking to a Xeon E3 server will boost costs significantly, and by the way, the ECX-1000 already supports 10GE ports on its on-chip Layer 2 switch fabric.

In the HP comparison, 1,600 Calxeda ARM servers cost $1.2m, or about $750 each. And it is certainly true that adding a 10GE LAN-on-motherboard port to a Xeon E3 server would boost costs, and so would adding enough memory and a virtualization hypervisor to run multiple instances of the Apache Bench workload to more fully utilize the Xeon CPU. And all of those extra electronics add to power consumption and cost, moving it further away from the efficiency that the ECX-1000 chip and its integrated switching can offer. ®