
Ethernet standards for hyper-scale cloud networking

Always just over the horizon

What if the largest Ethernet networks we see today are just precursors, initial steps on the path to what's been called hyper-scale cloud networking?

"Hyper" is the term used generally for something almost unfathomably and exceptionally large. We might say that a regional group of airports is a small air transport network, a national one a larger network, a continental one a big network but the global air-transport system is a hyper network with hundreds of airports, thousands of planes, millions of flights a year and billions of passengers.

A hyper-scale Ethernet network will be global in scale and embrace tens of thousands of cables and switches, millions of ports, and trillions, perhaps quadrillions, of packets of data flowing across the network a year, possibly even more.

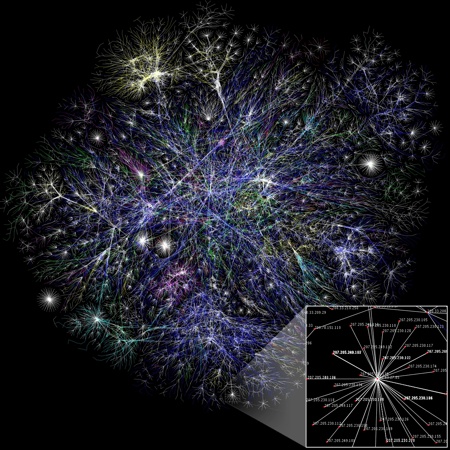

Depiction of Internet network. (Wikipedia)

The Ethernet that will be used in such a network has not been developed yet, but it is going in that direction and it will be based on standards and speeds that are coming into use now.

Speeds

Currently we are seeing 10Gbit/s Ethernet links and ports being used for high data throughput end-points of Ethernet fabrics. The inter-switch links, the fabric trunk lines, are moving to 40Gbit/s with backbones, network spines, beginning to feature 100Gbit/s Ethernet.

For example, ISP iiNet is gearing up for Australia's National Broadband Network (NBN) by using Juniper T1600 routers in the first Asia-Pac 100Gbit/s Ethernet backbone deployment. Dell-owned Force10 Networks has announced 40Gbit/s Ethernet switches. Brocade, Cisco and Huawei also have 100Gbit/s Ethernet products.


Both 40Gbit/s and 100Gbit/s Ethernet transmit frames along several 10Gbit/s or 25Gbit/s lanes and are specified in the IEEE 802.3ba standard. Ethernet speeds do not increase in step with Moore's Law, as raising optical cable transmission speed is not primarily a digital problem but, apparently, an analog one. Thus there is no easy way to make out any path to 1,000Gbit/s Ethernet, even though hyper-scale Ethernet fabrics might well use it for backbone links.
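To make the lane arithmetic concrete, here is a minimal Python sketch. The lane counts are the nominal 802.3ba combinations (four or ten lanes of 10Gbit/s, or four lanes of 25Gbit/s); the function names and the 1,000Gbit/s extrapolation are our own illustration and not part of any standard.

```python
# Nominal lane layouts used by 40Gbit/s and 100Gbit/s Ethernet (IEEE 802.3ba).
# Each entry is (number of lanes, nominal data rate per lane in Gbit/s).
LANE_CONFIGS = {
    "40GbE, 4 x 10G": (4, 10),
    "100GbE, 10 x 10G": (10, 10),
    "100GbE, 4 x 25G": (4, 25),
}


def aggregate_gbits(lanes, lane_rate_gbits):
    """Aggregate link speed is simply lanes multiplied by the per-lane rate."""
    return lanes * lane_rate_gbits


def lanes_needed(target_gbits, lane_rate_gbits):
    """Lanes required to reach a target speed (ceiling division)."""
    return -(-target_gbits // lane_rate_gbits)


if __name__ == "__main__":
    for name, (lanes, rate) in LANE_CONFIGS.items():
        print(f"{name}: {aggregate_gbits(lanes, rate)} Gbit/s")
    # Hypothetical extrapolation: a 1,000Gbit/s link built from today's lanes.
    for rate in (10, 25):
        print(f"1,000 Gbit/s at {rate}G per lane: {lanes_needed(1000, rate)} lanes")
```

With today's lane rates a 1,000Gbit/s link would need 40 to 100 parallel lanes, which gives a feel for why faster lanes, and so the analog problem, matter more than simply adding more of them.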

Mismatch

Away from wire considerations, ordinary Ethernet capabilities are ludicrously mismatched to hyper-scale networking requirements: it is prone to losing packets and unpredictable in its packet delivery times, a trait known as being non-deterministic. Fortunately, in an attempt to layer Fibre Channel storage networking on Ethernet, the IEEE is developing Data Centre Ethernet (DCE), which the IEEE itself calls Data Center Bridging (DCB), to stop packet loss and provide predictable packet delivery latency.

This involves congestion notification, so that traffic sources can slow down before queues overflow (802.1Qau), priority-based flow control, which pauses individual traffic classes so that important Ethernet traffic gets through a congested network without loss (802.1Qbb), specific bandwidth amounts allocated to traffic types (ETS and the 802.1Qaz standard), and a way for configuration data of network devices to be maintained and exchanged in a consistent manner (DCBX, which is carried over the 802.1AB link layer discovery protocol).

Where standardisation efforts seem to be failing is in coping with the limitations of Ethernet's Spanning Tree protocol. As Ethernet sprays packets all over the network, this protocol is in place to prevent endless loops. It picks a loop-free subset of links (the spanning tree) inside a mesh network of connected layer-2 bridges. The remaining links are blocked and set aside for use as redundant paths if an active one fails, but this means that not all paths are used and network capacity is wasted.
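The wasted capacity is easy to see in a toy example. The sketch below is a deliberate simplification: it builds a spanning tree with a plain breadth-first search from a chosen root, whereas the real protocol elects a root and picks ports using bridge IDs and path costs. The four-switch full mesh and all the names are hypothetical.

```python
from collections import deque


def spanning_tree(links, root):
    """Return the set of links kept active by a breadth-first spanning tree."""
    adjacency = {}
    for a, b in links:
        adjacency.setdefault(a, []).append(b)
        adjacency.setdefault(b, []).append(a)
    visited = {root}
    tree = set()
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for neighbour in sorted(adjacency[node]):
            if neighbour not in visited:
                visited.add(neighbour)
                tree.add(frozenset((node, neighbour)))
                queue.append(neighbour)
    return tree


def blocked_links(links, root):
    """Links that are not part of the tree and so carry no traffic."""
    tree = spanning_tree(links, root)
    return [link for link in links if frozenset(link) not in tree]


# Hypothetical full mesh of four switches: six physical links.
MESH = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "C"), ("B", "D"), ("C", "D")]

if __name__ == "__main__":
    idle = blocked_links(MESH, root="A")
    print(f"{len(idle)} of {len(MESH)} links blocked: {idle}")
```

In this mesh half of the cabling sits idle, waiting for a failure.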

TRILL (Transparent Interconnect of Lots of Links) is an IETF standard aiming to get over this, with, for example, Brocade and Cisco supporting it. It provides for multiple path use in Ethernet, and so doesn't waste bandwidth.

Brocade tells us that TRILL:

• Uses shortest path routing protocols instead of Spanning Tree Protocol (STP)

• Works at Layer 2, so protocols such as FCoE can make use of it

• Supports multi-hop environments

• Works with any network topology, and uses links that would otherwise have been blocked

• Can be used at the same time as STP

The main benefit of TRILL is that it frees up capacity on your network that can’t be used (to prevent routing loops) if you use STP, allowing Ethernet frames to take the shortest path to their destination. TRILL is also more stable than spanning tree as it provides a faster recovery time following a hardware failure.
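A rough way to picture the shortest-path benefit is to compare hop counts with and without a blocked link. This Python sketch uses a breadth-first search as a stand-in for a shortest-path computation (TRILL itself relies on a link-state routing protocol, which is not modelled here). The six-switch ring, and the choice of which link spanning tree blocks, are assumptions for illustration.

```python
from collections import deque


def hop_count(links, src, dst):
    """Fewest links a frame must cross from src to dst, or None if unreachable."""
    adjacency = {}
    for a, b in links:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    distance = {src: 0}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return distance[node]
        for neighbour in adjacency.get(node, ()):
            if neighbour not in distance:
                distance[neighbour] = distance[node] + 1
                queue.append(neighbour)
    return None


# Hypothetical ring of six switches.
RING = [(1, 2), (2, 3), (3, 4), (4, 5), (5, 6), (6, 1)]
# Assumption: with switch 1 as root, spanning tree blocks the 3-4 link.
STP_ACTIVE = [link for link in RING if link != (3, 4)]

if __name__ == "__main__":
    print("All links usable:", hop_count(RING, 3, 4), "hop(s)")
    print("With 3-4 blocked:", hop_count(STP_ACTIVE, 3, 4), "hop(s)")
```

Switches 3 and 4 are direct neighbours, yet with the link between them blocked their traffic has to go the long way round the ring.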

Latency

One certain problem with hyper-scale networks is latency. The longer it takes for network devices to respond to incoming traffic and send it on its way, the longer it takes for data to traverse the network.

Brocade's VDX 6730 data centre switch, a 10GbE fixed-port switch, shrinks the time needed for traffic to pass through it with a port-to-port latency of 600 nanoseconds, unimaginably fast and virtually instantaneous. That figure applies to traffic staying within a single ASIC. Local switching across ASICs on a single VDX 6730 switch for intra-rack traffic has a latency of 1.8 microseconds. One reason why this matters is that it helps network designers build a network with no over-subscription for deterministic network performance and faster application response time, which is the desired end-result.

Other companies such as Arista are also focussing on low-latency switches. CEO Andy Bechtolsheim says Arista's 7500 switch, with a 3.5 microsecond latency, is speedier than the 5-microsecond Nexus 5000, the 15-microsecond Nexus 7000 and the 20-plus microsecond latency of the Catalyst 6500. Arista's 7100 switches all have latencies under 3 microseconds with the 7124SX doing port-to-port switching at 500ns.

A hyper-scale Ethernet network will involve many switch crossings and each one will contribute its own minuscule delay. It's possible, we suppose, but unlikely that new Ethernet standards will evolve to cope with this, either by specifying latency at a switch level or at a network level. Until this happens we rely on faster and optimised hardware. The VDX 6730, mentioned above, has hardware-based Inter-Switch Link (ISL) Trunking to get traffic moving as fast as possible through a set of connected switches.
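How those minuscule delays add up can be estimated with simple multiplication. The sketch below uses the vendor latency figures quoted in this article; the count of ten switch crossings is an arbitrary assumption, and the sum covers switch latency only, ignoring cable propagation, serialisation and queuing delay.

```python
# Per-switch port-to-port latencies quoted above, in nanoseconds.
SWITCH_LATENCY_NS = {
    "Arista 7124SX": 500,
    "Brocade VDX 6730 (single ASIC)": 600,
    "Arista 7500": 3_500,
    "Cisco Nexus 5000": 5_000,
    "Cisco Nexus 7000": 15_000,
}


def total_switch_latency_ns(per_switch_ns, switch_crossings):
    """Switch-only latency for a path: per-switch delay times switches crossed."""
    return per_switch_ns * switch_crossings


if __name__ == "__main__":
    crossings = 10  # assumed number of switches on an end-to-end path
    for name, latency_ns in SWITCH_LATENCY_NS.items():
        total_us = total_switch_latency_ns(latency_ns, crossings) / 1000
        print(f"{name}: {total_us:.1f} microseconds over {crossings} switches")
```

The arithmetic works both ways: halving the number of switches on a path buys as much as halving each switch's latency, which is why reduced switch counts matter alongside faster hardware.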

Getting back to the air transport analogy with which we started, this would mean maximum aircraft turnaround times at airports being adhered to, which is clearly impractical, although passengers would love it. What we can be sure of is that Ethernet networks will evolve towards using multiple links and, somehow, reduced switch counts to keep traffic speed high.

One warning note. "Hyper" is not a definitive term; it is marketing speak, so there is no definitive network device count boundary, the crossing of which will put us in hyper-networking territory. In fact "hyper" might always be just over the horizon. It's relative after all. ®