
ARM vet: The CPU's future is threatened

Moore's Law must be repealed

Moore's Law repealed

Finally, there's the looming problem of silicon process shrinkage: it can't go on forever. One obvious limit, as Segars pointed out, is the 0.27-nanometer diameter of the silicon atom itself – as process sizes shrink to, say, 14nm and below, you're talking about only dozens of atoms per transistor gate.
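The "dozens of atoms" figure is simple division. The sketch below uses the 0.27nm diameter Segars quoted and, as a simplification, treats the process-node number as if it were a physical width; real gate dimensions don't map one-to-one onto node names.

```python
# Back-of-the-envelope: how many silicon atoms fit side by side across a feature?
# Assumes the node name equals a physical width, which is a simplification.
SILICON_ATOM_DIAMETER_NM = 0.27  # figure quoted by Segars


def atoms_across(feature_nm: float, atom_nm: float = SILICON_ATOM_DIAMETER_NM) -> int:
    """Atoms laid in a row across a feature, rounded to the nearest whole atom."""
    return round(feature_nm / atom_nm)


for node_nm in (20, 14, 10):
    print(f"{node_nm}nm -> about {atoms_across(node_nm)} atoms across")
```

At 14nm that comes to roughly 50 atoms, squarely in the "dozens" range.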

But there are plenty of other challenges to be met before you can count the silicon atoms in a gate on your fingers and toes – namely, what lithography techniques can take you well below 20nm?

At the 20nm level, Segars said, "the problem is that you need to introduce double patterning." Using two sets of masks to accomplish what one set could do at larger process sizes not only increases mask costs but also slows manufacturing throughput.

If you want to keep throughput at the same rate, you have to buy more equipment – which might make the ASMLs of the world happier, but driving up chip costs is not a good thing in a world that includes developing nations whose citizens are hankering to join the mobile world.
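The equipment arithmetic can be sketched as follows. If a critical layer needs two exposures instead of one, each wafer occupies a scanner twice as long for that layer, so holding output steady means roughly doubling the scanners assigned to it. The function name and the throughput numbers in the usage lines are hypothetical, and the model ignores the extra etch and deposition steps double patterning also adds.

```python
import math


def scanners_needed(target_wafers_per_hour: float,
                    scanner_wafers_per_hour: float,
                    exposures_per_layer: int) -> int:
    """Scanners required to hold a fab's output on one critical layer.

    Every extra exposure sends each wafer through a scanner again, which
    divides a scanner's effective throughput by exposures_per_layer.
    """
    effective_rate = scanner_wafers_per_hour / exposures_per_layer
    return math.ceil(target_wafers_per_hour / effective_rate)


# Hypothetical, illustrative numbers only.
print("single patterning:", scanners_needed(200, 100, 1), "scanners")
print("double patterning:", scanners_needed(200, 100, 2), "scanners")
```

Under these assumptions the scanner count for the layer doubles, which is where the extra cost Segars describes comes from.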

There's one long-sought technology about which Segars remains a bit sceptical. "At 14 [nanometers] and below, what you really want is EUV," he said, referring to extreme ultraviolet lithography, which has long been seen as a possible solution to the process-shrinking problem. EUV's promise comes from the fact that it's based on 13.5nm wavelength light – one hell of a lot more precise than the 193nm deep-ultraviolet light that Segars said is used in today's lithography.
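Why a shorter wavelength helps can be sketched with the Rayleigh resolution criterion, CD = k1 × λ / NA. This is standard optics rather than something Segars cited, and the inputs are assumptions for illustration: a numerical aperture of 1.35 for water-immersion 193nm tools, 0.33 for EUV optics, and the single-exposure floor k1 = 0.25.

```python
def rayleigh_half_pitch_nm(wavelength_nm: float,
                           numerical_aperture: float,
                           k1: float = 0.25) -> float:
    """Smallest printable half-pitch from the Rayleigh criterion.

    k1 = 0.25 is the theoretical floor for a single exposure; production
    processes run at somewhat higher k1 values.
    """
    return k1 * wavelength_nm / numerical_aperture


duv = rayleigh_half_pitch_nm(193.0, 1.35)  # assumed 193nm immersion scanner
euv = rayleigh_half_pitch_nm(13.5, 0.33)   # assumed EUV scanner optics
print(f"193nm DUV floor: {duv:.1f}nm, EUV floor: {euv:.1f}nm")
```

On these numbers a single 193nm exposure bottoms out around 36nm half-pitch, consistent with Segars' point that 20nm-class processes need double patterning, while EUV could in principle reach about 10nm in one pass.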

"The problem is," he said, "that [EUV] is really, really hard to make. You've got to make a plasma out of tin atoms, and then shoot it with a laser, and some light comes out – but the light's really weak, and it gets absorbed by everything. So generating enough of it to economically build chips is very, very hard."

And EUV technology is currently slow. "To have a fab running economically," Segars said, "you need to build about two to three hundred wafers an hour. EUV machines today can do about five."
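Segars' throughput figures imply a stark gap. Taking his numbers at face value, and assuming each EUV tool works independently at the same rate, matching the fab's required pace would take this many machines:

```python
import math


def tools_to_match(required_wafers_per_hour: float,
                   tool_wafers_per_hour: float) -> int:
    """Number of identical tools running in parallel to hit a target rate."""
    return math.ceil(required_wafers_per_hour / tool_wafers_per_hour)


EUV_WAFERS_PER_HOUR = 5  # Segars' figure for today's EUV machines

for required in (200, 300):  # Segars' range for an economical fab
    print(f"{required} wafers/hour needs {tools_to_match(required, EUV_WAFERS_PER_HOUR)} EUV tools")
```

That is 40 to 60 EUV machines doing the work Segars describes, before counting how many layers per wafer would need an EUV exposure.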

Some observers question whether EUV will ever be a workable form of chip lithography. "If that's the case, then," Segars said, "frankly we're not quite sure what we're going to do."

From his point of view, it's time for the microprocessor industry – in all its disaggregated chunks – to take a page from an old Apple ad campaign and, as he put it, "Think different".

The past is prologue. "Silicon scaling has been great," Segars reminisced. "We've gotten huge gains in power, performance, and area, but it's going to end somewhere, and that's going to affect how we do design and how we run our businesses, so my advice to you is get ready for that.

"It's coming sooner than a lot of people want to recognize."

After the endgame: core teamwork

But that's not to say that there's a dead end on the road we're travelling. Segars' vision of the future jibes with the one described by fellow ARMian Jem Davies, the company's vice president of technology, when speaking at AMD's Fusion Summit this June – namely, that heterogeneous computing systems are the Next Big Thing.

Simply put, heterogeneous computing systems distribute a workload to various and sundry specialized compute engines – CPU, GPU, video, encryption, baseband, whatever – so that individual sub-tasks are completed efficiently by dedicated hardware best suited to them.

"I think the future of processing is heterogeneous multiprocessing," Segars said, "... dedicated engines arranged in various clusters with a software layer that can understand the underlying hardware, and make sure that if it's not needed, it's shut off, it's not leaking, to preserve that battery."
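The arrangement Segars describes, dedicated engines plus a software layer that knows the hardware and shuts off whatever isn't needed, can be sketched as a toy dispatcher. Everything here is hypothetical: the `Engine` and `HeterogeneousScheduler` names, the task kinds, and the boolean `powered` flag standing in for real power gating. It is an illustration of the idea, not ARM's software.

```python
from dataclasses import dataclass, field


@dataclass
class Engine:
    """A hypothetical compute engine that can be power-gated when idle."""
    name: str
    handles: set[str]
    powered: bool = False
    completed: list[str] = field(default_factory=list)


class HeterogeneousScheduler:
    """Toy software layer: send each sub-task to the engine built for it,
    fall back to the CPU, and switch off every engine the batch didn't use."""

    def __init__(self, engines: list[Engine], fallback: str = "cpu") -> None:
        self.engines = {engine.name: engine for engine in engines}
        self.fallback = fallback

    def pick(self, kind: str) -> Engine:
        """Return the first engine dedicated to this kind of work, else the CPU."""
        for engine in self.engines.values():
            if kind in engine.handles:
                return engine
        return self.engines[self.fallback]

    def run(self, tasks: list[tuple[str, str]]) -> None:
        """Dispatch (task_name, kind) pairs, then power off unneeded engines."""
        needed: set[str] = set()
        for task_name, kind in tasks:
            engine = self.pick(kind)
            engine.powered = True
            engine.completed.append(task_name)
            needed.add(engine.name)
        # Anything this batch did not use is switched off so it isn't leaking.
        for engine in self.engines.values():
            if engine.name not in needed:
                engine.powered = False


engines = [
    Engine("cpu", {"general"}),
    Engine("gpu", {"graphics"}),
    Engine("video", {"decode"}),
    Engine("crypto", {"encrypt"}),
]
scheduler = HeterogeneousScheduler(engines)
scheduler.run([
    ("draw UI", "graphics"),
    ("play clip", "decode"),
    ("spell check", "text"),  # no dedicated engine, so it falls back to the CPU
])
for engine in engines:
    print(engine.name, "on" if engine.powered else "off", engine.completed)
```

In this batch the encryption engine is never needed, so the scheduler leaves it switched off, the battery-saving behaviour Segars describes.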

There are a host of challenges to achieving the holy heterogeneous grail, of course – not least of which is keeping all the various cores in close communication and, ideally, data-coherent.

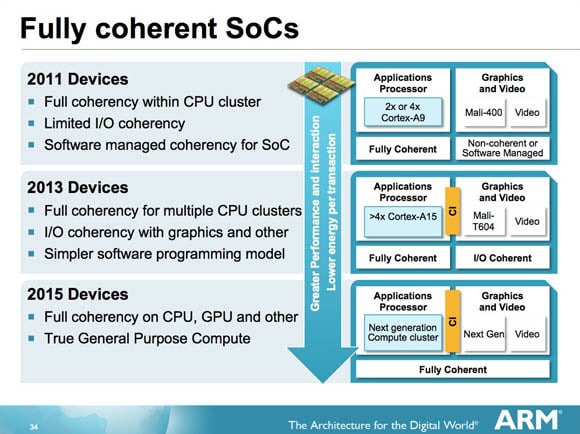

To that end, ARM's upcoming Cortex-A15 compute core – which will likely appear in early 2013 – will introduce a cache-coherent interconnect that enables full coherency among multiple CPU clusters. Segars also projects that by 2015, coherency in ARM-based SoCs and systems will not be limited to CPUs, but will also provide full "where's that data?" transparency among CPUs, GPUs, and specialized engines.

The future of cache coherency, ARM style

Full coherence, however, brings its own set of challenges, such as unwanted latency when far-flung cores and engines need to share the same data. ARM, AMD, and Intel are all looking into how different approaches to coherency can help – or hinder – heterogeneity.
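The article doesn't describe how ARM's interconnect works internally, so the sketch below swaps in the textbook MSI snooping protocol as a simplified stand-in. It tracks one cache line shared by several caches and counts bus transactions, a rough proxy for the coherence traffic that turns into latency when sharers are far apart. The class and attribute names are hypothetical.

```python
from enum import Enum


class State(Enum):
    MODIFIED = "M"  # this cache holds the only, dirty copy
    SHARED = "S"    # clean copy, others may hold one too
    INVALID = "I"   # no usable copy


class SnoopingBus:
    """Textbook MSI protocol for a single cache line (not ARM's design)."""

    def __init__(self, n_caches: int, memory_value: int = 0) -> None:
        self.states = [State.INVALID] * n_caches
        self.values = [None] * n_caches
        self.memory = memory_value
        self.messages = 0  # bus transactions broadcast so far

    def read(self, cpu: int) -> int:
        if self.states[cpu] is State.INVALID:
            self.messages += 1  # broadcast a read request
            for other, state in enumerate(self.states):
                if state is State.MODIFIED:
                    # The owner writes its dirty data back and drops to SHARED.
                    self.memory = self.values[other]
                    self.states[other] = State.SHARED
            self.values[cpu] = self.memory
            self.states[cpu] = State.SHARED
        return self.values[cpu]

    def write(self, cpu: int, value: int) -> None:
        if self.states[cpu] is not State.MODIFIED:
            self.messages += 1  # broadcast an invalidate to gain ownership
            for other in range(len(self.states)):
                if other != cpu:
                    if self.states[other] is State.MODIFIED:
                        self.memory = self.values[other]
                    self.states[other] = State.INVALID
                    self.values[other] = None
        self.states[cpu] = State.MODIFIED
        self.values[cpu] = value


bus = SnoopingBus(n_caches=3)
bus.write(0, 42)   # cache 0 takes ownership
print(bus.read(1))  # cache 0 writes back; both caches now SHARED
bus.write(2, 7)    # caches 0 and 1 are invalidated
print(bus.read(0))  # cache 2 writes back and cache 0 re-fetches
print(bus.messages)
```

Every hand-off of the line costs a broadcast; in a real system each of those crosses the interconnect, which is why distance between sharers shows up as latency.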

A lot has changed in the microprocessor world since the Intel 4004 appeared 40 years ago this November. By and large, the arc of progress has been relatively straightforward, with process shrinks, processing power, and miniaturization advancing at a fairly regular pace – achieved through one hell of a lot of work, to be sure, but regular nonetheless.
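That regularity is easy to put into numbers. The 4004 had about 2,300 transistors; the sketch below assumes the popular form of Moore's Law, a doubling every two years, and compounds it over the 40 years since. It illustrates the compounding, not the transistor count of any particular chip.

```python
def transistors_after(years: float,
                      start_count: int = 2_300,
                      doubling_period_years: float = 2.0) -> float:
    """Project a transistor count under a fixed doubling period."""
    return start_count * 2 ** (years / doubling_period_years)


print(f"{transistors_after(40):,.0f}")  # twenty doublings from the 4004's count
```

Twenty doublings turn 2,300 into roughly 2.4 billion, the kind of steady compounding Segars expects to run out.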

There's been a lot of talk recently about the "post-PC era". From Segars' point of view, however, we may also soon be talking about the "post–Moore's Law" era – a time when computing advances are no longer measured in transistor counts per square millimeter, but rather in how quickly, intelligently, and cooperatively different cores and engines can communicate. ®

Bootnote

35x the energy density of a phone battery

When discussing how woefully battery technology has lagged behind advances in silicon, Segars said: "What we really need is a new battery. If someone can work out how to hook up a chocolate bar into a cell phone, that'd be pretty good, because there's about 35 times the energy density in a bar of Cadbury Dairy Milk, and that might help solve our power problem."
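Segars' 35x figure survives a rough check. The inputs are assumptions rather than figures from the talk: about 2,240 kJ per 100 g for milk chocolate (in the range printed on nutrition labels) and about 180 Wh/kg for a lithium-ion cell.

```python
# Assumed inputs, not numbers from the talk.
CHOCOLATE_KJ_PER_100G = 2240  # typical milk-chocolate nutrition label
LI_ION_WH_PER_KG = 180        # typical lithium-ion cell


def chocolate_mj_per_kg(kj_per_100g: float) -> float:
    """kJ per 100 g -> kJ per kg -> MJ per kg."""
    return kj_per_100g * 10 / 1000


def battery_mj_per_kg(wh_per_kg: float) -> float:
    """1 Wh = 3,600 J, so Wh/kg -> J/kg -> MJ/kg."""
    return wh_per_kg * 3600 / 1e6


ratio = chocolate_mj_per_kg(CHOCOLATE_KJ_PER_100G) / battery_mj_per_kg(LI_ION_WH_PER_KG)
print(f"chocolate vs battery energy density: about {ratio:.0f}x")
```

The comparison is per kilogram and counts chemical energy released by digestion or combustion, which a phone has no way to tap directly.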