
Nvidia snaps out snappier Tesla GPU coprocessors

All fired up on all 512 cores

Do the math

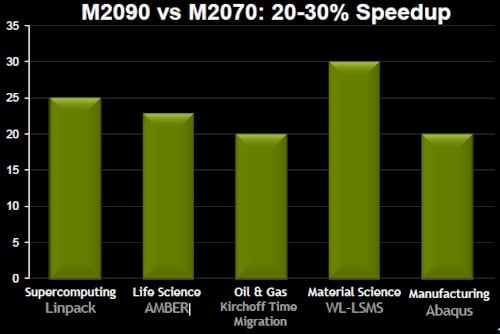

Taken together, the faster clocks, faster memory, and higher core count add up to a performance improvement of somewhere between 20 and 30 per cent, thus:

Tesla M2090: Your performance improvement may vary

By the raw numbers, the M2090 is rated at 665 gigaflops at double-precision and 1.33 teraflops at single-precision, or 29.1 per cent more than the M2070 it replaces. The M2090 delivers 178GB/sec of memory bandwidth, up from 148GB/sec with the M2070.
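The two figures in that paragraph mark the ends of the 20-to-30 per cent range. Here is a minimal Python sketch using only the numbers quoted above. The M2070 double-precision rating is backed out from the 29.1 per cent figure rather than taken from an Nvidia data sheet, and the function name is made up for illustration:

```python
def percent_gain(new, old):
    """Return the percentage improvement of new over old."""
    return (new / old - 1.0) * 100.0

# Figures quoted in the article.
M2090_DP_GFLOPS = 665.0
FLOPS_GAIN_PCT = 29.1
M2090_BW_GBS = 178.0
M2070_BW_GBS = 148.0

# Assumption: the 29.1 per cent applies to the double-precision rating,
# so the M2070 figure is derived here, not quoted from a data sheet.
implied_m2070_dp_gflops = M2090_DP_GFLOPS / (1.0 + FLOPS_GAIN_PCT / 100.0)
bandwidth_gain_pct = percent_gain(M2090_BW_GBS, M2070_BW_GBS)

print(f"Implied M2070 double-precision: {implied_m2070_dp_gflops:.0f} gigaflops")
print(f"Memory bandwidth gain: {bandwidth_gain_pct:.1f} per cent")
```

The bandwidth gain comes out at roughly 20 per cent and the floating-point gain at 29 per cent, so a memory-bound job should expect the low end of the range and a compute-bound job the high end.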

And here's the kicker: the M2090s go faster but are still within the same 225 watt peak power draw of the earlier and slower M2050 and M2070 devices. Of course, your actual power draw will depend on the workload and how it stresses the GPU coprocessor.

But it's not just speed and power requirements that are pushing the coprocessor envelope: the big innovation that is helping with the adoption of GPU accelerators for supercomputing and other analytics jobs is not the GPU, but the server that wraps around it.

"We're beginning to see server makers respond to what customers want," Gupta tells El Reg. "Once customers start using GPUs, they want more GPUs in a box and fewer CPUs."

Gupta calls out HP's new ProLiant SL390s G7, soft-launched back in April, which can cram eight GPUs into a two-socket server in a half-width 4U tray. The Tesla M2050 and M2070 GPU coprocessors were already certified in this machine, and the new M2090 now is too. That full configuration gives customers a ratio of four GPUs per socket – but, oddly enough, this is not actually good enough. For most HPC applications, one GPU per CPU core is what the applications really need, says Gupta.

On a two-socket server, if you backstep from a six-core Xeon 5600 to a four-core model (such as the Xeon X5667 launched in February), you can get things into balance. At least until there is an eight-core Xeon chip.
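The balancing act is simple division. Here is a short Python sketch, assuming the eight-GPU, two-socket SL390s tray described above and Gupta's target of one GPU per core; the helper name is hypothetical:

```python
def gpus_per_core(gpus, sockets, cores_per_socket):
    """Return the number of GPUs available to each CPU core."""
    return gpus / (sockets * cores_per_socket)

GPUS_IN_TRAY = 8  # full SL390s configuration from the article
SOCKETS = 2

six_core_ratio = gpus_per_core(GPUS_IN_TRAY, SOCKETS, 6)    # six-core Xeon 5600
four_core_ratio = gpus_per_core(GPUS_IN_TRAY, SOCKETS, 4)   # four-core Xeon X5667
eight_core_ratio = gpus_per_core(GPUS_IN_TRAY, SOCKETS, 8)  # a future eight-core Xeon

for label, ratio in [("six-core", six_core_ratio),
                     ("four-core", four_core_ratio),
                     ("eight-core", eight_core_ratio)]:
    print(f"{label}: {ratio:.2f} GPUs per core")
```

Only the four-core part lands on exactly one GPU per core. Six-core chips leave a third of the cores without a GPU, and an eight-core chip would leave half of them idle.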

Perhaps the better answer, you're thinking, might be to do what Nvidia did for workstation graphics at the end of March, and double up the GPUs on a double-wide card.

The GTX 590 graphics card has two 512-core Fermi chips running at 1.22GHz with 1.5GB of GDDR5 memory per chip running at 1.77GHz. Nvidia does not provide floating-point performance ratings on the GTX 590, but it should be somewhere around 1.24 teraflops double-precision. With six of these GTX 590 cards in a 3U chassis, an HP ProLiant SL390s would be able to use six-core Xeon 5600 chips and have one GPU per CPU core.
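The estimate can be reproduced with a back-of-the-envelope calculation. This Python sketch rests on two assumptions that do not come from Nvidia's GTX 590 specifications: each Fermi core retires one fused multiply-add (two floating-point operations) per clock, and double-precision runs at half the single-precision rate, as on the Tesla parts. A GeForce card may well deliver less double-precision throughput than this, so treat the result as an upper bound:

```python
def peak_gflops(chips, cores_per_chip, clock_ghz, flops_per_core_per_clock=2):
    """Theoretical peak in gigaflops; assumes one FMA (2 flops) per core per clock."""
    return chips * cores_per_chip * clock_ghz * flops_per_core_per_clock

# GTX 590 figures quoted in the article.
gtx590_sp_gflops = peak_gflops(chips=2, cores_per_chip=512, clock_ghz=1.22)

# Assumption: double-precision at half the single-precision rate (Tesla-style).
DP_TO_SP_RATIO = 0.5
gtx590_dp_gflops = gtx590_sp_gflops * DP_TO_SP_RATIO

# Six dual-GPU cards against two six-core Xeon 5600 chips.
gpus_per_core = (6 * 2) / (2 * 6)

print(f"Single-precision peak: {gtx590_sp_gflops / 1000:.2f} teraflops")
print(f"Double-precision upper bound: {gtx590_dp_gflops / 1000:.2f} teraflops")
print(f"GPUs per CPU core: {gpus_per_core:.1f}")
```

Under those assumptions the card lands a shade under 1.25 teraflops at double-precision, in line with the rough figure above, and the six-card configuration gives exactly one GPU per core.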

The only trouble is that the GTX 590s are aimed at workstations, not servers, and have fans on them that might mess up the airflow in the server chassis. Moreover, the GTX 590 cards pull down 365 watts of power, and are therefore a bit more power dense than the M2090, which has half as many GPUs but four times as much GDDR5 memory per Fermi chip. If your HPC app needs more GPU and less GPU memory, this is worth thinking about, if your server can handle the cooling job on multiple GTX 590s – and you can actually get your hands on them.

The GTX 590 will win, hands down, on price, at $699. The M2090 is probably going to cost somewhere around $4,100 to $4,700, if it is priced like the M2070 it replaces. Nvidia does not provide official pricing on the fanless M20 series of GPU coprocessors, which is silly.
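To put the gap in per-GPU and per-gigabyte terms, here is a rough Python sketch. The M2090 price range is the estimate above, not an Nvidia list price, and the memory per M2090 chip is derived from the "four times as much" comparison with the GTX 590's 1.5GB per chip:

```python
# Figures quoted in the article.
GTX590_PRICE = 699.0
GTX590_CHIPS = 2
GTX590_GB_PER_CHIP = 1.5

# Estimated street price range for the M2090, not official pricing.
M2090_PRICE_RANGE = (4100.0, 4700.0)
M2090_GB_PER_CHIP = 4 * GTX590_GB_PER_CHIP  # derived: four times the GTX 590's

gtx590_per_gpu = GTX590_PRICE / GTX590_CHIPS
gtx590_per_gb = GTX590_PRICE / (GTX590_CHIPS * GTX590_GB_PER_CHIP)

# The M2090 carries a single Fermi chip, so card price equals price per GPU.
m2090_multiple = tuple(price / gtx590_per_gpu for price in M2090_PRICE_RANGE)
m2090_per_gb = tuple(price / M2090_GB_PER_CHIP for price in M2090_PRICE_RANGE)

print(f"GTX 590: ${gtx590_per_gpu:.2f} per GPU, ${gtx590_per_gb:.2f} per GB")
print(f"M2090: {m2090_multiple[0]:.1f}x to {m2090_multiple[1]:.1f}x the per-GPU price")
print(f"M2090: ${m2090_per_gb[0]:.2f} to ${m2090_per_gb[1]:.2f} per GB")
```

On these numbers the M2090 costs roughly 12 to 13 times as much per GPU, and around three times as much per gigabyte of GDDR5, so the extra memory only partly accounts for the premium.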

Last week, Nvidia CEO Jen-Hsun Huang lamented that the Professional Solutions business, where workstation graphics and Tesla coprocessors live inside Nvidia, was not doing as well as expected. Part of the problem would seem to be that Nvidia is trying to charge too much money for the fanless GPUs. The price disparity between the GTX 590 and the M2090 is too large, plain and simple. ®