
Cloud on a chip: Sometimes the best hypervisor is none at all

Déjà vu all over again

We have come a long way, from data processing to systems to servers, and maybe so far that we are essentially getting back to where we started.

Centralized systems begat distributed servers, and now we are in the middle of begetting a hybrid kind of computing that looks like distributed iron but, thanks to virtualization and sophisticated workload management tools, behaves like what is essentially a software-based mainframe, which these days is usually called a cloud.

But just because there is now an abstraction layer that runs on a server and across servers to make it look like a giant pool of CPUs, memory, I/O, and storage does not mean there are not serious problems confronting the server business.

Moore's Law, the ever-shrinking transistor that enables more components to be crammed onto a piece of silicon or for them to run faster, hasn't hit a wall but it did have to take a detour because there was no way we were going to be able to make a 10 GHz chip that could be cooled.

And so, all server processor makers have been cookie-cutting cores onto dies, essentially putting a multi-core server, including everything but memory and disk, onto the chip.

This has been a boon for the server business in the past several years, but the problem is this: Not all workloads can run across large numbers of processor cores, so moving from four to eight to a dozen or sixteen cores is not necessarily going to result in more work getting done for any particular job.
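One common way to put numbers on that diminishing return is Amdahl's Law, which the article does not name but which describes the same effect. The sketch below is illustrative only: the 80 per cent parallel fraction is an assumed figure for a hypothetical job, not a measurement of any real workload.

```python
def amdahl_speedup(cores: int, parallel_fraction: float) -> float:
    """Ideal speedup on `cores` cores when only `parallel_fraction`
    of the job's work can be spread across cores (Amdahl's Law)."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Assumption: a hypothetical job in which 80% of the work parallelizes.
PARALLEL_FRACTION = 0.80

for cores in (4, 8, 12, 16):
    speedup = amdahl_speedup(cores, PARALLEL_FRACTION)
    print(f"{cores:2d} cores -> {speedup:.2f}x")
```

Under that assumption, going from four to sixteen cores lifts the ideal speedup only from 2.5x to 4x, and no number of cores can push it past 5x, because the serial fifth of the job still runs on one core.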

Putting the hype into hypervisor

And while it is perhaps heresy to say this in the hypervisor-crazed early days of cloud computing, the fact is that there are some workloads that run best very close to the iron, without having a hypervisor layer burning up resources.

And for these kinds of workloads - simple web applications, dedicated hosting, simple media streaming, and parallel supercomputing workloads - maybe the best thing to do would be to move a whole cloud of servers down onto the chip, including a network that links multiple and independent computing elements together much as a local area network links together free-standing servers into a cluster.

Jim Held, an Intel Fellow and director of Terascale Computing Research at the chip giant, is examining ring interconnects (like those that will be used in the forthcoming "Sandy Bridge" and "Westmere-EX" Xeon and "Poulson" Itanium processors) as well as various topologies for linking together CPU components, with an eye toward increasing the efficiency of processing and keeping the complexity of the chip down as much as possible.

On the cloud front, one of the more interesting projects that Held is working on is called the Single-chip Cloud Computer, or SCC for short.

The SCC chip is experimental, and Held is very clear that its existence is in no way an indication that this will be the future architecture of Atom, Xeon, or Itanium processors. But the odds favor it, for reasons outlined above.

'Integrate in the right way'

The first wave of consolidation on chips was to move elements of a motherboard - such as memory controllers, cache memory, auxiliary processors like floating point units, and network controllers - into the chip.

The next wave will be to take elements of distinct systems - multiple computing elements and the networking that links them together - and squeeze them down to the same bits of silicon, perhaps stacking them up with main and flash memory into three-dimensional arrays.

"Integration is our forte," boasts Held. "But we need to integrate in the right way."

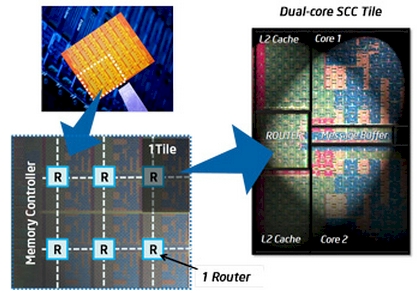

For now, with the SCC, Intel is working in two dimensions. The experimental chip is based on a fairly simple 32-bit Pentium P54C core. Two of these cores are put on a single tile, which includes an L2 cache memory for each core and a message passing buffer between the cores and a router linking these core pairs to others on the die.

In the case of the SCC chip, there are 24 core pair tiles. The chip has four banks of six tiles, each with its own DDR3 memory controller.

The SCC chip links the two-core tiles on the die in a 6x4 2D mesh that runs at 2 GHz, which is twice the clock speed of the cores. The memories on the die are not cache coherent, which means that the cores cannot be made to look like one giant virtual chip to an operating system or a hypervisor.
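To make the layout concrete, here is a minimal sketch of the tile grid as described: 24 tiles in a 6x4 mesh with two cores per tile. The row-major core numbering and the function names are hypothetical, chosen only for illustration, and the hop count is simply the shortest path on a 2D mesh, not a statement about how Intel's routers actually route packets.

```python
MESH_COLS, MESH_ROWS = 6, 4   # 6x4 mesh of tiles, as described in the article
CORES_PER_TILE = 2            # two cores share each tile

TOTAL_TILES = MESH_COLS * MESH_ROWS
TOTAL_CORES = TOTAL_TILES * CORES_PER_TILE


def tile_of(core_id: int) -> tuple:
    """Return (column, row) of the tile holding `core_id`.
    Assumption: cores are numbered row-major, two per tile."""
    if not 0 <= core_id < TOTAL_CORES:
        raise ValueError(f"core id {core_id} out of range")
    tile = core_id // CORES_PER_TILE
    return tile % MESH_COLS, tile // MESH_COLS


def min_hops(core_a: int, core_b: int) -> int:
    """Fewest router-to-router hops between two cores on a 2D mesh
    (Manhattan distance between their tiles)."""
    (xa, ya), (xb, yb) = tile_of(core_a), tile_of(core_b)
    return abs(xa - xb) + abs(ya - yb)


print(TOTAL_TILES, TOTAL_CORES)   # 24 tiles, 48 cores
print(min_hops(0, 1))             # cores on the same tile: 0 hops
print(min_hops(0, 47))            # opposite corners of the mesh: 8 hops
```

In this model, two cores on the same tile never touch the mesh, while the worst case, corner to corner, crosses five columns and three rows.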

This is not a big deal for parallel applications. If you want to run an application on a large cluster of parallel computers, all of that cache coherency electronics is legs on a snake.

Why would you want to aggregate the cores into a single system image, which takes CPU overhead, and then carve it up into smaller compute slices using a hypervisor? For a lot of workloads, having a cloud on a chip, or networks of interconnected clouds on a chip, will make a lot more sense. ®