
Intel orders software makers to obey Moore's Law

Get with our 2014 program

IDF Once in multi-core processor denial, Intel has well and truly moved past the anxiety that follows abandoning the "boost GHz at all costs" mentality that served the company well for so long. So firm is Intel's new multi-core embrace that it now even accepts the idea that non-Intel architecture products may soon find a place on its own chips – at least in theory.

Shekhar Borkar, an Intel fellow, stressed again and again the theoretical nature of his multi-core presentation at this year's Intel Developer Forum. You're not meant to read the following as an exact roadmap for the Intel of the future. Rather, Borkar handed out a glimpse of what Intel is thinking about now that it has just about caught up to all of the other major chip makers on the multi-core front.

The basic premise behind Borkar's talk hinged on the idea that we'll see tens and even hundreds of cores per chip in the near future. But, again, other chip makers have talked in such terms for a long time.

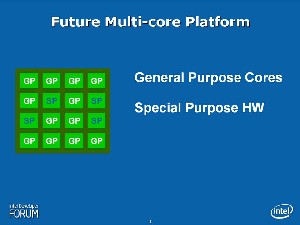

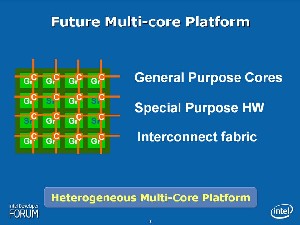

Two of Borkar's most telling slides showed a mix of general purpose (Intel Architecture) cores and special purpose chips that the fellow noted would not be IA.

Such chatter fits in with what sources have been telling us about Intel's plans.

Like AMD, Intel has accepted the idea that it will need to relax past strangleholds on its technical specifications and allow partners – largely co-processor companies – to build specialized chips for its gear. AMD has been far more vocal about this, attacking first with its HTX slot and more recently with its decision to license Opteron socket specifications to partners.

The end result will likely be a multi-core world where the common general purpose cores of today sit alongside FPGAs, networking products and other co-processors tuned to handle specific tasks at remarkable speeds.

Borkar, however, couldn't talk about such a future in open terms just yet. So, while he admitted that non-IA cores will have a role down the road, all of his subsequent multi-core chip examples had only IA cores.

For example, Borkar talked up three kinds of chips that could be feasible in the 2012 timeframe. At that point, Intel should be churning out chips on a 22nm process, with each chip consuming 100W and packing 48MB of cache and – wait for it – 4 billion transistors.

Under such constraints, you can imagine a chip with 12 Pentium 4 cores, another with 48 Core architecture cores and another with 144 classic Pentium cores.

By 2014, we make another leap to 12nm, 100W, 96MB of cache and 8 billion transistors.

"This train is moving, folks, and it does not stop," Borkar said.

Under those constraints, you end up with 24, 96 and 288 core chips, respectively.
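The 2014 figures are simply the 2012 ones doubled, since the transistor budget (4 billion to 8 billion) and the cache (48MB to 96MB) both double while power stays at 100W. A minimal sketch of that arithmetic, assuming core counts scale linearly with the transistor budget; the helper name `scale_core_counts` is hypothetical and not anything Borkar presented:

```python
# Minimal sketch, assuming core counts scale linearly with the transistor
# budget. The 2012 counts and both budgets are the figures reported above;
# scale_core_counts is a hypothetical helper, not an Intel model.

CORES_2012 = {
    "Pentium 4": 12,
    "Core": 48,
    "classic Pentium": 144,
}

TRANSISTORS_2012 = 4_000_000_000
TRANSISTORS_2014 = 8_000_000_000


def scale_core_counts(cores, old_budget, new_budget):
    """Scale each core count by the ratio of transistor budgets (integer math)."""
    return {name: count * new_budget // old_budget for name, count in cores.items()}


cores_2014 = scale_core_counts(CORES_2012, TRANSISTORS_2012, TRANSISTORS_2014)
for name, count in cores_2014.items():
    print(f"{name}: {CORES_2012[name]} cores in 2012 -> {count} cores in 2014")
```

Running it reproduces the 24, 96 and 288 core counts quoted for 2014.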

Such products face many of the same challenges as today's multi-core chips. They need multi-threaded software, lots of memory and speedy interconnects. Borkar tried to provide a picture of how well Intel thinks software will scale across these futuristic chips in a couple of slides.

As expected, the "large" core Pentium 4 chips handle single threads better but start to struggle as more and more software is spread across the processors. This is most easily seen in the 2012 slide (linked above), as you shift from two, to four and then eight applications.

"In 2014, the point of inflection shifts to between eight and 16 applications," Borkar said.

As has been the case for some time now, Intel demands that software engineers begin thinking about developing their products for this future. We're no longer living in a world where software will receive a boost simply because the chipmakers kicked GHz higher.

"Software engineers have to follow Moore's Law," Borkar said. "In the past, they haven't had to. The burden will soon shift."

And you thought Microsoft had enough trouble keeping up with the current coding model. We're predicting a 2015 follow-on to Vista. Thankfully, no one will actually be subjected to the OS after the apocalypse comes in 2012.

Hardware makers, however, have their challenges too in this cortastic, corpulent, corpuscular, corewhatever world.

To improve multi-core performance, Intel has zeroed in on techniques such as speculative multi-threading, transactional memory and new packaging. True chip geeks won't find much new here, as Borkar declined to discuss anything fresh that Intel considers a real breakthrough.

Overall, Borkar's presentation was just one of several given by Intel on the notion of "many-core" or "tera-scale" computing. It's clear that Intel has caught up with the rhetoric that Sun, in particular, has tossed around for a long time. ®

Read Reg Hardware's complete IDF Fall 06 coverage here