Original URL: https://www.theregister.com/2013/06/04/dell_active_infrastructure_update/

Dell rejigs Active System stacks, wraps up HPC cluster for life sciences

Rack 'em, stack 'em, sell 'em, ship 'em

Posted in Systems, 4th June 2013 17:39 GMT

Dell is finally getting around to updating its vStart virtual machine stacks, converting them to Active Infrastructure converged systems while at the same time cooking up a new stack aimed specifically at high performance computing customers.

They go by many names, but rack-level preconfigured systems with servers, storage, and networking are one of the fastest growing parts of the IT sector these days, albeit starting from a very small revenue base. System-makers love these engineered, converged, adaptive, and flexible systems because of the larger ticket price, account control, and simplicity of the sale, and customers are interested in such machines because they roll into their data centers easily: you plug in power and network cables, fire them up, and start loading software.

No fuss, no muss. And you get a modest discount off the iron because you are buying a bundled system – and you can ask for and often get a deeper discount beyond that.

Dell's initial foray into configured systems, which came out in April 2011, was aimed at companies getting started with virtualized server workloads, and hence the machines were called vStart systems. Dell started in the middle, with a rack setup aimed at supporting 100 or 200 virtual machines on either Microsoft's Hyper-V or VMware's ESXi hypervisor, and expanded the line up to as many as a few thousand VMs and as low as 50 VMs.

Last fall, in the wake of the Xeon E5 chip launch from Intel and just ahead of the acquisition of Gale Technologies for its cloud control tools, Dell rolled out the Active System 800, and previewed its plans for a more sophisticated line of converged systems based on its Intel-based PowerEdge line.

Sorry AMD, no active love for you. You only get the passive kind – when a hyperscale data center buying bespoke machines from the Data Center Solutions unit insists on the Opteron alternative.

At the Dell Enterprise Forum in San Jose on Tuesday, the IT giant that is in the midst of trying to take itself private and stay out of the gaping maw of activist investor Carl Icahn is rolling out a rebadged and tweaked line of Active System box o' clouds, as well as an update to its Active System Manager to the 7.1 release.

Active System Manager was initially based on a mix of Dell and DynamicOps code until VMware bought DynamicOps. And then Dell bought Gale and shifted to this system and cloud control freak with Active System Manager 7.0, which launched in January this year along with pre-integrated SAP HANA appliances based on its PowerEdge servers, Compellent storage, and Force10 switches.

This appliance line was updated last month with fatter configurations, but oddly enough does not carry the Active System brand. Go figure.

There are four sizes of Active Infrastructure stacks

The big difference between the vStarts and the Active Systems is the inclusion of Active System Manager 7.1. The main change with the 7.1 update is that it now supports Microsoft's Hyper-V hypervisor, which was a necessary condition to replace the vStart setups and the earlier DynamicOps-derived control freak. The 7.1 release also has configuration templates for Microsoft's SQL Server database.

Active System Manager 7.1 also has a broader support matrix outside of Dell's own hardware line – which is obviously not a requirement for the Active Systems, but may be desirable just the same for IT managers.

The 7.1 release supports PowerEdge R620 and R720 rack servers as well as the M1000e blade enclosure and its M420, M620, and M820 blade servers. Dell's Compellent SC8000 and EqualLogic PS61X0 arrays are also supported, and so is the I/O Aggregator for its blade servers and its Force10 MXL, S4810, and PowerConnect 7024 switches; Cisco Systems' Nexus 5000 and Brocade Communications' 6510 switches are also now supported. Most of this hardware was not supported with the Active System 800, which was built on M620 blades, the I/O Aggregator, and the S4810 top-of-rack switch.

The Active System 50 is essentially the same as the vStart 50, while the Active System 200 is essentially the vStart 200 but with a Force10 S4810 10Gb/sec Ethernet switch. Dell has killed off the vStart 100 – meaning there is no Active System 100 – and the Active System 800 stays as it was announced last fall. The Active System 1000 is a slightly fatter configuration.

The precise feeds and speeds of these setups were not available at press time. The Active System 800 launched last fall was only available in the US – something that was not obvious at the launch – but today all four Active System setups are available globally. Basically, this is the real Active System debut. Prices start at $54,000 for the Active System 50.

Dell says that it is evaluating whether or not to include KVM as a certified hypervisor, which is something that Red Hat and Canonical would no doubt be keen on, but the company has not made a decision as yet.

In addition to the generic Active System family aimed at virty server workloads, Dell is also rolling out an Active Infrastructure for HPC Life Sciences Solution, which is a perfectly terrible name for something. What would be wrong with dropping the word "infrastructure" entirely from branding and calling it the Active System for Life Sciences? You have to keep these things simple.

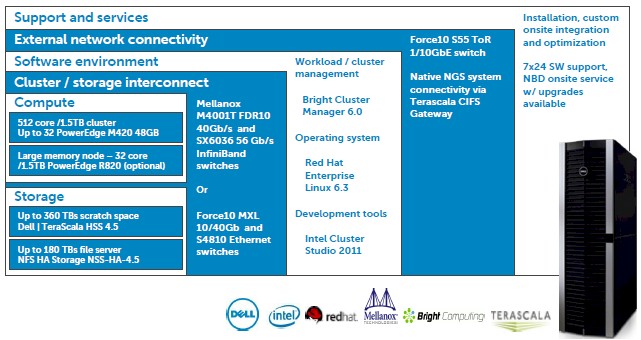

The components of the Active Infrastructure HPC stack

This setup crams 32 of Dell's PowerEdge M420 blade servers into a chassis with 48GB per node, which yields 9.4 teraflops of CPU-based double-precision floating point math and 1.5TB of main memory for genomics applications to frolic upon.
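For anyone who wants to check the sums, here is a rough back-of-the-envelope sketch in Python. The node count and 48GB per node come from the figures above; the processor details (two sockets per blade, eight cores per socket, a 2.3GHz clock, and eight double-precision flops per core per cycle) are assumptions made for illustration, since the precise feeds and speeds were not given. `NodeSpec` and `cluster_totals` are hypothetical names, not anything Dell ships.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSpec:
    """Per-node figures. Only memory_gb comes from the article;
    the CPU fields are ASSUMPTIONS used for illustration."""
    memory_gb: int
    sockets: int
    cores_per_socket: int
    clock_ghz: float
    dp_flops_per_cycle: int

    def peak_gflops(self) -> float:
        # Theoretical peak: sockets x cores x clock (GHz) x flops per cycle.
        return (self.sockets * self.cores_per_socket
                * self.clock_ghz * self.dp_flops_per_cycle)


def cluster_totals(node: NodeSpec, count: int) -> tuple[float, float]:
    """Return (total memory in TB, peak double-precision teraflops)."""
    memory_tb = node.memory_gb * count / 1024    # binary TB, as vendors quote RAM
    peak_tflops = node.peak_gflops() * count / 1000
    return memory_tb, peak_tflops


# Assumed M420 configuration; the CPU values are NOT stated in the article.
M420_ASSUMED = NodeSpec(memory_gb=48, sockets=2, cores_per_socket=8,
                        clock_ghz=2.3, dp_flops_per_cycle=8)

memory_tb, peak_tflops = cluster_totals(M420_ASSUMED, 32)
print(f"{memory_tb:.1f} TB memory, {peak_tflops:.1f} teraflops peak")
```

Under those assumptions the totals come out at the quoted 1.5TB and 9.4 teraflops. Bear in mind this is theoretical peak, not what a genomics code will actually sustain.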

If the workload demands it, Dell can swap in M820 large-memory nodes, which sport 1.5TB of memory each – you can only get eight of those big bad boys into the M1000e chassis, though.

Dell is offering the M4001T 40Gb/sec InfiniBand switch made by Mellanox Technologies for Dell's chassis in the Active System HPC setup or optionally its own Force10 MXL 10/40Gb/sec Ethernet switch. The system uses the TeraScala HSS 4.5 clustered file system, which is based on the Lustre file system. With some NFS file systems tossed in, the rack has 520TB of capacity for the nodes to use to store simulation data. The server nodes are configured with Red Hat Enterprise Linux 6.3 and use Bright Cluster Manager 6.0 to schedule work on the nodes.

The HPC life sciences cluster has a starting price of $650,000 per rack, all in. ®