Original URL: https://www.theregister.com/2009/12/18/apple_3d_patent/

Apple seeks patent on reality

3D revolution in the head

Posted in Personal Tech, 18th December 2009 09:02 GMT

Apple has filed a US patent for an immersive 3D display technology that allows you to vary your perspective on objects simply by moving your head.

It's a difficult concept to put into words when describing its use on a computer display, but immersive 3D is simply the way we view the world around us all the time. When you move your head from side to side, for example, objects shift in front of and behind each other - and if you want to see what's behind an object, you simply, well, look behind it.

You can't do that on your 2D computer display. Windows or other objects on your display retain their spatial relationships with each other no matter what angle you view them from - you can't peek behind a window without dragging it out of the way. Your display is passive - it has no idea from what angle you're viewing it.

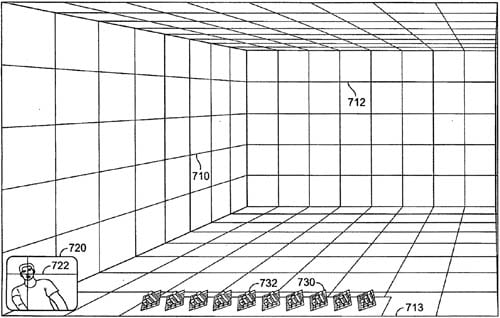

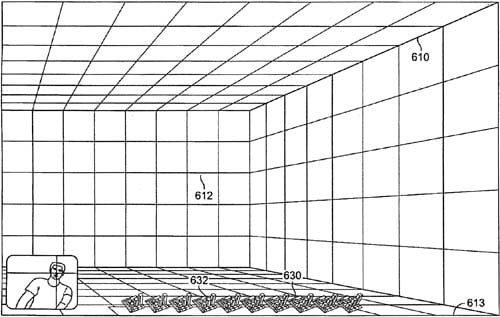

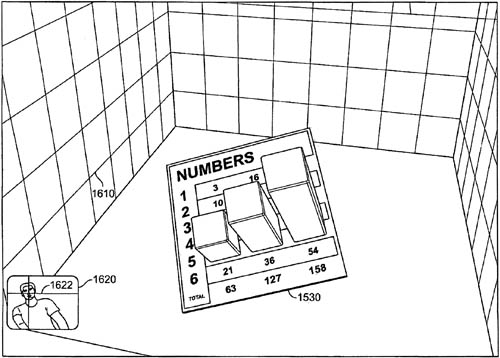

When viewing the inside of a box from the left, your perspective is quite different...

...than when you're viewing the same box from the right.

To know how to vary the spatial relationships of the objects on a display in order to portray immersive 3D, your computer needs to know where your head is - or, as we used to say back in the psychedelic 60s, where your head is at. Doing so uses a technology called, naturally enough, head tracking.
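The geometry behind that kind of head-coupled display can be sketched in a few lines. This is a minimal illustration, not Apple's method: it assumes the screen is the plane z = 0, that some head tracker already reports the eye position, and it simply intersects the line from the eye to each object with the screen plane. `Vec3` and `project_to_screen` are hypothetical names chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float


def project_to_screen(point: Vec3, eye: Vec3) -> tuple[float, float]:
    """Return where `point` appears on the screen plane z = 0, seen from `eye`.

    Assumed coordinates: the screen is the plane z = 0, the viewer's eye has
    eye.z > 0, points with z < 0 sit "behind" the glass and points with
    0 < z < eye.z float "in front" of it, nearer the viewer.
    """
    if eye.z <= 0:
        raise ValueError("eye must be in front of the screen (eye.z > 0)")
    if point.z >= eye.z:
        raise ValueError("point must be in front of the viewer (point.z < eye.z)")
    # The line eye + t * (point - eye) crosses the plane z = 0 at this t.
    t = eye.z / (eye.z - point.z)
    return (eye.x + t * (point.x - eye.x), eye.y + t * (point.y - eye.y))


if __name__ == "__main__":
    far = Vec3(0, 0, -20)      # 20 units behind the glass
    on_glass = Vec3(0, 0, 0)   # drawn on the screen surface itself
    near = Vec3(0, 0, 20)      # 20 units in front of the glass
    for head_x in (-10, 0, 10):
        eye = Vec3(head_x, 0, 60)  # assumed head-tracker output, 60 units away
        print(head_x, [project_to_screen(p, eye)[0] for p in (far, on_glass, near)])
```

With the head moved 10 units to the right, the far point is drawn at 2.5 and the near point at -5.0, while the point on the glass stays at 0.0: distant objects follow your head and near ones slide against it, which is exactly the parallax that lets you peek behind things. Points with positive z are the ones that seem to float out of the display toward you.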

If you're not familiar with how head-tracking works or how impressive it can be in action, Johnny Chung Lee of Carnegie Mellon University can help. Two years ago he used a Wii remote and sensor bar to create an exceptional - and, at over 7.6 million YouTube views, exceptionally popular - video that explains the principles and provides a rather eye-opening demonstration. It starts slow, but hang in there - the rewards begin at around 2:45.

Head tracker

Head tracking is at the core of Apple's latest 3D patent, prosaically entitled "Systems and Methods for Adjusting a Display Based on the User's Position". We say 'latest' patent because Apple has been investigating 3D interfaces for some time. Almost exactly one year ago, for example, The Reg reported on an Apple patent filing entitled "Multi-Dimensional Desktop". That filing, however, didn't benefit from head-tracking, and the user interface that it described was merely a 2D representation of a 3D space - the objects in it maintained their spatial relationships no matter where your head was.

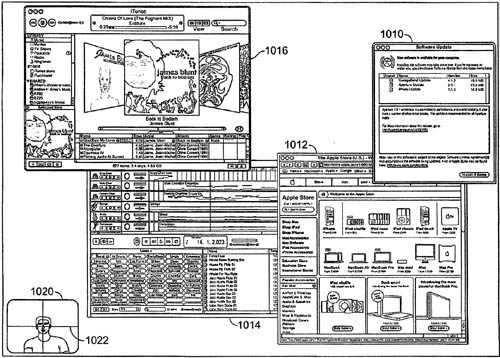

Today, you view a window-filled display from the center, but...

...in the future you may only need to tilt your head to see the same windows in a different spatial relationship.

Thursday's patent - which was originally submitted in June 2008 - goes much further. It creates an immersive 3D representation of a space complete with objects that can be either within that space or extend beyond it and appear to the user to be "outside" the display - that is, closer to the user than is the physical surface of the display itself.

And unlike in Lee's Carnegie Mellon video, the user does not necessarily need to wear or be equipped with some sort of signal-generating or receiving device. As the filing states in pure patentese, "The sensing mechanism may be operative to detect the user's position using any suitable sensing approach, including for example optically (e.g., using a camera or lens), from emitted invisible radiation (e.g., using an IR or UV sensitive apparatus), electromagnetic fields, or any other suitable approach."
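With a camera, the sensing problem becomes estimating where the viewer's head is from what the camera sees. One common approach, sketched here purely as an assumption since the filing does not say how Apple would do it, is a pinhole-camera estimate from a face detector's bounding box: the wider the face appears, the closer it is. `FaceBox` stands in for the output of a hypothetical face detector, and the 60-degree field of view and 14 cm face width are assumed values.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class FaceBox:
    """Face bounding box in pixels, as returned by some (hypothetical) detector."""
    left: float
    top: float
    width: float
    height: float


def head_position(box: FaceBox, frame_w: int, frame_h: int,
                  hfov_deg: float = 60.0, face_width_cm: float = 14.0):
    """Estimate the head's (x, y, z) in cm relative to the camera.

    Pinhole model: apparent size is inversely proportional to distance.
    Sign convention assumes a mirrored (selfie-style) frame, so +x is the
    viewer's right and +y is up.
    """
    f = (frame_w / 2) / math.tan(math.radians(hfov_deg) / 2)  # focal length in pixels
    z = f * face_width_cm / box.width          # distance from the camera
    cx = box.left + box.width / 2              # face center in the image
    cy = box.top + box.height / 2
    x = (cx - frame_w / 2) * z / f             # back-project the pixel offset
    y = (frame_h / 2 - cy) * z / f             # image rows grow downward, so flip
    return x, y, z
```

A face centered in a 640x480 frame yields x = y = 0, and a face that appears twice as wide is estimated to be half as far away. The resulting position is the kind of eye coordinate a head-coupled renderer would consume.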

The inclusion of a camera among those possible sensing devices leads to a second trick beyond modifying displayed objects' spatial relationships: bringing images of the user's surroundings into the display itself.

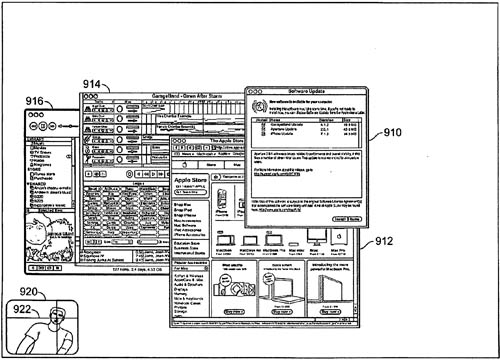

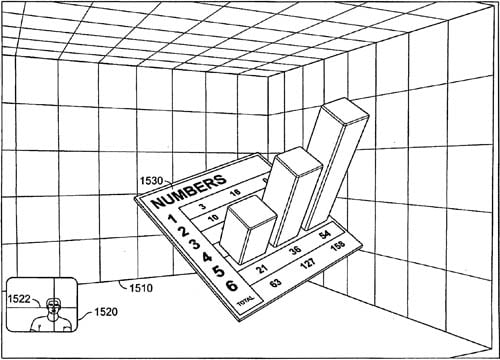

3D charts may actually have a function other than decoration...

...if you can easily examine them from different angles.

As the filing puts it, the system "may detect the user's environment and map the detected environment to the displayed objects." In doing so, the system could, for example, display a reflective object with the user's environment reflected upon it.
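Reflecting the user's surroundings on a displayed object is essentially environment mapping. A minimal sketch, assuming the webcam frame can stand in for the environment, that the surroundings are distant enough for direction alone to matter, and that the frame is mirrored: reflect the viewing ray about the surface normal, then look the reflected direction up in the camera image. The function names and the 60-degree field of view are illustrative assumptions, not details from the filing.

```python
import math

Vec = tuple[float, float, float]


def reflect(d: Vec, n: Vec) -> Vec:
    """Mirror direction d about the unit surface normal n: r = d - 2 (d . n) n."""
    dot = sum(a * b for a, b in zip(d, n))
    return tuple(a - 2.0 * dot * b for a, b in zip(d, n))


def env_pixel(r: Vec, width: int, height: int, hfov_deg: float = 60.0):
    """Look up a reflected direction in a webcam frame used as the environment map.

    Assumptions (illustrative only): the camera sits at the display and faces
    the user along +z, the frame is mirrored like a selfie preview, and the
    surroundings are far enough away that only direction matters.
    Returns integer (column, row), or None if the camera cannot see that way.
    """
    rx, ry, rz = r
    if rz <= 0:
        return None  # reflected ray heads into the display, away from the camera
    f = (width / 2) / math.tan(math.radians(hfov_deg) / 2)  # focal length in pixels
    u = width / 2 + f * rx / rz
    v = height / 2 - f * ry / rz  # image rows grow downward
    if 0 <= u < width and 0 <= v < height:
        return int(u), int(v)
    return None  # outside the camera's field of view
```

A viewer looking straight into a flat surface that faces the screen (normal (0, 0, 1)) gets the reflected direction (0, 0, 1) and samples the center of the camera frame, roughly their own face, which is what a real mirror would show. Steeper reflections fall outside the camera's view and would need some fallback.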

In addition, a database of object-oriented metadata could prompt the system to perform transformations upon a recognized camera-viewed object based on predetermined parameters. You could, for example, instruct your computer that when it recognized you it should perform a mild Gaussian blur to smooth your wrinkles, apply a translucent #E7B9A0-colored layer to give your skin a just-back-from-the-islands glow, then display your immersive 3D software self to your actual 3D liveware self as an onscreen avatar with digitally enhanced wholesomeness. ®
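Tongue in cheek as it is, that touch-up is an ordinary two-step image pipeline: a Gaussian blur followed by alpha-blending a flat color layer. A minimal pure-Python sketch, assuming the recognized face arrives as a small RGB image stored as nested lists; the function names, the sigma of 1.0 and the 20 percent opacity are illustrative choices, not anything in Apple's filing, and a real implementation would hand this to an image library or the GPU.

```python
import math

Pixel = tuple[float, float, float]
Image = list[list[Pixel]]


def gaussian_kernel(sigma: float) -> list[float]:
    """1D Gaussian weights out to 3 sigma (sigma > 0), normalized to sum to 1."""
    radius = max(1, math.ceil(3 * sigma))
    weights = [math.exp(-(i * i) / (2 * sigma * sigma)) for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def _blur_pass(img: Image, kernel: list[float], horizontal: bool) -> Image:
    """Convolve every RGB channel along one axis, clamping samples at the edges."""
    h, w = len(img), len(img[0])
    r = len(kernel) // 2
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            acc = [0.0, 0.0, 0.0]
            for i, weight in enumerate(kernel):
                off = i - r
                yy = y if horizontal else min(max(y + off, 0), h - 1)
                xx = min(max(x + off, 0), w - 1) if horizontal else x
                for c in range(3):
                    acc[c] += weight * img[yy][xx][c]
            row.append(tuple(acc))
        out.append(row)
    return out


def blur(img: Image, sigma: float = 1.0) -> Image:
    """Separable Gaussian blur: one horizontal pass, then one vertical pass."""
    k = gaussian_kernel(sigma)
    return _blur_pass(_blur_pass(img, k, horizontal=True), k, horizontal=False)


def hex_to_rgb(code: str) -> tuple[int, int, int]:
    """Parse a '#RRGGBB' string into integer channels."""
    code = code.lstrip("#")
    return tuple(int(code[i:i + 2], 16) for i in (0, 2, 4))


def tint(img: Image, color: str = "#E7B9A0", alpha: float = 0.2) -> Image:
    """Alpha-blend a translucent flat color over every pixel: (1 - a) * p + a * c."""
    tone = hex_to_rgb(color)
    return [[tuple(round((1 - alpha) * p[c] + alpha * tone[c]) for c in range(3))
             for p in row] for row in img]
```

Applied as `tint(blur(face), alpha=0.2)`, where `face` is a hypothetical cropped camera image, the blur softens fine detail and the tint pulls every pixel 20 percent of the way toward #E7B9A0. Blurring first keeps the tint layer itself crisp and uniform.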