Original URL: https://www.theregister.com/2008/02/04/review_amd_radeon_hd_3870_x2/

AMD ATI Radeon HD 3870 X2 dual-GPU graphics card

Do two GPUs equal double the value?

Posted in Channel, 4th February 2008 12:02 GMT

Review Everything about the Sapphire ATI Radeon HD 3870 X2 graphics card is big, including the model name, so we’re going to stick to calling it the X2 here.

The principle behind the X2 is simple enough. AMD's ATI Radeon HD 3870 is a decent graphics card but it's having a job competing with Nvidia's GeForce 8800 family, which is the reason why it was priced at just £150. So how do you increase the performance of the HD 3870? That's easy - you gang up two of them in CrossFire. But what if you don’t want to stuff your PC case with two double-slot graphics cards?

Sapphire's ATI Radeon HD 3870 X2: one board, two GPUs

The answer is CrossFire on a single graphics card, and here’s the AMD recipe for success. Take two HD 3870 chips and two batches of 512MB of GDDR 3 memory. Add a PCI Express 1.1 bridge chip, mix the whole lot together on a 263mm-long graphics card and - bingo - you’ve got the X2.

You won’t be surprised to learn that the specification of the X2 bears an uncanny resemblance to a pair of HD 3870s while the appearance is very much like a 3870 with an extra couple of inches tacked on the length. While Sapphire’s first take on the X2 is a reference design, Asus has done something that is most unusual by producing an X2 with four DVI ports.

The X2's GPUs are made using the same 55nm production process as the HD 3870. They support DirectX 10.1 and Shader Model 4.1, and each chip has 320 Stream Processors. That’s 640 Stream Processors in total with a transistor count that has climbed past 1.3 billion. Each GPU has 512MB of GDDR 3 memory to itself, connected to its own 256-bit controller, so there’s 1GB in total.

The card is longer than a regular HD 3870 board, but it looks very similar and has the same beefy heatsink along the full length of the card, with a dust-buster fan at the far end that draws cooling air from inside your PC case. The air is blown through the heatsink and exhausts through the vented bracket to the outside world.

The core speed of the X2 has been increased from the HD 3870's 775MHz to 825MHz, while the memory speed has decreased from 2250MHz to 1800MHz. The memory controller supports both GDDR 3 and GDDR 4 so it would be no surprise if a graphics card manufacturer were to come up with an X2 with 2GB of fast memory. Whether it would make any sense is a completely different question.
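As a rough sanity check on what the slower memory costs, peak memory bandwidth can be estimated from the bus width and the data rate. The sketch below assumes the quoted 2250MHz and 1800MHz figures are effective data rates, and that the HD 3870 uses the same 256-bit bus as each GPU on the X2. The function name is ours, not anything from AMD.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, effective_mhz: float) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_mhz * 1e6 / 1e9


# Assumption: both figures are effective (data-rate) clocks on a 256-bit bus.
hd3870 = peak_bandwidth_gb_s(256, 2250)
x2_per_gpu = peak_bandwidth_gb_s(256, 1800)

print(f"HD 3870:    {hd3870:.1f} GB/s")
print(f"X2 per GPU: {x2_per_gpu:.1f} GB/s")
print(f"Reduction:  {(1 - x2_per_gpu / hd3870) * 100:.0f} per cent")
```

On those assumptions each X2 GPU has about a fifth less memory bandwidth than a standalone HD 3870, which the 50MHz core speed bump only partly offsets.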

Using a PCI Express 1.1 bridge chip between the two HD 3870 GPUs seems like an odd move as it would appear to be a bottleneck in performance. Nvidia did something similar with its nForce 780i chipset, in which most of the PCI Express is controlled by an nForce 200 chip that also supports PCI Express 1.1. It's clear that both AMD and Nvidia feel that the older standard has plenty of bandwidth headroom at present.
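To put a number on the bottleneck worry, here is a minimal sketch of the theoretical per-direction bandwidth of a PCI Express 1.1 link against a 2.0 link. The transfer rates (2.5GT/s and 5GT/s per lane) and the 8b/10b encoding are standard figures for those generations. The 16-lane width is our assumption, as the review does not state how wide the bridge's links are.

```python
def pcie_bandwidth_gb_s(gt_per_s: float, lanes: int) -> float:
    """Usable bandwidth per direction in GB/s, after 8b/10b encoding."""
    encoding_efficiency = 8 / 10  # 8 data bits for every 10 bits on the wire
    bits_per_s = gt_per_s * 1e9 * encoding_efficiency * lanes
    return bits_per_s / 8 / 1e9


LANES = 16  # assumption: link width is not given in the review

gen1 = pcie_bandwidth_gb_s(2.5, LANES)  # PCI Express 1.x
gen2 = pcie_bandwidth_gb_s(5.0, LANES)  # PCI Express 2.0

print(f"PCIe 1.1 x{LANES}: {gen1:.1f} GB/s per direction")
print(f"PCIe 2.0 x{LANES}: {gen2:.1f} GB/s per direction")
```

These are ceilings rather than measured throughput, so they say how much headroom is on paper, not whether the X2 ever gets close to using it.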

One change for the X2 compared to the HD 3870 is the addition of a second power connector. Just like the old HD 2900, there’s one six-pin and one eight-pin connector, and while you can use a pair of six-pin PCI Express connectors this has a downside. If you want to use the Overdrive part of the Catalyst drivers to overclock your X2 then you need to use an eight-pin feed. Your reviewer has a fair few high-end power supplies kicking around, among them three that have four six-pin connectors for all manner of SLI and CrossFire configurations, but none has an eight-pin connector for the graphics. The odds are that you’re in the same boat, so if you’re considering an X2 and fancy a spot of overclocking then you’d better budget about £150 for a new, suitable power supply.

Installing the X2 was an absolute doddle as the CrossFire element is permanently enabled so you plug in the card, install the drivers - beta in this case - and you’re good to go.

We tested the Sapphire card on an Abit IP35 Pro with an Intel Core 2 Q6600 processor and 2GB of Corsair memory all running Windows Vista Ultimate Edition. In an ideal world, we’d have compared the X2 with an HD 3870 but we didn’t have one to hand. What we did have, however, was a pair of PowerColor HD 2900 XT cards. These boys deliver the same performance as the HD 3870 although they consume far more power. The comparison isn’t completely fair as the PowerColors have 1GB of memory on each card instead of the usual 512MB but it's close enough to act as a useful test.

On the Nvidia front, we’d have liked to use a GeForce 8800 GTX as it costs about the same as the X2 but once again we let the side down as we only had an 8800 GT on the shelf. This too is a reasonable comparison as the GT delivers a fair proportion of the performance of its big brothers, but that’s what happens when a review sample arrives at the weekend. As we recently reviewed the Asus EN8800GT 1GB, we gave it an outing alongside the faster EN8800GT TOP.

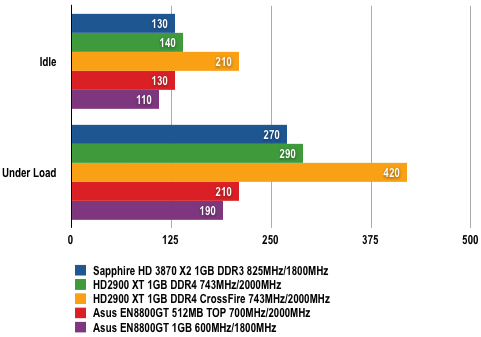

Power Draw Results

Power draw in watts (W)

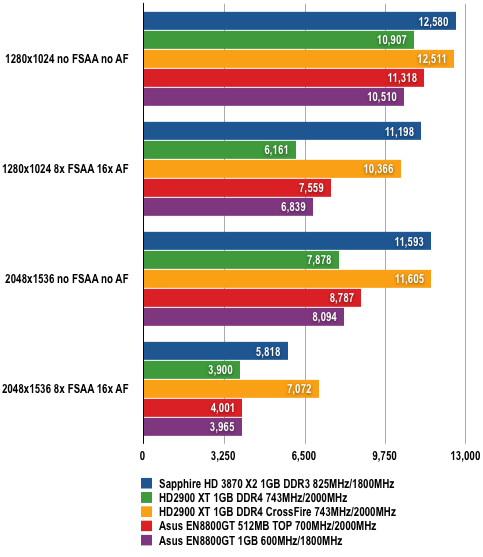

In 3DMark06, the X2 had very similar performance to the pair of 2900 XTs in CrossFire, although the figures diverge at extreme resolutions with anti-aliasing (AA) enabled. This may be due to the extra memory on the 2900 XTs.

3DMark06 Results

Longer bars are better

The extra performance of CrossFire grows as the load increases, so at a resolution of 1280 x 1024 there’s not much advantage to be gained. Add in AA, and CrossFire gives you a 60 per cent increase in performance, and when the resolution is increased to an astronomical 2048 x 1536 with AA the gain rises to 75 per cent.

In the same tests, the GeForce 8800 GT models delivered performance that started the same as a single HD 2900 XT. As the workload increased, it became clear that the X2 had the legs on the 8800 GT to the tune of 50 per cent, which seems about right as the X2 costs 50 per cent more than an 8800 GT.
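The "seems about right" arithmetic can be spelled out as performance per pound relative to the 8800 GT. This is only a sketch using the two round 50 per cent figures quoted above, not measured frame rates or actual prices, and the helper name is our own.

```python
def relative_value(perf_ratio: float, price_ratio: float) -> float:
    """Performance per pound relative to a baseline card (1.0 = same value)."""
    return perf_ratio / price_ratio


# X2 versus 8800 GT under heavy load: 50 per cent faster, 50 per cent dearer.
x2_vs_gt = relative_value(perf_ratio=1.5, price_ratio=1.5)

print(f"X2 value relative to 8800 GT: {x2_vs_gt:.2f}")
```

A result of 1.00 means the extra money buys a proportionate amount of extra performance. At lighter loads, where the gap is smaller than 50 per cent, the ratio drops below 1.0 and the GT is the better buy per pound.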

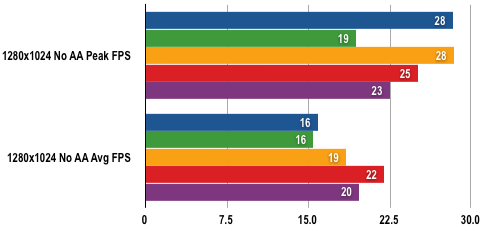

When we tested the graphics cards in Crysis, the most noticeable point was that CrossFire works. When we first played Crysis it didn’t support CrossFire, so a second AMD graphics card actually slowed things down. Thankfully, the 1.1 patch sorts out this glaring flaw. The problem is that the HD 2900 XT cards in CrossFire danced all over the X2 in a way that they simply couldn’t manage in 3DMark06. Our conclusion is that the X2 performance should be the same as the two 2900s in CrossFire and the fact that it is not is down to the beta drivers. Let’s face it, there’s no reason for the 2900s to be faster than the X2, yet the figures show clearly that they are.

Crysis Results

Very High Quality Setting at 1280 x 1024

Longer bars are better

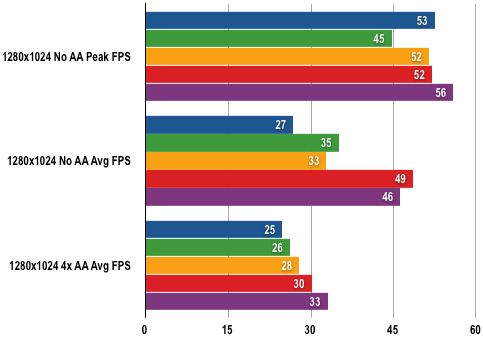

Medium Quality Setting at 1280 x 1024

Longer bars are better

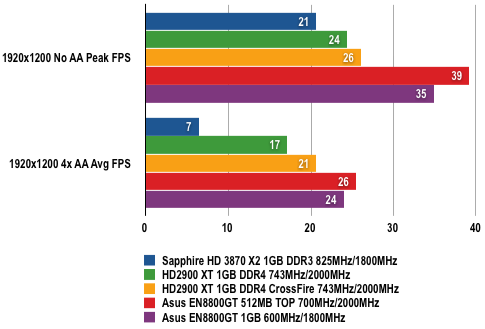

Medium Quality Setting at 1920 x 1200

Longer bars are better

If we're correct and AMD sorts the X2 graphics drivers in the next few weeks then it looks like AMD finally has an answer for the GeForce 8800 steamroller, provided that you’re prepared to install a monster graphics card and probably a new PSU too instead of the more elegant 8800 GTX.

Verdict

If – and it’s a big if – you’re thinking of using a pair of Radeon HD 3870 cards in CrossFire then the X2 is a better prospect and costs the same amount of money. The problem is that CrossFire can be erratic whereas an Nvidia GeForce 8800 GTX is a cast-iron certainty for delivering the gaming goodies.