Original URL: https://www.theregister.com/2006/05/30/jim_gray/

Deconstructing databases with Jim Gray

A genuine guru

Posted in Software, 30th May 2006 10:15 GMT

Interview Most companies have a tame "guru" - someone presented as a world authority on the subject in question and so amazingly intelligent that they are above the tacky world of commercialism.

Sadly, many such "gurus" merely debase the term and turn out to be exactly what you expect - mouthpieces for the marketing department.

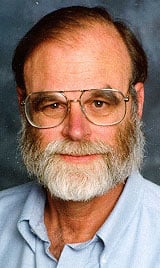

One of the great exceptions to this rule is Jim Gray, who has managed to combine an outstanding academic career (you don't win an ACM Turing Award for your attendance record) with a very practical one.

During the 1970s he was responsible for some of the most fundamental work on database theory and practice. To put this into context, it's because of Jim's work that we understand enough about transactions to be able to run multi-user databases effectively.

Over the years Jim has worked for DEC, Tandem and IBM. He is currently the head of Microsoft's Bay Area Research Centre and a Microsoft distinguished engineer. This is a man for all seasons - completely unpartisan. No matter who currently provides his pay cheque, he tells it as he sees it. I've heard him say, talking of the company for which he was then working: "We screwed up big time; it's as simple as that." This ought to make him a PR nightmare, but his standing in the community means that it has the opposite effect. People trust what he says.

If all that wasn't enough, Jim Gray is further renowned for being a humanist. One of the most decent, honest, upright, pleasant people you could hope to meet. After speaking at conferences, he is mobbed by attendees, but always finds time to talk to them and leaves each one feeling as if they have just been chatting to a friend.

Even hardened cynical computer journalists treat this guy with serious respect. Several of us were privileged to talk to him recently. In true guru style, he fielded every question without hesitation, deviation or repetition.

Transactions across services

On the topic of running transactions across services, what are the implications for transaction integrity?

As you may know, I spent decades working on the problem of getting transaction integrity to work at all; and on ACID [Atomicity, Consistency, Isolation and Durability - the four essential properties that a transaction must have - Ed] properties and how they can be implemented efficiently. Throughout this there has always been the question of when one should use transactions and when they are inappropriate.

One of the things that we worked on for decades is a transactional workflow metaphor. The basic idea is that if x is a good idea in computer science, then recursive x is better. So if we have transactions that are flat and are units of work, we should be able to nest them and parenthesise them and have sub transactions and sub sub sub transactions.

Frankly, over 25 years there's been a lot of work in this area and not much success. Workflow systems have, by and large, come to the conclusion that what the accountants told us when we started was correct - the best thing you can do is have compensation if you run a transaction and something goes wrong. You really can't undo the transaction, the only thing you can do is run a new transaction that reverses the effects of that previous transaction as best you can.

Often it is not possible to reverse the effects of an action: if you drill a hole in a piece of metal, you can't un-drill the hole. If you fire a missile, the abort is to destroy the missile. It's Carnot's [Sadi Carnot, French engineer (1796-1832) - Ed] second law of thermodynamics that says you can't run time backwards all the time, though you can in some situations. If it's just a North and South pole on a disk; if you made the pole North you can make it South. So long as you can keep everything inside the system things are reversible, but the minute things leak out of the system (drilling the hole in the metal is an example of something leaking out) then the compensation action for that has to be something outside the computer's world.

So now, we come to a world which has a loosely coupled service-oriented architecture and people are asking the question: "What is the role of transactions in service-oriented architecture and internet scale distributed computing?"

So we have the notion of a transaction (and also compensation) and we also have the notion of a workflow. The Microsoft technology for this is BizTalk. There are many other people who have products that are workflow scripting languages; that allow you to declare transformations and also compensations associated with the transformation. If something goes wrong with the transaction, it goes back and applies the compensation action as best it can. However one of the results of compensation may be "I'm sorry I can't compensate for that transaction, some human is going to have to intervene to deal with this case", and I think that is the best story I can give.
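The compensation idea Gray describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how BizTalk or any other workflow product works: the names `run_workflow`, `CompensationFailed` and the example steps are all hypothetical. Each step pairs a forward action with a compensating action; if a step fails, the completed steps are compensated in reverse order, and any step that cannot be compensated is reported for a human to deal with.

```python
from typing import Callable, List, Tuple


class CompensationFailed(Exception):
    """Raised when a compensating action cannot reverse its step."""


# A step is (name, forward action, compensating action). All names are hypothetical.
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_workflow(steps: List[Step]) -> Tuple[str, List[str]]:
    """Run steps in order; on failure, compensate completed steps in reverse.

    Returns a status and the names of steps that need human intervention.
    """
    completed: List[Step] = []
    for step in steps:
        try:
            step[1]()               # run the forward action
        except Exception:
            break                   # stop and start compensating
        completed.append(step)
    else:
        return "committed", []      # every step succeeded

    unresolved: List[str] = []
    for name, _, compensate in reversed(completed):
        try:
            compensate()            # a new action that reverses the old one
        except CompensationFailed:
            unresolved.append(name) # software cannot fix this one
    return ("needs_human" if unresolved else "compensated"), unresolved


# Example: a debit can be reversed, a drilled hole cannot.
ledger = {"balance": 100}

def debit(): ledger["balance"] -= 30
def credit_back(): ledger["balance"] += 30
def drill_hole(): pass
def undrill(): raise CompensationFailed("cannot un-drill a hole")
def ship(): raise RuntimeError("carrier unavailable")
def cancel_shipment(): pass

status, unresolved = run_workflow([
    ("debit", debit, credit_back),
    ("drill", drill_hole, undrill),
    ("ship", ship, cancel_shipment),
])
```

Here the shipping step fails, the debit is reversed inside the system, and the drilled hole ends up on the list of things a person has to sort out.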

Transactions are wonderful inside an organisation, they simplify things, they are all about making error handling easier, but when you get to internet scale and go across architectural boundaries error handling becomes much more difficult. When physical resources are involved, error handling becomes much more difficult because certain operations are not reversible. In those situations, you do the best you can in software and at a certain point, you have to involve people.

Graphical Processor Units or GPUs

What are your views on using Graphical Processor Units (GPUs) for data processing?

Unbeknownst to most of us, the graphics community has been building vector co-processors and putting them into every laptop, every desktop. At present, the typical vector co-processor has about 40 times the CPU power - instruction per second power - of the CPU. It also has about ten times the memory bandwidth.

Intel and AMD talk about multi-core; well, a typical GPU has a hundred cores, a hundred pipelines and is doing all this texture mapping in parallel. So if you could figure out how to program the GPU you could go a lot faster, and the coda to this is that the GPU continues to double at a much faster rate of speed than CPUs. So it is incumbent upon us software guys to use the instructions where we find them, and we are beginning to find lots and lots of instructions in the GPU.

There is a Microsoft research project, which has published a technical report, I think two months ago, about Accelerator [Microsoft Tech Report 2005-184 - Ed], a general purpose programming language that extends C# and allows you to program array-oriented operations on Nvidia and ATI chips. The language is generic; you can program not knowing which GPU you've got.

This project was working with some people at the University of North Carolina and they are graphics guys who know how to program GPUs and they came up with a novel algorithm for sorting. So the general thing we are doing is going methodically through the problems and seeing if we can map those problems to GPUs. I'm currently looking at processing satellite imagery and much of the satellite image processing fits GPUs beautifully because it is fundamentally pixel programming. My main role in working on that paper was that, in doing something like sorting, you have to balance I/O and CPU and memory utilisation. So it's a system-wide design and I helped the people at North Carolina with making sure that the I/O could deliver data quickly enough so that we weren't disk-bound. We had to use several disks in order to keep the system busy because a disk only gives you data at about 50MB per second and the GPU can sort at about 500MB per second.
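The disk-versus-GPU balance in that last point is simple arithmetic, shown here as a back-of-envelope Python sketch. It uses only the two rates quoted above and assumes, unrealistically, that disk bandwidth adds up perfectly and that nothing else (bus, controller, writing results back) gets in the way. The function name `disks_needed` is made up for the illustration.

```python
import math


def disks_needed(consumer_mb_per_s: float, disk_mb_per_s: float) -> int:
    """Minimum number of disks whose combined read rate matches the consumer.

    Assumes ideal striping with no controller, bus or write-back overhead.
    """
    return math.ceil(consumer_mb_per_s / disk_mb_per_s)


# Rates quoted in the interview: about 50MB/s per disk, about 500MB/s GPU sort.
disks = disks_needed(500, 50)
```

Under those idealised assumptions the ratio works out to ten disks; a real system would need to account for the overheads ignored here.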

What kind of applications would GPU processing suit?

Crypto would be a good application. In addition, many of the large data applications; like mapping applications or sensor applications. Of course, the most natural thing is any kind of the processing of cameras so if you can get Photoshop recast using the GPU instead of the CPU it would run a lot faster. Fundamentally highly parallel "do this to all the pixels" applications are good.

Of course, this doesn't fit within the x86 instruction set and it requires writing algorithms in a very different way; you have to think in a very different way. The bitonic sort [see a general definition of this term here - Ed] that we used with the GPUs really is radically different from any sort anyone has ever thought of before. It is massively parallel. You have to think of a single instruction stream, multiple data-stream architecture, and most people are used to the ALGOL, COBOL single instruction stream, single data-stream idea [and, in my experience, only a few such programmers can reliably code parallel processes, as Gray says later - Ed].
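For readers who have not met it, here is a minimal sequential Python sketch of the textbook bitonic sorting network. It is not the GPU code from the paper Gray mentions, and it assumes the input length is a power of two. The point to notice is that the pattern of comparisons depends only on the positions, never on the data, so every compare-and-swap in one pass of the inner `for` loop is independent of the others and could be done at the same time.

```python
def bitonic_sort(values):
    """Sort a sequence whose length is a power of two, using a bitonic network."""
    a = list(values)
    n = len(a)
    if n & (n - 1):
        raise ValueError("length must be a power of two")

    k = 2                      # size of the bitonic sequences being merged
    while k <= n:
        j = k // 2             # distance between compared elements
        while j > 0:
            # Every iteration of this loop touches a disjoint pair,
            # so a parallel machine could run them all in one step.
            for i in range(n):
                partner = i ^ j
                if partner > i:
                    ascending = (i & k) == 0
                    if ascending:
                        out_of_order = a[i] > a[partner]
                    else:
                        out_of_order = a[i] < a[partner]
                    if out_of_order:
                        a[i], a[partner] = a[partner], a[i]
            j //= 2
        k *= 2
    return a


sorted_values = bitonic_sort([7, 3, 6, 1, 8, 2, 5, 4])
```

Run sequentially like this it does more comparisons than quicksort would; the attraction is purely that the work within each pass can be spread across many pipelines.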

Why is this taking so long to be accepted?

Remember the year 2000 problem? Looking back it's amazing people had to work so hard changing two digit to four digit dates. Many of us use libraries that already exist, many of us use algorithms that already exist, we all know that weighted sorting is pretty good and quick sort is pretty good. Then somebody comes along and says "No, no, no you've got to use bitonic sort and you've got to figure out how to program everything in parallel and incidentally the memory model is different and you just have to think differently, and oh, incidentally they vary from one machine to the next". So you say, "Oh, excuse me, how does this work on a server? I have a multi-threaded system and how does the GPU get shared among the multiple threads?"

There are a few details like that that haven't, quote "been worked out". About two weeks ago IBM came out with a Cell architecture which is a fairly radical departure from... it's not just a GPU any more, it's an array of array processors and so we are seeing an architectural blossoming right now which could make life so confusing for people.

You can see why people end up saying, "You know, I'm a C programmer, I'm just going to stick to my good old C programs and I'm sure they'll be working in a few years and if I invest in one of these bizarre architectures they are likely to have evaporated and I'll have wasted all that effort."

Another problem is that people find it hard to think in parallel. If you read a cookbook, it tells you how to make things and there's no parallelism inside the cookbook. They never tell you to do things at the same time...you know, each step is do this, do this, do this, it's all sequential. Knitting is another very sequential process.

It's easier to understand sequential programs than parallel programs because the state space is much smaller. If you have parallel programs then the so-called Cartesian product - the product of the number of states in each of the programs - is much higher. For example, if there are five programs then you don't have five times as many states; you have two to the fifth times as many states, which is 32 times as many. So it's much harder to think about parallel programming, much harder to get parallel programs right.
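The arithmetic can be checked with a short Python sketch. It assumes, as the figure of two to the fifth implies, that each program has just two states; the state names and the function `joint_states` are invented for the illustration.

```python
from itertools import product


def joint_states(programs):
    """Cartesian product of the per-program state sets."""
    return list(product(*programs))


# Assumption: each of the five programs is either "idle" or "busy".
two_states = ("idle", "busy")
five_programs = [two_states] * 5

combined = joint_states(five_programs)
count = len(combined)          # 2 * 2 * 2 * 2 * 2

# With more states per program the growth is steeper still: 10 ** 5.
bigger = len(joint_states([range(10)] * 5))
```

Each entry in `combined` is one combination the programmer has to reason about, which is why the count multiplies rather than adds.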

Do you think compilers would ever get intelligent enough to parallelise our code for us, or would it always require human intelligence?

I hope it will. Actually, I'm convinced as far as human intelligence is concerned it's hopeless. That's what scares me so much. So what it's actually going to require is a simple programming model. I think a dataflow programming model is where we're headed and that happens to be the way you program GPUs. You program them as dataflow. If you look at the GPU sort, most of it is data flow.

Parallel programming is hard because of the state space explosion, so you have to think in general terms. You have to be able to say "do this general thing to all those objects", and you have to generalise and think about the general case. Whereas in sequential programming you have narrowed everything down to a very specific case and you know at a certain point in the program quite a bit about the state of this object.

If you look at SQL Server Integration Services it's dataflow programming. You write transforms as simple VB or C++ or C# transforms that take in data streams and spit out data streams. They are sequential programs but they run using pipeline and partition parallelism.
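The shape of that model can be sketched with Python generators. This is not the SQL Server Integration Services API; the transforms `parse`, `keep_large` and `to_upper`, the sample data and the threshold are all made up. Each transform is an ordinary sequential function from one stream to another, chaining them gives a pipeline, and running the same pipeline over separate slices of the input gives a simple form of partitioning.

```python
from concurrent.futures import ThreadPoolExecutor


def parse(lines):
    """Turn 'name, amount' text lines into row dictionaries."""
    for line in lines:
        name, amount = line.split(",")
        yield {"name": name.strip(), "amount": float(amount)}


def keep_large(rows, threshold):
    """Pass through only rows at or above the threshold."""
    for row in rows:
        if row["amount"] >= threshold:
            yield row


def to_upper(rows):
    """Upper-case the name field of each row."""
    for row in rows:
        yield {**row, "name": row["name"].upper()}


def pipeline(lines):
    """Chain the transforms: each one consumes a stream and emits a stream."""
    return list(to_upper(keep_large(parse(lines), 100.0)))


source = ["alice, 120.0", "bob, 15.5", "carol, 300.0"]

# Pipeline: rows flow through the three transforms one at a time.
result = pipeline(source)

# Partitioning: the same sequential pipeline runs on separate slices.
partitions = [source[0::2], source[1::2]]
with ThreadPoolExecutor(max_workers=2) as pool:
    partitioned = [row for part in pool.map(pipeline, partitions) for row in part]
```

None of the transforms knows or cares that it is being run in parallel, which is the attraction of the model.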

Real time analytics

We use databases: relational for storage and multi dimensional for analysis. But these are both simply logical ways of thinking about data - conveniences for the human rather than the machine. We currently hold these as separate data stores but will we ever move to a stage when a single store of data services both ways of looking at data? Surely, if we can find such a storage mechanism we will have reached the goal of real-time analytics?

Absolutely. In addition, there is the XML stuff which you can think of as XML documents but it's shredded inside and stored in formats that are convenient. There's the text data and the Microsoft research technologies are using bitmap indexes internally, keeping a pyramid of them to allow quick indexing to the text information. The data mining systems are actually storing data as data models and beginning to give you probabilistic reasoning about data, which I think is a really exciting development.

I think we end up representing the information in a variety of ways depending on what the need is and we take that information and replicate it. Typically, the multi-dimensional structure is a replica of underlying fact tables. You can think of it as index structures if you want to, but for many people, because actually they deal directly with the dimensional data, that's the reality and everything else is just irrelevant.
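To make the "replica of underlying fact tables" point concrete, here is a small Python sketch that derives a multi-dimensional summary from a handful of fact rows. It is a toy under stated assumptions, not how SQL Server Analysis Services stores anything: the fact rows, the dimension names and the function `build_cube` are invented.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical fact table: one row per sale.
facts = [
    {"region": "EU", "product": "disk", "sales": 10},
    {"region": "EU", "product": "gpu", "sales": 5},
    {"region": "US", "product": "disk", "sales": 7},
    {"region": "US", "product": "gpu", "sales": 3},
]


def build_cube(rows, dimensions, measure):
    """Pre-aggregate the measure for every combination of dimension values."""
    cube = defaultdict(int)
    for row in rows:
        # Visit every subset of the dimensions, from none (grand total) to all.
        for size in range(len(dimensions) + 1):
            for dims in combinations(dimensions, size):
                key = tuple((d, row[d]) for d in dims)
                cube[key] += row[measure]
    return dict(cube)


cube = build_cube(facts, ("region", "product"), "sales")

grand_total = cube[()]
eu_total = cube[(("region", "EU"),)]
disk_total = cube[(("product", "disk"),)]
us_gpu = cube[(("region", "US"), ("product", "gpu"))]
```

Everything in `cube` can be recomputed from `facts`, which is the sense in which the dimensional view is a replica, or an index, over the same information.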

SQL has gotten so huge, and there are so many different parts to it that there are people who have no idea what multi-dimensional data is. There are people who have no idea how the data mining models work, and are still experts. It is difficult to be cognisant of all the things that are in SQL Server because it's gotten to be so huge. I certainly have a hard time keeping up. ®