
Beyond WAR: How I bitchslapped Google

Bagging the sticky eyeball

WAR on the cloud 5: In part 4 I had a quick peek at the performance of Rackspace's CDN and liked what I saw. Now I'm starting to use the CDN with my main site, but I have discovered that I had plenty of other things to fix to make the CDN worthwhile and get my home page to load faster than Google's!

Speed matters

A fast-loading web page, especially the first page on a user's first visit, helps build confidence and makes that user more likely to stay. If you run a business-related site you should care about this: a site that runs like treacle gets in the way of delivering your proposition.

Page load speed is indeed now one of Google's metrics for ranking search results – and the need for speed is why I've been messing around with my private and public clouds rather than running everything off my office desk over cheap ADSL.

A page should render well within about 7s or visitors will give up. My long-term target is to have my pages complete loading in 100ms, but given that just one DNS lookup can take 300ms even when everything goes right, 1s to have everything essential on the user's screen is my working target for now.

Dirty laundry

There's a whole bunch of things to get right at once to ensure that a useful page loads fast across the planet and for common browsers, and especially on the user's very first visit to the site when that otherwise great weapon – cacheing – cannot yet help.

Here's a laundry list for web performance tuning:

- Eliminate spurious redirects (eg, from / to /index.jsp): my site was doing this on every visit, at a cost of up to several hundred milliseconds each time.

- Minimise use of external files to support the page such as .js and .css (eg, inline small ones).

- Minimise the size of pages/files sent down the wire (eg, optimise images, minify CSS/JS, allow gzip compression of HTML/CSS/JS/XML).

- Be careful where you place your JavaScript and CSS, as it can block loading and rendering of material even after it has hit the browser.

- Try to get the user to a site mirror geographically close to them to minimise latency, possibly using DNS tricks.

- Try to ensure quick (and few) DNS lookups, as these are often a major and difficult-to-improve part of page-load time.

- Optimise page creation and back-end support such as databases, with internal cacheing, streaming, threading, asynchronous loading/delivery, pre-computation, etc.

- Increase browser concurrency, ie, load many elements of the page in parallel rather than one after the other, using an external CDN (Content Delivery Network) or "sneaky" means such as alternate hostnames for the same server.

- Ensure that there is enough bandwidth to load each page quickly; again a CDN may help here.
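As an illustration of the "alternate hostnames" trick in the list above, here is a minimal sketch in Python. It is not the code behind my site: the hostnames and the `shard_url` helper are hypothetical, and it assumes that every alias resolves to the same server. The one point it is meant to show is that each asset should always map to the same hostname, otherwise the browser will fetch and cache the same file once per alias.

```python
import hashlib

# Hypothetical alias hostnames, all assumed to resolve to the same server.
SHARD_HOSTS = ["static1.example.com", "static2.example.com", "static3.example.com"]


def shard_url(path: str, hosts=SHARD_HOSTS) -> str:
    """Map an asset path to one alias hostname, always the same one for that path."""
    # Use a stable hash rather than Python's built-in hash(), which is
    # randomised per process for strings; a stable mapping means a given
    # asset keeps the same URL across restarts and stays cacheable.
    digest = hashlib.md5(path.encode("utf-8")).digest()
    host = hosts[digest[0] % len(hosts)]
    return f"http://{host}{path}"


if __name__ == "__main__":
    for asset in ["/img/logo.png", "/img/banner.jpg", "/css/site.css"]:
        print(asset, "->", shard_url(asset))
```

Keep the number of aliases small: each extra hostname is another DNS lookup on the first visit, which works against the DNS item in the same list.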

What tools!

I only (re)realised some of this while using the excellent and free tool http://www.webpagetest.org/ in conjunction with the PageSpeed add-on for Firefox. Look how far down the list "cloudy"/CDN issues are: it is only one (useful) piece of the puzzle.

If you can measure, you can manage (and optimise). Having been paid squillions over the years by banks to optimise their code, I know that without the right tools I'm stabbing in the dark; with them, my client may mistake me for a genius.

Having worked some of Rackspace's CloudFiles magic into my code and dealt with all the issues above, I was able to consistently beat or match Google's generic homepage load (www.google.com) for a DSL user, for example on one typical run:

My site: first byte time of 207ms, start of page render at 246ms, and all done in 811ms.

Google: first byte time of 208ms, start of page render at 336ms, and all done in 1379ms.

And mon.itor.us also reports performance as very close: my site response time at 170ms, Google at 167ms.

(However, Google's Webmaster Tools still ranks my site on average as slow, so I have more to do, especially if it is influencing my search ranking, though that metric may include ads which load long after the page itself is complete.)

All this in spite of my front page being rather less spartan than Google's, and not quite having the same DNS and network and server firepower as it does!

To err is human

I had, it seems, been getting some of these things at least slightly wrong for years, and I am even now redoing all my Last-Modified and ETag headers and my response to a browser's If-Modified-Since and If-None-Match to improve performance for all page loads after the first one.
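For readers who have not met these headers, the following is a rough Python sketch of the server-side logic involved, not my actual implementation. The function names are made up for illustration, the request headers are assumed to arrive as a plain dict with exactly these capitalisations, and weak ETags (the `W/` prefix) are ignored for simplicity. When the browser's cached copy is still good, the server can answer 304 Not Modified and skip sending the body.

```python
import hashlib
from email.utils import formatdate, parsedate_to_datetime


def make_validators(body: bytes, mtime: float) -> dict:
    """Build ETag and Last-Modified response headers for a resource."""
    return {
        # A quoted hash of the content serves as a strong ETag.
        "ETag": '"' + hashlib.sha1(body).hexdigest() + '"',
        # HTTP dates are always expressed in GMT.
        "Last-Modified": formatdate(mtime, usegmt=True),
    }


def is_not_modified(request_headers: dict, etag: str, mtime: float) -> bool:
    """Return True if a 304 Not Modified can be sent instead of the full body."""
    inm = request_headers.get("If-None-Match")
    if inm is not None:
        # If-None-Match takes precedence over If-Modified-Since when both are sent.
        candidates = [tag.strip() for tag in inm.split(",")]
        return inm.strip() == "*" or etag in candidates
    ims = request_headers.get("If-Modified-Since")
    if ims is not None:
        try:
            since = parsedate_to_datetime(ims).timestamp()
        except (TypeError, ValueError):
            return False  # unparseable date: play safe and send the full response
        # HTTP dates have one-second resolution, so compare whole seconds.
        return int(mtime) <= int(since)
    return False


if __name__ == "__main__":
    headers = make_validators(b"<html>hello</html>", 1_000_000_000.0)
    print(headers)
    print(is_not_modified({"If-None-Match": headers["ETag"]},
                          headers["ETag"], 1_000_000_000.0))
```

Hashing the body gives an ETag that changes only when the content does, which suits static files; for dynamically generated pages a cheaper version stamp may be preferable to hashing on every request.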

What webpagetest does is make some of the problems crystal clear.

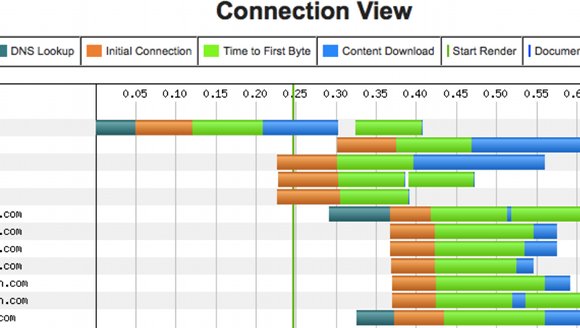

Here is a snippet from its Connection View where all the concurrent connections and load times and delays can be seen as well as, for example, the time to start to render the page for the user.

Next?

At this stage, because of the lack of control of such things as ambush bills and hotlinking, I'm putting only relatively small and unexciting images in the CDN (the CDN is serving ~150,000 per day for me at a little over 100MB/d, but probably costing me just something like £1 per month!)

My next step is to rejig my servers to be my own CDN for the more valuable and large – ie, bandwidth-hungry – images. These I can serve from whichever mirror happens to have decent bandwidth at that moment, with less concern about geography and latency.
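I have not built this yet, so what follows is only a sketch in Python of one way the choice of mirror might be made. Everything in it is an assumption: the `pick_mirror` helper, the 500kbps threshold, and the idea that each mirror reports its spare bandwidth as a number are all hypothetical.

```python
import random


def pick_mirror(free_kbps: dict, min_kbps: float = 500.0) -> str:
    """Choose a mirror hostname, favouring those with more spare bandwidth.

    free_kbps maps hostname -> currently spare bandwidth in kbps and is
    assumed to be non-empty.
    """
    # Keep only mirrors that currently report enough headroom.
    usable = {host: bw for host, bw in free_kbps.items() if bw >= min_kbps}
    if not usable:
        # Nothing has headroom: fall back to the least-bad mirror.
        return max(free_kbps, key=free_kbps.get)
    hosts = list(usable)
    # A weighted random choice spreads load in proportion to spare capacity.
    return random.choices(hosts, weights=[usable[h] for h in hosts], k=1)[0]


if __name__ == "__main__":
    # Hypothetical mirrors and made-up bandwidth figures.
    mirrors = {"uk.example.com": 2000.0, "us.example.com": 800.0, "au.example.com": 100.0}
    print(pick_mirror(mirrors))
```

Choosing at random in proportion to spare capacity, rather than always taking the single best mirror, avoids stampeding one server when the bandwidth figures are slightly stale.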

Thanks to being forced to think about these things more carefully to write my pieces for El Reg, the finances of my site (revenue vs hosting costs) have improved markedly. There's a new Vulture business opportunity here somewhere, I'm sure of it! ®