When it comes to AI, Pure twists FlashBlade in NetApp's A700 guts

You see, it all becomes clear when you provide numbers

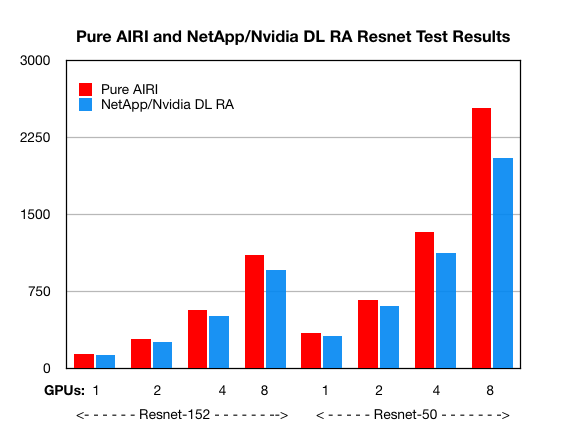

Pure Storage's AIRI FlashBlade is faster than NetApp's A700 all-flash array, according to two AI benchmark runs.

The two suppliers have both designed tech using Nvidia DGX-1 GPU servers and their own storage arrays to provide storage-server systems for "AI" applications such as deep learning.

Pure's deliverable hardware-software system is called AIRI (AI-Ready Infrastructure), while NetApp and Nvidia have a deep learning reference architecture (DL RA). NetApp published Resnet-152 and Resnet-50 performance numbers and bar charts with 1, 2, 4 and 8 GPUs for its A700 all-flash array and one DGX-1, not the top-of-the-line A800 array.

Pure published bar charts, each drawn to a different scale and with no numbers. We crudely compared the two sets of charts yesterday and concluded NetApp was ahead, but this was wrong.

Pure contacted us and supplied the numbers for its Resnet runs, allowing a direct comparison. Resnet-152 first:

| Resnet-152 | 1 GPU | 2 GPU | 4 GPU | 8 GPU |
|---|---|---|---|---|
| Pure Storage | 146 | 287 | 568 | 1112 |
| NetApp | 136 | 266 | 511 | 962 |

The batch size is 64. Resnet-50 with the same batch size next:

| Resnet-50 | 1 GPU | 2 GPU | 4 GPU | 8 GPU |
|---|---|---|---|---|
| Pure Storage | 346 | 667 | 1335 | 2540 |
| NetApp | 321 | 613 | 1131 | 2048 |

Pure's AIRI beats the NetApp/Nvidia DL RA at all GPU levels. Charting the numbers makes it plain:

Pure AIRI vs NetApp/Nvidia DL RA
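For readers who want to put a figure on the gap, here is a minimal Python sketch that works only from the numbers in the two tables above. The function names `lead_percent` and `scaling_efficiency` are our own, and "scaling efficiency" (the 8-GPU result divided by eight times the 1-GPU result) is our choice of yardstick, not one used by either vendor.

```python
# Figures copied from the two tables above (batch size 64).
# The source does not state the unit, so none is assumed here.
GPU_COUNTS = [1, 2, 4, 8]
RESULTS = {
    "Resnet-152": {
        "Pure Storage": [146, 287, 568, 1112],
        "NetApp": [136, 266, 511, 962],
    },
    "Resnet-50": {
        "Pure Storage": [346, 667, 1335, 2540],
        "NetApp": [321, 613, 1131, 2048],
    },
}


def lead_percent(pure, netapp):
    """Pure's lead at each GPU count, as a percentage of NetApp's figure."""
    return [round((p - n) / n * 100, 1) for p, n in zip(pure, netapp)]


def scaling_efficiency(scores):
    """8-GPU result divided by 8x the 1-GPU result (1.0 would be linear)."""
    return round(scores[-1] / (GPU_COUNTS[-1] * scores[0]), 3)


if __name__ == "__main__":
    for model, vendors in RESULTS.items():
        leads = lead_percent(vendors["Pure Storage"], vendors["NetApp"])
        print(model, "Pure lead (%) at", GPU_COUNTS, "GPUs:", leads)
        for vendor, scores in vendors.items():
            print("  ", vendor, "scaling efficiency:", scaling_efficiency(scores))
```

On these figures Pure's lead widens as GPUs are added, from roughly 7 to 8 per cent with one GPU to about 16 per cent (Resnet-152) and 24 per cent (Resnet-50) with eight.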

AI system choices are going to need more than two simple benchmark runs, and the Resnet benchmarks have been described by a Pure spokesperson as "incredibly complex".

But here we do see a direct comparison between Pure's FlashBlade and NetApp's A700 – and the FlashBlade is ahead. ®