Microsoft's Pelican brief, MAID in Azure* and femtosecond laser glass storage

Bleeding edges in the cloud

Analysis Following last week's announcement that Microsoft's Azure planned to reinvent MAID disk arrays, The Reg took a deep breath and dove into Azure CTO Mark Russinovich’s presentation.

What did we find? Some interesting bits and pieces about IBM tape library use, the aforementioned Pelican MAID, and Project Silica, an effort to store 50TB in a slab of glass an inch square. It’s all about archival data storage at hyperscale and how to maximise its speed and minimise its cost.

Tape is the archive medium du jour but it is slow to access, whereas a disk array, if you could figure out how to reduce its electricity use, could approach tape ownership costs and provide faster data access. So Pelican could be added into the Azure archive mix between nearline disk and tape. But what if you could invent a new, denser medium that could store more data in a smaller space than tape or disk and last forever? Well, that’s the distant promise of the Silica project, a new storage tier behind tape.

Russinovich says Microsoft is currently basing Azure archival storage on IBM TS3500 tape libraries. These, in their Azure configuration, have from 1 to 16 frames, up to 72 IBM 3592 tape drives, 12,000 cartridges, and one or two accessors (robots).

He doesn't talk capacity but a maximally configured TS3500 library can store up to 675PB of compressed data using more than 15,000 of IBM's 3592 extended cartridges.

The Pelican brief

Pelican, which has been under development for six years or more, is claimed to combine disk speed with tape-style economics. It comes in a 52U, non-standard rack, holding 72 drive trays – 12 rows of six trays. Each of these has 16 vertically-mounted 3.5-inch disk drives in two rows of eight drives. There are 1,152 drives in total, providing 11.52PB raw capacity using 10TB drives.
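The rack arithmetic above is easy to check. The following is a minimal sketch that only multiplies out the figures quoted in the presentation; the function and constant names are ours, not Microsoft's, and it assumes decimal units (1PB = 1,000TB).

```python
# Figures quoted for the Pelican rack; names are illustrative only.
TRAY_ROWS = 12
TRAYS_PER_ROW = 6
DRIVES_PER_TRAY = 16  # two rows of eight drives


def rack_summary(drive_tb):
    """Return (trays, drives, raw capacity in PB) for a given drive size in TB."""
    trays = TRAY_ROWS * TRAYS_PER_ROW
    drives = trays * DRIVES_PER_TRAY
    raw_pb = drives * drive_tb / 1000  # assumption: decimal units, 1PB = 1,000TB
    return trays, drives, raw_pb


if __name__ == "__main__":
    trays, drives, raw_pb = rack_summary(10)
    print(f"{trays} trays, {drives} drives, {raw_pb:.2f}PB raw")
```

Calling `rack_summary` with a smaller per-drive capacity scales the raw figure proportionally, since the tray and drive counts are fixed by the rack layout.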

The whole rack weighs 1.36 tonnes (3,000lbs) and is a monster*. It is basically a dual-controller object storage system. In this MAID (Massive Array of Idle Disks) system only a few drives, 8 per cent of the total – meaning 92 – are allowed to be active at any time, reducing the cooling burden and lowering the power draw to 3.5kW. The controllers (two servers) schedule drive power up and down, and select the drives to run up based on the IO request queue.

The rack is cooled from the front bottom to the top rear. Disks at the top and rear of the rack are warmer than drives elsewhere in the rack.

Each tray of 16 drives is a power domain and two drives can be active or transitioning to being active while the other 14 are in standby mode.
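To make the two limits concrete, here is a hedged sketch of the kind of admission check a scheduler would need before spinning drives up: no more than two active drives per tray, and no more than 8 per cent of the rack (92 drives) active overall. Microsoft has not published its scheduler in this presentation, so `can_spin_up` and the `(tray, slot)` drive identifiers are hypothetical names of our own.

```python
from collections import Counter

TOTAL_DRIVES = 1152
RACK_ACTIVE_LIMIT = int(TOTAL_DRIVES * 0.08)  # 8 per cent of the rack, i.e. 92
TRAY_ACTIVE_LIMIT = 2  # per-tray power domain: two active or spinning up


def can_spin_up(active, candidates):
    """Check whether `candidates` may join the `active` set.

    Both arguments are iterables of hypothetical (tray, slot) tuples.
    Returns True only if the rack-wide and per-tray limits both hold.
    """
    wanted = set(active) | set(candidates)
    if len(wanted) > RACK_ACTIVE_LIMIT:
        return False
    per_tray = Counter(tray for tray, _slot in wanted)
    return all(count <= TRAY_ACTIVE_LIMIT for count in per_tray.values())


if __name__ == "__main__":
    print(can_spin_up([(0, 0)], [(0, 1)]))          # second drive in tray 0
    print(can_spin_up([(0, 0), (0, 1)], [(0, 2)]))  # third drive in tray 0
```

Note that two drives in each of 72 trays would be 144 drives, so on these figures the rack-wide limit of 92 is the tighter of the two constraints.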

The drives are accessed over a PCIe fabric which connects to 72 SATA HBAs, one per tray. These provide a SATA path to the drives in the trays. IO requests read (get) or write (put) data and come into Pelican over a pair of 40GigE links.

Drives are organised into 48 groups of 24 disks each. Data items are called blobs and a blob is striped across 21 disks within a group, using 18+3 erasure coding. Accessing any disk means its whole group must be spun up.
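The 18+3 scheme implies a fixed storage overhead, which the sketch below works through. It assumes a blob is cut into 18 equal-sized data fragments (padding the last one) plus three parity fragments of the same size; that fragment sizing, and the `stripe_plan` name, are our assumptions rather than anything stated in the presentation.

```python
import math

GROUPS = 48
DISKS_PER_GROUP = 24
DATA_FRAGMENTS = 18
PARITY_FRAGMENTS = 3


def stripe_plan(blob_bytes):
    """Estimate how a blob of `blob_bytes` would be laid out under 18+3 coding.

    Assumption: equal-sized fragments, one per disk, last data fragment padded.
    """
    stripe_width = DATA_FRAGMENTS + PARITY_FRAGMENTS  # 21 disks per blob
    fragment_bytes = math.ceil(blob_bytes / DATA_FRAGMENTS)
    return {
        "stripe_width": stripe_width,
        "fragment_bytes": fragment_bytes,
        "stored_bytes": fragment_bytes * stripe_width,
        "tolerated_disk_losses": PARITY_FRAGMENTS,
    }


if __name__ == "__main__":
    plan = stripe_plan(18_000_000)
    print(plan)
    print(f"storage efficiency: {DATA_FRAGMENTS / plan['stripe_width']:.1%}")
```

On these assumptions roughly 86 per cent of the space a blob occupies is user data, and the blob survives the loss of any three of its 21 disks.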

A 2016 Microsoft research document (PDF) says the drives are archival models, which trade performance for lower cost. Examples given were Western Digital’s Ae model WD6001F4PZ 6TB drive, which spins at 5,400rpm, and Seagate’s ST8000AS0022.

According to the paper: “We briefed all the major HDD vendors, and worked closely with one vendor to help specify the performance we wanted from these new HDDs.” It does not identify the vendor. Both shingled and non-shingled drives were evaluated.

Microsoft deployed non-shingled 4.9TB drives in the Azure production environment in 2015.

Times change and technology moves on: Russinovich’s presentation mentioned 10TB drives.

A May 2017 YouTube video provides a Pelican demonstration and talks of a Pelican rack having 5PB of capacity, rather than the 11.52PB raw capacity that 10TB drives would give.

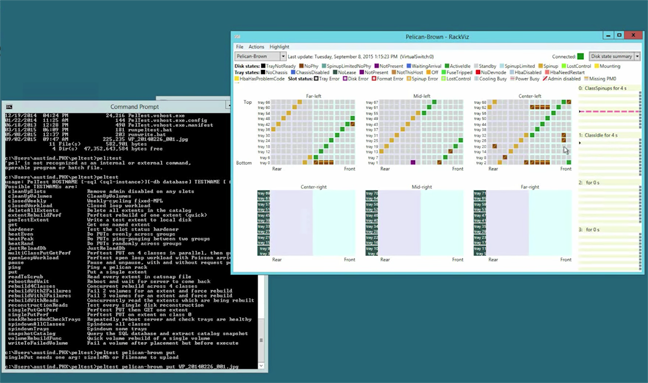

Microsoft developed a GUI to manage and operate Pelican:

Each row in the three blocks represents a tray. The green squares represent active drives, the grey squares inactive drives, and the brown squares failed disks. The single purple square indicates an absent disk.

The right-hand yellowish block represents the IO queues and state.

Azure offers a range of storage types and tiers – file, disk, blob and archive. The blob option has hot, cool or archive storage tiers depending on how often the data is accessed. Pricing falls as you move from hot storage to cool and on to archive, with archive costs 50 per cent lower than the cool option. The archive option is for low access rate blobs, with access time measured in hours.

Microsoft states: "To read data in archive storage, you must first change the tier of the blob to hot or cool. This process is known as rehydration and can take up to 15 hours to complete."

Nothing here distinguishes IBM tape from Pelican archive storage. Tape is being used for Azure archival storage, according to the Russinovich video, while Pelican will be rolled out in regions that aren't big enough to support tape libraries.

It's not clear yet whether Microsoft will price Pelican-based archival storage differently from tape-based archival storage.

Project Silica

Project Silica is a Microsoft research initiative to store data in quartz glass cubes using laser light. Microsoft is collaborating with the UK's University of Southampton Optoelectronics Research Centre.

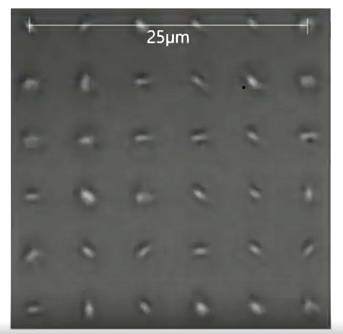

Squares of quartz glass store voxels (Volume Elements), the 3D equivalent of pixels, with each voxel being a defined volume within the glass. A 6 x 6 array of voxels occupies a 25 micron square area, and there is a 5 micron separation between these voxels.

Voxels in Project Silica glass

There can be layers of voxels, with Russinovich mentioning five.

The voxels are "burned" into the glass using a femtosecond laser, meaning a flash of light whose duration is measured in quadrillionths of a second. A voxel's bit value is differentiated from other voxels in terms of the laser light's retardance and level of polarisation. This kind of laser is used to avoid breaking the glass, according to the CTO.

In effect, a pattern, created by the laser's retardance and polarisation, is ingrained into a voxel area in the glass and represents a set of bit values. Microsoft gold partner NeroBlanco estimated: "A piece of glass about 1 inch square can hold 50TB of data."

Our understanding is that Project Silica is a WORM (Write Once Read Many) medium and several years away from being a deployable product. We might assume there are difficulties to overcome in positioning a laser read beam precisely above the voxels to read them.

In a read-access graphic, we can see a slab of Silica glass on the left with sets of voxel arrays in it. The central grey oblong represents one of these with an array of multiple voxels shown and a 25 micron length guide. The device on the right is a research lab reader, with the implication that the glass slab is inserted in it.

There is no information available about likely read and write time, or how the voxel slab is brought to the reader. This is very early days in the technology's development.

The appeal of Project Silica is that the glass could last, Russinovich says, "forever". This could be the ultimate long-term storage. It reminds us of Millenniata's 1,000 year M-Disc, which was introduced in 2009. There are 4.7GB, 25GB, 50GB and 100GB versions of this Blu-ray disc.

Project Silica has, for now, no impact on the storage industry or users. Many suppliers and researchers have tried to produce long-lasting, high-capacity optical media, particularly in the holographic disk area. All failed. In the greater-than-500-years-life media area, only niche suppliers like Millenniata survive.

Microsoft is betting that public cloud storage needs for unstructured data will rocket skywards into the exabyte area in coming years, and thinks Project Silica could meet that storage need then. ®

* What helped kill earlier MAID supplier Copan was the weight of its arrays. They were so heavy that not enough customers had data centre floors strong enough to support them.