
Next big thing after containers? Amazon CTO talks up serverless computing

'Perhaps we no longer have to think about them'

Amazon CTO Dr Werner Vogels talked up the value of serverless computing at the AWS (Amazon Web Services) London Summit last week.

"What we’ve seen is a revolution where complete applications are being stripped of all their servers, and only code is being run. Quite a few companies are ripping out big pieces of their applications and replacing their servers, their VMs and their containers with just code," he told attendees at his keynote on 7th July 2016. "Perhaps we no longer have to think about servers."

The industry is still getting used to the idea of using containers rather than virtual machines (VMs), perhaps with Amazon's ECS (EC2 Container Service) for management, so the notion that they are already being "ripped out" may come as a surprise. It makes sense, though, if you buy into the idea of cloud computing as a managed platform rather than as IaaS (Infrastructure as a Service).

The earliest AWS offerings like S3 and DynamoDB did not require you to think about VMs or containers, said Vogels. "If you look at how we designed DynamoDB, we looked at all the other NoSQL databases and found that they were really hard to manage. All this sharding that you had to do, all this buffer management, connection pools, and all for one reason, to get consistent performance out of your database.

"When we looked at DynamoDB, we thought why not just give it one capability to manage, maybe, how quick performance do you want? You tell us how many reads and writes you want to do, and we will make sure that happens with consistent performance," he said.

These early services did not let you run code, but that changed when Amazon launched Lambda in November 2014. This was initially presented as a way to write code in JavaScript that was triggered by events such as a file arriving in S3, Amazon's storage service, but it has the potential to support many kinds of applications and has evolved significantly since its launch.

Today Lambda supports Java, Node.js and Python, runs scheduled functions as well as those triggered by events, and can be connected to an AWS VPC (Virtual Private Cloud) for access to a private network. The code storage limit has been increased from 5GB to 75GB. Lambda can also be linked with other AWS services such as the API Gateway for building APIs, or CloudFormation for defining a group of AWS resources.
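
For a flavour of what such a function involves, here is a minimal Python sketch of a handler reacting to a file arriving in S3. The function name, bucket and key are made up for illustration; the event layout follows the documented S3 notification format, and the last lines simply call the handler locally with a hand-built event rather than deploying anything.

```python
import urllib.parse


def handler(event, context):
    """Entry point the platform would invoke for each S3 notification."""
    uploaded = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 notifications
        key = urllib.parse.unquote_plus(record["s3"]["object"]["key"])
        uploaded.append(f"{bucket}/{key}")
    return uploaded


# Local smoke test with a hypothetical event; no AWS account needed
sample_event = {
    "Records": [
        {
            "s3": {
                "bucket": {"name": "example-bucket"},
                "object": {"key": "reports/july+2016.csv"},
            }
        }
    ]
}
result = handler(sample_event, None)
print(result)
```

There is no server, port or process management in sight: the platform decides where and when `handler` runs, which is the point Vogels is making.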

"You just write some code, and we’ll run it for you," said Vogels. "The deployment model is code. You still do versioning. Each function takes seconds, maybe minutes at most. And each of those functions has a single thread and a single task. You can still execute 10s of thousands, maybe 100s of thousands of these Lambda functions in parallel, if that’s what you want. The financial model is no longer per VM per hour, you pay a combination between the amount of memory that you held for a certain period of time and the number of requests that you process."

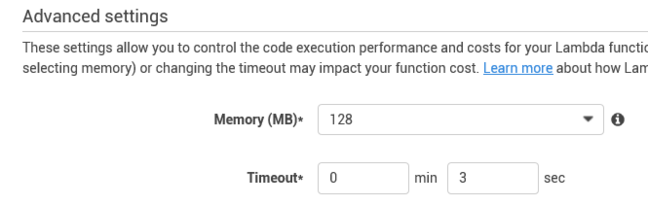

His explanation betrays the fact that using Lambda is not quite as simple as just uploading your code. You have to specify the amount of memory that you require, and how long to allow a function to run before it times out with an error. Nevertheless, it does abstract away the VM or container on which it runs - even though, as attendees learned in the Lambda breakout session, the service itself is implemented through containers.

Configuring AWS Lambda memory settings
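
The memory setting matters because it feeds directly into the bill. As a rough sketch of the model Vogels describes (memory held for a period of time, plus the number of requests), the Python below estimates a monthly charge. The function name, workload figures and both rates are placeholders for illustration, and the sketch ignores any free allowance or rounding of billed duration.

```python
def lambda_bill(requests, avg_seconds, memory_mb, gb_second_rate, per_million_rate):
    """Estimate a bill from memory held over time plus request count."""
    # Memory held over time, expressed in gigabyte-seconds
    gb_seconds = requests * avg_seconds * (memory_mb / 1024)
    compute_charge = gb_seconds * gb_second_rate
    request_charge = (requests / 1_000_000) * per_million_rate
    return compute_charge + request_charge


# Placeholder rates for illustration only; check the provider's price list
estimate = lambda_bill(
    requests=3_000_000,
    avg_seconds=0.2,
    memory_mb=512,
    gb_second_rate=0.00001667,
    per_million_rate=0.20,
)
print(f"Estimated monthly charge: ${estimate:.2f}")
```

Doubling `memory_mb` doubles the compute part of the estimate, so the memory setting is a cost decision as much as a performance one.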

Amazon is not alone in offering this kind of service. Microsoft offers Azure Functions, and Google offers Cloud Functions, as part of their respective cloud computing platforms.

It is also true that other cloud platforms, such as those from Microsoft, Google or Salesforce, have long had services which let you run applications without managing VMs or containers. Google's App Engine has always worked like this. Microsoft's Azure App Service manages the VMs for you, though their instances are not fully abstracted.

That said, the term "serverless computing" is usually reserved for platforms that enable applications composed of small microservice-style functions rather than those which host monolithic applications. One typical approach is to use a serverless platform to glue together other cloud services. This is great for the cloud provider, which may explain their enthusiasm. It is likely, for example, that you will use Lambda in conjunction with other AWS services.

"In a serverless world you don’t have to think about containers any more, you just write your code. You glue the different pieces together that are all managed. They are managed for you," said Vogels.

As analyst James Governor notes, serverless is "on demand computing taken to its natural conclusion," where you pay only when your code is executed, "the point of customer value".

Is serverless the future? The trade-offs are less control over how your code is run, the margin you pay to the cloud provider to run it for you, and the fact that existing applications may be hard to port to the new model.

Even if you like the serverless model, getting there is a journey, as Vogels freely admitted. Still, before embarking on that big new container-based project, it is worth considering whether serverless could also be an option. ®