This article is more than 1 year old

Bringing AFA SAN orphans in house

Care in the SAN family is best

All-flash arrays are SAN orphans, typically operating outside the existing SAN infrastructure and having a hard time of it from the data services angle as a result. Wouldn’t it be much better if they lived inside the existing SAN house rather than being out in the cold, so to speak?

Existing enterprise SAN arrays are robust, reliable and mature products with data services software facilities built up through several software releases to provide data protection and management functions such as:

- Backup product/operation integration

- Snapshots

- Asynchronous and synchronous replication

- Cloning

- Data reduction ranging from thin provisioning through to deduplication

- Provisioning

- Resource usage trending

- General management

SAN arrays from Dell, EMC, HDS, HP, IBM and NetApp are all in this general category and provide a rounded set of data management services that are relied on by IT departments using their arrays.

These are typically disk-based arrays with the latency disadvantage that such media have: the time it takes from receiving an IO request to the point at which a disk read/write head starts moving data to or from blocks on a platter’s track. Solid-state drives (SSDs) don’t have such seek-time latency waits and can speed up a legacy array.
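To put a rough number on that mechanical wait, here is a minimal sketch of one component of it, the average rotational latency, which is the time for half a platter revolution. The spindle speeds are common drive classes chosen for illustration, not figures from any particular array, and seek time is not included.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """Average wait for the target sector to pass under the head:
    half of one full platter revolution, in milliseconds."""
    if rpm <= 0:
        raise ValueError("rpm must be positive")
    ms_per_revolution = 60_000.0 / rpm
    return ms_per_revolution / 2.0


# Illustrative spindle speeds (assumed, not taken from the article's vendors).
for rpm in (7_200, 10_000, 15_000):
    print(f"{rpm} rpm: {avg_rotational_latency_ms(rpm):.2f} ms")
```

Even the fastest of these spends milliseconds per IO just waiting for the platter, a delay flash media does not incur.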

However, the average SAN array has a controller software stack built on the assumption that the IO it handles goes to and from disk. Once again it will be a mature and robust item, refined over several generations so that it does not fail and is reliable and efficient; efficient, that is, given the spinning media it controls.

The rise of all-flash arrays

When all-flash arrays were first envisaged they were conceived by newer, start-up companies who could not use existing array software, which was proprietary, and so wrote their own. This had an intrinsic virtue in that it was written to take advantage of NAND flash’s low latency and not slow the flash down through inefficiencies in the controller software layer.

The startups marketed this as ground-up designed software, tailored for flash and therefore creating arrays that had faster controller software stacks as well as natively faster media. Thus a Pure Storage, a SolidFire or an XtremIO would say, justifiably, that their technology had both faster media than disk and faster array software than disk drive-based SANs, and that their arrays were consequently two or three orders of magnitude faster than SANs using HDDs.

The high cost of flash compared to disk media was tackled by adding in deduplication and compression to arrive at an effective cost/GB of storage that was equivalent to or lower than a disk-based SAN.
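The arithmetic behind that claim is simple division, sketched below. The raw price and the reduction ratio are hypothetical values picked for illustration; real ratios depend heavily on the data being stored.

```python
def effective_cost_per_gb(raw_cost_per_gb: float, reduction_ratio: float) -> float:
    """Cost per GB of logical (pre-reduction) data.

    reduction_ratio is the combined deduplication and compression ratio,
    e.g. 5.0 for 5:1.
    """
    if reduction_ratio <= 0:
        raise ValueError("reduction_ratio must be positive")
    return raw_cost_per_gb / reduction_ratio


# Hypothetical inputs: $10/GB raw flash and a 5:1 combined reduction ratio.
print(f"${effective_cost_per_gb(10.0, 5.0):.2f}/GB effective")
```

The same raw flash at a 2:1 ratio would cost $5/GB effective, which is why vendors' assumed reduction ratios matter as much as their list prices.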

Where servers were being held up waiting for IO, with applications that needed to run as fast as possible (financial trading or web commerce applications, for example), all-flash arrays became attractive indeed, with a few rack units (“U”s) of flash array replacing two or more racks full of short-stroked disks. Such disk arrays needed multiple spindles, with data stored only on the faster-accessed tracks, to deliver the speed needed by such applications.

With this beachhead established, all-flash arrays (AFAs) started moving into enterprise data centres and were marketed as the primary data storage medium, ready to replace all SAN array data storage needing 15,000rpm disk drives.

AFA hidden costs

But there was a cost, an almost hidden cost, in that the data in the all-flash arrays could not be managed in the same way as with the installed SANs. They had their own management interface, their own support arrangements and their own data services, which, compared to those of existing SANs, were limited at first and, as well as being inferior in features, were different in their detailed operation and management.

This meant that businesses were protecting and managing their less important data, the stuff stored on their mainstream SANs, better than the more important primary or “hot” data on these newer, faster all-flash arrays. Thus, for example, replication did not exist, or was asynchronous. The owning businesses then had to make other arrangements if they wanted the same data security features that they were used to with their SAN arrays.

How beneficial it would be if their existing SAN array data services could be used with the new all-flash arrays. But the general rule is that they cannot. Thus the independent flash array vendors, such as Pure Storage, SolidFire and Violin, all have their own separate data services. EMC bought a flash array startup, XtremIO, and offers it as a separate product line from its VMAX and VNX SAN arrays and Isilon filers.

VMAX-specific data services are not available for XtremIO, and things VMAX and VNX users take for granted, like largely or completely non-disruptive software upgrades, are absent from XtremIO.*

NetApp has three all-flash array products: its fast-ingest EF-Series, its all-flash FAS arrays and its latest, in-house developed but single-controller-only, FlashRay. The NetApp mothership is its FAS Data ONTAP line but, even with its ONTAP data services portfolio, NetApp has found it necessary to have two other all-flash array products, the EF-Series and FlashRay. Neither has ONTAP data services goodness, but both offer better performance and/or price/performance for applications needing faster flash media data access than ONTAP can deliver.

Array controller software

Only two existing SAN array vendors offer new all-flash array performance inside their data services stockade: HDS and HP. Why have they been able to do this when Dell, EMC, IBM, NetApp and others cannot?

HDS designed its own hardware, a Hitachi Accelerated Flash (HAF) tray, which slots into existing high-end VSP arrays and mid-range HUS arrays.

Hitachi Accelerated Flash module for its VSP array

These are compact, 2U trays holding up to 38.4TB and the design of the hardware ensures, HDS says, that IOPS performance is four times better per flash device than that of enterprise solid-state drives (SSDs). There’s no sacrificing of speed here, yet these flash trays operate inside the full panoply of VSP and HUS data services. For example, all host array functions, like external virtualisation, continue as before.

Interestingly the product has an ASIC to provide extra speed, which brings us to HP and its 3PAR line.

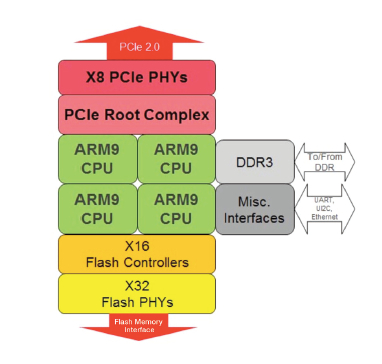

Hitachi Accelerated Flash ASIC diagram

HP has an all-flash version of its StoreServ (3PAR) product, the 7450, and basically says that the ASIC hardware acceleration and fine-grained efficiency features of its StoreServ software, such as adaptive cache optimisation, mean that it can run this all-flash configuration as effectively as any all-flash array startup without its customers having to sacrifice StoreServ data services.

HP StoreServ 7450

Since it was announced in 2013 it has been updated - a software upgrade at the end of 2013 increased its 4K random read IOPS rate from 550,000 to 900,000 for example. Deduplication was announced in June 2014 along with thin cloning. HP says its effective cost per GB is at the $2/GB level.
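For a sense of scale, the quoted IOPS figures can be turned into an approximate data rate and a percentage gain. This is a back-of-envelope sketch that assumes "4K" means 4,096-byte IOs and reports decimal gigabytes per second; HP's own test conditions are not stated here.

```python
IO_SIZE_BYTES = 4096  # assumption: "4K" random reads are 4,096 bytes each


def throughput_gb_per_s(iops: int, io_size_bytes: int = IO_SIZE_BYTES) -> float:
    """Aggregate read bandwidth in decimal GB/s implied by an IOPS figure."""
    return iops * io_size_bytes / 1e9


def percent_gain(before: float, after: float) -> float:
    """Relative improvement of 'after' over 'before', as a percentage."""
    return (after - before) / before * 100.0


before_iops, after_iops = 550_000, 900_000  # figures quoted in the article
print(f"before: {throughput_gb_per_s(before_iops):.2f} GB/s")
print(f"after:  {throughput_gb_per_s(after_iops):.2f} GB/s")
print(f"gain:   {percent_gain(before_iops, after_iops):.1f}%")
```

Under those assumptions the software upgrade alone took the array from roughly 2.25GB/s to roughly 3.69GB/s of small random reads, a gain of about 64 per cent.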

HP and HDS are hoping that their ability to provide performance and storage efficiency equal to or better than all-flash arrays that do not enjoy residence underneath the mainstream SAN data services umbrella will persuade their customers to eschew AFA startups or orphan AFA products from other vendors and stay inside their existing SAN array software environment.

One further thought. With storage resource sprawl coming as application-specific resource silos come along, Hadoop-style big data and analytics stores for example, it surely makes sense to minimise the number of separate silos your admin staff have to deal with.

Bringing orphan all-flash arrays into your mainstream SAN tent, where you can, will reduce the admin burden and let you use the same management, protection and support arrangements across both your SAN and all-flash array environments. What’s not to like? ®

* Josh Goldstein, EMC XtremIO VP of marketing and product management, comments: “XtremIO arrays fully support non-disruptive software upgrades (NDU). … the comment [above] … was in reference to the 3.0 code release … that was a business choice and all upgrades to that point and since that point have been fully NDU.”