
Two years in the making: Sneak peek at VMware's future VVOL tech

Virtual volume storage to erupt onto array scene next year

VMware says its virtual volume storage technology – which has spent two years in development – is set to erupt onto the storage array landscape next year, causing profound changes for array controllers.

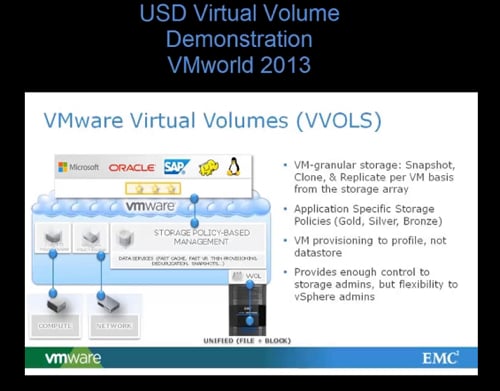

Virtual volumes, or VVOLs, provide storage for VMware virtual machines (VMs), which currently depend on LUNs (Logical Unit Numbers) or other storage abstractions that need dedicated storage admin staff to manage them. Also, an individual ESXi server can have up to 250 LUNs. This is too few in a world where multi-socket and multi-core servers can host more than 250 VMs.

With VVOLs, VMware admins can set the scene for VMs to request storage services from an array in a much more automated and scalable fashion. This also relieves the ESXi host of work by offloading the heavy lifting, so to speak, to the array.

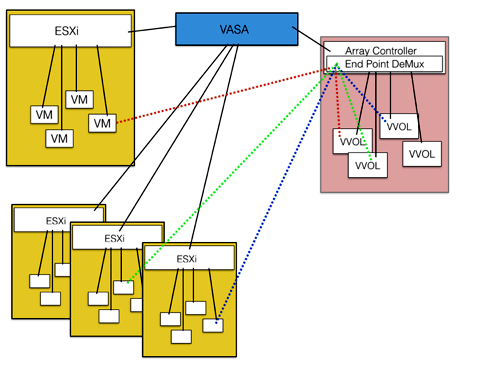

VVOL technology is still in development by VMware and by storage array vendors, so its final form has yet to be revealed. What we understand so far is that storage arrays publish their services to ESXi using VASA (VMware APIs for Storage Awareness) 2.0 APIs and VMs request a selected service from the service availability list. It is then provisioned by the array and tied to a VM.

VVOL scheme. Black lines are control plane paths. Dotted lines are inline data plane paths.

These services are requested through protocol endpoints on the arrays, which represent protocol access routes using Fibre Channel, iSCSI, or a file or object protocol. This becomes the access path to VVOLs on an array.
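The publish, request and provision flow described above can be sketched as a toy model. This is a minimal illustration, not the VASA 2.0 API: the `ToyArray` class, its method names, the `vvol-N` identifiers and the endpoint string are all hypothetical, since the final form of the interface has yet to be revealed.

```python
import itertools


class ToyArray:
    """Stand-in for a VVOL-capable array. Every name here is hypothetical."""

    def __init__(self, services, endpoint):
        self._services = set(services)
        self.endpoint = endpoint          # assumed access route, e.g. iSCSI or NFS
        self._ids = itertools.count(1)
        self.bindings = {}                # vvol_id -> (vm_name, service)

    def publish_services(self):
        # Step 1: the array advertises the services it can offer.
        return sorted(self._services)

    def provision(self, vm_name, service):
        # Steps 2 and 3: a VM requests a published service, the array
        # provisions a volume and ties it to that VM.
        if service not in self._services:
            raise ValueError(f"service {service!r} is not published")
        vvol_id = f"vvol-{next(self._ids)}"
        self.bindings[vvol_id] = (vm_name, service)
        return vvol_id


array = ToyArray({"gold", "bronze"}, endpoint="iscsi://array-1")
available = array.publish_services()
vvol = array.provision("vm-a", available[-1])
print(vvol, array.bindings[vvol], "via", array.endpoint)
```

The point of the sketch is the division of labour: the hypervisor side only picks from a list, while the bookkeeping that ties a volume to a VM lives on the array.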

VVOLs are said to be aligned with array boundaries and so quicker to access.

A VVOL has metadata describing it, which can specify:

- a defined capacity

- a storage service level in terms of IOPS and latency

- thin or fat provisioning policy

- deduplication

- snapshot and replication (sync, async) services occurring at set intervals or according to a policy

- encryption

- retention period for data

- data-tiering policy defining what data is moved to which tiers of storage – and when...
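To make the list above concrete, here is one way such metadata might be modelled and checked against what an array offers. It is a sketch under stated assumptions: the field names, units and the `satisfies` rule are invented for illustration and do not reflect VMware's actual schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VvolPolicy:
    """Hypothetical per-VVOL metadata mirroring the list above."""
    capacity_gb: int
    min_iops: int
    max_latency_ms: float
    thin_provisioned: bool = True
    deduplication: bool = False
    snapshot_interval_min: Optional[int] = None  # None: no scheduled snapshots
    replication: Optional[str] = None            # "sync", "async" or None
    encryption: bool = False
    retention_days: Optional[int] = None
    tiering_policy: Optional[str] = None


@dataclass(frozen=True)
class ArrayService:
    """Hypothetical description of one service level an array publishes."""
    name: str
    max_iops: int
    latency_ms: float
    supports_dedup: bool = False
    supports_encryption: bool = False
    replication_modes: frozenset = frozenset()


def satisfies(service: ArrayService, policy: VvolPolicy) -> bool:
    """True if the service meets every requirement the policy states."""
    return (
        service.max_iops >= policy.min_iops
        and service.latency_ms <= policy.max_latency_ms
        and (service.supports_dedup or not policy.deduplication)
        and (service.supports_encryption or not policy.encryption)
        and (policy.replication is None
             or policy.replication in service.replication_modes)
    )


gold = ArrayService("gold", 50_000, 1.0, True, True, frozenset({"sync", "async"}))
bronze = ArrayService("bronze", 2_000, 10.0)
policy = VvolPolicy(capacity_gb=100, min_iops=5_000, max_latency_ms=2.0,
                    replication="async", encryption=True)
print([s.name for s in (gold, bronze) if satisfies(s, policy)])
```

Only a subset of the fields is checked here; capacity, retention and tiering would need the array's free space and tier layout, which the sketch leaves out.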

A storage array could be a physical array, a virtual SAN formed by pooling individual servers' directly attached storage (DAS) or a storage array software abstraction (such as EMC's ViPR storage resource management and virtualisation product). ViPR would act as a front end to its underlying arrays.

A VVOL-supporting storage array will receive data access requests from hundreds, thousands and potentially tens of thousands of VMs. It will need to understand them and translate their VVOL-type data access abstractions to the array's RAIDed tiers of disk and solid state drives.

It will receive multiplexed access requests and need to de-multiplex them and then deal with them, understanding what VVOLs might contain and accepting and delivering data in the right format and amount to the right logical place within the right service level.
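The de-multiplexing step can be pictured with a few lines of code. This is only a sketch of the idea: the `(vvol_id, op, offset)` request shape is an assumption made for illustration, and a real controller would do this in firmware with service-level scheduling on top.

```python
from collections import defaultdict


def demultiplex(requests):
    """Split one interleaved request stream into per-VVOL queues.

    Each request is a hypothetical (vvol_id, op, offset) tuple. The order
    of requests within each VVOL is preserved.
    """
    queues = defaultdict(list)
    for vvol_id, op, offset in requests:
        queues[vvol_id].append((op, offset))
    return dict(queues)


stream = [
    ("vvol-1", "read", 0),
    ("vvol-2", "write", 4096),
    ("vvol-1", "write", 8192),
]
for vvol_id, queue in demultiplex(stream).items():
    print(vvol_id, queue)
```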

Consequently, VVOL-supporting arrays will need more powerful controllers. These must accept the existing file (NFS and SMB/CIFS) and block (Fibre Channel, FCoE, iSCSI) protocols and also understand VVOLs. They must apply data services, like snapshots and replication, at the VVOL level as well as through the existing file and block constructs. vMotion could well be easier, as all it need involve is remapping access to a VVOL or VVOLs with no data movement.

We expect VMware to announce VVOL support in a vSphere refresh in the first quarter of next year, and for array suppliers to announce support after that, with the main ones announcing before the end of the second quarter.

Where can you get more information? There is background information available dating from the VVOL announcement and here's a list of useful sources:

- VMware chief strategist Chuck Hollis in November 2013

- VMware architect Cormac Hogan in 2012

- Wen Yu of Nimble Storage in May 2014

EMC's Chad Sakac has written on VVOLs and has made video presentations.

Chris says

Enjoyed your background reading? Here are the first few questions that came to my mind:

- Suppose Hyper-V comes out with its own virtual volume scheme and API access. Would array suppliers support it?

- Suppose Red Hat ... ditto. Would array suppliers support it?

- Suppose Xen ... ditto. Would array suppliers support it?

- With such a potential code burden coming onto storage array suppliers, don't we really need an industry-standard way of accessing a hypervisor's virtual storage volumes?

- Could VMware donate its virtual volume scheme APIs to OpenStack?

What do you think?

®