
Let the SAN shine: Cirrus Data Solutions plugs speedy solution

No reboot, no problem, says former FalconStor man

A startup founded in 2010 reckons it can speed up SAN data access tenfold using its in-fabric Data Caching Server appliance.

Cirrus Data Solutions (CDS) is based in Jericho, NY, and its CEO is Wayne Lam, who co-founded FalconStor. The company was founded with $480,000 in funding.

Paul Bjorndahl, VP of sales and marketing at CDS, said: “We are a small company (self-funded) that has developed (and patented) an appliance that sits in a SAN between the switch and storage. It installs in a production environment without updating any drivers, modifying LUN’s and or having to reboot any hosts.”

The Data Caching Server (DCS) is CDS’s second product. The first was the Data Migration Server (DMS), a block-level migration device that moves data to or from any legacy or cloud storage repository.

The technology behind both the DMS and DCS products is patented (U.S. Patent No. 8,255,538) and has been dubbed Transparent Datapath Intercept (TDI) by the company.

It “uses standard storage HBA ports to create a transparent data path as a virtual cable. The technology provides the capability to insert an appliance in-band between the host and the storage without causing downtime and without requiring any changes to the host, the switch, and the storage. The appliance automatically discovers the WWPN of each storage target port and host initiator port once connected, thus allowing it to be totally transparent.”

The company says: “Cache policies can be set after an I/O analysis is done by the admin or just assign all available SSD capacity to all the LUNs, and let DCS figure out how best to cache the LUNs, based on actual I/O activities.”
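One way to picture the "assign everything and let DCS figure it out" option is a split of the SSD pool in proportion to each LUN's observed I/O. The sketch below is purely illustrative: CDS has not described its algorithm, and the function name `allocate_cache`, the LUN names and the I/O counts are all hypothetical.

```python
def allocate_cache(io_counts, ssd_capacity_gb):
    """Split SSD capacity across LUNs in proportion to observed I/O counts.

    Hypothetical policy for illustration only, not CDS's actual method.
    """
    total = sum(io_counts.values())
    if total == 0:
        # No activity observed yet: share the capacity evenly.
        share = ssd_capacity_gb / len(io_counts) if io_counts else 0
        return {lun: share for lun in io_counts}
    return {lun: ssd_capacity_gb * n / total for lun, n in io_counts.items()}


# Made-up I/O counts gathered during an analysis window.
observed = {"lun0": 6000, "lun1": 3000, "lun2": 1000}
plan = allocate_cache(observed, ssd_capacity_gb=800)
for lun, gb in plan.items():
    print(f"{lun}: {gb:.0f} GB")
```

With these made-up figures the busiest LUN gets 480 GB of an 800 GB pool and the quietest gets 80 GB. A real appliance would presumably track activity at a finer grain than whole LUNs, but that is not something the company has detailed.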

A second patent (U.S. Patent No. 8,417,818) extends TDI to additional storage protocols, such as iSCSI, SATA, InfiniBand, and others.

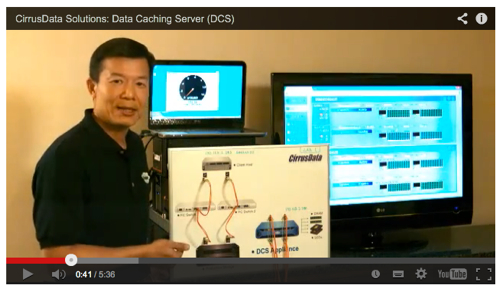

DCS appliance. Looks to be about 2U high.

DCS is installed in the SAN, typically between the Fibre Channel switch and the legacy storage being accelerated. How does the technology compare to other server flash cache products that accelerate SANs by getting rid of SAN network and data access latencies?

Bjorndahl said: “DCS adds one FC hop to the FC path. Compared with host-deployed cache which has zero added hop, or compared with virtualisation appliances such as SVC or FalconStor IPStor [these] add 2 FC hops. However, the 10x acceleration, even with the extra hop, is more than appealing to the user to embrace the solution, especially with the very unique feature that DCS can be deployed any time without pre-scheduled downtime, requiring absolutely no changes to the existing FC SAN environment.”

“We can deploy DCS in minutes, without even knowing the password to the FC switch, the application hosts or clusters, or the storage. This eliminates all the risks associated with deployment of cache at the host (downtime, new heavy hardware at each app server, cooling issues, cluster issues…) or at the FC switch (zoning changes for SVC and other virtualisation appliances).”
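The hop trade-off Bjorndahl describes can be put in rough numbers with a simple average-latency model: cache hits are served from flash, misses go to the back-end array, and every I/O pays a fixed cost per added FC hop. Every figure below is an assumption chosen for illustration, not a CDS measurement, and `mean_latency_us` is a hypothetical helper.

```python
def mean_latency_us(hit_ratio, cache_us, backend_us, hops, hop_us):
    """Average read latency in microseconds.

    Hits are served from cache, misses from the back-end array,
    and every I/O pays hop_us for each added FC hop.
    """
    service = hit_ratio * cache_us + (1.0 - hit_ratio) * backend_us
    return service + hops * hop_us


# Illustrative assumptions only (not vendor figures).
BACKEND_US = 5000.0  # disk-backed array read
CACHE_US = 100.0     # flash cache read
HOP_US = 5.0         # cost of one extra FC hop

baseline = mean_latency_us(0.0, CACHE_US, BACKEND_US, hops=0, hop_us=HOP_US)
with_cache = mean_latency_us(0.9, CACHE_US, BACKEND_US, hops=1, hop_us=HOP_US)

print(f"no cache:   {baseline:.0f} us")
print(f"with cache: {with_cache:.0f} us")
print(f"speed-up:   {baseline / with_cache:.1f}x")
```

Under these assumptions the extra hop adds 5 µs to an average of roughly 595 µs, so the cache hit ratio, not the hop count, dominates the result. The actual speed-up depends entirely on the workload and the hardware involved.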

Compared to IBM’s SAN Volume Controller (SVC), “the most compelling advantage of our DCS is the ability to [deploy] on a live FC SAN without any changes and requiring no downtime.”

A high-availability DCS pair or a single DCS box can be installed in one of three places: near the SAN hosts, which gives the lowest latency; just in front of the storage controller; or in the fabric.

CDS is starting to partner with some of the PCIe-based flash board vendors, such as LSI, whose flash card business is currently being bought by Seagate from Avago. Get a white paper here (PDF and registration).

The DMS products are available now. We expect the DCS product to be formally announced within the next three months.

How are they sold?

Bjorndahl said: “DMS ... is sold directly to our partners, whether it’s OEMs or VARs with PS teams. DCS will be different and will be a channel-based product.”

Contact CDS directly to find out more. ®