The SECOND COMING of Virtensys: HPC boffins turn PCIe into in-memory network

Haven't we heard this one before?

HPC boffins at startup A3CUBE have picked up where Virtensys left off and designed a way to use PCIe interfaces to provide direct shared global memory across a network with lower latency and higher scalability than Ethernet, InfiniBand and Fibre Channel.

San Jose-based A3CUBE was founded in 2012 by Emilio Billi, its chief development officer and CTO, and Antonella Rubicco, its CEO. It is staffed by high-performance computing engineers. The group say their starting point was the performance gap between storage systems and servers, one they hoped could be remedied by using massively parallel processing techniques.

There are three product technologies being developed in A3CUBE's RONNIEE Express platform scheme:

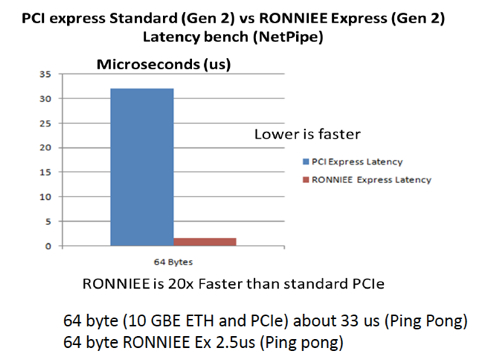

- RONNIEE 2S – a compact PCIe-based intelligent NIC designed to maximise application performance. It's claimed to eliminate conventional communications bottlenecks and to provide multiple channels with <1µs latency, plus fast direct remote I/O connections with nanosecond-level latency.

- RONNIEE RIO – a general-purpose NIC supporting Ethernet and memory-to-memory transactions in a 3D torus topology that can plug into any server equipped with a PCIe slot. It presents a scalable interconnection fabric based on a shared memory architecture that implements the concept of distributed non-transparent bridging.

- RONNIEE 3 – a switch card designed to extend the scalability of RONNIEE 2S and optimised for high-performance data environments.
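For readers unfamiliar with the 3D torus topology mentioned for the RIO card, here is a minimal Python sketch of the general idea: each node sits at an (x, y, z) coordinate and links to six neighbours, with the edges wrapping around. The function names and the 4x4x4 grid are illustrative assumptions; A3CUBE has not described how RONNIEE actually cables or routes its torus.

```python
from typing import List, Tuple

Coord = Tuple[int, int, int]


def torus_neighbors(node: Coord, dims: Coord) -> List[Coord]:
    """Return the six direct neighbours of a node in a 3D torus.

    Coordinates wrap around at the edges, so every node has the same
    number of links and no central switch is needed.
    """
    x, y, z = node
    dx, dy, dz = dims
    return [
        ((x + 1) % dx, y, z), ((x - 1) % dx, y, z),
        (x, (y + 1) % dy, z), (x, (y - 1) % dy, z),
        (x, y, (z + 1) % dz), (x, y, (z - 1) % dz),
    ]


def torus_hops(a: Coord, b: Coord, dims: Coord) -> int:
    """Minimum hop count between two nodes, taking the shorter way
    round each ring (hypothetical shortest-path metric, not A3CUBE's)."""
    return sum(min((p - q) % d, (q - p) % d) for p, q, d in zip(a, b, dims))


if __name__ == "__main__":
    dims = (4, 4, 4)  # assumed 64-node example grid
    print(torus_neighbors((0, 0, 0), dims))
    print(torus_hops((0, 0, 0), (3, 3, 3), dims))
```

The wrap-around is what keeps the worst-case hop count low as the grid grows: in the assumed 4x4x4 grid, the corner nodes are one hop apart on each axis rather than three.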

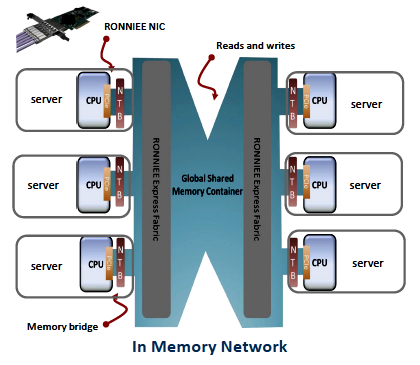

A3CUBE's RONNIEE Express scheme.

The RONNIEE products are said to implement an in-memory network, with A3CUBE stating that its In-Memory Network "provides full support for memory-to-memory transactions without the usual software overhead to achieve unmatched efficiency and performance compared to ordinary interconnection fabrics available on the market today."

From a slide deck we understand that A3CUBE's RONNIEE Express NIC uses memory as the main communication paradigm at protocol level. It uses PCIe direct access to memory with “memory windows” in combination with a globally shared 64-bit memory address space. These memory windows are used to create a shared global memory container that permits direct communication between local and remote CPUs, from memory to memory, and with local and remote I/O.
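To make the "memory windows in a global 64-bit address space" idea concrete, here is a toy Python model. It assumes, purely for illustration, that the top 16 bits of an address pick the node and the low 48 bits give the offset inside that node's window; the slide deck does not say how A3CUBE divides up the address space, and the `Fabric` class is a hypothetical stand-in for the hardware.

```python
from typing import Tuple

NODE_BITS = 16    # assumption: upper bits select the node
OFFSET_BITS = 48  # assumption: lower bits are the offset in that node's window
OFFSET_MASK = (1 << OFFSET_BITS) - 1


def to_global(node_id: int, offset: int) -> int:
    """Pack a (node, offset) pair into one 64-bit global address."""
    if not 0 <= node_id < (1 << NODE_BITS):
        raise ValueError("node_id out of range")
    if not 0 <= offset <= OFFSET_MASK:
        raise ValueError("offset out of range")
    return (node_id << OFFSET_BITS) | offset


def from_global(addr: int) -> Tuple[int, int]:
    """Split a global address back into (node, offset)."""
    return addr >> OFFSET_BITS, addr & OFFSET_MASK


class Fabric:
    """Toy interconnect: one bytearray per node plays the memory window."""

    def __init__(self, nodes: int, window_size: int) -> None:
        self.windows = [bytearray(window_size) for _ in range(nodes)]

    def write(self, addr: int, data: bytes) -> None:
        node, off = from_global(addr)
        window = self.windows[node]
        if off + len(data) > len(window):
            raise IndexError("write past end of window")
        window[off:off + len(data)] = data

    def read(self, addr: int, length: int) -> bytes:
        node, off = from_global(addr)
        window = self.windows[node]
        if off + length > len(window):
            raise IndexError("read past end of window")
        return bytes(window[off:off + length])


if __name__ == "__main__":
    fabric = Fabric(nodes=4, window_size=1024)
    addr = to_global(node_id=2, offset=16)
    fabric.write(addr, b"hello")   # lands directly in node 2's window
    print(fabric.read(addr, 5))
```

The point of the sketch is that a remote write is just a store to an address: there is no socket, packet header or protocol stack in the path, which is the overhead A3CUBE says it removes.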

The Three RONNIEEs. From the top the RONNIEE 2S, RIO and 3.

We're told RONNIEE Express scales linearly up to 64,000 nodes and hundreds of I/Os per node. Its makers say it features "end-to-end traffic management with up to 16 million unique virtual streams between any two endpoints".

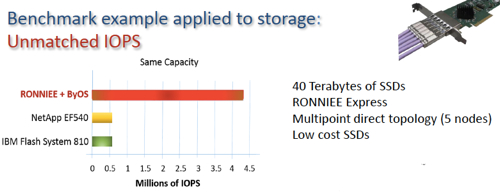

A3CUBE claims that the "RONNIEE Express data plane enables exascale storage that combines supercomputing’s massively parallel operational concepts and an innovative I/O interface eliminating central switching, network overhead and slashing the latency of traditional storage networking designs."

The cards implement an in-memory network that "discards the protocol stack bottleneck and replaces it with a direct memory-to-memory mapped socket, producing extraordinary and disruptive performance enhancements while leveraging commodity hardware."

A3CUBE has filed three US patents:

- #61786560 - Massive parallel petabyte scale storage system architecture,

- #61786537 - PCIe non-transparent bridge designed for scalability and networking enabling the creation of complex architecture with ID based routing, and

- #61786551 - Low-profile half length PCI Express form factor embedded PCI express multiport switch and related accessories.

A canned quote from CTO Billi reads: "Today's data centre architectures were never designed to handle the extreme I/O and data access demands of HPC, Hadoop and other big data applications."

Billi's clearly not afraid to think big and goes on: "The scalability and performance limitations inherent in current network designs are too severe to be rescued by incremental enhancements. The only way to accommodate the next generation of high-performance data applications is with a radical new design that delivers disruptive performance gains to eradicate the network bottlenecks and unlock true application potential."

A3CUBE sees four example use cases: big data and data centre; HPC; biotech and genome research; and computational fluid dynamics. It says its technologies could be used as building blocks for high-end routers, parallel storage, embedded computing and redundant servers.

The status of these technologies in terms of availability, support, price and sales channel has not yet been revealed.

There has been a previous attempt to use PCIe as a networking scheme between servers, storage and other resources, by a startup called Virtensys. It crashed out in 2012, with Micron scooping up its assets.

The RONNIEE products would need to offer startlingly good data access performance and be plug-and-play to deploy. A3CUBE would also need a skilled channel (reseller and/or OEM) to provide sales and support to customers, unless it plans to sell direct to a few large customers.

Much needs to happen before this technology can hit the street and join in the daily IT jungle dogfighting. ®