
The 'third era' of app development will be fast, simple, and compact

Will Intel and Nvidia join the HSA party, or insist on going it alone?

Shrinking apps will result in more apps

HSA, Rogers promised, will be smart enough to know when to pass what data to what processor, be it CPU, GPU, or some other accelerator. Haar face detection, for example, works by running the pixels of an image through several passes called "cascades", some of which benefit most from a CPU and some from a GPU, but each of which requires both.
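To make the cascade idea concrete, here is a minimal sketch in Python of a staged classifier with early rejection. It assumes a candidate window is just a flat list of brightness values, and the three scoring functions and their thresholds are hypothetical stand-ins, not real Haar features. How each stage would be assigned to a CPU or GPU is deliberately left out.

```python
from typing import Callable, List, Sequence, Tuple

# A stage pairs a scoring function with the minimum score needed to pass it.
Stage = Tuple[Callable[[Sequence[float]], float], float]


def run_cascade(window: Sequence[float], stages: List[Stage]) -> Tuple[bool, int]:
    """Return (accepted, stages_evaluated) for one candidate window."""
    for index, (score, threshold) in enumerate(stages, start=1):
        if score(window) < threshold:
            # Early rejection: later stages never run for this window.
            return False, index
    return True, len(stages)


# Hypothetical toy scoring functions (assumptions for illustration only).
def mean_brightness(window: Sequence[float]) -> float:
    return sum(window) / len(window)


def contrast(window: Sequence[float]) -> float:
    return max(window) - min(window)


def left_right_difference(window: Sequence[float]) -> float:
    half = len(window) // 2
    return abs(sum(window[:half]) - sum(window[half:]))


# Hypothetical thresholds, chosen only so the example windows behave differently.
TOY_STAGES: List[Stage] = [
    (mean_brightness, 0.2),
    (contrast, 0.3),
    (left_right_difference, 0.5),
]

if __name__ == "__main__":
    for candidate in ([0.1, 0.1, 0.1, 0.1], [0.9, 0.8, 0.2, 0.1]):
        print(candidate, run_cascade(candidate, TOY_STAGES))
```

Because most windows drop out at an early stage, the amount of work per stage varies widely, which is the kind of imbalance a scheduler would have to manage.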

Processing of suffix array data structures, on the other hand, which are used for text and "big data" analytics, is a pipeline in which some stages work best on the GPU and some on the CPU. HSA will be smart enough to perform both the compute-unit balancing in Haar face detection and the stage-switching in suffix array processing.
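For readers unfamiliar with the data structure, a suffix array lists the starting positions of a text's suffixes in sorted order, which allows substring lookups by binary search. The Python sketch below is a naive illustration with two stages, build and query. The function names are made up for this example, and it says nothing about which stage would suit a GPU or a CPU.

```python
from typing import List


def build_suffix_array(text: str) -> List[int]:
    """Return suffix start positions, ordered by the suffixes they begin."""
    # Naive construction: fine for illustration, far too slow for large inputs.
    return sorted(range(len(text)), key=lambda start: text[start:])


def find_occurrences(text: str, suffix_array: List[int], pattern: str) -> List[int]:
    """Return the sorted start positions of pattern, found by binary search."""
    width = len(pattern)

    # Lower bound: first suffix whose leading characters are >= pattern.
    lo, hi = 0, len(suffix_array)
    while lo < hi:
        mid = (lo + hi) // 2
        start = suffix_array[mid]
        if text[start:start + width] < pattern:
            lo = mid + 1
        else:
            hi = mid
    first = lo

    # Upper bound: first suffix whose leading characters are > pattern.
    hi = len(suffix_array)
    while lo < hi:
        mid = (lo + hi) // 2
        start = suffix_array[mid]
        if text[start:start + width] <= pattern:
            lo = mid + 1
        else:
            hi = mid

    return sorted(suffix_array[first:lo])


if __name__ == "__main__":
    sample = "banana"
    array = build_suffix_array(sample)
    print(array)
    print(find_occurrences(sample, array, "ana"))
```

The build stage is dominated by sorting, while the query stage is a short chain of dependent comparisons, so even this toy version has stages with quite different computational shapes.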

It's not just performance that HSA is aimed at, however – it's also code complexity. "A huge motivation in the HSA architecture," Rogers said, "is ease of programming."

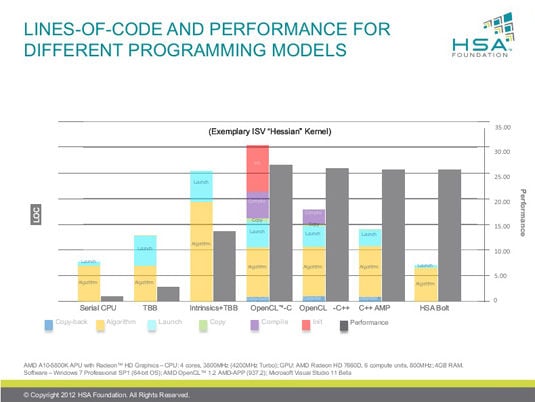

He cited examples of various programming approaches running the Hessian kernel used in image processing to illustrate HSA's ability to both boost performance and simplify code. The tests covered serial CPU code, Threading Building Blocks (task-parallel runtime acceleration), added intrinsics (CPU assembly code), OpenCL with C semantics, OpenCL with C++ kernel language, C++ AMP, and finally HSA using the Bolt library.

In the serial CPU example, code was compact and straightforward, but performance was low. As code complexity (measured in lines of code) increased, so did performance, which peaked at the OpenCL with C semantics test. Code complexity dipped substantially thereafter, but performance dipped only slightly. In the test using HSA with the Bolt library, performance was marginally lower, but code complexity was substantially reduced.

"The code complexity is going down," Rogers said, "which means that the number of applications will go up."

The final HSA specifications should be finished mid-2014 – but as Rogers cautioned, "Honestly, closing specifications in a foundation or consortium with a lot of members is an inexact science." ®

Bootnote

Conspicuous in their absence from the HSA Foundation membership list are Nvidia, promoter of its own CUDA parallel-processing platform, and Intel. "The HSA Foundation has invited both companies, and we would welcome either or both of them to join us," Rogers said when their absence was pointed out during the pre-briefing's Q&A session.

"I will say that over time it's my hope they will join in. Ultimately it's good for the industry, it's good for the application developer, it's good for end users when we get to open standards and the hardware companies compete based on performance, power, and extension features. Having multiple different but equivalent ways of doing the same thing really doesn't benefit anyone."