
Why data storage technology is pretty much PERFECT

There's nothing to be done here... at least on the error-correction front

Feature: Reliable data storage is central to IT and therefore to modern life. We take it for granted, but what lies beneath? Digital video guru and IT author John Watkinson explains how it all works, how it serves us today and what might happen in the future. Brain cells at the ready? This is gonna hurt.

Digital computers work in binary because two states, 0 and 1, are the minimum needed to carry information, and two different voltages are the easiest to tell apart.
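A quick way to see why two levels are the most robust is to compute the noise margin of a simple midpoint threshold detector ("slicer"). The sketch below assumes an idealised, hypothetical 0 to 1 V signal swing with equally spaced levels. The function names are illustrative only. Cramming more levels into the same swing shrinks the gap between them, so less noise is needed to push a reading over the wrong threshold.

```python
def noise_margin(levels: int, v_max: float) -> float:
    """Worst-case noise a midpoint slicer tolerates when `levels`
    equally spaced voltages share the range 0..v_max (idealised, hypothetical)."""
    if levels < 2:
        raise ValueError("need at least two levels")
    spacing = v_max / (levels - 1)  # gap between adjacent levels
    return spacing / 2              # each threshold sits halfway across a gap


def slice_binary(v: float, v_max: float) -> int:
    """Recover one bit by comparing a noisy voltage with the midpoint."""
    return 1 if v >= v_max / 2 else 0


# Hypothetical 1 V swing: binary tolerates 0.5 V of noise, four levels only about 0.17 V.
for n in (2, 4, 8):
    print(n, "levels:", round(noise_margin(n, 1.0), 3), "V margin")
```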

In flash memory, we can store those voltages more or less directly, as a clump of electrons held in a carefully insulated cell. In all other storage devices, a physical analog of the two states is needed.

In tape or hard disk, for example, we look at the direction of magnetisation, N-S or S-N, in a small area. In an optical disc, the difference is represented by the presence or absence of a small pit.

The very blueprint of our biology, DNA, is a data recording based on chemicals that exist in discrete states. "Bit" errors cause mutations that allow evolution, or result in missing or malformed proteins that can lead to disease. Data recording is essential to all life.

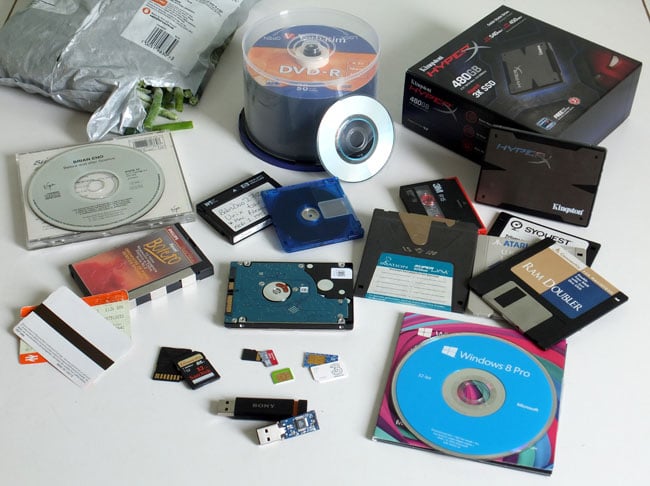

Data storage everywhere: CD, DVD, DAT, DCC, HDD, MiniDisc, SSD, SD, SIM, floppy disk, SuperDisk, magnetic stripe, barcode


A binary medium neither knows nor cares what the data represents. Once we can reliably record binary data, we can record audio, video, still pictures, text, CAD files and computer programs on the same medium, and we can copy them without loss.

The only difference between these types of data is that some need to be reproduced with a specific time base.

Timing, reliability, durability and cost...

Different storage media have different characteristics, and no single medium is the best in all respects. Hard drives excel at intensive read/write workloads, but the disk can't be removed from the drive. And although hard drives have overtaken optical discs in recording density, an optical disc can be swapped out in a matter of seconds. Also, because optical discs can be stamped out at low cost, they are good for mass distribution.

Flash memory offers rapid access and is rugged and compact, but it can sustain only a limited number of write cycles. And even though flash memory wiped out the floppy disk in PCs, floppy disk technology is alive and well: it lives on in the magnetic stripes on airline and rail tickets, credit cards and hotel room keys. Sometimes a sledgehammer is the wrong tool, and the humble barcode is a perfect example of a simpler medium doing the job.

In flash memory, the storage density is determined by how finely the individual charge wells can be fabricated. The same advances in optics that allow ever-smaller details to be photo-etched on a chip also allow optical discs to resolve ever-smaller bits.

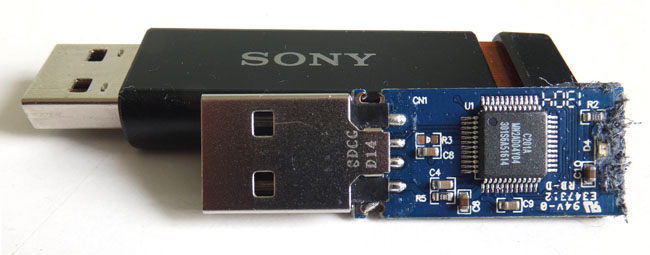

Flash chip inside a USB thumb drive: no moving parts, but it wears out with use

In a rotating memory - a spinning disc, whether magnetic or optical - the problem is two-dimensional: we need to pack in as many tracks as possible side by side and we need to pack as many bits into a given length of track as possible.
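The two-dimensional packing problem can be written as a simple product. The symbols are introduced here for illustration: $p_{\text{track}}$ is the track pitch (centre-to-centre spacing of adjacent tracks) and $\ell_{\text{bit}}$ is the length of track occupied by one bit:

```latex
D_{\text{areal}} = D_{\text{track}} \times D_{\text{linear}}
  = \frac{1}{p_{\text{track}}} \times \frac{1}{\ell_{\text{bit}}}
```

Because the two factors multiply, halving both the track pitch and the bit length quadruples the number of bits per unit area. This is why both dimensions are attacked at once.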

The tracks are incredibly narrow and active tracking servo systems are needed to keep the heads registered despite tolerances and temperature changes. To eliminate wear there is no contact between the pickup head and the disc.
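To show what "active tracking servo" means in the simplest possible terms, here is a toy simulation, not how any real drive is built. A track wobbles sinusoidally under the head, as it might from disc eccentricity. A first-order proportional loop measures the tracking error and nudges the head towards the track on every sample. The loop gain, runout and sample rate are all hypothetical numbers. Real drives use far more elaborate controllers and actuator models.

```python
import math


def track_servo(steps=2000, samples_per_rev=1000, runout=10.0, gain=0.5):
    """Toy proportional tracking loop (all parameters hypothetical).

    The track position follows a sine wave of amplitude `runout`; each
    sample, the head moves by `gain` times the measured error.
    Returns the tracking error at every sample.
    """
    head = 0.0
    errors = []
    for n in range(steps):
        # Where the wobbling track actually is under the head right now.
        track = runout * math.sin(2 * math.pi * n / samples_per_rev)
        error = track - head      # what the tracking sensor would report
        errors.append(error)
        head += gain * error      # actuator moves the head toward the track
    return errors


errs = track_servo()
print("worst error with servo:", round(max(abs(e) for e in errs), 3))
print("runout without servo:  ", 10.0)
```

With these made-up numbers, the loop holds the head within a small fraction of the runout it would suffer if it stayed still. A lower gain or a faster wobble lets the error grow.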

Optical drives peer at the tracks through a microscope, whereas in magnetic drives the heads "fly" on an air film just nanometres above the disk's surface. Paradoxically, it is flash memory, which has no moving parts, that has the wear mechanism.

Channel coding

Discs scan their tracks and pick up data serially. We can’t just write raw data on the disc tracks, because if that data contained a run of identical bits there would be nothing to separate the bits and the reader would lose synchronism. Instead the data is modified by a process called channel coding. One of channel coding's functions is to guarantee clock content in the signal irrespective of the actual data patterns.
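The sketch below illustrates the clock-content problem. It contrasts raw recording, where the level simply follows the data, with FM (biphase-mark) coding, one of the simplest channel codes. FM was used on early single-density floppy disks. It is not what CD uses; CD's EFM code is far more efficient. The function names are hypothetical. FM forces a transition at the start of every bit cell, so even a long run of identical bits leaves the reader something to lock onto.

```python
def nrz(bits):
    """Raw recording: the level simply follows the data, one cell per bit."""
    return list(bits)


def biphase_mark(bits):
    """FM / biphase-mark code: two half-cells per data bit.

    The level always flips at the start of each bit cell (the clock),
    and flips again mid-cell only when the bit is a 1.
    """
    level = 0
    out = []
    for b in bits:
        level ^= 1          # guaranteed clock transition at the cell boundary
        out.append(level)
        if b:
            level ^= 1      # extra mid-cell transition marks a 1
        out.append(level)
    return out


def longest_run(levels):
    """Longest stretch of cells with no transition for the reader to lock onto."""
    if not levels:
        return 0
    best = run = 1
    for prev, cur in zip(levels, levels[1:]):
        run = run + 1 if cur == prev else 1
        best = max(best, run)
    return best


quiet = [0] * 16  # a run of identical data bits
print(longest_run(nrz(quiet)))           # 16 cells with no transition
print(longest_run(biphase_mark(quiet)))  # 2 half-cells, i.e. one bit period
```

The price of FM is bandwidth: it spends two channel cells on every data bit. Denser codes, such as CD's EFM, which maps 8 data bits onto 14 channel bits, exist to recover that efficiency.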

In optical discs, tracking and focusing are performed by looking at the symmetry of the data track through the pickup lens after filtering out the data. A second function of the channel coding is to eliminate DC and low-frequency content from the data track so that this filtering is more effective. A third constraint arises because the round light spot finds it hard to resolve events on the track that are too close together, so the channel code must also keep transitions a minimum distance apart.
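These constraints can be checked mechanically. In CD's channel bit stream, a 1 marks a transition (a pit edge), and consecutive 1s must be separated by at least 2 and at most 10 zeros. This gives run lengths of 3 to 11 channel-bit periods: the minimum respects the spot's resolution, and the maximum guarantees clock content. The digital sum value (DSV), a running total of the +1/-1 recorded waveform, measures DC content: if it stays bounded, there is little low-frequency energy. The sketch below only verifies a stream against these rules. It does not reproduce the EFM lookup table, and the function names are hypothetical.

```python
def runs_ok(channel_bits, d=2, k=10):
    """Check a (d, k) run-length limit on a channel bit stream.

    At least d zeros must separate consecutive 1s (transitions), and no run
    of zeros anywhere may exceed k. Defaults follow CD's limits.
    """
    zeros = 0
    seen_one = False
    for b in channel_bits:
        if b:
            if seen_one and zeros < d:
                return False  # transitions too close for the light spot
            seen_one = True
            zeros = 0
        else:
            zeros += 1
            if zeros > k:
                return False  # too long without a transition: clock lost
    return True


def nrzi_levels(channel_bits):
    """A 1 toggles the recorded level (pit/land); a 0 leaves it unchanged."""
    level = 1
    out = []
    for b in channel_bits:
        if b:
            level = -level
        out.append(level)
    return out


def digital_sum_values(channel_bits):
    """Running sum of the +/-1 waveform; a bounded DSV means little DC content."""
    total = 0
    dsv = []
    for v in nrzi_levels(channel_bits):
        total += v
        dsv.append(total)
    return dsv


stream = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0]
print(runs_ok(stream))                 # True
print(digital_sum_values(stream)[-1])  # -2
```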

Mass media

The first mass-produced application of error correction was in the compact disc, launched in 1982, 22 years after the publication of Reed and Solomon’s paper. The optical technology of CD is that of the earlier LaserVision discs, so what was the hold-up?

Firstly, a digital audio disc has to play in real time. The player can’t think about the error like a computer can, but has to correct on the fly. Secondly, if the CD used a simpler system than Reed-Solomon coding, it would have needed to be much larger - hence closing the portable and car player markets to its makers. Thirdly, a Reed-Solomon error-correction system is complex and can only economically be implemented on an LSI chip.
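To give a flavour of the arithmetic such a chip performs, here is a toy Reed-Solomon code over GF(2^8). Two parity symbols let it locate and correct any single bad byte from two syndromes. It is much weaker than what CD actually uses. CD's CIRC scheme combines two shortened, interleaved Reed-Solomon codes, (32,28) and (28,24), so that a burst from a scratch is spread across many codewords. The field polynomial 0x11D is a common choice assumed here, and the function names are hypothetical.

```python
# Toy Reed-Solomon code: 2 parity symbols, corrects 1 bad symbol (illustrative only).
PRIM = 0x11D  # x^8 + x^4 + x^3 + x^2 + 1, a common primitive polynomial (assumption)

# Exponent/log tables for GF(2^8) with alpha = 2 as the generator.
EXP = [0] * 510
LOG = [0] * 256
_x = 1
for _i in range(255):
    EXP[_i] = _x
    LOG[_x] = _i
    _x <<= 1
    if _x & 0x100:
        _x ^= PRIM
for _i in range(255, 510):
    EXP[_i] = EXP[_i - 255]


def gf_mul(a, b):
    return 0 if a == 0 or b == 0 else EXP[LOG[a] + LOG[b]]


def gf_div(a, b):
    if b == 0:
        raise ZeroDivisionError("division by zero in GF(2^8)")
    return 0 if a == 0 else EXP[(LOG[a] - LOG[b]) % 255]


def encode(data):
    """Return [p0, p1, d0, d1, ...]; symbol i is the coefficient of x^i.
    Parity is chosen so the codeword vanishes at x = 1 and x = alpha."""
    if len(data) + 2 > 255:
        raise ValueError("message too long for GF(2^8)")
    a = b = 0
    for i, d in enumerate(data, start=2):
        a ^= d                   # contribution to c(1)
        b ^= gf_mul(d, EXP[i])   # contribution to c(alpha)
    p1 = gf_div(a ^ b, 3)        # 3 == 1 + alpha in GF(2^8)
    p0 = a ^ p1
    return [p0, p1] + list(data)


def syndromes(word):
    """S0 = c(1), S1 = c(alpha); both are zero for a valid codeword."""
    s0 = s1 = 0
    for i, c in enumerate(word):
        s0 ^= c
        s1 ^= gf_mul(c, EXP[i])
    return s0, s1


def correct(word):
    """Fix one symbol error: S0 is the error value, S1/S0 = alpha^position."""
    word = list(word)
    s0, s1 = syndromes(word)
    if s0 == 0 and s1 == 0:
        return word
    if s0 == 0 or s1 == 0:
        raise ValueError("uncorrectable: more than one symbol in error")
    j = (LOG[s1] - LOG[s0]) % 255
    if j >= len(word):
        raise ValueError("uncorrectable: error position out of range")
    word[j] ^= s0
    return word


cw = encode(list(b"HELLO"))
damaged = cw.copy()
damaged[4] ^= 0x5A                   # corrupt one symbol
print(bytes(correct(damaged)[2:]))   # b'HELLO'
```

Locating the error needs only a field division and a table lookup, but it has to happen for every codeword as the disc plays. That real-time workload is what made dedicated hardware necessary.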

Essentially all of the intellectual property needed to make a Compact Disc was already in place a decade earlier, but it was not until the performance of LSI (large-scale integration) chips had crossed a certain threshold that the format suddenly became economically viable and arrived with a wallop.

By a similar argument, we would later see the DVD emerge only once LSI technology made it possible to perform MPEG decoding in real time at consumer prices.