Never mind your little brother - happy 10th birthday, H.264

Today it's all about you, Mister Video Codec

Feature

As technology advances, video codecs come and go naturally enough. But while H.265 is still waiting in the wings, we should pay tribute to the groundbreaking H.264, which is a decade old this month.

H.264 is possibly not the snappiest or most memorable name, but even 10 years on it remains an important video coding standard, one that made HDTV possible. John Watkinson has had the phrase "industry standard" attributed to most of his numerous publications on digital audio and video – he really did write the book on MPEG – and he examines the life of this enduring codec for The Register.

The big picture

The great advantage of the digital domain is that it is possible to use the techniques of error correction and time-base stabilisation to deliver data to any desired accuracy and time stability. But simple digitisation cannot be used for the distribution of digital television because of the huge data rate it creates.

Every digital still camera owner knows the image is divided into millions of pixels, but in television we are going to send a new picture many times per second. It is easy to see that the resultant data rate is going to be phenomenal. Digital television would be neither practical nor economic without some reduction of that data rate. Compression is the solution, which means we need an encoder at the transmitting side and a matching decoder at the TV.
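To put a rough number on "phenomenal", here is a back-of-the-envelope sketch. The format is an assumption chosen for illustration: a 1920x1080 picture, 8-bit 4:2:0 sampling (8 bits of luma per pixel plus two chroma samples at a quarter of the resolution, averaging 12 bits per pixel) and 25 frames per second, counting the active picture only. The 8 Mbit/s channel is likewise a hypothetical figure, not one taken from any particular broadcast standard.

```python
def raw_bit_rate(width, height, bits_per_pixel, frames_per_second):
    """Uncompressed video bit rate in bits per second (active picture only)."""
    return width * height * bits_per_pixel * frames_per_second

# Assumed example format: 1920x1080, 8-bit 4:2:0 (12 bits per pixel on average),
# 25 frames per second. Blanking intervals are ignored.
hd_rate = raw_bit_rate(1920, 1080, 12, 25)
print(f"Uncompressed HD: {hd_rate / 1e6:.0f} Mbit/s")

# Hypothetical channel capacity, chosen only for illustration.
channel_rate = 8e6  # 8 Mbit/s
print(f"Compression factor needed: about {hd_rate / channel_rate:.0f}:1")
```

Even with this fairly economical sampling format the raw picture runs to over 600 Mbit/s, so squeezing it into a channel of a few megabits per second calls for a compression factor of the order of 80:1.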

The International Organization for Standardization (ISO) recognised the requirement for image compression standards quite early and set up JPEG, the Joint Photographic Experts Group, and MPEG, the Moving Picture Experts Group. These groups have been responsible for compression standards used extensively and successfully in today’s still pictures, digital cinema images, digital television and the associated audio.

One of the reasons for their success is that the ISO is able to bring together expertise from across the industry. Standards are not one individual’s bright idea, but incorporate the ideas of many. The ISO has also excelled at producing standards that are relevant. For example, it’s no good standardising the world’s best compression system if it needs a computer the size of a house to run it.

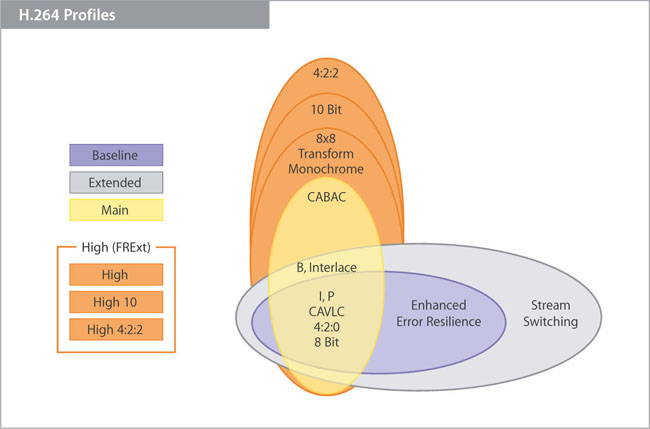

H.264 relies on various algorithms to deliver a range of profiles for different scenarios

CAVLC: Context-adaptive variable-length coding; CABAC: Context-adaptive binary arithmetic coding; FRExt: Fidelity Range Extensions

The adoption of a range of compression profiles addressed that problem. Low-cost hardware could omit some of the cleverer tricks in exchange for a lower compression factor. A wide variety of picture sizes is also supported.
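The idea of a profile can be sketched as a set of permitted coding tools: a decoder built for a given profile can handle any stream that uses only tools from that set. The tool lists below are a deliberately simplified subset (the real profile definitions in the standard cover many more tools, and decoders are also constrained by levels), and the `PROFILE_TOOLS` table and `can_decode` helper are hypothetical illustrations, not part of any real API.

```python
# Simplified subset of the tools each profile permits. Not the full definitions.
PROFILE_TOOLS = {
    "Baseline": {"CAVLC"},                                 # no CABAC, no B slices
    "Main": {"CAVLC", "CABAC", "B slices"},
    "High": {"CAVLC", "CABAC", "B slices", "8x8 transform"},
}

def can_decode(decoder_profile, stream_tools):
    """True if every tool the stream uses is permitted by the decoder's profile."""
    return set(stream_tools) <= PROFILE_TOOLS[decoder_profile]

print(can_decode("Baseline", {"CAVLC"}))
print(can_decode("Baseline", {"CABAC"}))
print(can_decode("High", {"CABAC", "8x8 transform"}))
```

A cheap Baseline decoder simply refuses streams that rely on CABAC, while a High-profile decoder accepts everything the simpler profiles can produce.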

Another difficulty with standards is that if they are too rigid they impede progress. MPEG overcame that one by standardising only the syntax of the communication between encoder and decoder. The standards do not specify the encoder, only the language it must speak to the decoder. In that way encoding algorithms could improve over time, and competing encoder designs would still be compatible with any compliant decoder.
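A toy analogy shows why fixing only the syntax leaves room for competing encoders. Here a made-up "standard" defines a stream of (count, value) pairs and how a decoder expands them; this run-length scheme is an assumption for illustration and has nothing to do with H.264's actual syntax. Two different hypothetical encoders, one more efficient than the other, both produce legal streams that the same decoder reconstructs identically.

```python
from itertools import groupby

def decode(stream):
    """The 'standardised' part: expand (count, value) pairs into samples."""
    out = []
    for count, value in stream:
        out.extend([value] * count)
    return out

def encoder_a(samples):
    """One encoder design: emit the longest possible runs."""
    return [(len(list(group)), value) for value, group in groupby(samples)]

def encoder_b(samples, max_run=2):
    """A rival design: caps every run at max_run. Less efficient, still legal."""
    stream = []
    for value, group in groupby(samples):
        remaining = len(list(group))
        while remaining > 0:
            step = min(remaining, max_run)
            stream.append((step, value))
            remaining -= step
    return stream

samples = [7, 7, 7, 7, 3, 3, 9]
stream_a = encoder_a(samples)
stream_b = encoder_b(samples)
print(len(stream_a), len(stream_b))           # encoder A sends fewer pairs
print(decode(stream_a) == decode(stream_b) == samples)
```

Nothing in `decode` cares how the pairs were chosen, so a cleverer encoder can be introduced later without changing a single decoder.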

Standard definitions

MPEG-1 was the first of these codecs. It was limited in the size of the images it could handle, but nevertheless hinted at what was to come. MPEG-2 could handle both standard definition and high definition television, but the compression factors it achieved were only really viable for standard definition. The work on MPEG-3 was completed at much the same time as MPEG-2 and was folded into it, so there is no separate MPEG-3 standard.

MPEG-4 built on MPEG-2 and provided a comprehensive set of coding tools with a relevance far beyond television, supporting applications such as computer-rendered video and video games. H.264, also known as MPEG-4 Part 10 or Advanced Video Coding (AVC), is the part of MPEG-4 that was optimised for high definition television, and it was what actually made HD broadcasting possible.

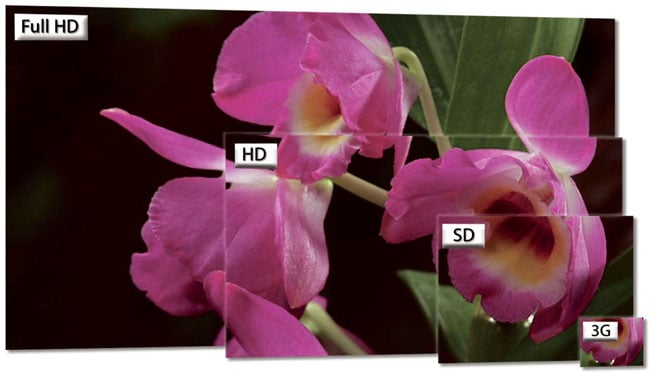

The scalability of H.264 has been key to its enduring utilisation

H.264 is complicated and it is only possible to give a general outline of how it works, but even at that level what it does is fascinating. It is probably a good idea to say something about information. We all use it, but without necessarily knowing what it is. Suppose someone tells you that it is daybreak. Is that information? Actually no, because daybreak is predictable and unsurprising: the ultimate everyday event. So one of the ways we judge information is how surprising it is, not in itself, but to us, the recipient.
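One standard way to put a number on surprise is Shannon's self-information: an event of probability p carries log2(1/p) bits. The article does not use this formula itself, and the probabilities below are illustrative assumptions, but it captures the daybreak example exactly: a certain event carries no information at all.

```python
import math

def surprisal_bits(probability):
    """Self-information of an event with the given probability, in bits."""
    return math.log2(1 / probability)

print(surprisal_bits(1.0))       # daybreak: certain, so 0.0 bits
print(surprisal_bits(0.5))       # a fair coin toss: 1.0 bit
print(surprisal_bits(1 / 1024))  # a one-in-1,024 event: 10.0 bits
```

The rarer the event, the more bits it takes to convey, which is why a codec that can predict what comes next has far less to send.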

For example, the discovery of Australia was only surprising to Europeans. Aboriginal Australians certainly weren't surprised. But once you know that Australia exists, is it information if you are told about it again? Of course not: the element of surprise is absent and the second telling of the message is redundant. So a good definition of information is something that the recipient could not have predicted.
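Redundancy can be demonstrated with a general-purpose compressor standing in for a recipient that remembers what it has already been told. This sketch uses Python's `zlib` purely as an illustration (it is not a video codec and works quite differently from H.264), and the message text is made up. Telling the message a second time costs only a handful of extra bytes, because the compressor can simply refer back to the first telling.

```python
import zlib

message = (b"There is a large island continent in the southern hemisphere, "
           b"and its name is Australia. ")

# Cost of telling the message once, and the extra cost of telling it twice.
first_telling = len(zlib.compress(message))
both_tellings = len(zlib.compress(message + message))
extra_for_repeat = both_tellings - first_telling

print(f"First telling: {first_telling} bytes")
print(f"Repeating it costs only {extra_for_repeat} more bytes")
```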