
Teradata boosts DRAM on appliances for in-memory queries

You don't need no stinkin' HANA or Exalytics

If it were as simple as adding more main memory to a server to crank up the performance of a parallel database, Teradata would have done it by now. But with memory capacities going up on x86 servers and memory prices coming down, now is the time to add more in-memory processing to data warehouse appliances – and that is precisely what Teradata has done with a new software feature called Intelligent Memory.

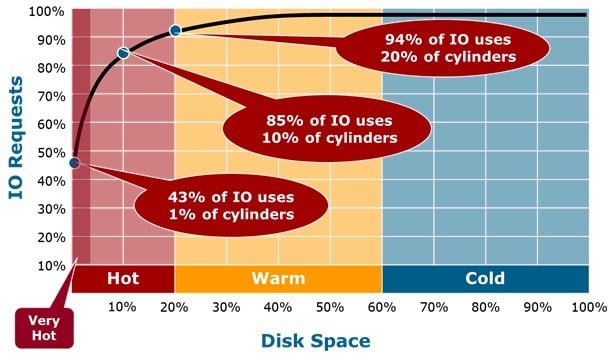

The idea behind the new Intelligent Memory feature is to tweak the underlying Teradata database so it stores the very hottest data in a memory cache. With main memory access on the order of 3,000 times faster than reaching out to the disk drives on a server node running the Teradata parallel database, it makes sense to splurge a bit on main memory.

But with DRAM still costing on the order of 80 times as much per gigabyte as disk drive capacity, Scott Gnau, president of the Teradata Labs division that designs the company's hardware and software, tells El Reg that you have to strike a balance between DRAM and disk.
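To see why you don't need to put everything in DRAM to get a big win, consider a deliberately simple two-tier model (our assumption, not Teradata's math): some fraction of block reads, the hit rate, is served from memory and the rest from disk, with disk about 3,000 times slower per the figure above. The sketch ignores CPU time, interconnect hops and everything else a real parallel database spends its cycles on.

```python
# Toy two-tier model: latencies in relative units, using the rough figure
# that a DRAM access is ~3,000x faster than a disk access.
DRAM_LATENCY = 1.0
DISK_LATENCY = 3_000.0


def effective_latency(hit_rate: float) -> float:
    """Average cost of one block read when `hit_rate` of reads hit DRAM."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * DRAM_LATENCY + (1.0 - hit_rate) * DISK_LATENCY


def speedup_over_disk(hit_rate: float) -> float:
    """How many times faster than sending every read to disk."""
    return DISK_LATENCY / effective_latency(hit_rate)


if __name__ == "__main__":
    for h in (0.5, 0.9, 0.99):
        print(f"hit rate {h:.0%}: {speedup_over_disk(h):.1f}x faster than all-disk")
```

In this toy model a 90 per cent hit rate buys roughly a tenfold speedup and 99 per cent buys nearly a hundredfold, so the payoff hinges on keeping the hit rate high with a modest slice of expensive DRAM.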

For years, Teradata appliances have had File Segment cache, or FSG for short in a universe where that makes sense (unlike the one we live in), so caching is not a new idea by any stretch of the imagination. But Intelligent Memory cache is different from FSG cache, which is available on all Teradata appliances. It is also distinct from Teradata Virtual Storage, which has been part of the platform for a few years and moves data back and forth between disk and flash drives based on how hot it is. The Extreme Data Appliances employ SanDisk flash drives as solid state drives, meaning they are part of the storage subsystem, not the memory subsystem.

With FSG cache, the Teradata database temporarily stores the most recently used data in a segment of main memory that is carved out as disk cache, like a RAM disk in PCs from days gone by. As new data comes into the cache, the least recently used data is pushed out. With Intelligent Memory, instead of caching the most recent data, you cache the hottest – meaning the most frequently used – data on the disk drives in the database cluster. Very hot data comes in, and over time the colder data gets pushed out of the cache.
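The difference between the two policies is the classic one between least-recently-used (LRU) and least-frequently-used (LFU) eviction. Teradata has not published the algorithm behind Intelligent Memory, so what follows is a generic Python sketch of the two ideas working on hypothetical block IDs, not Teradata's code. Note that it caches blocks, not rows or columns.

```python
from collections import Counter, OrderedDict
from typing import Callable, Hashable

Loader = Callable[[Hashable], object]  # reads a block from disk on a miss


class LRUCache:
    """Recency-based: evicts the block that was used least recently."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.blocks: OrderedDict = OrderedDict()  # oldest entry first

    def get(self, block_id: Hashable, load: Loader) -> object:
        if block_id in self.blocks:
            self.blocks.move_to_end(block_id)  # now the most recently used
            return self.blocks[block_id]
        data = load(block_id)
        self.blocks[block_id] = data
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)  # evict the least recently used
        return data


class LFUCache:
    """Frequency-based: keeps the blocks that are accessed most often."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.blocks: dict = {}
        self.hits: Counter = Counter()  # access counts, kept for evicted blocks too

    def get(self, block_id: Hashable, load: Loader) -> object:
        self.hits[block_id] += 1
        if block_id in self.blocks:
            return self.blocks[block_id]
        data = load(block_id)
        if len(self.blocks) < self.capacity:
            self.blocks[block_id] = data
        else:
            coldest = min(self.blocks, key=lambda b: self.hits[b])
            # Admit the new block only if it is hotter than the coldest cached one.
            if self.hits[block_id] > self.hits[coldest]:
                del self.blocks[coldest]
                self.blocks[block_id] = data
        return data


def count_misses(cache, trace) -> int:
    """Replay a trace of block IDs and count how many reads went to disk."""
    misses = []

    def load(block_id):
        misses.append(block_id)  # only called on a cache miss
        return f"data-{block_id}"

    for block_id in trace:
        cache.get(block_id, load)
    return len(misses)


# Two hot blocks read repeatedly, then a one-off scan of cold blocks.
TRACE = ["A", "B"] * 3 + ["s1", "s2", "s3"] + ["A", "B"]

if __name__ == "__main__":
    print("LRU misses:", count_misses(LRUCache(2), TRACE))  # 7
    print("LFU misses:", count_misses(LFUCache(2), TRACE))  # 5
```

On this trace, a one-off scan of three cold blocks flushes the hot blocks A and B out of the LRU cache, while the LFU cache refuses to admit the cold blocks and keeps serving A and B from memory. A production frequency-based cache would also age its counters so that once-hot data eventually cools off; this sketch keeps raw counts forever for brevity.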

The Intelligent Memory feature will be available on Teradata 14.0 and higher databases. It's in beta now, so if you want to try it out, call your Teradata sales rep. It will ship in the second quarter, and any company on a proper maintenance contract will get Intelligent Memory as part of their normal updates.

Incidentally, Gnau says that Intelligent Memory caching will work on data stored in row or columnar formats, since it operates at the disk block level rather than at the level of database objects such as rows or tables.

Intelligent Memory works transparently underneath applications, and you don't have to change SQL queries or do anything funky to your databases.

Teradata says a fat memory data warehouse can perform like an in-memory database

This is a bit in contrast to in-memory appliances, which often bolt onto production systems. But increasingly, the in-memory database is becoming a production-grade machine that sits behind applications, processing both transactions and ad hoc queries.

That's what SAP wants everyone to believe is the future with its HANA appliances, anyway.

Gnau says that companies should just keep consolidating data back in the data warehouse as they have been doing, and speed up the queries using server main memory as cache – by perhaps an order of magnitude on a wide variety of queries, and without having to install a new machine with a new database.

And equally importantly, the Intelligent Memory feature for the Teradata appliances provides consistent performance on queries. "A flat service level agreement is something companies want," says Gnau. "Consistency is often more important than speed for certain kinds of queries."

Teradata 14.0 also supports the Compress On Cold feature that, as the name suggests, automagically compresses data that has not been used recently so it takes up less space in a data warehouse. The Virtual Storage flash-caching feature was available on Teradata 13.0 and higher databases. All of these features, as well as FSG cache, can be used at the same time; in fact, this is the recommended configuration for Teradata's data warehouse appliances.

To be able to cram more data into main memory, Teradata has to boost the memory capacity in its server nodes, which have a pretty restrictive 96GB configuration today for a two-socket node using Intel's "Sandy Bridge-EP" Xeon E5 processors. That's a really tiny memory footprint, which was fine for disk-centric processing, but now Teradata is switching to 32GB memory sticks and jacking up the memory in its nodes to a maximum of 512GB. You can go with 256GB of memory per node if you are not feeling rich (or more precisely, your CFO tells you that you are not), but obviously that will curtail performance some.

Gnau says Teradata will be able to goose that a little bit more on current Xeon E5 machines this year, and will offer even higher memory capacities on future "Ivy Bridge-EP" Xeon E5 v2 nodes when they become available. Intel is set to ship the Xeon E5 v2 processors in the second half of this year, and Teradata usually takes a bit of time to certify new iron for its data warehouse appliances.

Teradata did not provide pricing for the memory upgrades, but assuming that Teradata is shipping its appliances based on Dell iron, and the PowerEdge R720 is representative, then 512GB of memory costs on the order of $12,600 per node. That is an order of magnitude more than a dozen 8GB memory sticks (the current 96GB configuration) for that server, which run around $1,263 at current Dell prices.
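Working through those numbers (approximate, and using Dell's R720 prices as a stand-in since Teradata did not disclose its own), here is the per-gigabyte comparison between today's 96GB configuration and the new 512GB maximum built from 32GB sticks. The stick counts are simple arithmetic from the capacities quoted above.

```python
# Memory configurations per two-socket node, as described in the article.
# Prices are the approximate Dell PowerEdge R720 figures quoted above,
# used only as a proxy for Teradata's undisclosed pricing.
CONFIGS = {
    "current 96GB (12 x 8GB)": (12, 8, 1_263),
    "new 512GB (16 x 32GB)": (16, 32, 12_600),
}


def capacity_gb(sticks: int, stick_gb: int) -> int:
    """Total memory per node in gigabytes."""
    return sticks * stick_gb


def price_per_gb(sticks: int, stick_gb: int, price_usd: float) -> float:
    """Memory cost per gigabyte in US dollars."""
    return price_usd / capacity_gb(sticks, stick_gb)


if __name__ == "__main__":
    for name, (sticks, stick_gb, price) in CONFIGS.items():
        print(f"{name}: ${price:,} total, "
              f"${price_per_gb(sticks, stick_gb, price):.2f}/GB")
```

At these prices the fat configuration costs about ten times as much for about 5.3 times the capacity, because the 32GB sticks carry a higher price per gigabyte (roughly $24.61 versus $13.16).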

The fat memory is available on the 670 Data Mart Appliance, the 2700 Data Warehouse Appliance, and on the 6700 Active Enterprise Data Warehouse, and will soon be available on the flash-accelerated Extreme Data Appliance. ®