Deep inside Intel's new ARM killer: Silvermont

Chipzilla's Atom grows up, speeds up, powers down

Little Atom grows up

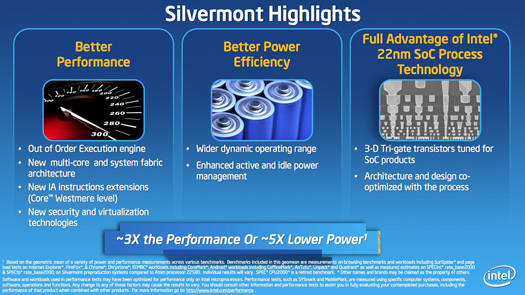

But back to the microarchitecture itself. Let's start, as Kuttanna did in his deep-dive technical explanation of Silvermont, with the fact that the new Atom microarchitecture has changed from the in-order execution used in the Bonnell/Saltwell core to out-of-order (OoO) execution, as is used in its more powerful siblings, Core and Xeon, and in most modern microprocessors.

OoO can provide significant performance improvements over in-order execution – and, in a nutshell, here's why. Both in-order and OoO processors get their instructions in the order that a software compiler has assembled them. An in-order processor takes each instruction, matches it up with the data upon which it will operate – the operand – and performs whatever task is required.

Unfortunately, that operand is not always close at hand in the processor's cache. It may, for example, be far away in main memory – or even worse, out in virtual memory on a hard drive or SSD. It might also be the result of an earlier instruction that hasn't yet been completed. When that operand is not available, an in-order execution pipeline must wait for it, effectively stalling the entire execution series until that operand is available.

Needless to say, this can slow things down mightily.

Not so with OoO. Like in-order execution, an OoO system gets its instructions in the order that a compiler has assembled them, but if one instruction gets held up while it's waiting for its operand, a later instruction that doesn't depend on it can step past it, grab its own operand, and get to work. After all the relevant instructions and their operands are happily completed, the results are retired in the original program order, and all is well – and the processor has just become significantly more efficient.
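To put rough numbers on that difference, here is a toy Python sketch of the two issue policies. It is an illustration of the general idea only, not a model of Silvermont: the single-issue pipeline, the five-instruction program, the latencies, and every name in it (`Instr`, `run_in_order`, `run_out_of_order`, `PROGRAM`) are assumptions made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Instr:
    name: str
    latency: int  # cycles from issue until the result is available
    deps: list = field(default_factory=list)  # indices of earlier instructions it needs


def run_in_order(program):
    """Issue strictly in program order; a stalled instruction blocks all behind it."""
    done = []   # cycle at which each instruction's result is ready
    cycle = 0   # earliest cycle at which the next instruction may issue
    for ins in program:
        ready = max((done[d] for d in ins.deps), default=0)
        issue = max(cycle, ready)          # wait here until operands arrive
        done.append(issue + ins.latency)
        cycle = issue + 1                  # one instruction issued per cycle
    return max(done)


def run_out_of_order(program):
    """Each cycle, issue the oldest waiting instruction whose operands are ready."""
    done = {}
    pending = list(range(len(program)))
    cycle = 0
    while pending:
        for i in pending:
            if all(d in done and done[d] <= cycle for d in program[i].deps):
                done[i] = cycle + program[i].latency
                pending.remove(i)
                break                      # at most one issue per cycle
        cycle += 1
    return max(done.values())


# Hypothetical program: a slow load (think cache miss) followed by independent work.
PROGRAM = [
    Instr("load a (miss)", 10),
    Instr("b = a + 1", 1, [0]),
    Instr("load c (hit)", 2),
    Instr("d = c * 2", 1, [2]),
    Instr("e = d - 1", 1, [3]),
]

print(run_in_order(PROGRAM))      # 15
print(run_out_of_order(PROGRAM))  # 11
```

In this made-up case the in-order pipeline sits idle behind the slow load and finishes at cycle 15, while the OoO version runs the independent `c`, `d`, `e` chain in the load's shadow and finishes at cycle 11. A real core adds register renaming, a reorder buffer, and multiple issue ports, none of which are modelled here.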

You might ask why all processors don't use OoO when it's obviously so much more productive. Well, the answer to that logical question is the sad fact of life that you don't get something for nothing. OoO requires more transistors – a processor's basic building blocks – and more transistors require both more room on the chip's die and more power.

To the rescue – again – comes Intel's 22nm Tri-Gate process. Now that Silvermont has moved to this new process, Intel's engineers decided that they had the die real estate and power savings to move into the 21st century, and Silvermont will benefit greatly from that decision.

OoO is not the only major improvement in Silvermont. Branch prediction – the ability of a processor to guess which way execution will proceed at an if-then-else decision – has also been beefed up considerably. "With our branch-processing unit," Kuttanna said, "we improved the accuracy of our predictors such that when you have a branch instruction and you have a misprediction – hopefully which happens quite rarely – you can recover from the misprediction quickly."

A quick recovery will of course improve a processor's performance. "But more importantly," Kuttanna said, "when you recover quickly you can flush the instructions that were speculating down the wrong path in the machine quickly, and save the power because of it."

Saving power is a Very Big Deal in the Silvermont architecture – after all, Atom's raison d'être is low power consumption. One of the many new features that Silvermont includes is its ability to fuse some instructions together so that, essentially, one operation accomplishes multiple tasks.

"The key innovation on Silvermont," Kuttanna said, "is that we take the [Intel architecture] instructions that we call macro operations, which have a lot of capability built-in within the instructions, and we execute those instructions in the pipeline as if they're fused operations."

Typically in Intel architecture – IA, for short – you have operations that load an operand, operate on it, then store the result. In Silvermont, Kuttanna said, most of those operations can be handled in a fused fashion, so the architecture can be built without wide, power-hungry pipelines and still achieve good performance. "Using the fused nature of these instructions," he said, "we're able to extract the power efficiency out of our pipeline – and that's one of our key innovations in this out-of-order execution pipeline."

There have also been improvements made to the latencies in the execution units themselves, as well as latencies to and from the high-speed first- and second-level caches. Kuttanna also promised that latencies have been improved in Silvermont's access to memory, partially due to improved, hardware-based prefetching, which stuffs data from memory into the level-two (L2) caches when it believes that data will soon be needed.
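As a rough illustration of what a prefetcher buys you, here is a minimal Python sketch of the simplest scheme there is: a next-line prefetcher. Silvermont's actual prefetch logic wasn't described, so treat this as a stand-in. The infinitely large cache, the instantly arriving prefetches, the 64-byte line size, and the names `count_misses` and `STREAM` are all assumptions of the example.

```python
def count_misses(addresses, prefetch_next_line, line_size=64):
    """Count cache misses for a sequence of byte addresses.

    The cache is modelled as an unbounded set of line numbers, and a
    prefetched line is assumed to arrive before the next access.
    """
    cached = set()
    misses = 0
    for addr in addresses:
        line = addr // line_size
        if line not in cached:
            misses += 1
            cached.add(line)
        if prefetch_next_line:
            cached.add(line + 1)  # guess that the following line is needed next
    return misses


# Hypothetical access stream: eight consecutive cache lines, one access each.
STREAM = list(range(0, 64 * 8, 64))

print(count_misses(STREAM, prefetch_next_line=False))  # 8
print(count_misses(STREAM, prefetch_next_line=True))   # 1
```

For a purely sequential stream the prefetcher turns eight misses into one. Real hardware has to cope with finite caches, prefetches that arrive late, and access patterns that are far less polite, so the win is never this clean.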

"The whole thing is around the theme of building a balanced system," Kuttanna said. "It's not about just delivering a great core. You gotta have all the supporting structure to deliver that performance at the system level."

That supporting structure will, well, support Silvermont varieties of up to eight cores. Those cores will be paired, two-by-two (although a single-core version was also mentioned) with shared L2 cache. Those caches will communicate with what Intel calls a "System Agent" – what some of you might also call a Northbridge – over a point-to-point connector called an in-die interconnect (IDI).

The System Agent – as did a Northbridge – links the compute cores to a system's main memory. One big difference in Silvermont versus Bonnell/Saltwell is that those earlier microarchitectures communicated with their compute cores over a frontside bus (FSB). IDI is a big improvement for a number of reasons – not the least of which is that it's snappier than FSB.

In addition, the clock rate of an FSB is closely tied to the clock rate of the compute core – if the compute core slows down, so does the FSB. IDI allows a lot more flexibility, and it can thus be dynamically adjusted for either power savings or performance gains.

There's that power-saving meme again.