
GE puts new Nvidia tech through its paces, ponders HPC future

Hybrid CPU-GPU chips plus RDMA and PCI-Express make for screamin' iron

And here's where it gets really interesting

Nvidia's "Project Denver" is an effort to bring to market a custom ARMv8 core that can be deployed both in Tegra consumer electronics products and in Tesla GPU coprocessors. Nvidia has been pretty vague about the details of Project Denver, except to say that the first product to use this ARM core will pair it with the "Maxwell" GPU in the future "Parker" hybrid Tegra chips, presumably to be called the Tegra 6.

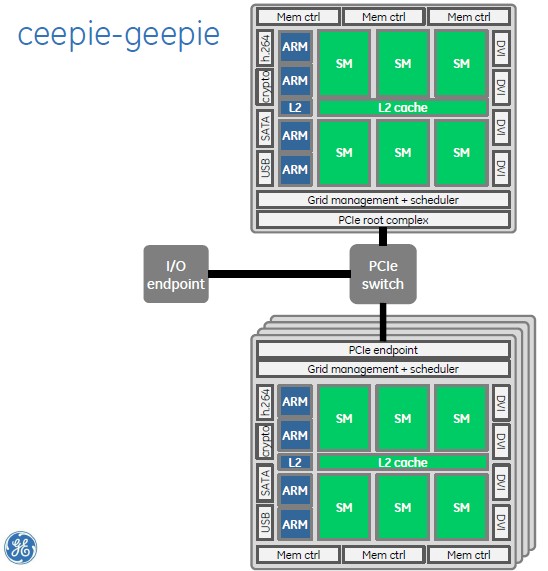

But we know that Nvidia will also create higher-end Tesla products aimed at HPC, big data, and visual computing workloads. Just for fun, and presumably based on some insight into Nvidia's possible plans, Franklin ginned up what he thinks a Tesla-class ceepie-geepie system-on-chip might look like. (Admittedly, it could be a system-on-package. We just don't know.)

That theoretical ARM-GPU mashup would have four Denver cores on the die, sharing their own L2 cache memory. It would also have six SM (streaming multiprocessor) GPU blocks, which share a separate L2 cache of their own. Three memory controllers run along the top of the die and support the unified memory addressing that is expected to debut with the "Maxwell" GPUs, perhaps late this year or early next.

The chip would have various peripheral controllers down one side and DVI ports on the other, plus a grid management and scheduler controller to keep those SM units well fed. It would also have an on-die PCI-Express controller that could be configured in software as either a PCI-Express root complex or an endpoint. That last bit is important because you could then do something like this:

How GE techies might build a cluster with Project Denver ARM cores and future GPUs from Nvidia

With PCI-Express on the chip, Nvidia could put a complex of sixteen ceepie-geepie chips on a single motherboard, delivering a hell of a lot of CPU and GPU computing without any external switching. RDMA and GPUDirect would link all of the GPUs in this cluster to each other, and it is even possible that Nvidia could implement global memory addressing across all sixteen ceepie-geepie chips. (El Reg knows that Nvidia wants to come up with a global addressing scheme that spans multiple nodes, but it is just not clear when such technology will be available.)
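To make the idea of one address space spanning sixteen chips a bit more concrete, here is a minimal sketch of one way such a scheme could work: the top bits of a global address select the chip and the remaining bits are an offset into that chip's local memory. Nvidia has not said how (or whether) it will do this. The 64GB-per-chip window, the bit layout, and the names `to_global` and `from_global` are all hypothetical, chosen purely for illustration.

```python
# Hypothetical global addressing across a 16-chip ceepie-geepie board.
# Assumption: each chip exposes a local window of 2**36 bytes (64GB);
# this is NOT a documented Nvidia scheme, just an illustration.
NUM_CHIPS = 16
LOCAL_BITS = 36                                # bits for the per-chip offset
CHIP_BITS = (NUM_CHIPS - 1).bit_length()       # 4 bits are enough for 16 chips
LOCAL_MASK = (1 << LOCAL_BITS) - 1             # low bits hold the local offset


def to_global(chip: int, local: int) -> int:
    """Combine a chip ID and a local offset into one global address."""
    if not 0 <= chip < NUM_CHIPS:
        raise ValueError(f"chip {chip} out of range 0..{NUM_CHIPS - 1}")
    if not 0 <= local <= LOCAL_MASK:
        raise ValueError("local offset does not fit in the per-chip window")
    # Chip ID goes in the high bits, local offset in the low bits.
    return (chip << LOCAL_BITS) | local


def from_global(addr: int) -> tuple[int, int]:
    """Split a global address back into (chip ID, local offset)."""
    chip = addr >> LOCAL_BITS
    if not 0 <= chip < NUM_CHIPS:
        raise ValueError("address falls outside the 16-chip address space")
    return chip, addr & LOCAL_MASK


if __name__ == "__main__":
    addr = to_global(3, 0x1000)
    print(hex(addr), from_global(addr))
```

With this layout a load or store to any global address can be routed by looking only at its top four bits, which is the kind of simple decode a PCI-Express fabric with no external switch would favour. A real design would also have to deal with coherence and caching across chips, which this sketch ignores.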

You would then have a networking endpoint of choice coming off this sixteen-way ceepie-geepie cluster to link multiple boards together. I think a 100Gb/sec InfiniBand link is a good place to start, but I would prefer the "Aries" interconnect, with its Dragonfly topology, that is at the heart of Cray's XC30 supercomputers and that is now owned by Intel.

At the moment, GPUDirect only works on Linux running on Intel iron, so it would be tough to play Crysis on a theoretical ceepie-geepie supercomputer such as the one Franklin outlines above. But Linux works on ARM, and GPUDirect will no doubt be enabled for Nvidia's own chips. All we need is a good WINE layer (remember, WINE stands for "Wine Is Not an Emulator") for making x86 Windows software work on top of ARM Linux, and we are good to go. ®