
Intel takes on all Hadoop disties to rule big data munching

'We do software now. Get used to it.'

Hadoop – and Intel – performance anxiety

Intel will offer annual subscriptions for IDH3 globally starting in the second quarter, with China getting it by the end of this quarter, at "competitive" prices. Intel is not going to be the low-priced or the high-priced Hadoop supplier, Boyd said, but of course no one really publishes prices for their Hadoop stacks, so it will be hard to tell where Intel actually lands. Intel will offer two support plans for IDH3: one with 8x5 standard business support and another with 24x7 premium support.

Intel also plans to work with selected hardware and software reseller partners to cook up integrated systems. Server upstart Cisco Systems looks to be tapping Intel's distribution for its UCS machines, perhaps in conjunction with its Tidal Enterprise Scheduler and existing Hadoop UCS bundles.

Red Hat was also trotted out at the event, and El Reg has the distinct impression that Intel and Red Hat have a gentlemen's agreement not to invade each other's software turf. Red Hat did not provide a sufficient answer when El Reg asked whether IDH3 was going to be the official Hadoop distro for Red Hat, or what the nature of its relationships with Cloudera, Hortonworks, and MapR was. What we can tell you is what we told you a week ago when Red Hat hosted its big data event: Red Hat is not going to do its own Hadoop distribution, as illogical as that may seem to many of us.

What Intel is focusing on now is the performance it can bring to bear for Hadoop with all of its hardware and software.

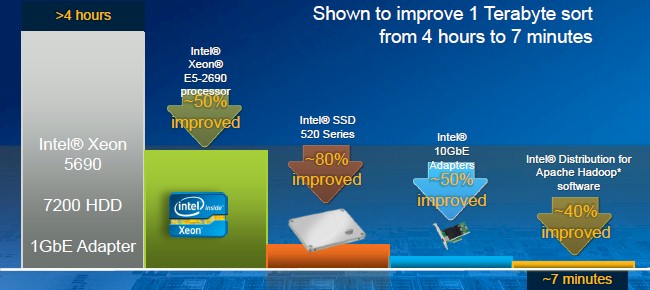

Intel says the combination of new processors, flash, 10GE, and tuning delivers big performance gains with IDH3

To make its point, Intel took a cluster based on old six-core Xeon 5690 processors, 1 Gigabit Ethernet connectivity, and 7,200 RPM spinning disks, and then replaced it with a setup based on more modern Xeon E5-2600 processors, 520 Series SSDs, 10GE adapter cards, and its IDH3 software tuned to make the best use of these features. It then ran the terabyte sort test on both clusters. The test took more than four hours on the old iron running open source Apache Hadoop and under seven minutes on the new iron using Intel's IDH3 distribution.
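For a rough sense of scale, the two run times quoted above can be turned into a speedup figure. The sketch below is back-of-the-envelope arithmetic only: Intel gave bounds rather than exact timings, so the result is a floor, and the function and constant names are our own illustration, not anything from Intel.

```python
# Bounds quoted in the article: "more than four hours" on the old cluster,
# "under seven minutes" on the new one. Exact timings were not given.
OLD_CLUSTER_MIN_SECONDS = 4 * 60 * 60  # lower bound on the old run time
NEW_CLUSTER_MAX_SECONDS = 7 * 60       # upper bound on the new run time


def speedup(old_seconds: float, new_seconds: float) -> float:
    """Return how many times faster the new run is than the old one."""
    if old_seconds <= 0 or new_seconds <= 0:
        raise ValueError("run times must be positive")
    return old_seconds / new_seconds


# The old run took at least this long and the new run at most this long,
# so the true speedup is at least the ratio of the two bounds.
speedup_floor = speedup(OLD_CLUSTER_MIN_SECONDS, NEW_CLUSTER_MAX_SECONDS)
print(f"Implied speedup: at least {speedup_floor:.1f}x")
```

Bear in mind that this compares old hardware running Apache Hadoop against new hardware running IDH3, so the ratio lumps the processor, flash, network, and software changes together and says nothing about how much each contributed.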

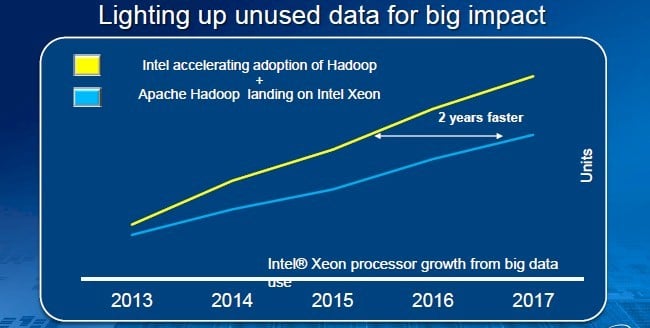

Ultimately, this is about pumping up Data Center and Connected Systems revenues and profits faster, and specifically to fill in the gaping hole slashed out of the PC market by smartphones and tablets. Before the PC market collapsed, Intel was on a crusade to double its Data Center and Connected Systems revenue to $20bn by 2016, a level set against the $10bn in sales in 2011.

Intel thinks it can accelerate big data-related Xeon sales by doing its own Hadoop

Boyd was refreshingly honest that this is about money, in fact, and shared the chart above. Intel wants to sell more Xeon chips into Hadoop clusters (and other big data products, to be sure), and it wants to do so sooner and ramp up the sales faster. And, of course, it wants to pump up its software business, too, and bring more dollars to the bottom line.

Your move, Cloudera, Hortonworks, MapR, EMC, and IBM. ®