
Cache 'n' carry: What's the best config for your SSD?

How to gain solid-state performance without losing hard drive capacity

Feature The idea of using a low-capacity SSD to store the most frequently accessed files or parts of files in order to access them more quickly than a mechanical hard drive can serve them up - a technique called SSD caching - has been around for some time, but it wasn’t until the arrival of Intel’s Smart Response Technology with the company’s Z68 chipset, released in 2011, that the technology began to be implemented in personal machines rather than servers.

Cache in your chips

Intel’s thinking was to get ordinary users into the SSD game by allowing them to put small, cheap solid-state drives into their systems alongside existing, large-capacity HDDs rather than suggesting they swap out the latter for a more expensive yet less capacious SSD. The cache drive approach brings almost all of the benefits of an SSD - fast boot times and file access - without having to break the bank to get a large storage space.

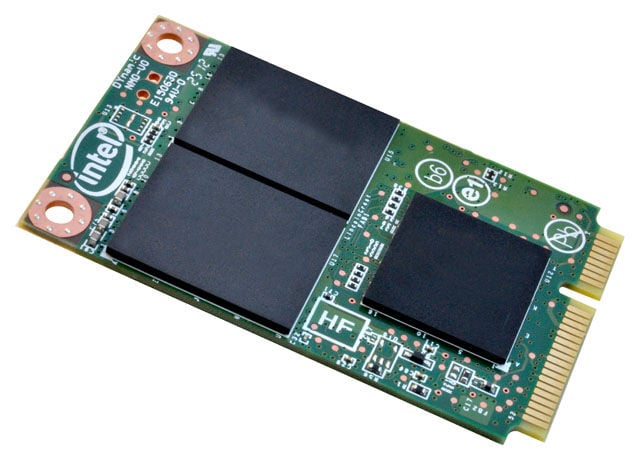

At the same time, Intel launched the 311, a 20GB capacity, 3Gb/s Sata SSD designed specifically to be used as a cache drive in Z68-based systems. Motherboard manufacturers were quick to take advantage of SRT, and a number of Z68 motherboards appeared sporting the connectors for an mSata drive. In certain Asus and Gigabyte boards, cache drives even came pre-installed.

However, what Intel and a great many others didn’t see coming was the very competitive, a polite way of saying cutthroat, pricing war that SSD suppliers are now engaged in. That has brought down the prices of mid-size SSDs to the point where many users are willing to take a punt. That said, SSDs approaching the kind of capacities we’ve come to take for granted with HDDs are still incredibly pricey. You can now pick up a fairly fast 120/128GB SSD for well under a hundred pounds to hold the OS and all the apps you need, but if you have a lot of data on your system, trading capacity for performance isn’t always attractive. Caching allows you to get the best of both worlds.

At least, that’s how it’s being sold. But is it better? Let’s find out.

Intel Smart Response Technology

Intel’s Smart Response Technology (SRT) first appeared a couple of years ago in version 10.5 of the company’s Rapid Storage Technology (RST) Raid software for the Sandy Bridge chipset, but it was initially only enabled on the Z68 desktop chipset and a couple of mobile products. SRT has since been supported on the more recent Z77, H77 and Q77 chipsets. SRT works by caching the I/O data blocks of the most frequently used applications. It is able to discriminate between high-value or multi-use data, such as boot, application and user data, and low-value data used in background tasks. The low-value data is ignored and left out of the caching process.

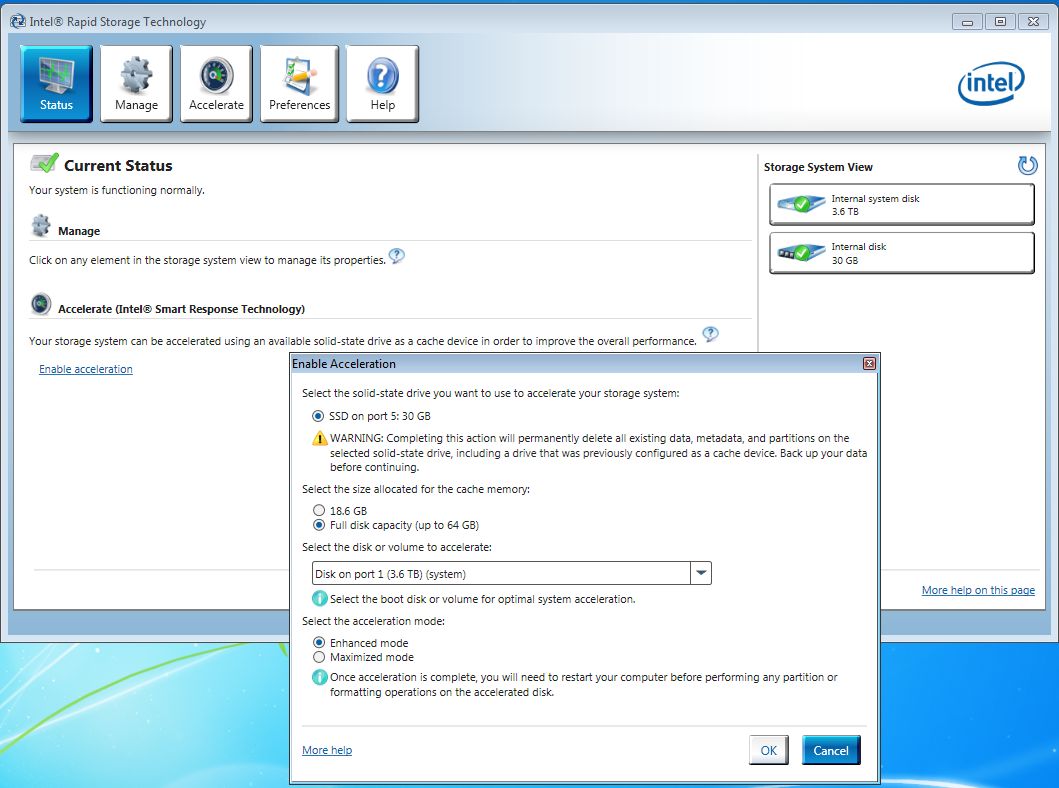

To enable SRT, you have to enter the motherboard’s Bios settings and switch the Sata controllers to Raid mode - SRT won’t work in either AHCI or IDE modes. The technology uses no more than 64GB of space on the SSD. Any extra space left over remains untouched, so there’s no point splashing out on a bigger drive. The SRT management software is an easy-to-use app which allows you to choose which of two types of caching you wish to employ.

The two types are Enhanced (Write-Through) and Maximised (Write-Back). In Enhanced mode, all the writes are sent to the SSD and HDD simultaneously, which means the drives can later be separated without you having to worry about data preservation. However, there’s a hit on performance as all the writes slow to the speed of the HDD. In Maximised mode, the majority of the host writes are captured by the SSD and asynchronously copied to the HDD when the system is idle. Once again, there are good aspects to this approach - you get faster performance than Enhanced mode - and bad: SRT must be disabled before any of the drives can be removed, otherwise the next time you boot up you’ll end up with the longest list of orphan files you’re ever likely to see. Ask me how I know...
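For readers who think better in code, here is a rough sketch of the difference between the two modes. It is a toy model, not Intel's implementation: the `ToyCache` class, its `ssd` and `hdd` dictionaries and the `flush()` method are all invented for illustration, and real SRT works on disk blocks inside the storage driver rather than on Python objects.

```python
class ToyCache:
    """Toy block cache in front of a slow backing store (hypothetical model)."""

    def __init__(self, write_back):
        self.write_back = write_back  # False mimics Enhanced, True mimics Maximised
        self.ssd = {}                 # fast cache: block number -> data
        self.hdd = {}                 # slow backing store: block number -> data
        self.dirty = set()            # blocks on the SSD not yet copied to the HDD

    def write(self, block, data):
        self.ssd[block] = data
        if self.write_back:
            self.dirty.add(block)     # defer the HDD write until flush()
        else:
            self.hdd[block] = data    # HDD is updated as part of the same write

    def flush(self):
        """Copy deferred writes to the HDD, as an idle-time flush would."""
        for block in sorted(self.dirty):
            self.hdd[block] = self.ssd[block]
        self.dirty.clear()

    def read(self, block):
        if block in self.ssd:         # cache hit: served from the SSD
            return self.ssd[block]
        data = self.hdd[block]        # cache miss: fetch from the HDD...
        self.ssd[block] = data        # ...and keep a copy for next time
        return data


enhanced = ToyCache(write_back=False)
enhanced.write(7, b"report")
# The HDD already holds block 7, so the SSD could be removed safely.

maximised = ToyCache(write_back=True)
maximised.write(7, b"report")
# Until flush() runs, the only copy of block 7 lives on the SSD.
```

In the write-back case the `dirty` set is the whole story: anything still in it when the drives are separated exists only on the SSD, which is why the cache has to be disabled, and so flushed, before a drive is pulled.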

Cool Fusion

In 2012, Apple launched what it calls Fusion Drive technology on the latest incarnations of the iMac and the Mac Mini. Currently there are only two Fusion Drive options available, both using a 128GB MLC NAND SSD. The 1TB option is available for the latest Mac Mini or any new iMac, but the 3TB option is exclusively tied to the 27in iMac. Fusion Drive uses Mac OS X’s Core Storage Logical Volume Manager, available in version 10.7 Lion and up, to present multiple drives as a single volume. The technology doesn’t behave in the same way as the usual SSD cache. Instead it moves data between the SSD and HDD and back again depending on how often the data is accessed and how much free space there is on each of the drives. The idea is to ensure that the most-read files are stored on the SSD, which is considered part of the host system’s overall storage capacity rather than a separate, ‘hidden’ buffer. El Reg will be digging deeper into Fusion in a future article.
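The contrast with caching can be sketched in a few lines. This is a deliberately simplified model and not Apple's algorithm: the `ToyTieredVolume` class, its slot-based capacity and the `rebalance()` policy are assumptions made up for illustration. The point it shows is that each item lives on exactly one drive, so the SSD adds to total capacity instead of mirroring part of the HDD.

```python
from collections import Counter


class ToyTieredVolume:
    """Toy two-tier volume: every item lives on the SSD or the HDD, never both."""

    def __init__(self, ssd_slots):
        self.ssd_slots = ssd_slots    # how many items fit on the fast tier
        self.ssd = set()
        self.hdd = set()
        self.reads = Counter()        # access count per item

    def add(self, name):
        # Assumption: new data goes to the SSD while there is room, else the HDD.
        if len(self.ssd) < self.ssd_slots:
            self.ssd.add(name)
        else:
            self.hdd.add(name)

    def read(self, name):
        if name not in self.ssd and name not in self.hdd:
            raise KeyError(name)
        self.reads[name] += 1

    def rebalance(self):
        """Move the most-read items to the SSD and everything else to the HDD."""
        everything = self.ssd | self.hdd
        # Sort by read count (highest first), then by name to break ties.
        ranked = sorted(everything, key=lambda n: (-self.reads[n], n))
        hot = set(ranked[:self.ssd_slots])
        self.ssd = hot
        self.hdd = everything - hot


vol = ToyTieredVolume(ssd_slots=2)
for name in ("a", "b", "c"):
    vol.add(name)                     # "a" and "b" land on the SSD, "c" on the HDD

for _ in range(3):
    vol.read("c")                     # "c" becomes the hottest item
vol.read("a")

vol.rebalance()                       # "c" is promoted, "b" is demoted
```

After `rebalance()`, the three items are still spread across the two tiers with no duplicates, which is the property that separates tiering from a cache.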