Huawei: Half a million IOPS? Pah, we can do better

SPC-1 speedster now fastest ever all-flash array

Huawei has captured the SPC-1 crown for disk drive and flash arrays with a 600,000-plus IOPS result for its Dorado5100 all-flash array.

The SPC-1 benchmark tests how networked storage arrays serve data requests from servers in a business environment. IBM's Storwize V7000 had headed the SPC-1 charts with a result of 520,043.99 SPC-1 IOPS scored in January this year: the first time a system had breached the half-million IOPS level. Its average response time was 7.39ms and the cost/IOPS was $6.92. Its total system cost was $3,598,956.00 at list price.

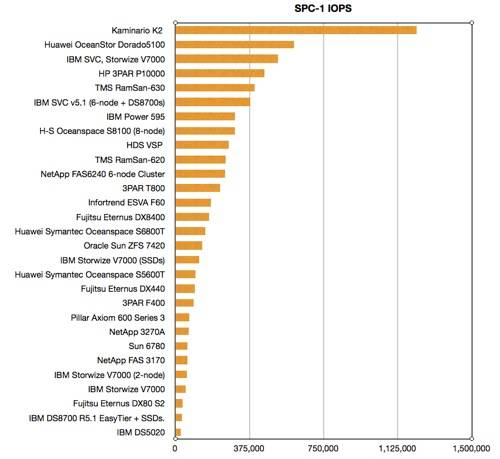

SPC-1 Results table

Kaminario topped a million IOPS a few weeks ago but that was with an all-DRAM system, so it doesn't really count unless you need stratospheric performance. Back in the real world Huawei's OceanSpace Dorado5100 array achieved 600,052.40 SPC-1 IOPS with an average response time of 1.09ms and a cost/IOPS of $0.81. The total system cost at list price was $488,617.00, meaning that an all-flash system which cost less than half a million dollars delivered more IOPS and responded faster than a $3.6m IBM storage array based on disks.
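For anyone who wants to check the sums, here is a minimal Python sketch that recomputes the cost/IOPS figures from the list prices and SPC-1 IOPS quoted above. The dictionary layout and the `cost_per_iops` helper are our own illustrative names, not part of any SPC tooling, and the inputs are only the numbers given in this article.

```python
# Figures as quoted in the article: SPC-1 IOPS, total list price, average response time.
results = {
    "IBM": {"iops": 520_043.99, "price_usd": 3_598_956.00, "resp_ms": 7.39},
    "Huawei Dorado5100": {"iops": 600_052.40, "price_usd": 488_617.00, "resp_ms": 1.09},
}


def cost_per_iops(price_usd: float, iops: float) -> float:
    """Price-performance: total list price divided by SPC-1 IOPS."""
    return price_usd / iops


for name, r in results.items():
    print(f"{name}: ${cost_per_iops(r['price_usd'], r['iops']):.2f} per SPC-1 IOPS")

ibm = results["IBM"]
huawei = results["Huawei Dorado5100"]

# How the two systems compare on price and on average response time.
price_ratio = ibm["price_usd"] / huawei["price_usd"]
response_ratio = ibm["resp_ms"] / huawei["resp_ms"]
print(f"IBM system costs {price_ratio:.1f}x as much at list price")
print(f"IBM average response time is {response_ratio:.1f}x longer")
```

On these numbers the IBM configuration costs roughly seven times as much at list price and takes nearly seven times as long to respond on average, while delivering about 13 per cent fewer IOPS.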

Now that IBM has bought TMS, which also features in the SPC-1 lists, as the chart shows, we can expect IBM results to perk up.

The Dorado5100 used mirrored flash, with a raw capacity of 19.2TB, and addressable capacity of 6.44TB. It had four flash enclosures, each with 24 x 200GB SSDs and a controller. Altogether there were two active-active controllers.
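The capacity figures can be checked the same way. This is a rough sketch using decimal terabytes (1TB = 1,000GB), with variable names of our own choosing. It only confirms the raw total and shows what share of it is addressable; it does not attempt to explain where the capacity beyond mirroring goes, since the article does not say.

```python
# Configuration as described in the article.
enclosures = 4
ssds_per_enclosure = 24
ssd_capacity_gb = 200
addressable_tb = 6.44

# Raw capacity is simply the sum of all SSD capacities.
raw_gb = enclosures * ssds_per_enclosure * ssd_capacity_gb
raw_tb = raw_gb / 1000  # decimal terabytes (assumption: 1TB = 1,000GB)

# Mirroring keeps two copies of the data, so it halves usable space at best.
mirrored_ceiling_tb = raw_tb / 2

addressable_share = addressable_tb / raw_tb

print(f"Raw capacity: {raw_tb:.1f}TB")
print(f"Ceiling after mirroring: {mirrored_ceiling_tb:.1f}TB")
print(f"Addressable share of raw: {addressable_share:.1%}")
```

So the addressable 6.44TB is about a third of the raw flash, somewhat below the half that mirroring alone would leave.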

Huawei, IBM and TMS are regulars in the SPC-1 benchmark tests, each overtaking the others in turn. Will IBM and TMS, now one organisation, find a chink in Huawei's armour and overtake it again?

A stray thought: with more suppliers – and mainstream suppliers at that – entering the all-flash array product space, how long must we wait for Gartner to produce an all-flash array Magic Quadrant diagram? Won't that be fun to look at? ®