
Amazon cloud double fluffs in 2011

There's a rumble in the data jungle

As the touchstone for cloud computing, online retailing giant Amazon likes to brag about the compute, storage, and other cloud services it sells under the Amazon Web Services brand. For whatever reason (probably to obscure the costs, and possibly the profits, of the AWS subsidiary), Amazon has not broken out the business in its financial results and still lumps it into the Other bucket.

But sometimes Amazon just can't help itself. As the new year got rolling, the company did a little chest beating about AWS.

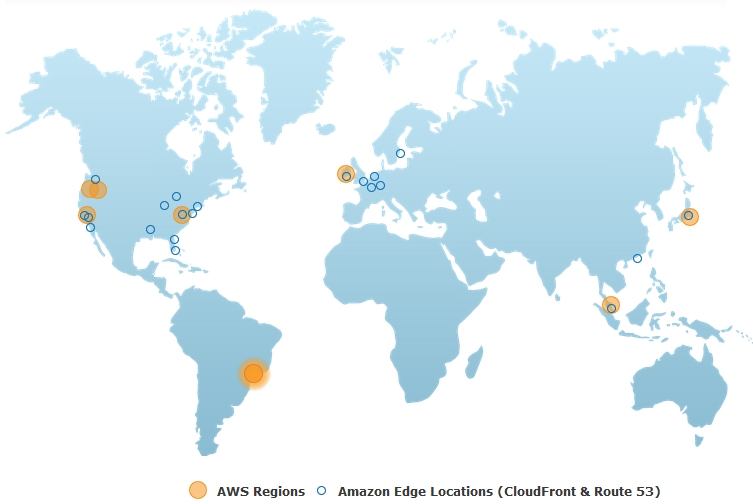

First, the company said that with the opening of its AWS data center in São Paulo, Brazil, in mid-December, it had doubled its AWS data-center footprint over the course of 2011, from four facilities to eight.

The company ended 2010 operating an AWS center in Northern California for companies on the West Coast of the US, another in Northern Virginia for the East Coast, one in Singapore for the Asia/Pacific region, and another in Dublin, Ireland, to serve Europe.

In 2011, another AWS center was plunked down in Tokyo, followed by a special super-secure cloud for the US federal government in Oregon, another center at an undisclosed location in Oregon with prices 10 per cent lower than those at the Northern California center, and, of course, the São Paulo facility that just opened. Amazon also opened seven CloudFront edge locations in 2011. CloudFront is Amazon's content-delivery network service, and its edge locations front-end the AWS data centers to speed up applications.

Here's what the Amazon AWS footprint looks like:

Amazon does not disclose how many servers it uses to run the various AWS services or exactly where they are located, but executives will toss out this statistic: every day through 2011, AWS added, on average, the same amount of server processing capacity that it took to run the Amazon online retailing operation in 2000, when it was a $2.76bn company.

"Just getting the racks into the data centers and powered up fast enough is a challenge," James Hamilton, a vice president and distinguished engineer on the AWS team, explained at the Open Compute forum last fall.

During 2011, AWS provided cloud compute services to over 100 US government agencies, and it doubled the number of CloudFront customers to over 20,000. That last figure is probably a very good indicator of the number of companies doing serious computing on Amazon's cloud, and therefore paying Amazon big bucks for various compute, storage, and auxiliary services.

Amazon does not divulge the number of customers using its core Elastic Compute Cloud (EC2) service, but it should, because EC2 is a material part of Amazon's business.

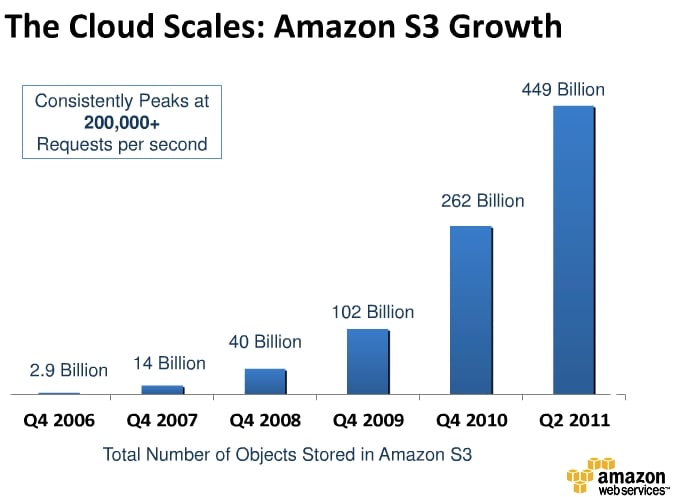

One interesting statistic that Amazon has consistently disclosed is the number of unique objects stored in its Simple Storage Service (S3) cloud. Here's how it has grown over time:

Way back in early 2006, shortly after the S3 service launched, there were 200 million objects crammed into its disk arrays. A year later, the count had exploded to 5 billion objects, and it kept right on exploding, to 18 billion in the first quarter of 2008 and 52 billion by the first quarter of 2009. According to Amazon, request traffic across S3 peaked at around 70,000 requests per second.

As the chart above shows (it plots fourth-quarter figures for every year except 2011), Amazon's S3 customers continue to swell the object count by a factor of two or three per year. Amazon had 262 billion objects in S3 at the end of 2010 and says it ended 2011 with 566 billion, more than double its 2010 load.
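The "two or three times a year" rule of thumb can be checked against the counts quoted in this story. The rough Python sketch below computes the compound growth multiple per year between pairs of data points. The 1.75-year gap assumed between the first quarter of 2009 and the end of 2010 is an approximation, and the early-2006 launch figure is left out because its exact date is not given.

```python
def annual_multiple(start: float, end: float, years: float) -> float:
    """Average (compound) growth multiple per year between two counts."""
    return (end / start) ** (1.0 / years)


# (label, years between the two points, start count, end count), with
# counts in billions of objects as quoted in the article. The 1.75-year
# gap from Q1 2009 to end 2010 is an approximation, not an Amazon figure.
INTERVALS = [
    ("Q1 2007 -> Q1 2008", 1.0, 5.0, 18.0),
    ("Q1 2008 -> Q1 2009", 1.0, 18.0, 52.0),
    ("Q1 2009 -> end 2010", 1.75, 52.0, 262.0),
    ("end 2010 -> end 2011", 1.0, 262.0, 566.0),
]

for label, years, start, end in INTERVALS:
    print(f"{label}: x{annual_multiple(start, end, years):.2f} per year")
```

Run as is, it prints multiples of roughly 3.6, 2.9, 2.5 and 2.2 per year, so the two-to-three-times pattern holds for the more recent years.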

Amazon also says that S3 was peaking at over 200,000 requests per second earlier in 2011 and was hitting 370,000 requests per second as the year came to an end, so requests are growing a little faster than the object count.
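How the request figures stack up against object growth depends on when in 2011 the 200,000 requests-per-second peak occurred, which Amazon did not say. This back-of-envelope Python sketch treats the gap in months between the two request peaks as an unknown and compares the annualized request growth with the full-year object growth. Comparing point-in-time request peaks with year-end object counts is itself a simplification.

```python
import math

# Figures quoted in the article.
PEAK_EARLY_2011 = 200_000   # requests/s, "earlier in 2011" (date not given)
PEAK_END_2011 = 370_000     # requests/s, "as the year came to an end"
OBJECTS_END_2010 = 262e9
OBJECTS_END_2011 = 566e9

request_multiple = PEAK_END_2011 / PEAK_EARLY_2011      # growth between the two peaks
object_multiple = OBJECTS_END_2011 / OBJECTS_END_2010   # growth over one full year


def annualized(multiple: float, months: float) -> float:
    """Convert growth observed over `months` into an equivalent 12-month multiple."""
    return multiple ** (12.0 / months)


# Gap (in months) at which annualized request growth exactly matches object growth.
break_even_months = 12.0 * math.log(request_multiple) / math.log(object_multiple)

print(f"requests x{request_multiple:.2f}, objects x{object_multiple:.2f}")
print(f"break-even gap: {break_even_months:.1f} months")
for months in (6, 9, 12):
    print(f"gap of {months} months -> x{annualized(request_multiple, months):.2f} per year")
```

With these numbers the break-even gap is about 9.6 months: if the 200,000 peak came later than roughly mid-March 2011, request growth annualizes to more than the 2.16-times object growth; if it came earlier, to less.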

It would be very interesting to know just how much capacity those 566 billion objects take up and how many servers are needed to drive them. But Amazon is not talking, because that is super-secret information that even Jeff Bezos, Amazon's founder, has to burn before reading (a suitable task for his Kindle Fire, we suppose).

Moreover, now that Amazon has announced automatic S3 object expiration (in December), growth in the S3 object count could slow significantly. That is good news for both Amazon and its S3 customers, who will store only the data they actually consider useful.
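In S3, expiration is set per bucket as a lifecycle rule: objects whose keys match a prefix are deleted automatically a given number of days after they were created. The Python sketch below assumes the dictionary format used by the boto3 SDK's `put_bucket_lifecycle_configuration` call (the underlying REST API takes an equivalent XML document). The rule ID, key prefix, bucket name and 30-day period are all hypothetical, and the sketch only builds and prints the configuration rather than calling AWS.

```python
import json


def expiration_rule(rule_id: str, prefix: str, days: int) -> dict:
    """Build one S3 lifecycle rule that expires objects under `prefix`
    `days` days after they are created."""
    if days < 1:
        raise ValueError("expiration must be at least one day")
    return {
        "ID": rule_id,
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Expiration": {"Days": days},
    }


# Hypothetical example: delete log files 30 days after upload.
lifecycle = {"Rules": [expiration_rule("expire-old-logs", "logs/", 30)]}
print(json.dumps(lifecycle, indent=2))

# With AWS credentials configured, the rule could be applied like this
# (not executed here; "example-bucket" is a placeholder name):
#   import boto3
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="example-bucket", LifecycleConfiguration=lifecycle)
```

Because each rule is scoped by prefix, a customer can expire short-lived data such as logs while leaving everything else in the bucket untouched.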

Amazon also does not discuss how much revenue AWS is raking in, how much investment it takes, or what profits, if any, it generates.

The company breaks out media sales and electronics and other merchandise sales, then puts everything else into a generic "Other" category, which includes AWS, marketing and promotion, sales generated through co-marketer affiliate sites, and interest and other fees from Amazon-branded credit cards.

It is hard to say whether AWS accounts for the lion's share of this Other category, but it is tempting to believe so. In 2008, Amazon booked $19.2bn in sales, $542m of it in Other revenues. In 2009, Amazon's sales grew by 27.9 per cent to $24.5bn, while Other grew by 20.5 per cent to $653m. In 2010, Other grew by 45.9 per cent to $953m, while Amazon overall grew by only 39.6 per cent to $34.2bn. In the first nine months of 2011, Amazon posted $30.6bn in sales (up 44.2 per cent), of which the Other category accounted for $1.08bn (up 70.4 per cent).
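The growth comparison can be reproduced from the figures above. This small Python sketch uses the rounded numbers quoted here. Because the 2011 entry covers only nine months and no dollar figure is given for the same period of 2010, the sketch takes the reported growth rates for the gap between Other and overall growth rather than recomputing them.

```python
# Figures quoted in the article, in billions of US dollars (rounded).
# "9M 2011" covers only the first nine months of the year.
TOTAL = {"2008": 19.2, "2009": 24.5, "2010": 34.2, "9M 2011": 30.6}
OTHER = {"2008": 0.542, "2009": 0.653, "2010": 0.953, "9M 2011": 1.08}

# Reported year-over-year growth, in per cent: (Amazon overall, Other).
REPORTED_GROWTH = {"2009": (27.9, 20.5), "2010": (39.6, 45.9), "9M 2011": (44.2, 70.4)}


def growth_pct(new: float, old: float) -> float:
    """Growth from `old` to `new`, in per cent."""
    return (new / old - 1.0) * 100.0


def other_share_pct(period: str) -> float:
    """Other revenues as a percentage of total sales for one period."""
    return OTHER[period] / TOTAL[period] * 100.0


# Cross-check the full-year rates against the dollar figures.
for year, prev in (("2009", "2008"), ("2010", "2009")):
    total_g = growth_pct(TOTAL[year], TOTAL[prev])
    other_g = growth_pct(OTHER[year], OTHER[prev])
    print(f"{year}: overall +{total_g:.1f}%, Other +{other_g:.1f}%")

# How far Other growth runs ahead of (or behind) Amazon overall.
for period, (total_g, other_g) in REPORTED_GROWTH.items():
    print(f"{period}: gap {other_g - total_g:+.1f} points, "
          f"Other share {other_share_pct(period):.1f}%")
```

Recomputed from the rounded dollar figures, 2009 sales growth comes out at 27.6 rather than 27.9 per cent, a rounding artefact; the other rates match. The gap between Other and overall growth moves from about -7.4 points in 2009 to +6.3 in 2010 and +26.2 in the first nine months of 2011, while Other's share of sales edges up from roughly 2.7 to 3.5 per cent.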

Other is now growing firmly ahead of Amazon overall, and the growth is accelerating. That sure sounds like cloud computing – if you believe all the hype. ®