Future of computing crystal-balled by top chip boffins

Bad news: It's going to be tough. Good news: You won't be replaced

If you thought that the microprocessor's first 40 years were chock full of brain-boggling developments, just wait for the next 40 – that's the consensus of a quartet of Intel heavyweights, past and present, with whom we recently spoke.

At the 4004's 40th birthday party in a San Francisco watering hole on November 15, The Reg got an interesting earful from the leader of that first commercially available microprocessor's design team, Federico Faggin. At that same soirée, we also buttonholed Shekhar Borkar of Intel Labs, where he directs microprocessor technology research.

Both men expressed confidence leavened with caution when describing the frontiers of microprocessor design and the future of computing.

Coupled with our earlier discussions with Intel microprocessor architect Steve Pawlowski and process technologist Mark Bohr, we came away with the impression that the past 40 years – especially the first 30 – weren't a simple and straightforward matter of process-size scaling as they may have appeared to those of us who were outside of the research labs.

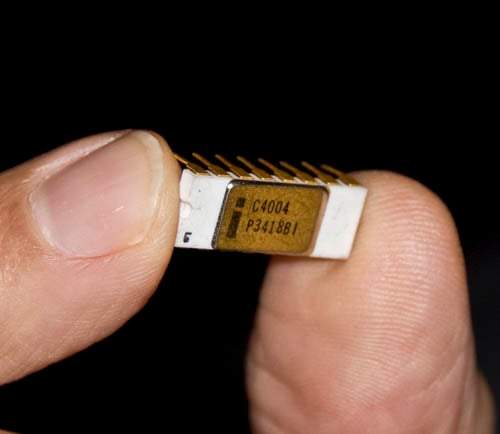

The chip that helped start the computing revolution, in the hand of its lead designer

We also learned that the next 10 years will be tough – and that after that, the crystal ball goes dark.

It ain't been no cakewalk

When we asked Borkar and Faggin about what might be the biggest challenges when developing future microprocessor technologies, Borkar pointed out that process improvements have been challenging since day one. "It's really tough. It has been tough."

Referring to the microprocessor's first 30 years, when process technology focussed on scaling using Robert Dennard's MOSFET scaling guidelines as outlined in his landmark 1974 paper, Borkar said, "It wasn't a breeze. Dennard scaling laid down the recipe – and it was a very good recipe. And people said, 'Let's follow it'. But it wasn't easy."

Times have changed, though – and not for the better. "Now," he said, "that recipe doesn't work."

Borkar then backtracked a bit, clarifying his statement to say that he believes that engineers will continue to advance scaling, but through more-selective choices of techniques. "It's not fair to say that we are at the end of Dennard scaling," he admitted.

Shekhar Borkar

"Dennard scaling showed a simple recipe. A well-behaved recipe," he said. "Now what we are doing is we are following that recipe, but we are doing it intelligently. 'Okay, I am going to scale length only', or 'I am going to scale the oxide, and not length'. We're doing it intelligently, as opposed to scaling everything down. So it is not fair to Dennard to say we are not following his recipe."

And Borkar has plenty of confidence in continued innovation. "The engineers," he said, "they'll find out a way to do it. There have been lots of innovations that have happened in process technologies – even in the 90s, when they were following Dennard scaling all the way through."

Nowadays, Borkar said, the challenges are getting tougher day by day. Still, he again put his faith in the ingenuity of engineers. "The one thing that gets the engineers going is a challenge. So when you give them this challenge: 'Make that 10 nanometer device work for me', they don't know better – they make it work for you," he said.

"That's what we did for the last 40 years."

Federico Faggin agreed that experimentation, failure, and innovation are what's needed to push the process forward. "That's life in the trenches," he said.

"I agree with Shekhar that engineers will figure out a way," he continued. "They will not figure out a way to use half an atom to do something, so there's going to be a limit on how small...", at which point Borkar interrupted him, joking: "Go and challenge them. That's my policy."