Original URL: https://www.theregister.com/2011/11/14/top_500_supers_nov_2011/

Top 500 Supers: Détente in East, West petaflops race

An arsenal of big iron deploying soon

Posted in HPC, 14th November 2011 14:02 GMT

SC11 For the first time since the Top 500 ranking of supercomputers was started back in 1993, the top 10 machines on the list are ranked in exactly the same order as they were in the list six months ago. But the HPC racket is set to explode, with multi-petaflops machines in the works using new processors and GPU coprocessors.

You can blame some of the détente in the arms race between the supercomputer labs in the United States and Europe in the West and Japan and China in the East on Intel and Advanced Micro Devices, which are both late bringing their respective Xeon E5 and Opteron 6200 processors to market. Yeah, yeah, El Reg knows that official launch dates were never divulged so you can't technically call them late, but let's face it, chipheads: Everyone, including your server partners, wanted these chips out the door earlier in the third quarter.

If these Xeon E5 and Opteron 6200 chips had got out the door and been put into machines that were in turn accepted by the big supercomputer labs, then the top-heavy part of the Top 500 list would have seen some churn. And maybe even more than one machine above 10 petaflops.

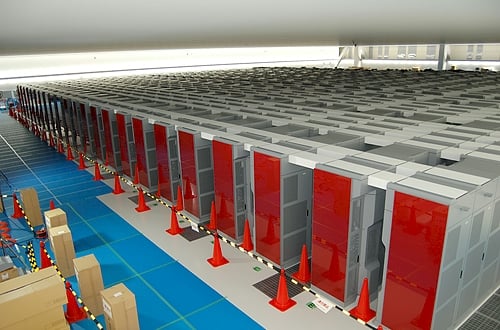

Top of the flops: Fujitsu's Sparc64-based K supercomputer

But, as it stands, there is only one machine in the world that has broken the 10 petaflops barrier running the Linpack Fortran benchmark test, and that is the K super built by Fujitsu for the Japanese government. The K super is a massive beast based on Fujitsu's eight-core Sparc64-VIIIfx processor and a 6D mesh/torus interconnect called "Tofu" (you'd think it would be called Super Noodle or something like that...), and only two weeks ago the Rikagaku Kenkyusho (Riken) research lab in Kobe, Japan, said that it had attained its full capacity of 864 racks, with 705,024 cores running at 2GHz, a peak theoretical throughput of 11.28 petaflops, and 10.51 sustained petaflops on the Linpack test. This machine doesn't need no stinking GPUs and does all of its flopping with beefy RISC cores. It has also run out of expansion room, and that is why Fujitsu, only a week later, announced its successor, the PrimeHPC FX10.

The FX10 super, unlike K, is meant to be a commercial product rather than a one-off built to test the concept for the Japanese government. The FX10 machine will be based on Fujitsu's own 16-core Sparc64-IXfx processor, which will run at 1.85GHz and deliver 236.5 gigaflops, a little less than twice the 128 gigaflops that the Sparc64-VIIIfx chip delivers.

The PrimeHPC FX10 machine will scale from 4 to 1,024 cabinets, sporting between 384 and 98,304 nodes. Fully loaded, this behemoth will deliver a peak performance of 23.25 petaflops. Based on the list prices Fujitsu announced, such a machine would cost $656m. Even in today's world, that is a crazy amount of money. And at an estimated 23 megawatts, the electric bill for an FX10 machine is going to be a doozy. Fujitsu thinks it can sell 50 of the PrimeHPC machines over the next three years, but they will not be fully loaded – that's for sure. Unless it can be shown to make Google and Baidu searches faster. The FX10 machines will start shipping in January 2012. (Did you hear that, Oracle?)
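For anyone who wants to check the FX10 sums, here is a minimal Python sketch of the arithmetic behind those figures. It uses only the numbers quoted above and assumes one Sparc64-IXfx processor per node, which the article does not state; the function and constant names are made up for illustration.

```python
# Figures as quoted in the article for Fujitsu's PrimeHPC FX10.
GFLOPS_PER_CHIP = 236.5          # peak per Sparc64-IXfx processor
CHIPS_PER_NODE = 1               # assumption: not stated in the article
LIST_PRICE_USD = 656e6           # fully loaded configuration


def nodes_per_cabinet(nodes: int, cabinets: int) -> float:
    """Average number of nodes housed in each cabinet."""
    return nodes / cabinets


def peak_petaflops(nodes: int) -> float:
    """Peak theoretical throughput in petaflops for a given node count."""
    gigaflops = nodes * CHIPS_PER_NODE * GFLOPS_PER_CHIP
    return gigaflops / 1e6       # 1 petaflops = 1,000,000 gigaflops


if __name__ == "__main__":
    # Smallest and largest configurations quoted in the article.
    for cabinets, nodes in [(4, 384), (1024, 98_304)]:
        print(f"{cabinets:>5} cabinets: "
              f"{nodes_per_cabinet(nodes, cabinets):.0f} nodes/cabinet, "
              f"{peak_petaflops(nodes):.4f} petaflops peak")

    full = peak_petaflops(98_304)
    print(f"List price per peak petaflops: ${LIST_PRICE_USD / full / 1e6:.1f}m")
```

Under that one-chip-per-node assumption, both ends of the range work out to the same number of nodes per cabinet, and the fully loaded machine rounds to the 23.25 petaflops quoted, which suggests the assumption is at least consistent with Fujitsu's figures.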

The next time the Top 500 list comes around on the guitar, hybrid CPU-GPU machines from Cray, PowerPC A2 machines from IBM, and homegrown massively parallel machines based on MIPS and Alpha derivatives will be jockeying for the top of the flops charts, and K will stay where it is, much as the Earth Simulator massively parallel vector super built by NEC for the Japanese government did a decade ago. (This machine is still ranked number 95 on the list.)

But Fujitsu looks like it is trying to get into the exascale race, and it will be interesting to see the political storm that erupts when and if a US or European lab suddenly decides it wants an FX10 machine instead of some more indigenous massively parallel beast. It would be interesting to see NEC and Hitachi try to come back to the K party with vector engines of some sort after they left Fujitsu holding the K super bag back in May 2009 when the Great Recession was smashing their financials.

Number two son

Number two on the list is the Tianhe-1A hybrid super at the National Supercomputing Center in Tianjin, China. The Tianhe-1A mixes six-core Intel Xeon processors, Nvidia Tesla GPUs, and a smattering of homegrown Sparc processors. (China is making its own Sparc, MIPS, and Alpha processors – it's like the late 1990s meets the early 2010s.) The resulting machine hit 2.56 petaflops on the Linpack test and hasn't changed a bit since it entered the list a year ago. The Tianhe-1A ceepie-geepie has 14,336 Xeon processors and 7,168 of Nvidia's Tesla M2050 fanless GPU coprocessors. It uses a homegrown tray server design and a proprietary interconnect called Arch, which glues together the 186,368 cores in those CPUs and GPUs. The Tianhe-1A machine has a peak theoretical performance of 4.7 petaflops, so it is only running at 54.6 per cent efficiency on the Linpack test, which is pretty bad compared to the Fujitsu K box, which is delivering 93.2 per cent efficiency. Even so, Tianhe-1A is not doing any worse than other monster ceepie-geepie machines.
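The efficiency figures are simply sustained Linpack performance divided by peak theoretical performance (Rmax over Rpeak, in the Top 500's own terms). A small Python sketch, using the rounded petaflops numbers quoted in this article and a hypothetical helper name, shows the calculation. Because the inputs are rounded, the Tianhe-1A result can land about a tenth of a point away from the 54.6 per cent quoted, which was presumably worked out from unrounded values.

```python
def linpack_efficiency(rmax_pflops: float, rpeak_pflops: float) -> float:
    """Sustained Linpack performance as a percentage of theoretical peak."""
    if rpeak_pflops <= 0:
        raise ValueError("peak performance must be positive")
    return 100.0 * rmax_pflops / rpeak_pflops


# (sustained, peak) in petaflops, rounded as quoted in the article.
SYSTEMS = {
    "K": (10.51, 11.28),
    "Tianhe-1A": (2.56, 4.7),
}

if __name__ == "__main__":
    for name, (rmax, rpeak) in SYSTEMS.items():
        print(f"{name:<10} {linpack_efficiency(rmax, rpeak):5.1f} per cent")
```

The gap between the two machines is the point: the CPU-only K box turns nearly all of its peak flops into Linpack flops, while the hybrid leaves a bit less than half of its peak on the table.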

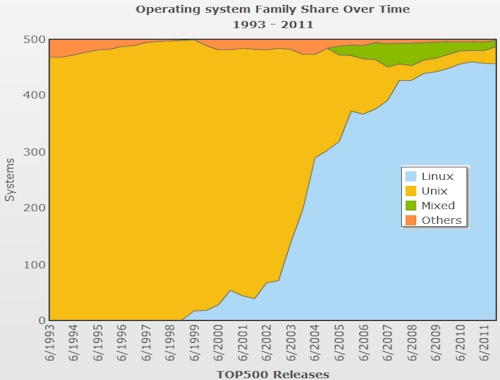

Traditional HPC means Linux. Next!

The top machine in the US is still the "Jaguar" Cray XT5 system at Oak Ridge National Laboratory, which has 224,162 Opteron cores and which is rated at 1.76 petaflops of sustained performance on the Linpack test. This machine ranks third on the list, and is in the process of being transformed into a 20 petaflops hybrid CPU-GPU XK6 super, a deal that Cray took down last month. The US Department of Energy is shelling out $97m for that upgrade, which will combine the Opteron 6200 processors from AMD with the future "Kepler" GPU coprocessors from Nvidia. Oak Ridge is hoping to hit an exaflops – one thousand petaflops or one million teraflops – sometime in the 2018 timeframe.

China's "Nebulae" ceepie-geepie, built from Xeon processors and Nvidia Teslas, is installed at the National Supercomputing Center in Shenzhen and ranks fourth on the Top 500 list. Nebulae has a total of 120,640 cores across its CPUs and GPUs, which are housed in a blade server chassis crafted by Chinese server maker Dawning. It has 1.27 petaflops of sustained performance on the Linpack test.

Number five is the Tsubame 2.0 super, built from Hewlett-Packard's ProLiant SL390s G7 tray servers and sporting Xeon CPUs and Nvidia Tesla GPUs and some help from prime contractor NEC – a political necessity to get a machine installed at the Tokyo Institute of Technology (and why Sun Microsystems, which built Tsubame 1.0, needed NEC as a prime contractor, too). Tsubame 2.0 has 73,278 cores and a Linpack sustained performance of 1.19 petaflops.

Cray's "Cielo" XE6 super at Los Alamos National Laboratory, based on eight-core Opteron 6136 processors and using the "Gemini" XE interconnect, ranks number six, as it did in June. It has 142,272 cores and delivered 1.11 petaflops of sustained Linpack.

Silicon Graphics, which just last week said it had scored a deal with NASA to upgrade the "Pleiades" Xeon cluster to 10 petaflops over the next several years, has spot number seven with the current implementation of the Pleiades machine. It has 111,104 Xeon cores of various vintages and is installed at NASA's Ames Research Center; it delivers 1.09 petaflops of number-crunching oomph.

Cray holds the number eight position on the November 2011 list with an XE6 machine called "Hopper" based on the twelve-core Opteron 6100 processors. This machine is installed at the DOE's Lawrence Berkeley National Laboratory and has 153,408 cores; it delivers 1.05 petaflops.

Number nine on the list is the Bullx parallel cluster built by Bull for the Commissariat a l'Energie Atomique (CEA) in France, which is based on the Xeon 7500 processors from Intel and uses Quad Data Rate (QDR) InfiniBand to link nodes. It delivers 1.05 petaflops as well on the Linpack test.

That leaves number 10, the machine that first broke the petaflops barrier and which is the poster child for ceepie-geepies: IBM's "Roadrunner" hybrid Opteron-Cell blade super. This machine is rated at 1.04 petaflops.

Those 10 machines are the only ones to break the petaflops barrier as gauged by the Linpack test. (No one is claiming that is a perfect test here at El Reg, by the way.) There are a whole bunch of machines that come close.

The University of Stuttgart has an XE6 machine using the new Opteron 6200s that rates 831 teraflops. Meanwhile, the Sunway BlueLight parallel machine, which was announced two weeks ago at the National Supercomputing Center in Jinan, China – and using what is rumored to be a modified Alpha processor – ranked number 14 on the list with 795 teraflops of sustained performance. And Appro International has a new Xtreme-X machine at Lawrence Livermore National Laboratory that uses Intel's Xeon E5 chips and hit 773.7 teraflops on the test with its 46,208 cores.

In fact, there are another 10 systems on the list that have more than 500 teraflops of sustained performance.

To everything, churn, churn, churn

While the top machines in the Top 500 list didn't change much in the November 2011 list, there was plenty of churn going on underneath these "capacity systems" as academic research institutions, government labs, and companies upgrade their HPC systems.

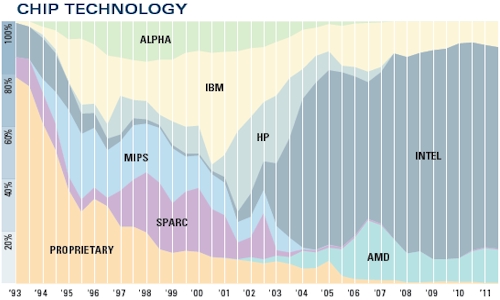

The number of Power-based systems on the list rose to 49, up from 45 six months ago. The number of systems using Intel Xeon or Itanium processors was down by two to 384 machines, and the number using AMD's Opterons slipped by three to 63 machines. That leaves five other machines using other processors – K with its Sparc64s, one NEC vector machine, and some other oddities. If you adjust those numbers by cores, obviously Sparc cores represent a very large portion of the aggregate core count on the Top 500 list.

By the way, there are six Cray XE6 supers on the list already using the just-announced 16-core "Interlagos" Opteron 6200 processors and there is another machine in the Rackable line from Silicon Graphics using a 12-core Opteron 6200. All told, there are 363,656 cores right there, the vast majority of the half million cores that AMD says it has already shipped to HPC and cloud computing customers before today's launch of the Opteron 4200 and 6200 processors at the SC11 show. Similarly, there are 10 supers on the list that are using the not-yet-announced "Sandy Bridge-EP" Xeon E5 processors from Intel, and these machines have a combined 162,656 cores.

There are over 9.2 million cores in the Top 500 list and even with some very large Power and Sparc machines, most of them are x86 cores of one shape or another. Intel's "Westmere" Xeon 5600 and E7 processors are used in 244 systems, up from 175 machines in the June 2011 ranking.

Power and x86 systems dominate the Top 500 HPC systems list

And with this iteration of the list, the researchers behind the Top 500 – Hans Meuer of the University of Mannheim; Erich Strohmaier and Horst Simon of Lawrence Berkeley National Laboratory; and Jack Dongarra of the University of Tennessee – have started counting GPU cores as well as CPU cores (but have stopped short of reckoning how much each does of the Linpack work on any given machine).

In the November 2011 ranking, there are 39 machines that have GPU accelerators, up from 17 only six months ago. Two are using IBM's Cell coprocessors, which are GPUs of a sort, two use AMD's ATI Radeon cards, and the remaining 35 use one or another form of Nvidia Tesla GPU coprocessors or Quadro graphics cards. For some reason, the Roadrunner hybrid Opteron-Cell machine has not been given a GPU core count in the list, but for those using Nvidia or AMD GPUs to goose floating point performance, the GPUs represent 63.1 per cent of the aggregate 532,480 cores in those systems.

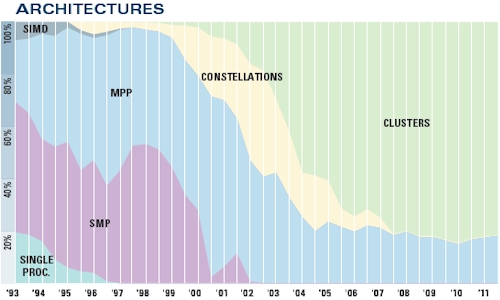

MPPs and clusters dominate the upper echelons of HPC

The Top 500 machines have an aggregate sustained performance of 74.2 petaflops, so we are nowhere near having an exaflops of computing capacity installed across the top supercomputer centers of the world, much less cramming it all into one system. Six months ago, the combined performance on the list was 58.7 petaflops, and a year ago it was 43.7 petaflops. The near-linear growth in aggregate performance on the Top 500 list continues apace. To even get on the list this time around, a machine has to have at least 50.9 teraflops of oomph, up from 39.1 teraflops in the June 2011 list.
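To see what "near-linear" means with these numbers, the following Python sketch computes the change between successive lists, both in absolute terms and as a percentage. It assumes "a year ago" refers to the November 2010 list; the names are illustrative only, and the sketch makes no attempt to forecast future lists.

```python
# Aggregate sustained Linpack performance of the whole list, in petaflops,
# as quoted in the article ("a year ago" is taken to mean November 2010).
AGGREGATE_PFLOPS = [("Nov 2010", 43.7), ("Jun 2011", 58.7), ("Nov 2011", 74.2)]

# Minimum sustained performance needed to make the list, in teraflops.
ENTRY_TFLOPS = [("Jun 2011", 39.1), ("Nov 2011", 50.9)]


def period_changes(series):
    """Return (label, absolute change, percentage change) per later entry."""
    changes = []
    for (_, prev), (label, curr) in zip(series, series[1:]):
        delta = curr - prev
        changes.append((label, delta, 100.0 * delta / prev))
    return changes


if __name__ == "__main__":
    for label, delta, pct in period_changes(AGGREGATE_PFLOPS):
        print(f"{label}: +{delta:.1f} petaflops ({pct:.1f} per cent)")
    for label, delta, pct in period_changes(ENTRY_TFLOPS):
        print(f"{label} entry bar: +{delta:.1f} teraflops ({pct:.1f} per cent)")

    # An exaflops is 1,000 petaflops; how many times today's whole list is that?
    latest = AGGREGATE_PFLOPS[-1][1]
    print(f"Exaflops / current aggregate: {1000.0 / latest:.1f}x")
```

The absolute gain comes out at roughly 15 petaflops in each six-month period, while the percentage gain slips from about 34 to about 26 per cent, which is what steady, linear-looking growth over a single year looks like.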

While you would think that the Top 500 was all about performance and therefore would be composed of systems using only the fastest interconnects between server nodes in the clusters, there are plenty of jobs that are not latency or bandwidth sensitive – and HPC shops are notorious cheapskates – so Gigabit Ethernet is still the most popular networking used in machines on the list. It is, however, down from 230 machines in the June list. InfiniBand has seen a slight bump, rising to 213 machines, up by five from six months ago. If you look at it by system capacity, InfiniBand wins hands down, with 28.7 petaflops of aggregate floating point power compared to 14.2 petaflops for Gigabit Ethernet.

By vendor, IBM is the king of the list still, with 223 systems, and Hewlett-Packard is second, with 140 machines. IBM is up a bit and HP is down a bit. Cray has 27 machines on the list, Silicon Graphics has 17 machines, Bull has 15 machines, and Appro International, which has scored some big deals in the US and Japan in recent months, has 13 machines.

If you look at the list by vendor and aggregate performance, then IBM actually increased its lead by nearly a point and now has 27.3 per cent of the total flops on the Top 500 list. (And when IBM drops 30 petaflops of BlueGene/Q machinery on the list sometime next year, it will get even more share.) Fujitsu jumped from nowhere a year ago to the number two spot in aggregate performance, with the K super and one other tiny machine together accounting for 14.7 per cent of the list. Cray is right behind with 14.3 per cent of the performance pie and down 1.2 points (and also due to change when some very large machines come online later this year or early next year). HP ranks fourth in terms of aggregate performance on the Top 500, with a 12.9 per cent share.

Supercomputing is a proxy for war in some ways of thinking, and is even used to build and maintain war machines as well as perform the do-gooder science that makes all the press releases. The United States has 263 machines on the list and Europe has 127 machines, still a little ahead of Asia, which has 106 machines. The US added nine machines in the past six months, Europe two, and Asia 22. I think you can see where this is heading. Japan has 30 machines on the list, four more than in June, but China ain't messing around with HPC and has 75 machines (up from 64 in June). The United Kingdom has 27 supers on the list, France has 23, and Germany has 20. ®