
Top 500 Supers: Détente in East, West petaflops race

An arsenal of big iron deploying soon

To everything, churn, churn, churn

While the top machines in the Top 500 list didn't change much in the November 2011 list, there was plenty of churn going on underneath these "capacity systems" as academic research institutions, government labs, and companies upgraded their HPC systems.

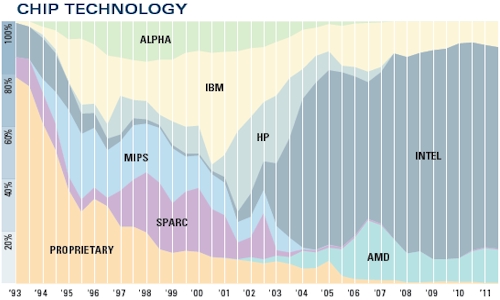

The number of Power-based systems on the list rose to 49, up from 45 six months ago. The number of systems using Intel Xeon or Itanium processors was down by two to 384 machines, and the number using AMD's Opterons slipped by three to 63 machines. That leaves five other machines using other processors – K with its Sparc64s, one NEC vector machine, and some other oddities. If you adjust those numbers by cores, obviously Sparc cores represent a very large portion of the aggregate core count on the Top 500 list.

By the way, there are six Cray XE6 supers on the list already using the just-announced 16-core "Interlagos" Opteron 6200 processors and there is another machine in the Rackable line from Silicon Graphics using a 12-core Opteron 6200. All told, there are 363,656 cores right there, the vast majority of the half million cores that AMD says it has already shipped to HPC and cloud computing customers before today's launch of the Opteron 4200 and 6200 processors at the SC11 show. Similarly, there are 10 supers on the list that are using the not-yet-announced "Sandy Bridge-EP" Xeon E5 processors from Intel, and these machines have a combined 162,656 cores.

There are over 9.2 million cores in the Top 500 list and even with some very large Power and Sparc machines, most of them are x86 cores of one shape or another. Intel's "Westmere" Xeon 5600 and E7 processors are used in 244 systems, up from 175 machines in the June 2011 ranking.

Power and x86 systems dominate the Top 500 HPC systems list

And with this iteration of the list, the researchers behind the Top 500 – Hans Meuer of the University of Mannheim; Erich Strohmaier and Horst Simon of Lawrence Berkeley National Laboratory; and Jack Dongarra of the University of Tennessee – have started counting GPU cores as well as CPU cores (but have stopped short of reckoning how much of the Linpack work each does on any given machine).

In the November 2011 ranking, there are 39 machines that have GPU accelerators, up from 17 only six months ago. Two are using IBM's Cell coprocessors, which are GPUs of a sort, two use AMD's ATI Radeon cards, and the remaining 35 use one or another form of Nvidia Tesla GPU coprocessors or Quadro graphics cards. For some reason, the Roadrunner hybrid Opteron-Cell machine has not been given a GPU core count in the list, but for those using Nvidia or AMD GPUs to goose floating point performance, the GPUs represent 63.1 per cent of the aggregate 532,480 cores in those systems.

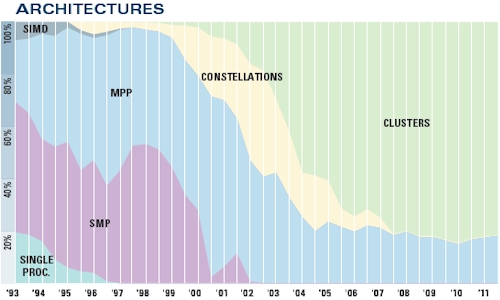

MPPs and clusters dominate the upper echelons of HPC

The Top 500 machines have an aggregate sustained performance of 74.2 petaflops, so we are nowhere near having an exaflops of computing capacity installed across the top supercomputer centers of the world, much less cramming it all into one system. Six months ago, the combined performance on the list was 58.7 petaflops, and a year ago it was 43.7 petaflops. The near-linear growth in aggregate performance on the Top 500 list continues apace. To even get on the list this time around, a machine has to have at least 50.9 teraflops of oomph, up from 39.1 teraflops in the June 2011 list.
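For anyone who wants to check the "near-linear" claim, here is a minimal Python sketch that works only from the three aggregate figures quoted above. The list labels and the `half_year_steps` helper are our own illustrative names, not anything published by the Top 500 organizers.

```python
# Aggregate Linpack performance of the Top 500 list, in petaflops,
# as quoted in the article. The date labels are assumed from the
# list's twice-yearly (June/November) schedule.
aggregate_pf = {
    "Nov 2010": 43.7,
    "Jun 2011": 58.7,
    "Nov 2011": 74.2,
}

def half_year_steps(series):
    """Return (label, absolute gain, percentage gain) for each consecutive pair."""
    labels = list(series)
    steps = []
    for prev, cur in zip(labels, labels[1:]):
        gain = series[cur] - series[prev]
        pct = 100.0 * gain / series[prev]
        steps.append((cur, round(gain, 1), round(pct, 1)))
    return steps

steps = half_year_steps(aggregate_pf)
for label, gain, pct in steps:
    print(f"{label}: +{gain} petaflops ({pct}% over the prior list)")

# How many times over the whole list would have to grow to total one exaflops
# (1,000 petaflops).
exaflops_multiple = round(1000.0 / aggregate_pf["Nov 2011"], 1)
print(f"One exaflops is about {exaflops_multiple}x the current list total")
```

The absolute gains come out at 15.0 and 15.5 petaflops per six months, which is what makes the curve look linear, even though the percentage gain shrinks from roughly 34 to 26 per cent.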

While you would think that the Top 500 was all about performance and therefore would be composed of systems using only the fastest interconnects between server nodes in the clusters, there are plenty of jobs that are not latency or bandwidth sensitive – and HPC shops are notorious cheapskates – so Gigabit Ethernet is still the most popular interconnect used in machines on the list. It is, however, down from 230 machines in the June list. InfiniBand has seen a slight bump, rising to 213 machines, up by five from six months ago. If you look at it by system capacity, InfiniBand wins hands down, with 28.7 petaflops of aggregate floating point power compared to 14.2 petaflops for Gigabit Ethernet.

By vendor, IBM is the king of the list still, with 223 systems, and Hewlett-Packard is second, with 140 machines. IBM is up a bit and HP is down a bit. Cray has 27 machines on the list, Silicon Graphics has 17 machines, Bull has 15 machines, and Appro International, which has scored some big deals in the US and Japan in recent months, has 13 machines.

If you look at the list by vendor and aggregate performance, then IBM actually increased its lead by nearly a point and now has 27.3 per cent of the total flops on the Top 500 list. (And when IBM drops 30 petaflops of BlueGene/Q machinery on the list sometime next year, it will get even more share.) Fujitsu jumped from nowhere a year ago to the number two spot in aggregate performance, with the K super and one other tiny machine together accounting for 14.7 per cent of the list. Cray is right behind with 14.3 per cent of the performance pie and down 1.2 points (and also due to change when some very large machines come online later this year or early next year). HP ranks fourth in terms of aggregate performance on the Top 500, with a 12.9 per cent share.
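To put those percentages in petaflops, here is a rough Python sketch that multiplies each vendor's quoted share by the 74.2 petaflops aggregate for the November 2011 list. The `share_to_petaflops` helper is a hypothetical name, and the results are back-of-the-envelope estimates from rounded shares, not figures taken from the list itself.

```python
# Aggregate performance of the November 2011 list, in petaflops (from the article).
TOTAL_PF = 74.2

# Share of total flops by vendor, in per cent (from the article).
share_pct = {"IBM": 27.3, "Fujitsu": 14.7, "Cray": 14.3, "HP": 12.9}

def share_to_petaflops(shares, total_pf):
    """Convert percentage shares into approximate petaflops, rounded to one decimal."""
    return {vendor: round(total_pf * pct / 100.0, 1) for vendor, pct in shares.items()}

vendor_pf = share_to_petaflops(share_pct, TOTAL_PF)
for vendor, pf in vendor_pf.items():
    print(f"{vendor}: roughly {pf} petaflops")

# Whatever is left over belongs to every other vendor on the list.
others_pct = round(100.0 - sum(share_pct.values()), 1)
print(f"All other vendors: about {others_pct} per cent of the total")
```

By this reckoning IBM's slice is about 20 petaflops, and the four named vendors together account for a little over two-thirds of the list's flops.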

Supercomputing is a proxy for war in some ways of thinking, and is even used to build and maintain war machines as well as to perform the do-gooder science that makes all the press releases. The United States has 263 machines on the list and Europe has 127 machines, still a little more than Asia's 106 machines. The US added nine machines in the past six months, Europe two, and Asia 22. I think you can see where this is heading. Japan has 30 machines on the list, four more than in June, but China ain't messing around with HPC and has 75 machines (up from 64 in June). The United Kingdom has 27 supers on the list, France has 23, and Germany has 20. ®