
Intel's Tri-Gate gamble: It's now or never

Deep dive into Chipzilla's last chance at the low end

All well and good, but so what?

All this techy-techy yumminess is good geeky fun, but what does it all add up to? How much improvement are we to expect out of the Tri-Gate process upgrade?

Quite a bit, if Intel – and Bohr – are to be believed.

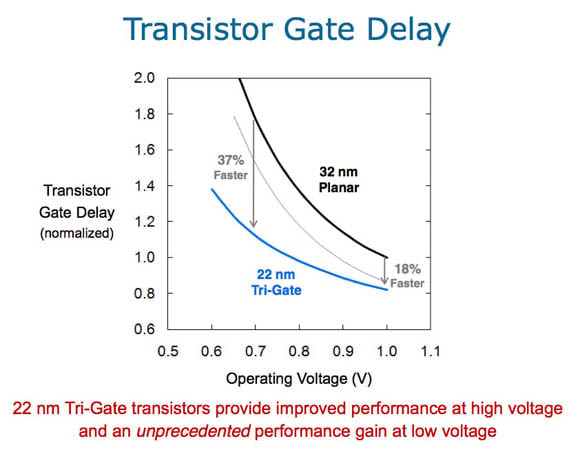

The 22nm Tri-Gate transistors will, according to Bohr, provide much-improved performance at low voltages. The examples he provided used gate delay as one variable; in a transistor, gate delay increases as operating voltage decreases. "When you operate [any transistor] at a lower voltage, it tends to slow down. Think of gate delay as the inverse of frequency: higher gate delay means slower frequency," he said.

Much faster at far less power – what's not to like?

"In this example," he explained while displaying the slide above, "at 0.7 volts they're about 37 per cent faster than today's 32nm planar transistors." And to cut naysayers off at the pass, he added: "And I want to emphasize that this 32-nanometer curve – that's not just some dummy straw man, those are the fastest planar transistors in the industry today," adding with a trace of pride: "That's Intel's technology."
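Since Bohr frames gate delay as the inverse of frequency, his "37 per cent faster" figure can be translated back into a normalized gate delay. The sketch below assumes "faster" means a 37 per cent higher switching frequency at 0.7 volts; the helper name `gate_delay_ratio` is hypothetical and not taken from Intel's slides.

```python
def gate_delay_ratio(speedup_percent: float) -> float:
    """Normalized gate delay of the faster transistor relative to the baseline.

    Assumes gate delay is simply the inverse of frequency, as Bohr described,
    so a given percentage speedup maps to 1 / (1 + speedup).
    """
    return 1.0 / (1.0 + speedup_percent / 100.0)


# Bohr's figure: roughly 37 per cent faster than 32nm planar at 0.7 volts.
ratio = gate_delay_ratio(37.0)
print(f"Tri-Gate gate delay is about {ratio:.2f}x the planar gate delay")
```

Under that assumption, the Tri-Gate transistor's gate delay at 0.7 volts works out to roughly 0.73 of the planar part's.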

What happens, though, when you operate a 32nm transistor and a 22nm Tri-Gate transistor at the same gate delay? You'd assume lower power consumption for the Tri-Gate, right? But how much lower? A glance at Bohr's Gate Delay slide shows that when the 32nm planar and 22nm Tri-Gate transistors are both operating at a gate delay normalized to 1.0, the power savings are considerable.

"If you operate them at the same gate delay, the same frequency," he said, referring to the two transistor types, "to get the same performance from [Tri-Gate] as planar, you can do so at about two-tenths of a volt lower voltage – in other words, at 0.8 volts instead of 1 volt. That voltage reduction, combined with the capacitance reduction that comes from a smaller transistor, provides more than a 50 per cent active-power reduction."
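Bohr didn't show the arithmetic, but the textbook first-order model for CMOS switching power, P ≈ α·C·V²·f, suggests how the voltage and capacitance cuts combine. The sketch below assumes that model with the activity factor α held constant; the function name is hypothetical, and no actual capacitance figure for the 22nm part is assumed. It only computes how much capacitance reduction would be needed to push the saving past 50 per cent.

```python
def dynamic_power_ratio(v_new: float, v_old: float,
                        c_ratio: float = 1.0, f_ratio: float = 1.0) -> float:
    """Ratio of new to old switching power under P ~ C * V**2 * f.

    The activity factor is assumed unchanged, so it cancels out of the ratio.
    """
    return c_ratio * (v_new / v_old) ** 2 * f_ratio


# Same frequency, 0.8 V instead of 1.0 V, capacitance held constant:
voltage_only = dynamic_power_ratio(0.8, 1.0)
print(f"Voltage alone: {1 - voltage_only:.0%} reduction")

# Largest capacitance ratio (new / old) that still yields more than a 50% cut:
max_c_ratio = 0.5 / voltage_only
print(f"Capacitance must fall below {max_c_ratio:.2f}x the planar value")
```

On this model, the voltage drop alone trims switching power by about 36 per cent; for Bohr's "more than 50 per cent" to hold, the smaller transistor's capacitance would need to come in below roughly 0.78 of the planar figure.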

Then, in the understatement of the day, Bohr concluded: "And that's very important."

To sum up: at the same low voltage (0.7 volts in Bohr's example), about 37 per cent faster performance. At the same clock frequency (inferred from gate delay), more than a 50 per cent reduction in active power.

Not too shabby, if true. Remember, though, that these aren't benchmark figures derived from independent testing; they're numbers taken from slides presented by an Intel senior fellow at the rollout of his baby.

Your mileage may vary.

Still, Tri-Gate may allow Intel both to maintain IA's lead in the server space while lowering cooling and power costs, and to find IA a new home in the low-power consumer space.

If, however, Tri-Gate doesn't perform as promised, Intel may have blown its last chance of entering the lucrative, growing, and ultimately consumer-consuming low-power mobile market.

Or, for that matter, Intel could license the ARM architecture and start building its own ARM variants in its own fabs, using its 22nm Tri-Gate process. That's unlikely, but stranger things have happened – such as Intel, once the seemingly unchallengeable PC overlord, being threatened in the consumer-PC market by Windows-running, 40-bit addressing, multicore ARM Cortex-A15 chips. ®