Pillar pillages SPC-1 benchmark

Er, where is EMC?

Pillar has announced a sparkling SPC-1 benchmark, bettering IBM's Storwize V7000. Oddly EMC is not present in SPC-1 results and NetApp's results are 2008 vintage. What's going on?

The SPC-1 benchmark is for block-access storage, not for filers, where the SPECsfs2008 benchmark is used. There are high-end SPC-1 results, ranging from roughly 150,000 IOPS up to 400,000 where IBM's SAN Volume Controller recorded 380,489.3 IOPS with a cost of $18.83/IOPS. This involved a clustered 6-node SVC with several high-end DS8700 arrays behind it.

A DS8700 on its own, with SSDs and EasyTier data tiering, recorded just 32,998.24 IOPS at a cost of $47.92/IOPS. The SVC improved its performance enormously.

Then there are 100,000-ish and sub-90,000 IOPS results. A Fujitsu Eternus DX440 recorded 97,488.25 IOPS at a cost of $5.51/IOPS, good value, and a 3PAR F400 scored 93,050.06 IOPS at a cost of $5.89/IOPS.

Now comes a cluster of sub-90,000 scores headed up by Pillar's latest result, an Axiom 600 series 3, which achieved 70,102.27 IOPS, costing $7.32/IOPS. In January 2009 an earlier version of the Axiom 600 achieved 64,992.77 IOPS with a price-performance result of $8.79/IOPS. Pillar then said it was: "the most cost effective SPC-1 result for business-class storage arrays."

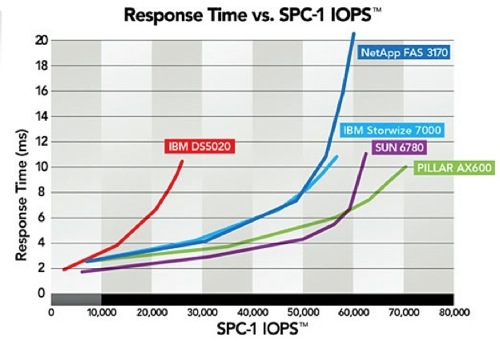

The latest result improves on that, with Pillar CEO Mike Workman blogging that he was particularly pleased with the latency, and including a chart showing how this was better than a group of competing systems.

Pillar Data SPC-1 chart

The cost per IOPS is not so favourable to Pillar, with a 2-node IBM Storwize V7000 recording $7.24/IOPS, but then it only achieved 56,510.85 IOPS, a lot less than Workman's Axiom 600. For the moment the Axiom is the leader of this particular pack.
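To put those two figures side by side: SPC-1 price-performance is the price of the tested configuration divided by its SPC-1 IOPS, so multiplying the two published numbers back together gives a rough implied system price. The sketch below does only that, using the figures quoted above; the function name is made up for illustration, and because the $/IOPS values are rounded the results are approximations, not the prices in the full disclosure reports.

```python
# Figures quoted in the article: (SPC-1 IOPS, price-performance in $/IOPS).
results = {
    "Pillar Axiom 600 series 3": (70_102.27, 7.32),
    "IBM Storwize V7000 (2-node)": (56_510.85, 7.24),
}


def implied_price(iops, dollars_per_iops):
    """Approximate tested-configuration price implied by the two figures.

    Hypothetical helper: $/IOPS is rounded to the cent in the published
    results, so this is an estimate only.
    """
    return iops * dollars_per_iops


for name, (iops, ppi) in results.items():
    price = implied_price(iops, ppi)
    print(f"{name}: {iops:,.2f} IOPS at ${ppi:.2f}/IOPS, roughly ${price:,.0f}")

# How much faster the Axiom result is than the V7000 result.
axiom_iops = results["Pillar Axiom 600 series 3"][0]
v7000_iops = results["IBM Storwize V7000 (2-node)"][0]
print(f"IOPS ratio (Axiom / V7000): {axiom_iops / v7000_iops:.2f}")
```

On these numbers the Axiom configuration works out at roughly $513,000 against roughly $409,000 for the V7000, for about 24 per cent more IOPS.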

Looking at the SPC-1 results, and remembering that EMC has been very active in the SPECsfs2008 filer benchmark area, you ask yourself: where are the EMC SPC-1 results? There aren't any, not one, apart from some NetApp-submitted CLARiiONs from a few years ago.

NetApp products are present in the SPC-1 tables but the results are all old, 2008 vintage, with no newer systems represented, although some are in the separate SPC-1 (E) - for energy - listing. It makes you think. There is no ability here to compare newer NetApp arrays with their Flash Caches against EMC VNX arrays or the high-end V-MAX. That's annoying. We could imagine EMC and NetApp both running SPC-1 benchmarks but refusing to submit results to the Storage Performance Council until and unless they have category-winning scores. Why would their respective marketing departments countenance submitting results showing that their products are second rate in SPC-1 terms?

There is another obvious missing product: IBM's XIV array. Maybe its performance in SPC-1 terms leaves something to be desired?

In the high-end SPC-1 area, one result stands out. It's a Texas Memory Systems RamSan-620 which scored 254,994.21 IOPS, easily beaten by IBM's SVC and also by Huawei-Symantec (300,062.04 IOPS with an 8-node Oceanspace S8100 system), but its cost/IOPS is a remarkable $1.13. No one else comes close. There is an Infortrend ESVA F60 which did well on that measure, coming in at $5.12/IOPS with its 180,488.53 IOPS – bet you didn't realise Infortrend could perform so well – and IBM's DS8700 doing least well, costing $47.92 per IOPS. But then you don't buy a DS8700 for sheer SPC-1 IOPS grunt and cost-efficiency.

Look out for fresh SPC-1 results at the high-end and mid-range as both EMC and NetApp present their latest flash-enhanced arrays, but only if they are winners. ®